Key Takeaways

- AI adoption has roughly doubled year over year, yet only single-digit percentages of companies report meaningful EBIT impact, and the gap between the two is mostly a workforce capability problem.

- Off-the-shelf training fails because it teaches AI as a subject, while contextual, role-specific enablement built around real workflows and real deliverables is what actually converts AI tools into measurable outcomes.

- Enablement is the multiplier on every AI investment: it’s the conversion rate between dollars spent on tools and dollars returned in value, and it belongs in the transformation budget rather than the L&D line item.

Most leaders we talk to in 2026 sense a strange tension in their AI programs. Licenses are deployed. Pilots are running. Dashboards exist. Yet the EBIT line hasn’t moved the way the board was promised it would.

The gap between AI availability and AI value has become the defining question of this transformation cycle. Our teams have delivered a few hundred deployments across manufacturing, financial services, higher education, government contracting, and SaaS. What we see consistently is that the bottleneck isn’t the models. It isn’t the infrastructure. It’s the workforce layer that wraps around both.

This piece is about why that bottleneck exists, what the research says about it, and what we’ve learned actually solves it in practice.

The Paradox at the Heart of Enterprise AI

Adoption Is Sprinting, Value Is Crawling

There’s a paradox at the center of almost every AI transformation conversation happening in 2026. Adoption is sprinting. Value creation is crawling.

McKinsey’s State of AI research finds roughly 72% of companies using AI in at least one business function, nearly double the year prior. Yet when researchers ask those same organizations about material EBIT contribution, only a thin sliver reports meaningful bottom-line impact (typically a single-digit percentage for generative AI specifically). BCG’s Build for the Future research puts only 4 to 10% of companies in the top tier of AI performers that capture substantial, repeatable value. Gartner estimates 60 to 85% of AI projects never reach production. The root causes skew heavily toward organizational and human factors rather than model performance or infrastructure.

Why the Gap Is a People Problem

The pattern is consistent enough to treat as a stylized fact. Buying AI is easy. Using it well is hard. And the gap between the two is mostly a people problem.

Across the few hundred deployments our teams have delivered, the same progression shows up again and again. Access isn’t adoption. Adoption isn’t usage. Usage isn’t value. The bridge between those stages is workforce enablement, and in our experience it has become the single most consequential lever in enterprise AI transformation.

The People Problem, in Their Own Words

When researchers ask leaders what’s actually blocking AI value capture, the answers are strikingly consistent and strikingly non-technical.

Skills and talent gaps top the list across the major surveys, including work from McKinsey, Deloitte, PwC, and the World Economic Forum. Leaders aren’t saying their models underperform. They’re saying their people don’t know how to use them. Deloitte’s State of Generative AI in the Enterprise research finds organizational and cultural readiness lagging meaningfully behind technical readiness. Employees, meanwhile, report high interest and high anxiety about AI in their roles at the same time. Harvard Business Review has a name for the downstream effect: pilot purgatory, the endless cycle of proofs of concept that never become infrastructure because no one built the human layer around them.

The mechanism is simple. AI capability only becomes AI value when a human being, embedded in a specific workflow, with specific context, knows how to deploy it against a specific problem. None of that comes from the technology itself.

The Skills Gap Isn’t Latent. It’s Active.

Measurable Today, Not a 2027 Problem

The gap between the AI skills employees need and the AI skills they actually have isn’t a future problem waiting to materialize. It’s showing up today, in measurable ways.

The World Economic Forum’s Future of Jobs Report lists AI literacy, analytical thinking, and technological fluency among the fastest-growing skills through 2027, and a substantial majority of workers will need reskilling within five years. LinkedIn’s data shows demand for AI skills growing several times faster than the supply of workers who have them. Microsoft’s Work Trend Index captures the sharpest symptom: roughly three-quarters of knowledge workers now use AI at work, and most of those users are bringing their own AI tools, which means unsanctioned tools used without training, governance, or oversight. Salesforce research confirms the demand side: most workers feel underprepared to use AI in their roles and would welcome employer-provided training, yet only a minority report receiving any.

The Rising Cost of Shadow AI

This is the shadow AI problem, and it’s not hypothetical. Employees are already using AI. The only question is whether they’re using it well. And the cost of getting it wrong is climbing.

Shadow AI carries real risks around data exposure, regulatory compliance, and quality control. Those risks now sit near the top of emerging concerns for CISOs and Chief Risk Officers. The talent market is pricing AI literacy in too. LinkedIn and Burning Glass data suggest a real wage premium for AI-fluent workers and a growing willingness among employees to leave roles that don’t offer AI skill development. Enablement is becoming a retention variable.

Why Off-the-Shelf Training Doesn’t Solve This

The Learning Science Problem

The instinct, when faced with a skills gap, is to buy training. The market has responded accordingly with horizontal content platforms, video libraries, and completion-tracking dashboards. The issue is that learning science has understood for decades why this model underperforms, and the AI context makes it worse.

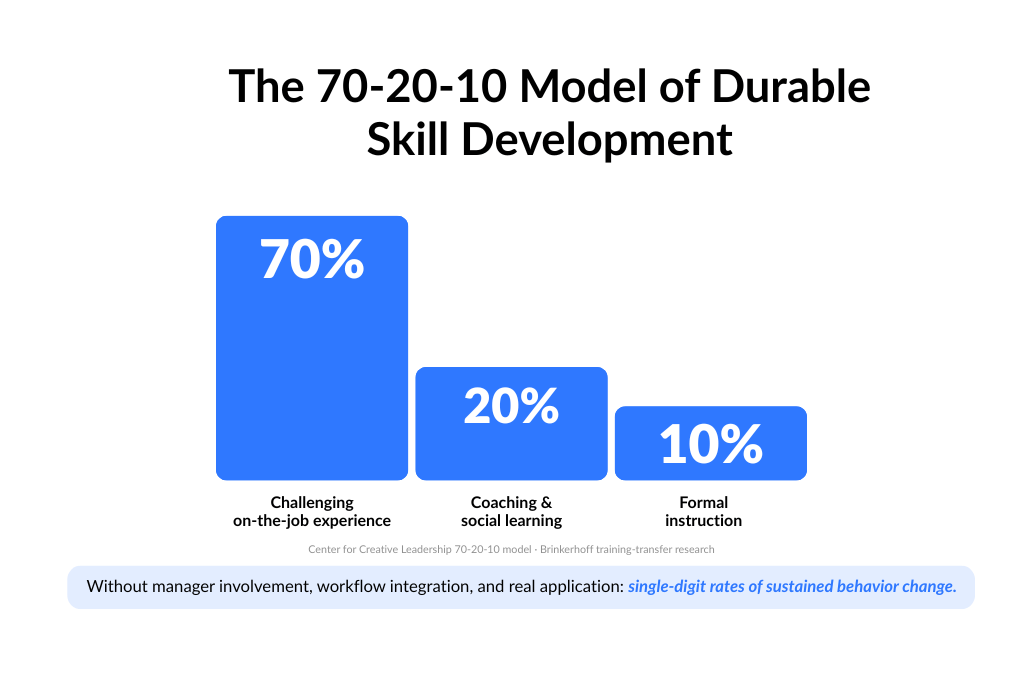

The forgetting curve tells us that without contextual reinforcement and real application, learners lose most new information within 24 to 48 hours. Passive video is particularly vulnerable. The 70-20-10 model from the Center for Creative Leadership holds that roughly 70% of durable skill development comes from challenging on-the-job experience, 20% from coaching and social learning, and only 10% from formal instruction. Programs that live entirely in that 10% reliably fail to change behavior. Brinkerhoff’s training-transfer research shows that without manager involvement, workflow integration, and real application, training investments produce single-digit rates of sustained behavior change.

AI as a Subject vs. AI as a Tool

The specific failure mode for generic AI training is that it teaches AI as a subject rather than as a tool applied to a specific task. A marketing analyst who sits through a three-hour LLM fundamentals course still doesn’t know whether, when, or how to use an LLM against their Monday morning campaign brief. That gap, between abstract capability and applied competence, is where value is won or lost.

The enablement market is bifurcating around exactly this distinction. Content-led platforms are scaling AI literacy broadly but shallowly. Practitioner-led enablement is going narrow and deep, with fewer people, more context, real deliverables, and measurable outcomes. The research and our own delivery data favor the second track for organizations that expect AI to produce ROI.

What Valere Learning Actually Looks Like

The Thesis Behind Valere Evolve

Valere Learning is how we put this into practice. It’s also the thesis behind our broader Valere Evolve ecosystem, which unifies Valere Learning (workforce enablement), Valere Labs (custom development), and Conducto + Dactic (orchestration and knowledge capture). The logic is practical rather than clever. If employees are going to operate AI-first systems, they should train on the exact systems being deployed, not on AI in the abstract. That approach drives the outcomes we measure most closely, including a 94% workforce adoption rate on deployed systems and more than $900M in measured client ROI to date.

The north star is value delivery. Learning is the vehicle.

Three Forms the Work Takes

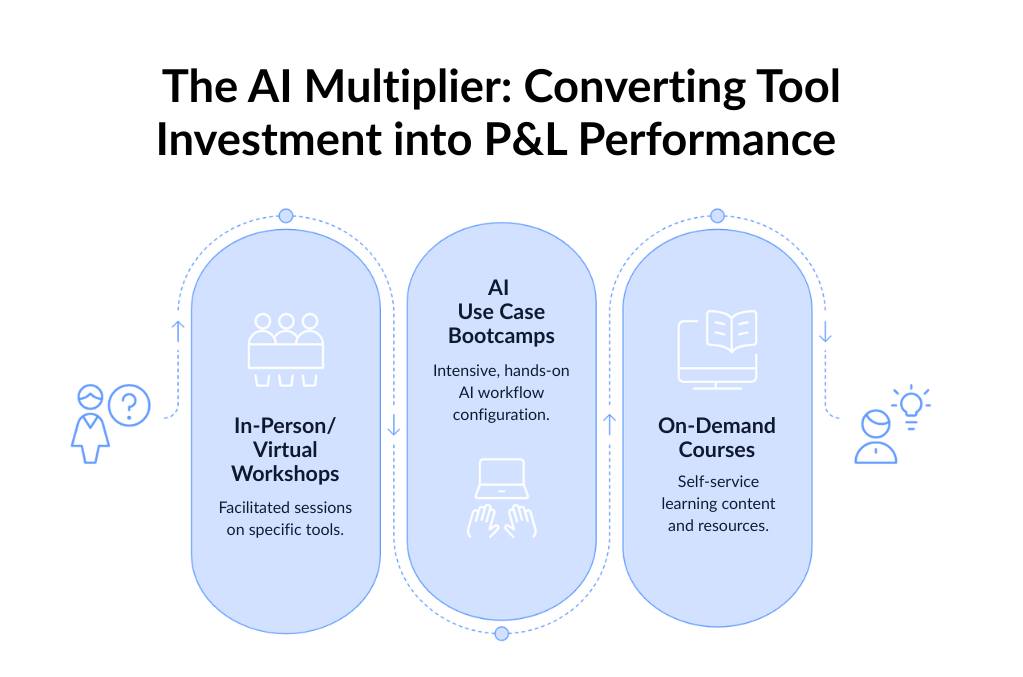

In practice it tends to take three forms.

In-Person and Virtual Workshops. Facilitated sessions built around an organization’s specific tools, team composition, and real workflows, not generic product overviews. We ground them in discovery and stakeholder alignment from the first conversation. The goal is organizational change rather than just individual skill lift.

AI Use Case Enablement Bootcamps. This is where we go deepest. Intensive, hands-on engagements where participants don’t just learn about AI tools. They configure, prompt, test, and deploy real workflows during the training itself. We scope bootcamps around a defined set of client-specific use cases and deliver them across phased workstreams. Participants leave with both working knowledge and working implementations.

On-Demand Courses and Learning Content. Polished assets, including video walkthroughs, documentation, SOPs, and structured course libraries. We build them for self-service consumption and design them to scale alongside the client’s user base over time. This is how AI literacy spreads horizontally once the depth work has established a foundation.

A Bootcamp in Action

A global manufacturing and industrial supplier engaged us to build Microsoft Copilot and Power Platform capability across Purchasing, Credit, Finance, and Service operations. The off-the-shelf approach would’ve been a generic Copilot curriculum and a completion certificate. Instead, we ran a six-to-eight-week, 200-hour bootcamp structured around five specific use cases their teams already ran. Participants used their own organizational data to configure live workflows and Copilot Agents during the training itself. They walked out with functional, implemented solutions plus the skills to evolve those solutions on their own. That’s the difference between AI as an abstract topic and AI as an applied tool. In our experience, it’s the difference that determines whether the investment compounds or stalls.

What We’ve Learned Makes the Difference

Across hundreds of engagements, a few things have consistently proved decisive.

Real Deployment Experience Compounds

Every lesson, workshop, and bootcamp we run draws on our library of live deployments across very different organizational contexts. Participants learn from implementations that have survived contact with production, not from theory. That makes the most important question to ask any enablement partner a simple one: who has actually shipped?

Role Specificity Matters as Much as Format

When a global investing infrastructure platform engaged us to deploy Amazon WorkSpaces for 1,200+ users (scaling to 5,000+), we embedded with the technical team and built training materials as they built the environment itself. We contextualized the content for two distinct audiences: IT Administrators managing lifecycle workflows, and end-users accessing virtual desktops across a compliance-sensitive global footprint. One curriculum couldn’t have served both groups well. Two purpose-built ones did. The deployment landed cleanly because we engineered the enablement into the rollout rather than bolted it on afterward.

Outcome Over Completion

The training industry’s default metric, the completion rate, correlates weakly with value creation. Our bootcamps tie directly to business KPIs, and we define success as deployed AI solutions generating measurable return.

A recent example: a SaaS company in the automotive space needed to reduce customer churn. The intelligence to do it was trapped as tribal knowledge inside their Customer Success team. We used Dactic to interview the CS team and extract their undocumented understanding of churn signals. We used Conducto to generate automated risk scores. Then we built a custom dashboard where CS reps could review those scores, add qualitative context, and run context-specific intervention playbooks. The deployment mattered. The enablement, meaning teaching the team how to actually work the system, drove the 20% reduction in early-stage churn.

A similar pattern showed up with an architecture firm losing weeks every cycle to a manual, error-prone budgeting process. We developed an AI-powered platform to automate it. The more instructive part of the engagement was what came after: role-specific training for the finance team and for the department heads who would own their departments’ financial planning going forward. The technology existed the day the code shipped. The value only existed once the people knew how to operate it. From there, the firm cut its budget cycle by 60% and saw a dramatic improvement in forecast accuracy.

Contextual Design: Designing for Human Judgment, Not Around It

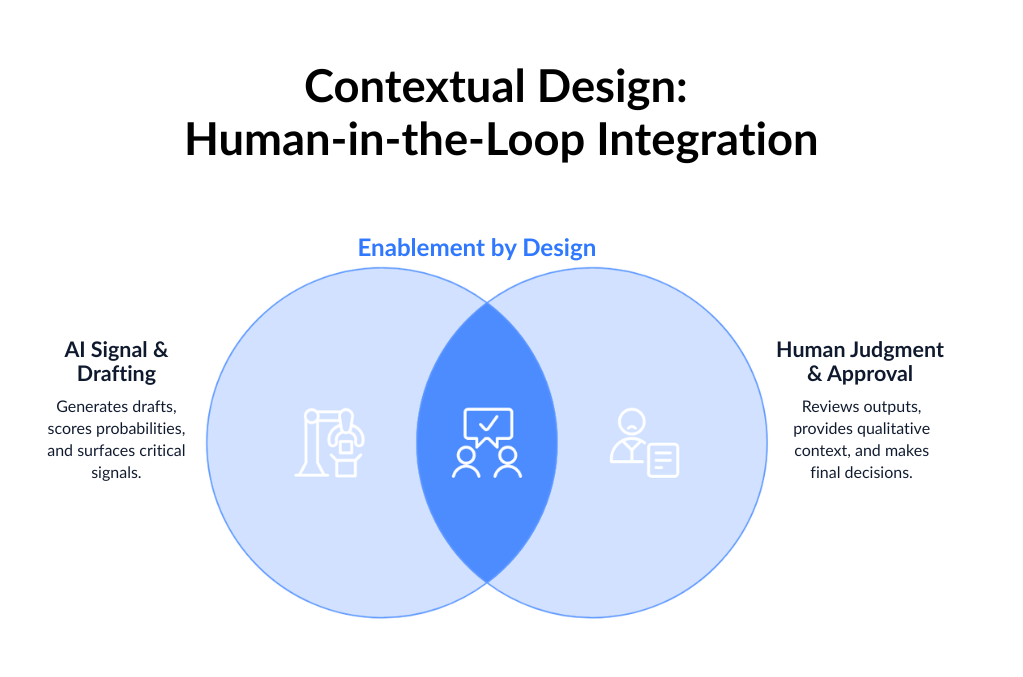

In our work with a private research university’s alumni advancement team, we embedded AI directly into the workflows staff already ran. That meant an Outlook plugin that generates pre-meeting donor briefings, and a CRM sidebar widget for profile generation, rather than asking staff to adopt a separate system. Every AI output routes through mandatory human-in-the-loop approval gates. Agents draft. Humans decide.
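The approval-gate pattern behind “Agents draft. Humans decide.” can be sketched in a few lines. This is a minimal, hypothetical illustration, not the system deployed for the university. `Draft`, `review`, and `release` are invented names, and a real gate would live inside the Outlook and CRM integrations rather than in a standalone script. The sketch shows the one property that matters: nothing the agent drafts can be released until a named person has approved it.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Optional


class Status(Enum):
    PENDING = "pending"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class Draft:
    """Hypothetical AI-generated output waiting at the gate (e.g. a donor briefing)."""
    content: str
    status: Status = Status.PENDING
    reviewer: Optional[str] = None
    history: List[str] = field(default_factory=list)


def review(draft: Draft, reviewer: str, approve: bool,
           edited_content: Optional[str] = None) -> Draft:
    """Record a named human's decision; the reviewer may edit before approving."""
    if draft.status is not Status.PENDING:
        raise ValueError("draft has already been reviewed")
    if edited_content is not None:
        draft.content = edited_content
    draft.status = Status.APPROVED if approve else Status.REJECTED
    draft.reviewer = reviewer
    draft.history.append(f"{reviewer}: {draft.status.value}")  # simple audit log
    return draft


def release(draft: Draft) -> str:
    """Only human-approved drafts leave the gate; everything else is blocked."""
    if draft.status is not Status.APPROVED:
        raise PermissionError("AI output cannot be released without human approval")
    return draft.content


# Usage: the agent drafts, a person decides, and only then does the output go out.
briefing = Draft(content="Draft briefing generated by the agent")
try:
    release(briefing)
except PermissionError as blocked:
    print(blocked)  # prints: AI output cannot be released without human approval
review(briefing, reviewer="gift_officer", approve=True)
print(release(briefing))  # prints: Draft briefing generated by the agent
```

Keeping the gate in `release` rather than in the drafting step means any future agent or integration inherits the same rule without having to remember it.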

The same principle shows up in higher-stakes environments. For a government contracting business, we deployed an AI system that monitors federal portals and scores contract win probabilities. The real value sits in the Human-in-the-Loop Iteration dashboard, where sales teams make collaborative bid/no-bid decisions, refine scoring weights, and feed their ground-truth judgments back into the system for continuous learning. The AI surfaces signal. The humans shape what signal means over time. That feedback loop is enablement by design.
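The feedback loop in that dashboard can be sketched under an explicit assumption. The source does not say how the system learns from the team’s judgments, so this sketch substitutes a simple online logistic-regression update. `win_probability`, `apply_feedback`, the feature names, the starting weights, and the learning rate are all hypothetical. Humans refining scoring weights corresponds to editing the `weights` dict directly. Feeding back a bid/no-bid call corresponds to `apply_feedback`.

```python
import math
from typing import Dict

Weights = Dict[str, float]


def win_probability(weights: Weights, features: Weights) -> float:
    """Logistic score in (0, 1) from a weighted sum of opportunity features."""
    z = sum(weights.get(name, 0.0) * value for name, value in features.items())
    return 1.0 / (1.0 + math.exp(-z))


def apply_feedback(weights: Weights, features: Weights, outcome: float,
                   learning_rate: float = 0.1) -> Weights:
    """One stochastic-gradient step on log loss toward the team's ground-truth label.

    outcome is 1.0 if the team judged the opportunity worth bidding (or won it),
    0.0 otherwise. Returns a new dict so earlier weight versions stay auditable.
    """
    error = outcome - win_probability(weights, features)
    updated = dict(weights)
    for name, value in features.items():
        updated[name] = updated.get(name, 0.0) + learning_rate * error * value
    return updated


# Hypothetical features and starting weights, for illustration only.
weights = {"capability_match": 1.5, "incumbent_competition": -1.0}
opportunity = {"capability_match": 1.0, "incumbent_competition": 1.0}

before = win_probability(weights, opportunity)  # about 0.622
weights = apply_feedback(weights, opportunity, outcome=0.0)  # team calls no-bid
after = win_probability(weights, opportunity)  # lower than before
```

The design point is that the human judgment becomes training signal: each no-bid call nudges the weights so similar opportunities score lower next time, while the returned copies preserve a history of how the scoring evolved.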

When the workflow itself is unforgiving, contextual design becomes the whole game. A trust and safety platform we partnered with needed reviewers to cross-check image and text annotations against a dense 50-page rulebook. A generic AI model would have failed the specific compliance requirements instantly. Instead, we built an AI logic bridge that maps the guidelines into precise JSON logic. In a single API call, it returns a mathematically consistent quality score, specific feedback, and a full audit trail. The enablement wasn’t about teaching reviewers AI in the abstract. It was about giving them a tool that fit their exact workflow and compliance standard.
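The deterministic half of that logic bridge can be sketched as follows. The assumption here is that the AI’s job is to translate prose guidelines into rules once. After that, every annotation is checked by plain code, which is what makes the score repeatable. The rule schema, rule IDs, field names, and the `evaluate` function are hypothetical. They do not follow the JsonLogic specification or the client’s actual rulebook.

```python
import json

# Hypothetical rule schema: each guideline becomes a machine-checkable predicate.
RULES_JSON = """
[
  {"id": "R1", "field": "label", "op": "in", "value": ["safe", "unsafe", "needs_review"],
   "weight": 2, "message": "Label must be one of the allowed categories."},
  {"id": "R2", "field": "bbox_count", "op": ">=", "value": 1,
   "weight": 1, "message": "At least one bounding box is required."},
  {"id": "R3", "field": "rationale", "op": "nonempty", "value": null,
   "weight": 1, "message": "A written rationale is required."}
]
"""

# Operators the hypothetical schema supports; a missing field evaluates to None.
OPS = {
    "in": lambda actual, expected: actual in expected,
    ">=": lambda actual, expected: actual is not None and actual >= expected,
    "nonempty": lambda actual, _: bool(actual),
}


def evaluate(annotation: dict, rules: list) -> dict:
    """Check one annotation against every rule; the same input always yields the same result."""
    audit, feedback = [], []
    passed_weight = total_weight = 0
    for rule in rules:
        ok = OPS[rule["op"]](annotation.get(rule["field"]), rule["value"])
        total_weight += rule["weight"]
        if ok:
            passed_weight += rule["weight"]
        else:
            feedback.append(f'{rule["id"]}: {rule["message"]}')
        audit.append({"rule": rule["id"], "field": rule["field"], "passed": ok})
    score = passed_weight / total_weight if total_weight else 1.0
    return {"score": round(score, 3), "feedback": feedback, "audit_trail": audit}


rules = json.loads(RULES_JSON)
result = evaluate({"label": "unsafe", "bbox_count": 0, "rationale": "weapon visible"}, rules)
# result["score"] is 0.75: R1 (weight 2) and R3 (weight 1) pass, R2 (weight 1) fails.
```

Because the score is a weighted pass rate over fixed rules, two reviewers submitting the same annotation always get the same score, feedback, and audit trail.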

The common thread across these examples: AI creates value fastest when it meets people where their work already happens, and when the humans in the loop keep the final call.

Post-Engagement Continuity

This one gets underestimated a lot. A large enterprise IT organization engaged us for a Microsoft 365 Copilot and ServiceNow integration. We could have shipped the technology and sent a user guide. Instead we structured the post-launch phase as its own enablement workstream. That included admin and end-user documentation, a formal knowledge transfer session with their IT team, and a defined hypercare period for issue triage and support. In our experience, the difference between an internal team that uses a system and one that owns and evolves it comes down almost entirely to what happens in the 90 days after go-live. So we treat those 90 days as part of the deliverable.

The Business Case: Enablement as the Multiplier

Why the ROI Argument Lands

Here’s the frame that tends to land with CFOs and COOs. The ROI argument for structured AI enablement is usually stronger than the ROI argument for most individual AI tool purchases. That’s because enablement is the multiplier on every tool purchase.

Productivity research on AI, including Brynjolfsson, Li, and Raymond’s study of generative AI in customer support, finds that the largest gains go to less experienced workers. Those gains depend on employees knowing how to prompt, verify, and integrate AI outputs. The tool alone isn’t sufficient. The skill is the multiplier. Deloitte and Accenture workforce research estimates that organizations with mature learning and reskilling functions capture meaningfully higher returns from digital and AI investments, and some studies place the difference in AI value capture at multiples rather than percentages.

The Multiplier Effect in Practice

The multiplier effect is clearest when a small team is asked to do the work of a much larger one. A B2B marketing platform we partnered with needed to orchestrate email outreach to over 250,000 contacts with a three-person team. We deployed Conducto and built a custom dashboard giving the team full visibility and control. They could monitor performance, approve campaigns, and feed new learnings back into the AI’s knowledge base. Three people oversaw 210 to 300 autonomously managed inboxes without adding headcount. The AI did the orchestration. The enablement did the scaling.

The strategic reframe worth making: AI enablement isn’t a training line item. It’s the conversion rate between AI investment and AI return. Every dollar spent on AI tools produces a return proportional to the skill with which it’s used.

A Few Principles for the Future

For organizations setting AI transformation strategy now, a few things have held up consistently in our work.

Fund enablement from transformation budgets, not L&D budgets. The scale needed to capture real AI value is typically an order of magnitude beyond what traditional training budgets support, and the returns justify the reclassification.

Treat enablement as part of the AI stack, not an add-on. “Which AI tools should we deploy?” and “How will our people use them?” are the same question. Answering one without the other is how the adoption-value paradox happens.

Favor contextual, role-specific programs over horizontal curriculum. Horizontal literacy has a floor-setting role, but transformation value comes from workflow-specific depth. An underwriter, a purchasing analyst, and a tier-1 support agent each need different things. A single curriculum serves none of them well.

Measure deployed solutions, not completed courses. The program is working if people are shipping AI-powered workflows, not if they’re logging hours in an LMS.

Build for continuity. One-time engagements produce one-time results. The organizations pulling durable value out of AI treat enablement as a permanent capability rather than a project.

Conclusion: The Solution Is Only as Good as the People Using It

The central finding across the enablement research is simple enough to say in a sentence. Organizations that invest in the people layer of AI transformation at the same depth and rigor as they invest in the technology layer produce AI value at scale. The ones that don’t, don’t.

For any enterprise approaching AI seriously, meaning with P&L expectations attached, workforce enablement isn’t optional infrastructure. It’s not a nice-to-have, and it’s not a 2027 problem. It’s the mechanism that converts AI investments into outcomes. Every credible study of AI value capture returns to this point from a different angle. Our own delivery data across a few hundred deployments says the same thing. The solution is only as good as the people’s ability to use it.

Ready to Turn AI Investment Into Measurable Value?

Ready to build AI systems your workforce actually uses? Valere works with enterprise leaders, Private Equity sponsors, and portfolio companies to design, build, and scale AI deployments that convert into measurable outcomes. The tools are only as valuable as the people operating them. Whether you are evaluating adoption across existing AI investments, preparing a workforce for transformation, or rolling out new systems at scale, Valere brings the delivery experience, enablement methodology, and partnership model to turn AI potential into P&L performance.

- A Workforce AI Readiness Assessment identifying where your current AI deployments are underperforming due to gaps in role-specific training, workflow integration, and post-launch continuity

- A clear path from isolated pilots and shadow AI use to governed, role-contextual enablement programs that integrate with your real workflows, close the skills gap your teams are actually experiencing, and compound adoption with every system you deploy

- A personalized value creation roadmap moving your organization from disconnected AI experiments to a durable enablement capability, complete with human-in-the-loop governance, embedded learning content, and the operating model required to sustain AI fluency across the business

Start building your AI enablement foundation: https://www.valere.io/

Frequently Asked Questions

How do mid-market and enterprise companies typically close the AI skills gap?

The most effective programs we’ve observed pair hands-on workflow training with the actual AI systems being deployed, rather than relying on generic literacy courses. The Center for Creative Leadership’s 70-20-10 model suggests roughly 70% of durable skill development comes from on-the-job experience. That’s why content-only approaches rarely move the needle. Practitioner-led bootcamps, role-specific workshops, and embedded enablement during rollout tend to produce measurably higher adoption than horizontal curriculum. The question to ask any partner is whether their program produces deployed solutions or just completed courses.

What does workforce AI enablement actually include?

Effective enablement generally spans three layers. First, facilitated workshops scoped to an organization’s real tools and workflows. Second, deeper bootcamps where participants configure and deploy live AI use cases during the training itself. Third, on-demand content for ongoing self-service learning. It also tends to include post-launch documentation, knowledge transfer, and a hypercare period in the 90 days after go-live. The thread across all of it is that enablement is built around specific systems and specific roles, rather than AI as an abstract topic.

Why do so many AI pilots fail to reach production?

Gartner estimates 60 to 85% of AI projects never make it into production. The reasons cluster on the organizational side: unclear workflow integration, missing change management, lack of role-specific training, and no continuity plan after initial launch. Harvard Business Review refers to this as pilot purgatory. In practice, the technology is usually ready well before the workforce is. That’s why enablement strategy matters at least as much as model selection.

How should enablement be budgeted?

The funding source increasingly matters. Traditional L&D budgets are generally sized for compliance training and skill refreshers, not for the scale required to turn AI tools into P&L impact. Organizations capturing real returns tend to fund enablement from transformation or digital investment budgets, alongside the tooling itself. Deloitte and Accenture workforce research suggests companies with mature learning functions capture meaningfully more value from AI investments than peers without them, with some studies putting the difference at multiples.

What’s the difference between shadow AI and sanctioned AI use?

Shadow AI refers to employees using AI tools on the job without formal training, governance, or oversight. Microsoft’s Work Trend Index suggests roughly three-quarters of knowledge workers now use AI at work, and most of those users bring their own tools. The risks cluster around data exposure, compliance exposure, and uneven output quality. Sanctioned AI use, by contrast, involves tools deployed with training, access controls, human-in-the-loop governance, and documented workflows. The gap between the two is mostly a function of whether an organization has invested in enablement.

How is AI enablement ROI typically measured?

Completion rates are the traditional training metric, but they correlate weakly with value creation. More useful measures include the number of deployed AI workflows per team, time-to-first-value for newly trained employees, productivity deltas on specific tasks, and business KPIs tied directly to the AI use case (reduced churn, faster cycle times, headcount leverage on repetitive work). Brynjolfsson, Li, and Raymond’s research on generative AI in customer support shows productivity gains are largest for less experienced workers, and capturing those gains depends on employees knowing how to prompt, verify, and integrate outputs.