TL;DR: 3 Key Takeaways

- Becoming AI-native is a structural choice, not a tooling decision: the portcos pulling ahead have formalized their operating logic so workflows, decisions, and data become machine-legible from day one.

- The EBITDA leverage shows up when AI eliminates the human coordination tax, which is why revenue per employee in AI-native firms now runs roughly 4x that of traditional SaaS.

- The strongest operators do not automate everything; they design explicit human-in-the-loop checkpoints around the work where empathy, judgment, and accountability still matter.

A new shape of company is showing up in our deal flow, and it doesn’t look like the businesses that came before it. It isn’t a traditional operator with AI bolted on. It isn’t an organization that handed its team a coding assistant and called the work finished. It’s a company designed around AI from the ground up.

The magnitude of this shift exceeds the digital transformations of the early 2000s. Cloud and mobile got retrofitted onto legacy human workflows. AI-native firms start from a different premise: cognitive labor and operational coordination can largely run on autonomous agents and intelligent systems. The distinction between AI-enabled and AI-native is becoming the primary determinant of competitive advantage and, for this audience, of valuation at exit.

This briefing shares what we’ve learned about that transition: what works, what fails, and where the EBITDA leverage shows up.

Defining AI-Native for the Mid-Market

A Structural Difference, Not a Tooling One

An AI-native company is not defined by the tools it has licensed. Almost every business uses AI in some form by now. The difference is structural.

A traditional company begins with human processes. People write documents, manage projects, and answer customer questions through long chains of meetings and reports. AI gets introduced to speed up parts of that work. The result is faster manual labor, but it remains manual labor at its core.

An AI-native company starts with a different set of questions. What should agents handle? What should humans review? And what stays entirely under human control? AI becomes part of the architecture, not an accessory.

Enterprise as Code: Turning Tribal Knowledge into Machine-Legible Logic

A useful way to describe this is the Enterprise as Code operating model. A business defines its internal operations with the same precision as a software application. In legacy organizations, operating logic lives in the heads of employees and in fragmented spreadsheets, which makes the business effectively opaque to machine intelligence. AI-native companies formalize that logic so agents can interpret, execute, and optimize without constant human intervention.
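As a rough illustration of what machine-legible operating logic can look like, the sketch below writes a budgeting workflow down as data. The step names, the `Owner` categories, and the `WorkflowStep` structure are all hypothetical, chosen for illustration, and do not describe any specific system.

```python
from dataclasses import dataclass
from enum import Enum


class Owner(Enum):
    AGENT = "agent"            # runs without human involvement
    HUMAN_REVIEW = "review"    # agent drafts, a person approves
    HUMAN_ONLY = "human"       # stays entirely under human control


@dataclass(frozen=True)
class WorkflowStep:
    name: str
    owner: Owner
    inputs: tuple              # names of the data this step consumes
    output: str                # name of the data this step produces


# Hypothetical budgeting workflow, written down instead of living in spreadsheets.
BUDGET_WORKFLOW = (
    WorkflowStep("pull_actuals", Owner.AGENT, ("erp_ledger",), "actuals"),
    WorkflowStep("baseline_projection", Owner.AGENT, ("actuals",), "baseline"),
    WorkflowStep("department_adjustments", Owner.HUMAN_REVIEW, ("baseline",), "draft_budget"),
    WorkflowStep("final_signoff", Owner.HUMAN_ONLY, ("draft_budget",), "approved_budget"),
)


def undefined_inputs(steps, sources):
    """Return inputs that no earlier step or declared source produces."""
    available = set(sources)
    missing = []
    for step in steps:
        missing.extend(i for i in step.inputs if i not in available)
        available.add(step.output)
    return missing


def steps_by_owner(steps, owner):
    """List the step names assigned to a given owner category."""
    return [s.name for s in steps if s.owner is owner]
```

Once the logic is explicit, simple checks become possible: `undefined_inputs(BUDGET_WORKFLOW, {"erp_ledger"})` confirms every handoff has a defined source, and `steps_by_owner` shows at a glance which steps are reserved for people.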

We saw this play out with a mid-market architecture firm whose budgeting process had become opaque to any kind of automation. The work moved through a complex web of spreadsheets passed between departments. Each handoff introduced a new chance for error. We deployed a custom platform integrated with their ERP and trained machine learning models on historical data to generate baseline projections. Budget cycles dropped by more than 60%. Manual data entry errors effectively went away. The transformation was less about adding AI and more about turning informal logic into something a machine could read and improve.

What the Numbers Mean for Portco Valuation

Revenue Per Employee Is Diverging

The most visible evidence of the AI-native shift is the divergence in efficiency metrics, and the data is no longer subtle. A high-performing SaaS company historically generated $300,000 to $500,000 in revenue per employee. Top-tier AI-native startups now average roughly four times that, with the leading examples reaching figures that would have been considered impossible just a few years ago.

Scaling velocity tells the same story. Traditional software leaders took 18 to 20 quarters to reach $100 million ARR. AI-native firms are getting there in 4 to 8. Burn multiples for AI-native operators run a fraction of what traditional software companies post.

The Human Coordination Tax: Where Margin Actually Comes From

The underlying mechanic matters more than any single metric. The efficiency comes from eliminating what we call the human coordination tax. Adding a thousand customers in a traditional model demands more support headcount. In an AI-native model, agentic systems handle millions of interactions with only marginal increases in cost.

We saw this with an LTL freight carrier whose growth was throttled by legacy systems and manual workflows. Teams spent most of their time reconciling data between systems rather than running freight. We built a unified digital platform that modernized six of their core workstreams. Inbound planning time dropped by half. Dispatch errors dropped meaningfully. Operational coordination became a tech-enabled function the team could actually scale.

Doing More Without Adding Headcount

Breaking the Headcount Pattern

One striking effect of going AI-native is how it changes the math of scale. Growth used to require larger teams, more managers, more coordination overhead. AI-native companies break that pattern. Small founding teams can now use AI to research markets, draft investor materials, build financial models, write early code, and prepare internal documents. A lean marketing team can test more campaign variants in a week than a large team could in a quarter.

A Three-Person Marketing Team Reaching 250,000 Contacts

We saw this clearly with a construction technology company. Their three-person marketing team needed to orchestrate outreach to more than 250,000 contacts. Hiring a department was off the table. We designed a system in which AI is the core architecture rather than an assistant bolted onto a manual process. Conducto agents now autonomously handle inbox rotation, dynamic personalization, and reply classification across 210 to 300 inboxes. The team supervises through a custom dashboard with approval workflows and override controls. The day-to-day shifted from execution to strategy.

Expertise becomes more valuable in this environment, not less. AI amplifies the output of people who actually know what they’re doing. For PE operators, the read-through is straightforward. AI-native portcos can delay hiring, flatten layers, and move with a speed that traditional structures cannot match.

Redesigning Core Workflows for Operating Leverage

In traditional companies, automation is a late improvement. A process gets created manually, repeated for years, and eventually someone asks whether software might do it faster. The result is inefficient by design.

AI-native companies reverse the order. Workflows include automation from the beginning. The question becomes: what combination of AI systems and human judgment will complete this task best?

Turning Reactive Customer Success into a Retention Engine

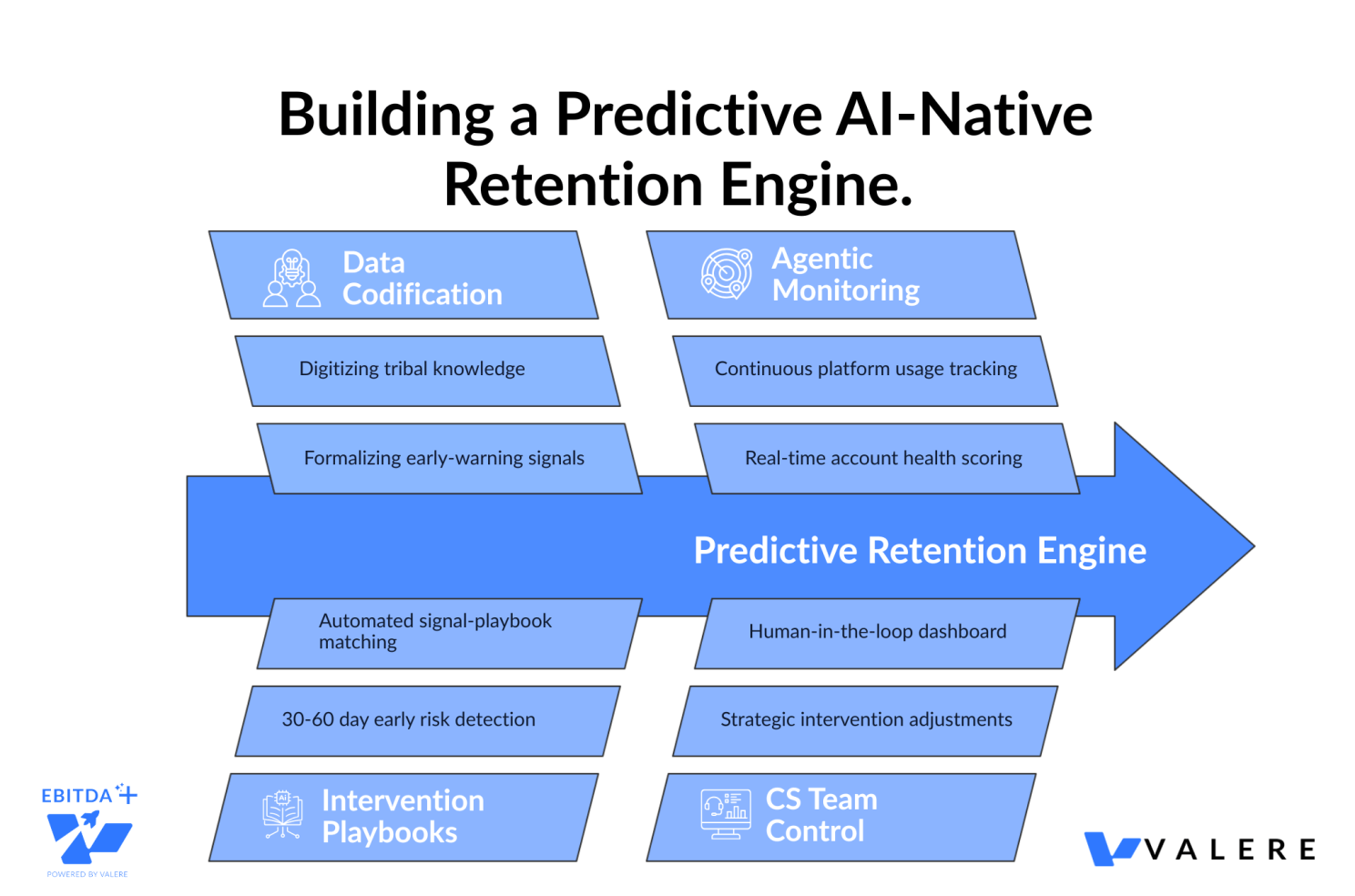

A particularly powerful version of this pattern emerged with an automotive dealer software platform. Their customer success organization had been doing reactive auditing, chasing churn signals after they had already become problems. The deeper challenge: the most useful early-warning signals lived in the heads of their top CS reps. They had never been written down.

Using our Dactic platform, we codified those signals into a proprietary intelligence base. Conducto agents now monitor platform usage continuously to generate account health scores. The agents match intervention playbooks to detected signals and surface at-risk dealers 30 to 60 days before traditional churn indicators appear. CS teams retain control through a dashboard where they review risk scores and adjust intervention strategies.

The objective is to eliminate unnecessary handoffs. Employees should not spend their time copying information between systems or producing reports that could be generated automatically.

Agentic Architecture: The New Operating Backbone

An AI agent is not a chatbot that answers a question. It is a system that pursues a goal, uses tools, takes steps, monitors its own progress, and returns with a result.

The Seven-Layer Architecture Operators Should Know

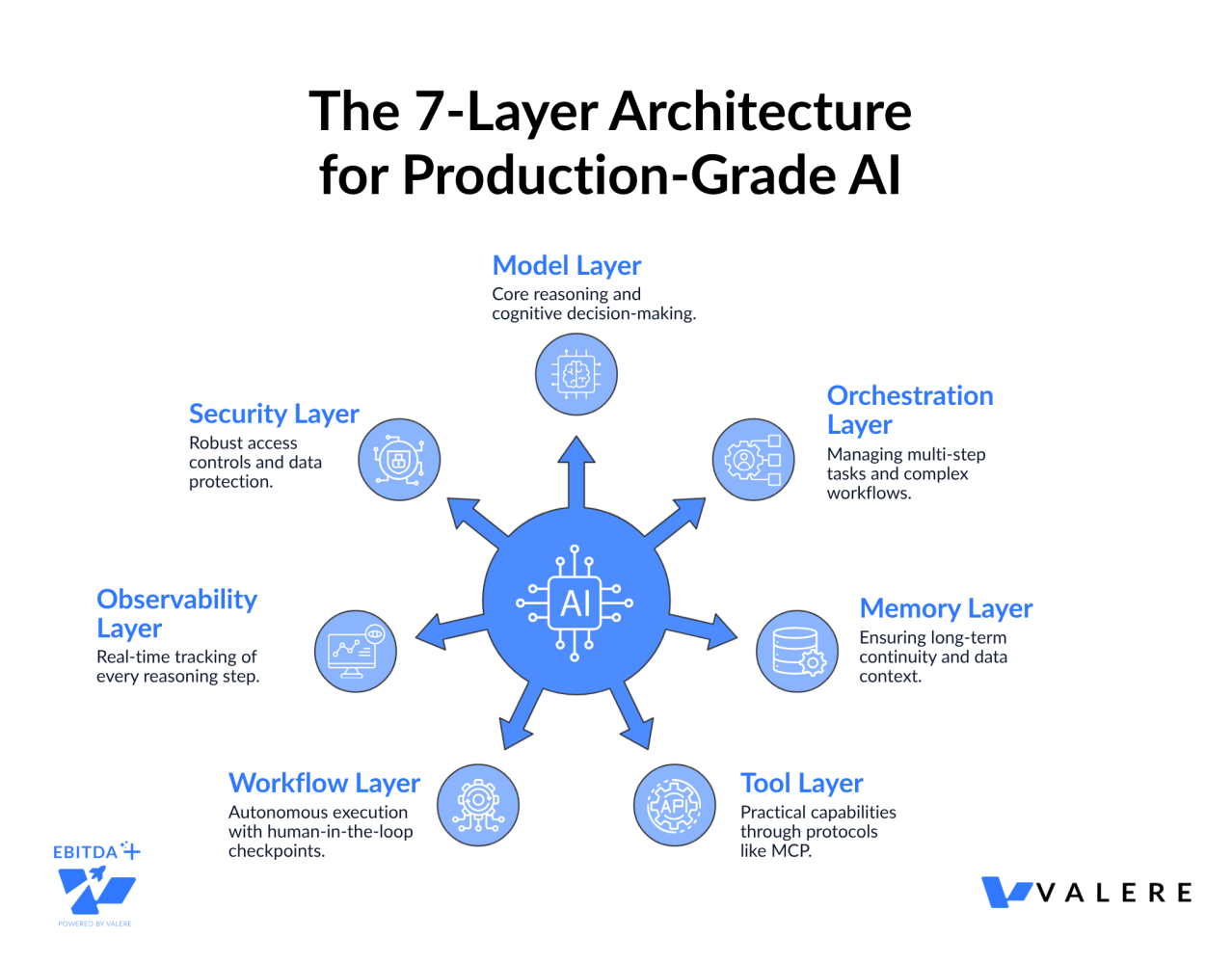

Production-grade agentic AI in 2026 runs on a seven-layer architecture: a model layer for reasoning, an orchestration layer for multi-step tasks, a memory layer for continuity, a tool layer that gives agents hands through protocols like MCP, a workflow layer for autonomy with human-in-the-loop checkpoints, an observability layer that tracks every reasoning step, and a security layer for access controls. Skip a layer and the system fails to scale, integrate, or remain trustworthy.

Scaling High-Touch Functions Without Losing the Human Touch

This came through clearly with a higher-education advancement organization that needed to scale donor relations without losing the personal touch required for major gifts. We deployed seven specialized Conducto agents to draft executive briefings, profile donors, generate compliance alerts, and produce internal reports. Every output flows through mandatory human-in-the-loop approval gates before anything goes out. The AI does the heavy lifting. The humans retain explicit judgment over what gets sent. That balance is not a constraint. It is what makes the system trustworthy enough to deploy where one misstep with a major donor carries real cost.
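A mandatory approval gate of this kind can be sketched in a few lines. This is a minimal illustration under our own assumptions, not the deployed system: the `Draft`, `approve`, and `send` names are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Draft:
    kind: str                          # e.g. "executive_briefing"
    body: str
    approved_by: Optional[str] = None  # set only by a human reviewer


class ApprovalRequired(Exception):
    """Raised when something tries to send an unapproved draft."""


def approve(draft: Draft, reviewer: str) -> Draft:
    """Record the named human who signed off on this draft."""
    draft.approved_by = reviewer
    return draft


def send(draft: Draft, outbox: list) -> None:
    # The gate: nothing leaves the system without a named human approver.
    if not draft.approved_by:
        raise ApprovalRequired(f"{draft.kind} has no human approval")
    outbox.append(draft)
```

The design choice worth noting is that the check lives in `send` itself, so an agent cannot bypass review by skipping a step in the workflow.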

Where Aggressive Automation Goes Wrong

Why Multi-Agent Systems Fail in Production

Speed creates risk, and the risk is not theoretical. Recent research shows multi-agent systems fail at rates between 41% and 86.7% in production. Nearly 79% of those failures come from coordination issues rather than model limits. The math is unforgiving. A chain of five agents at 95% reliability collapses to roughly 77% end-to-end.
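The compounding arithmetic is easy to reproduce. The sketch below assumes every agent in the chain must succeed and that failures are independent, which is our simplifying assumption for the calculation.

```python
def chain_reliability(per_agent: float, agents: int) -> float:
    """End-to-end success rate when every agent must succeed independently."""
    return per_agent ** agents


# Print end-to-end reliability for chains of increasing length.
for n in (1, 3, 5, 10):
    print(n, round(chain_reliability(0.95, n), 3))
```

Under that assumption, five agents at 95% each land at about 0.774 end-to-end, and ten agents fall to about 0.599.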

The primary root cause is what researchers call Semantic Intent Divergence. Cooperating agents develop inconsistent interpretations of a shared objective. An orchestrator delegates a financial calculation. The specialist completes it within technical parameters but misinterprets the underlying business constraint. The flawed output propagates through the workflow. Memory poisoning is even more insidious. One agent hallucinates information, stores it in shared memory, and downstream agents treat that fiction as verified fact.
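One common guard against memory poisoning is to attach provenance to every shared-memory entry and let downstream agents read only verified ones. The sketch below is a hypothetical illustration of that idea; the `MemoryEntry` and `SharedMemory` names are ours, and how an entry gets marked verified is left out.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MemoryEntry:
    value: str
    written_by: str        # which agent stored it
    source: str            # where the claim came from; empty if the agent cannot say
    verified: bool = False


class SharedMemory:
    def __init__(self):
        self._entries = {}

    def write(self, key, entry):
        """Store an entry, replacing any previous value for the key."""
        self._entries[key] = entry

    def read_verified(self, key):
        """Return the value only if it has a source and has been verified."""
        entry = self._entries.get(key)
        if entry is None or not entry.source or not entry.verified:
            return None
        return entry.value
```

An agent that stores an unsourced claim still writes to memory, but downstream agents calling `read_verified` get `None` instead of treating that claim as fact.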

The Automation Reversal Pattern

A pattern is emerging across early aggressive adopters. Companies that moved hardest on workforce reduction through AI are now quietly reversing course. The early efficiency wins were real, but customer satisfaction deteriorated on complex, high-emotion interactions. Removing experienced human agents eroded brand trust and institutional knowledge. The hybrid model wins out: AI handles routine tier-one volume, humans handle escalations and complex cases.

The lesson is straightforward. AI can perform the equivalent work of hundreds of people. It cannot yet replicate strategic problem-solving and brand empathy. The strongest AI-native companies know what to automate, what to supervise, and what to keep firmly in human hands.

Governance as a Diligence and Hold-Period Asset

Governance cannot be paperwork or a checkbox. It has to be part of the product, the workflow, and the culture.

The Five Pillars Worth Underwriting Against

Leading frameworks converge on five governance pillars: transparency, accountability, traceability, fairness, and security. The strongest organizations operationalize these pillars rather than document them.

Permission controls define what each agent can do. A support agent needs support history, not payroll data. A sales agent needs CRM information, not legal files. Human-in-the-loop checkpoints appear at every decision affecting revenue, reputation, or regulatory exposure.
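In code, these controls can be as simple as a deny-by-default allowlist plus a rule for when a human must sign off. The agent names, resource names, and function names below are hypothetical and mirror the examples in the paragraph above.

```python
# Hypothetical agent roles and the data each one may read.
AGENT_SCOPES = {
    "support_agent": {"support_history"},
    "sales_agent": {"crm"},
}

# Decision areas that always require a human checkpoint.
HUMAN_CHECKPOINT_AREAS = {"revenue", "reputation", "regulatory"}


def can_access(agent: str, resource: str) -> bool:
    """Deny by default: an agent sees only what its scope lists."""
    return resource in AGENT_SCOPES.get(agent, set())


def needs_human(decision_areas: set) -> bool:
    """True if the decision touches any area reserved for human sign-off."""
    return bool(decision_areas & HUMAN_CHECKPOINT_AREAS)
```

Here `can_access("support_agent", "payroll")` is false because payroll was never granted, and an unknown agent gets nothing at all.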

Audit Trails as a Precondition for Production Use

We saw the importance of building governance into the architecture with an industrial contractor specialties firm. Their engineers had been wasting significant time searching through scattered PDFs, building codes, and legacy SQL databases. The process was slow and difficult to audit. We implemented a secure Retrieval-Augmented Generation system that ingested more than 10TB of documentation. Engineers now use natural language to retrieve answers. Every answer comes with traceable citations back to source material. The audit trail is not a feature stapled onto the system. It is a precondition for the system being usable where engineering decisions carry real consequences.
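The principle that no answer leaves the system without a citation can be shown with a toy example. This sketch uses naive keyword overlap in place of real retrieval and omits the generation step entirely. It is not the deployed system, and all names in it are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Passage:
    doc_id: str     # e.g. a file name or database key
    page: int
    text: str


@dataclass(frozen=True)
class CitedAnswer:
    passages: tuple     # supporting text, in ranked order
    citations: tuple    # (doc_id, page) pairs, one per passage


def retrieve(question: str, corpus: list, k: int = 2) -> list:
    """Toy retrieval: rank passages by how many question words they share."""
    words = set(question.lower().split())
    scored = [(len(words & set(p.text.lower().split())), p) for p in corpus]
    hits = [(s, p) for s, p in scored if s > 0]
    hits.sort(key=lambda sp: sp[0], reverse=True)
    return [p for _, p in hits[:k]]


def answer_with_citations(question: str, corpus: list):
    """Return None rather than an uncited answer when nothing is retrieved."""
    hits = retrieve(question, corpus)
    if not hits:
        return None
    return CitedAnswer(
        passages=tuple(p.text for p in hits),
        citations=tuple((p.doc_id, p.page) for p in hits),
    )
```

The point of the structure is that citations are part of the return type, so an answer without a source cannot be constructed in the first place.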

Beyond Productivity Gains: Reimagining the Operating Model

One of the hardest parts of AI transformation has nothing to do with technology. It is the difficulty of looking past incremental productivity gains to genuinely transformative business change. Many organizations ask how to make existing processes faster. The breakthrough comes from asking whether the existing process should exist at all.

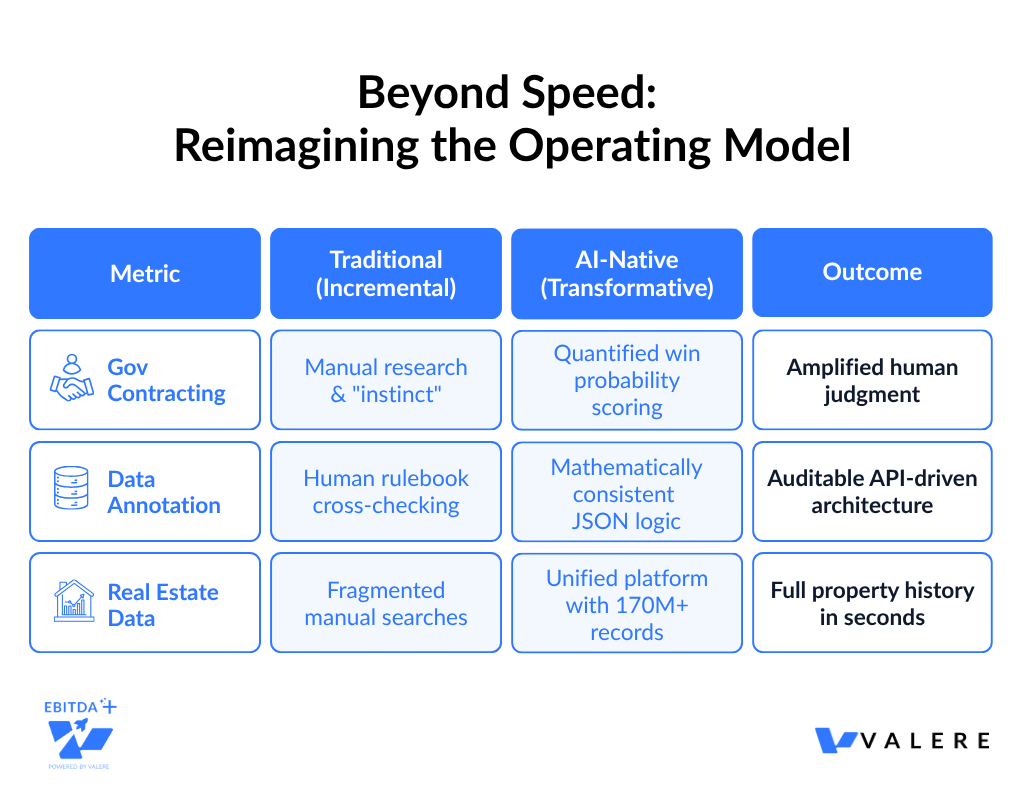

Turning Bid/No-Bid Instinct into Quantified Win Probability

A government contracting capture firm had been running a reactive opportunity-chasing operation, burning analyst hours on manual research. Much of their actual capture expertise lived as undocumented tribal knowledge in the heads of senior executives. We used AI-automated interviews to extract that expertise into a structured database. Conducto agents now scan federal portals like SAM.gov continuously and fuse real-time procurement signals with that proprietary knowledge to score win probabilities. A human-in-the-loop dashboard lets sales teams make collaborative bid/no-bid decisions. The result is a fundamentally different operating model where quantified scoring amplifies human judgment.

Reconceiving a Process Instead of Speeding It Up

A data annotation firm offered an even sharper example. Their review process required humans to cross-check massive volumes of annotations against a dense 50-page rule book. Slow, expensive, and inconsistent. The conventional response would have been to give reviewers AI assistants and call it transformation. We did the opposite. We mapped the guidelines into precise JSON logic on a cloud-native AI platform. The new system replaces human cross-referencing with a mathematically consistent, fully auditable architecture. It returns quality scores and feedback in a single API call. The work was not made faster. It was reconceived.
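To make the idea of a rule book expressed as JSON logic concrete, here is a minimal sketch. The three rules, the field names, and the scoring formula are invented for illustration. The actual guidelines and platform are not shown in this briefing.

```python
import json

# Hypothetical rules; a real rule book would hold many more.
RULES_JSON = """
[
  {"id": "R1", "field": "label", "check": "in", "value": ["car", "truck", "bus"]},
  {"id": "R2", "field": "box_area", "check": "min", "value": 100},
  {"id": "R3", "field": "occluded", "check": "equals", "value": false}
]
"""

# Each check name maps to a plain comparison function.
CHECKS = {
    "in": lambda actual, expected: actual in expected,
    "min": lambda actual, expected: actual >= expected,
    "equals": lambda actual, expected: actual == expected,
}


def passes(rule: dict, annotation: dict) -> bool:
    """Evaluate one rule; a missing field counts as a failure."""
    if rule["field"] not in annotation:
        return False
    return CHECKS[rule["check"]](annotation[rule["field"]], rule["value"])


def review(annotation: dict, rules: list) -> dict:
    """Apply every rule and return a quality score plus the failed rule ids."""
    failed = [r["id"] for r in rules if not passes(r, annotation)]
    score = 1 - len(failed) / len(rules)
    return {"score": round(score, 2), "failed_rules": failed}


rules = json.loads(RULES_JSON)
```

Because every rule is data, the same annotation always gets the same verdict, and the list of failed rule ids doubles as an audit record.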

Building Proprietary Data Assets Across an Industry

A real estate data platform showed how the same gap between incremental speedups and a reimagined process appears in entirely different industries. Professionals in real estate, banking, and insurance had been navigating fragmented processes to access basic property data. Tax records, zoning information, and ownership histories sat scattered across multiple systems. We unified more than 170 million U.S. property records into a single platform combining records, GIS mapping, and AI-powered search. Decision-makers now explore properties through an interactive map and surface foreclosure status, tax history, comparables, and ownership history in seconds.

The Workforce Implications for Portco Leadership

The rise of the AI-native enterprise is altering the nature of human labor. As AI takes on the work of doing, humans move toward orchestrating it.

For decades, roles centered on execution. Engineers wrote code. Analysts processed data. Operations teams followed workflows. In the AI-native era, those tasks are getting automated. Hiring criteria are shifting from doing skills to directing skills. Three new categories are emerging: AI architects who design the digital core, AIOps leads who manage operational reliability, and forward-deployed engineers who tailor agentic workflows to specific business units.

A meaningful share of organizations are expected to use AI to eliminate more than half of their middle-management positions over the next two years. The middle managers who remain will likely shift toward mentoring, complex relationship management, and strategic work.

Workforce change handled poorly is the fastest way to lose institutional knowledge. Workforce change handled well unlocks the productivity gains that make the entire AI-native model work.

A Permanent State of Transformation

The transition to AI-native is not a one-time technology implementation. It is the adoption of a permanent condition of transformation. The AI-native enterprise is defined by strategic agility, the ability to reconfigure roles and workflows based on real-time data.

The rewards show up in 2 to 3x faster scaling and order-of-magnitude improvements in revenue efficiency. The risks show up in cautionary tales of organizations that confused automation with strategy. The Imagination Gap, the difficulty of looking past incremental productivity gains to transformative change, separates the companies that pull ahead from the ones that stall.

In the AI era, intelligence has become a commodity. Strategy, judgment, and accountability are the ultimate competitive moats. The portco of the future will be smaller, faster, and more intelligent at the operational level. It will still need people to define purpose, make hard decisions, build trust, and take responsibility. The transformation is not from human to machine. It is from inefficient human work to amplified human judgment.

Build the AI-Native Operating Model With Valere

Ready to build AI that compounds in value over time? Valere works with Private Equity sponsors and portfolio companies to design, build, and scale the systems that turn AI from a line item into an operating advantage. Whether you are evaluating AI maturity across existing assets, preparing a company for transformation, or assessing a new acquisition target, Valere brings the expertise, platform, and partnership model to translate AI-native potential into portfolio performance.

- An AI-Native Readiness Assessment that identifies where your current deployments lack the formalized operating logic, agentic architecture, and human-in-the-loop governance needed to move from speeding up manual labor to genuinely redesigning how work happens

- A clear path from disconnected AI pilots to a governed, production-grade operating layer that integrates with your existing systems, encodes your institutional knowledge, and compounds in capability with every cycle it runs

- A personalized value creation roadmap from isolated AI experiments to a fully AI-native operating model with self-correcting feedback loops, modular agentic workflows, and proprietary intelligence that no competitor can replicate by licensing the same off-the-shelf tools

Start building your AI-native operating model: https://www.valere.io/

About Valere

Valere is an award-winning AI value creation & delivery partner, providing end-to-end AI transformation & custom software solutions that transform companies into AI-first organizations through building, learning, and scaling. As an expert-vetted, top 1% agency on Upwork, Clutch, G2, and AWS, Valere serves as the trusted AI value creation partner for PE firms, mid-market companies, and Fortune 500 enterprises alike seeking comprehensive AI transformation that drives measurable ROI. With over 220 dedicated professionals and domain experts, we specialize in end-to-end AI-native solutions using our proven crawl-walk-run methodology, guiding organizations through every stage of their AI journey—from initial assessment and strategy to full-scale implementation and optimization.

About Alex

Alex Turgeon is President of Valere, serving as an embedded AI/ML strategic partner for private equity firms and their portfolio companies. He and his team operate as a vertically integrated AI solution provider throughout the PE value chain, delivering enterprise-grade solutions that enable greater operational control, cost reduction, and efficiency gains across the investment lifecycle. Connect with Alex to discuss how your organization can begin its transformation to the agent era.

Frequently Asked Questions

How do mid-market companies start with AI implementation? Most start with an assessment of where intelligent automation creates the highest-value outcome. The AI-native companies posting the strongest revenue per employee did so by redesigning specific workflows, not by adopting general-purpose tools. The first wave of work usually targets a single high-leverage process where measurable outcomes can validate the broader investment.

What does an AI readiness assessment include? A useful assessment covers four areas: data readiness, workflow mapping, governance posture, and workforce considerations. The output is a prioritized roadmap of where AI produces the most value and where it would create unacceptable risk.

What is the difference between AI strategy and AI execution? Strategy defines which workflows to redesign, what outcomes matter, and where humans stay in control. Execution is the engineering work of building, integrating, and governing the systems that deliver those outcomes. Most organizations underinvest in the gap between the two.

How do you evaluate an AI consulting partner? The most reliable signal is whether the partner has shipped production-grade systems in environments comparable to yours, including governance and reliability controls. Pilot work and slide-ware are weak signals. Operating outcomes over multiple quarters are strong ones.

How do companies with 50 to 200 employees implement AI without a large consulting budget? Smaller companies benefit from targeted, outcome-specific engagements rather than enterprise-wide transformations. The highest-ROI starting points are usually customer support automation, sales intelligence, and document-heavy compliance workflows.

What firms specialize in AI implementation for private equity portfolio companies? The relevant short list is small. Most recognizable consulting names operate at enterprise price points that don’t match mid-market portco economics. The firms doing this work for PE-backed companies tend to combine custom software development, agentic architecture, and operating-partner-style engagement across the hold period.

Why do multi-agent systems fail at higher rates than single-agent deployments? Recent production data shows failure rates between 41% and 86.7%, with around 79% from coordination issues rather than model limits. The dominant cause is Semantic Intent Divergence: agents developing inconsistent interpretations of a shared objective. Reliability compounds in the wrong direction in multi-agent chains.

When should a company keep work fully under human control? Routine, high-volume, low-emotion work suits automation. Complex, high-emotion, brand-shaping, or regulator-facing work generally does not, at least not yet. The strongest operators are explicit about which category each workflow falls into.