TL;DR: 3 Key Takeaways

- The Market Nobody Is Talking About: The real AI opportunity is not the tools budget but the services and outsourcing budget, which is roughly six times larger, remains structurally underserved by the current generation of AI tooling, and is already priced around outcomes rather than headcount.

- The Intelligence vs. Judgment Distinction: The single most important question in any AI deployment is not what the technology can do, but where in the workflow the work is rules-based intelligence that AI can own today, and where it is accumulated human judgment that still needs to stay in the loop.

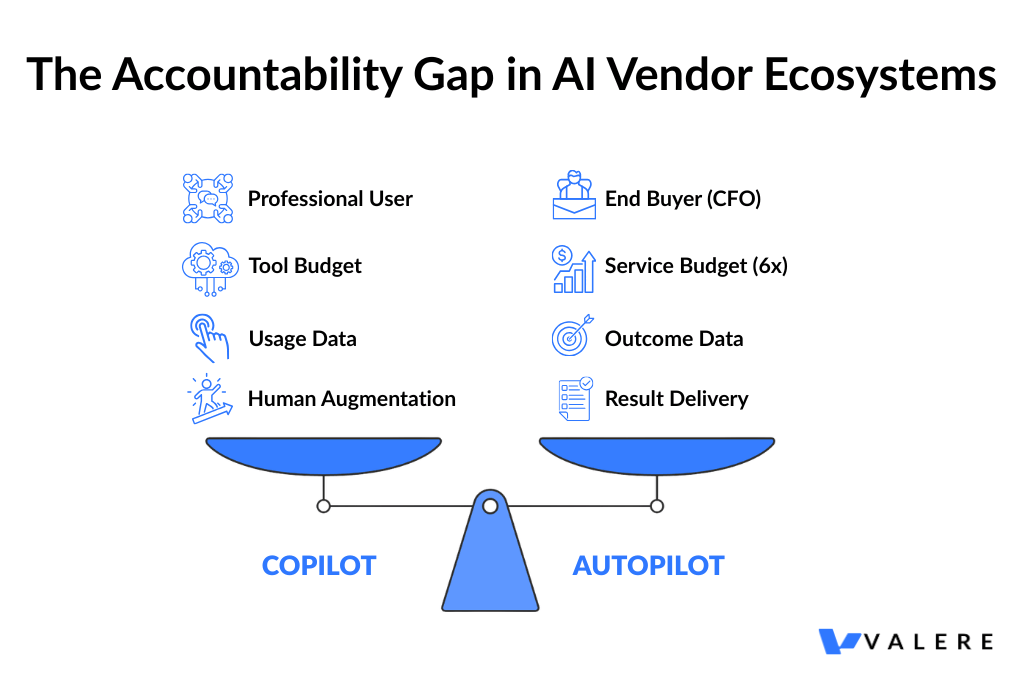

- The Model Distinction That Drives the Multiple: Copilots generate usage data; autopilots generate outcome data. That distinction is not a nuance; it is the compounding mechanism that separates AI businesses building durable, defensible moats from those racing the model providers for feature parity they will eventually lose.

Since 2000, the dominant enterprise technology thesis held that the highest-value position in any market was the software layer, the tools professionals use to do their work. That assumption is under serious pressure, and from where we sit at Valere, working across professional service verticals day to day, the pressure is not theoretical.

The question we hear most often is no longer whether AI will displace professional service workflows. It is which workflows, in which sequence, and on what timeline. Where does AI handle the work reliably, and where does it still need a human in the loop? The answer varies by profession, by task type, and by how much proprietary data sits behind the system doing the work.

This report maps that boundary across ten professional service categories, drawing on publicly available market data, third-party research, and patterns we have observed directly through client engagements at Valere.

The Race You Cannot Win

Every founder building an AI-powered tool is asking the same question: what happens when the next version of the underlying model makes their product a native feature? They are right to ask. Model providers move quickly, and the capability gap that justified a standalone tool today can disappear in a single release cycle.

If you sell the tool, you are in a race against the model. If you sell the work, every improvement in the model makes your service faster, cheaper, and harder to displace. A business might spend $10,000 a year on accounting software and $120,000 a year on an accountant to close the books. The next generation of AI-native services firms will simply close the books. No software licence required, no headcount to manage, outcome delivered.

This is the structural shift from Software as a Service to Service as the Software: a reordering of the revenue model from capturing software budgets to competing for services budgets, which industry analyst estimates put at approximately six times larger by total spend.

A Market Architecturally Designed to Fail the Buyer

Before understanding where AI value creation goes next, it helps to be precise about where it has consistently fallen short, and why that failure is not accidental.

Eighty-seven percent of AI initiatives stall between pilot and production. Ninety-five percent never generate measurable ROI. These outcomes are not produced by a lack of ambition or investment on the buyer’s side. They are produced by a vendor ecosystem structurally misaligned with what buyers actually need: a delivered result.

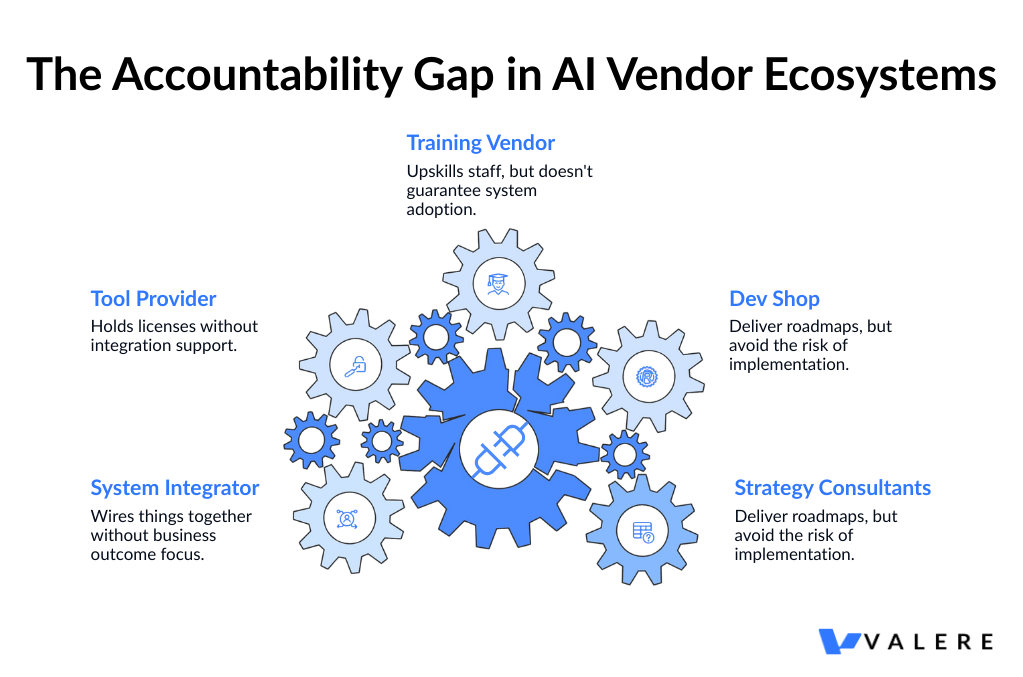

The Fragmentation Problem

The failure operates through two compounding mechanisms. The first is fragmented accountability. A typical enterprise AI engagement involves a strategy consultant who designs the roadmap, a development shop that builds the system, a training vendor who prepares the workforce, a tool provider who licenses the capability, and a system integrator who connects the components. Each vendor owns a piece. None owns the outcome. Every handoff creates an opportunity for accountability to dissolve, and the client absorbs the coordination burden they hired others to eliminate. The client becomes the de facto systems integrator, carrying responsibility for making the pieces work together without the expertise or leverage to do so effectively.

The Tool-First Trap

The second mechanism is tool-first thinking. Most AI investment is structured around capability acquisition: purchasing tools that enhance what professionals can do rather than purchasing the work itself. Any product built on a foundation model is one update away from obsolescence. Tool vendors narrow their own competitive moat with every release cycle and, in doing so, sell their clients a seat in the same race.

The practical consequences are familiar to most technology leaders: pilots that never deploy, innovation labs that never scale, chatbots that do not integrate, roadmaps without execution, training programmes disconnected from the systems people actually use. This is not an anomaly. It is the market’s default operating model.

The five percent of organisations that achieve genuine, measurable AI ROI tend to share one structural advantage: a single accountable owner of the outcome, end to end.

From Structural Failure to Service as the Software

Valere has sat with this diagnosis through a significant number of client engagements, and it shapes how we approach every piece of work. Organisations do not fail at AI because the technology is unready. They fail because nobody owns what happens after the tool gets deployed.

The engagements that generate real returns are the ones where accountability for the outcome stays in place until the result is delivered. In commercial terms, this means competing for the services and outsourcing budget rather than the software budget.

AI tools that augment professionals compete for the tool budget. AI services that deliver outcomes directly compete for the work budget, which is deeply entrenched in long-term outsourcing contracts and structurally insulated from the feature competition that erodes tool margins. Every improvement in underlying AI capability is a threat to the tool vendor. For an outcome owner, it is a tailwind.

The $87 billion experimentation economy, encompassing pilots, tooling, and innovation spend, is the entry point into this market. The far larger services economy it sits adjacent to is the long-term prize.

Intelligence vs. Judgment: The Fault Line That Defines the Opportunity

One of the more useful distinctions to emerge from working across professional service verticals is the difference between intelligence and judgment, two terms that are often used interchangeably but describe very different kinds of work.

Defining Intelligence

Intelligence, as Valere uses it, is the ability to process complexity according to rules. Writing code, translating a specification into a working implementation, medical coding, contract drafting, insurance form completion: these are tasks where the rules are intricate but they are, ultimately, rules. Given enough training data and model capacity, AI can execute this work autonomously.

Defining Judgment

Judgment is something different. It is experience crystallised into instinct: knowing which feature to build next, deciding when to take on technical debt, sensing whether a candidate will thrive in a particular culture, recommending a strategic direction under genuine uncertainty. Judgment is built on years of practice and pattern recognition in a specific domain. It is, for now, much harder to automate.

The opportunity map for AI-native services is essentially a function of where intelligence ends and judgment begins. The higher the ratio of intelligence to judgment in a field, the sooner autonomous AI services will dominate it.

In practice, the first question that shapes our approach in any new engagement is not what AI can do here, but where in this workflow is the work intelligence, and where is it judgment. Getting this wrong, particularly overestimating how far into judgment territory AI can reliably operate, is one of the most common and costly mistakes we see in enterprise AI deployments.

How Outcome-Based Solutions Apply This Distinction

This is where Dactic, our knowledge infrastructure, plays a practical role. Rather than relying solely on what general-purpose models know, Dactic extracts the tacit domain knowledge embedded in an organisation’s people, processes, and historical decisions, and converts it into training intelligence specific to that organisation’s context. The result is AI that reflects how a particular business actually thinks about its work.

Conducto, our orchestration layer, coordinates how that intelligence is deployed across workflows at scale, progressively extending the boundary of what AI handles as domain-specific data accumulates. The governing principle is that today’s judgment becomes tomorrow’s intelligence, provided you are capturing the right data along the way.

Copilots and Autopilots: Two Very Different Business Models

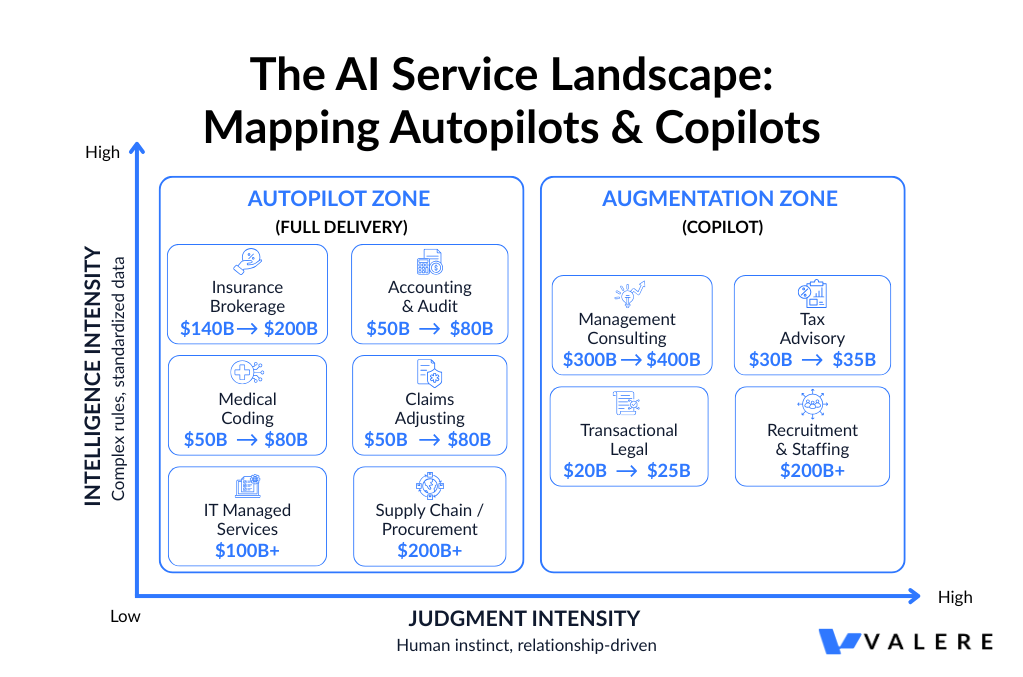

The AI services landscape organises itself around two distinct models.

A copilot sells the tool. It places AI in the hands of a professional and augments their productivity. The professional remains the customer, retains responsibility for the output, and the relationship is essentially one of software licensing. Leading AI copilot platforms sell to law firms and investment banks. These are real businesses with real revenue, but they are competing for the tool budget.

An autopilot sells the work. It takes responsibility for the outcome and delivers it directly to the end buyer, cutting out the professional intermediary. An AI-native legal autopilot sells contract drafting directly to the company that needs the NDA, not to outside counsel. An AI insurance placement service sells directly to the CFO, not to the broker.

The total labour spend across major professional service categories, including accounting, legal, insurance, healthcare administration, IT, recruitment, and consulting, runs into the trillions. Autopilots compete for a share of that market. The most defensible AI businesses are not the ones making professionals more productive. They are the ones delivering professional-grade outcomes without the professional sitting in the middle.

The Autopilot Playbook: Start Where Outsourcing Already Exists

There is a clear strategic logic to where AI service models gain traction first: outsourcing is the wedge.

When a task is already outsourced, three things are simultaneously true. The organisation has already accepted that this work can be done externally. There is an existing budget line that can be substituted cleanly without internal restructuring. And the buyer is already purchasing an outcome, not a headcount. Replacing an outsourcing contract with an AI-native services provider is a vendor swap. Replacing internal headcount is a reorganisation. One is operationally and politically straightforward. The other is not.

The practical playbook follows: start with outsourced, intelligence-heavy work, deliver the outcome reliably, and then expand toward insourced, judgment-heavy work as proprietary data accumulates and the domain model matures. The outsourced task is the wedge. The insourced work is the long-term addressable market.

In every vertical Valere has entered, the entry point has been defined by the same criteria: work that is already outsourced, quality that can be independently verified against defined standards, and a cost structure with a meaningful services component.

Mapping the Opportunity: Where Autopilots Win First

Applying this framework across major professional service categories reveals a landscape with significant near-term opportunity concentrated in a relatively small number of verticals.

Insurance Brokerage, $140 to $200 Billion

This is the largest dollar opportunity on the map, and one of the most structurally attractive. Standard commercial lines insurance is a highly standardised product. The broker’s core contribution, shopping policies across carriers, comparing coverage terms, and completing the associated paperwork, is intelligence work throughout. There is no creative interpretation, no proprietary strategic insight. It is a process of structured comparison and form completion executed by a fragmented workforce of tens of thousands of independent brokers, none of whom control enough of the customer relationship to mount a serious response to AI displacement.

The fragmentation itself is a signal worth paying attention to. In markets where a small number of dominant players control distribution, incumbents can respond to disruption by acquiring or investing in new entrants. In a market as fragmented as commercial insurance brokerage, there is no such consolidating force, and the conditions for a new entrant to build market share directly with end customers are unusually favourable. Several early-mover AI insurance placement services are already positioning on exactly this model, selling placement as an outcome to the CFO rather than selling software to the broker.

Accounting and Audit, $50 to $80 Billion Outsourced in the US

The accounting profession is facing a structural talent crisis that is accelerating AI adoption faster than almost any other sector. According to research from the American Institute of CPAs, the profession lost approximately 340,000 accountants from the workforce over roughly five years, while demand for accounting services continued to grow. A disproportionate share of currently licensed CPAs are approaching retirement age, with the licensing pipeline thinning due to long credentialing timelines and starting salaries that lag those in technology and financial services.

This is not a temporary staffing problem. It is a structural supply-demand imbalance, and in our experience, finance teams are not merely open to AI-driven accounting services. They are actively seeking them, because the human alternative is becoming genuinely difficult to source at competitive cost. The entire audit and close cycle, covering journal entries, reconciliations, variance analysis, and reporting, is intelligence work. The rules are complex and jurisdiction-specific, but they are rules. AI systems trained on sufficient volume can execute this work with a level of consistency and auditability that human teams struggle to match at scale.

Healthcare Revenue Cycle Management, $50 to $80 Billion Outsourced in the US

Healthcare carries an unearned reputation for being impenetrable to AI because the clinical work is so deeply judgment-dependent. That reputation obscures a substantial adjacent opportunity in the administrative layer, where the work is almost entirely intelligence-based.

Medical coding, the process of translating clinical notes and diagnostic records into the approximately 70,000 standardised ICD-10 codes used for billing and reimbursement per federal Centers for Medicare and Medicaid Services documentation, is a rules-based task of significant complexity. It requires deep knowledge of coding standards, payer requirements, and clinical terminology. But complexity is not the same as judgment. The codes are standardised. The mapping rules are documented. The exceptions are enumerable. Pilot programmes published by healthcare AI technology firms report coding accuracy at parity with or above experienced human coders, though independent third-party validation at scale remains limited.

The outsourcing in this market is already mature and outcome-based, with coding accuracy and claims resolution rates as standard contractual metrics. An AI service entering this market is not asking buyers to change how they procure or evaluate what they buy. It is offering a better-priced, faster-executing version of what they are already purchasing from someone else.

Claims Adjusting, $50 to $80 Billion Including TPAs

On the other side of the insurance policy lies an equally large and equally accessible market in claims adjusting. Standard-line claims, covering property damage, auto, and straightforward liability, are settled by interpreting policy language against damage schedules and setting reserves using actuarial tables. Like medical coding, this is complex intelligence work. Adjusters are applying rules, not making strategic calls.

The workforce dynamics mirror accounting. Claims adjusters are ageing out of the profession and not being replaced at sufficient volume. The market is heavily outsourced to independent adjusters and large third-party administrators, meaning the infrastructure for external outcome-based service delivery is already normalised. Insurance also represents something unusual from a strategic standpoint: two distinct AI service surfaces within a single industry, placement on one side and claims on the other, which effectively doubles the addressable opportunity within a single vertical.

The pattern that defines claims adjusting, applying a dense, structured rulebook to complex real-world information and producing a consistent, auditable outcome, appears repeatedly across adjacent domains. In one Valere engagement with an annotation quality assurance platform, human teams were manually cross-checking large volumes of outsourced image and text annotations against a detailed rulebook. The process was slow, inconsistently applied, and difficult to audit.

Valere automated this work on a major cloud AI platform, mapping the rulebook into precise JSON logic that evaluates each annotation and produces a mathematically consistent quality score with a complete audit trail. The underlying principle, that rules-based work executes more consistently at scale through AI than through human review, applies equally in claims adjusting, medical coding, and every domain where dense, structured policy governs the work.
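As a rough illustration of what "mapping a rulebook into JSON logic" can look like, the sketch below scores one annotation against a small rulebook and records an audit entry per rule. It is not the system described above: the rule format, the field names (`label`, `area`), the operators, and the weights are all hypothetical, chosen only to show the shape of the idea.

```python
import json

# Hypothetical rulebook expressed as JSON: each rule names a field to inspect,
# an operator, an optional comparison value, and a weight.
RULEBOOK_JSON = """
[
  {"id": "label_present", "field": "label", "op": "non_empty", "weight": 2},
  {"id": "box_min_area",  "field": "area",  "op": "gte", "value": 100, "weight": 1},
  {"id": "label_allowed", "field": "label", "op": "in",
   "value": ["car", "truck", "bus"], "weight": 2}
]
"""


def check(rule, annotation):
    """Evaluate a single rule against an annotation and return True or False."""
    actual = annotation.get(rule["field"])
    op = rule["op"]
    if op == "non_empty":
        return bool(actual)
    if op == "gte":
        return actual is not None and actual >= rule["value"]
    if op == "in":
        return actual in rule["value"]
    raise ValueError(f"unknown operator: {op}")


def score_annotation(annotation, rules):
    """Return a weighted score in [0, 1] plus a per-rule audit trail."""
    audit = []
    earned = 0
    total = 0
    for rule in rules:
        passed = check(rule, annotation)
        total += rule["weight"]
        if passed:
            earned += rule["weight"]
        audit.append({"rule": rule["id"], "passed": passed, "weight": rule["weight"]})
    return {"score": earned / total, "audit": audit}


rules = json.loads(RULEBOOK_JSON)
result = score_annotation({"label": "car", "area": 64}, rules)
print(result["score"])  # 0.8: the area rule fails, the two label rules pass
for entry in result["audit"]:
    print(entry)
```

Because the same rules are applied in the same order to every annotation, two identical annotations always receive the same score, and the audit list shows exactly which rule cost the points.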

Tax Advisory, $30 to $35 Billion

CPA licensing creates a meaningful regulatory moat in tax advisory, but the licensing requirement applies to the sign-off, not the underlying work. The substantial majority of that underlying work, covering research, analysis, compliance preparation, filing, and cross-jurisdictional reconciliation, is intelligence work that does not require a licensed professional to execute.

The multi-jurisdictional complexity that makes tax advisory expensive for SMBs and mid-market businesses is precisely what makes it attractive for AI services. No single in-house hire can maintain current expertise across federal, state, and international tax regimes simultaneously. Companies outsource this complexity because they have no realistic alternative. An AI system handling multi-jurisdiction tax compliance not only matches the outsourced alternative in capability but deepens its data advantage with every additional jurisdiction it covers, creating a compounding moat that becomes progressively harder to replicate.

An AI tax platform Valere developed in one engagement illustrates how this architecture works in practice. The platform integrates with widely-used cloud accounting software to analyse financial data, identify tax optimisation opportunities, and track regulatory changes across jurisdictions. Critically, the system design reflects the intelligence-judgment distinction directly: it automates the rules-based analysis layer, handling cross-jurisdictional compliance and optimisation modelling, while preserving human CPA judgment for client advisory decisions, report validation, and relationship management. The outcome is a model that allows CPA firms to scale capacity despite structural talent shortages, not by replacing professional judgment but by removing the intelligence work that currently consumes the majority of billable hours.

Transactional Legal Work, $20 to $25 Billion

Contract drafting, NDAs, regulatory filings, standard commercial agreements: this work sits at the high end of the intelligence ratio for the legal profession, is routinely sent to external counsel, and has a quality bar that is independently verifiable. That last characteristic matters more than it might appear. In professional services categories where the buyer cannot evaluate output quality without deep domain expertise, there is an inherent adoption barrier for AI-delivered work. Transactional legal documents do not have this problem. Whether an NDA is correctly drafted, whether a service agreement contains the requisite protective clauses, whether a regulatory filing meets the relevant requirements: these are assessments that in-house counsel and sophisticated procurement teams can make with reasonable confidence without commissioning a full external legal review.

One Valere engagement with a legal IT and administrative managed services firm illustrates how far the service autopilot principle extends into litigation support and case management. The client needed a specialised solution for a highly specific and repetitive workflow: the management of employment law case discovery, documentation, and reporting. Existing legacy processes were slow, fragmented, and prone to error. The resulting platform streamlines discovery workflows, organises case data, and delivers structured reporting as a direct digital outcome, replacing outsourced piecework with a unified, auditable system. The lesson is consistent with every other category: when work is defined enough to be outsourced, it is defined enough to be automated.

IT Managed Services, $100 Billion and Above

IT managed services has received less attention in discussions about AI services than the size of the market warrants. Every SMB outsources its IT operations to some degree. Server patching, system monitoring, user provisioning, helpdesk triage, security alert response: this is intelligence work executed on near-identical processes across thousands of similar business environments.

The existing software layer in this market sells tools to managed service providers, not to end customers. The end customer buys a service from the MSP. The AI service opportunity is to step over the MSP entirely and sell the operational outcome directly to the business at a price point that undercuts the MSP’s cost structure.

One Valere engagement with an AI-driven IT help desk provider demonstrates how this transition unfolds in practice. The client offered a human-backed Microsoft licensing help desk, a narrow but high-frequency problem for enterprise IT teams historically handled by a patchwork of consultants and manual processes. When the engagement began, the client had a functional but brittle prototype. Valere rebuilt the system as an enterprise-grade platform on a major cloud infrastructure provider, capable of handling complex licensing queries at scale. AI model fine-tuning and prompt optimisation improved response accuracy by 50 percent according to the client’s internal benchmarking, which enabled them to successfully target enterprises with over $100 million in revenue and replace their fragmented IT help-desk arrangements with a single, reliable AI-delivered outcome. The entry point was narrow: software licensing queries. The longer-term destination is the full managed IT outcome sold directly to the business.

Supply Chain and Procurement, $200 Billion and Above

Procurement is one of the more strategically interesting entry points on this map because the wedge does not require displacing an incumbent or winning an existing budget. It requires finding money that is currently being lost.

Most enterprises actively manage only the top tier of their supplier base, roughly the top 20 percent by spend, per research from a leading procurement benchmarking firm. The remaining 80 percent receive little to no active negotiation, contract management, or compliance monitoring because it is not economical to deploy human resources against relationships of that scale and value. The result is systematic contract leakage running at 2 to 5 percent of total procurement spend across the neglected tail, a figure supported by a global commercial contracting research body. An AI service that recovers this leakage is, from the buyer’s perspective, pure recovered value, with no existing budget line to compete for, no incumbent to displace, and an unusually low-friction sales motion.
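To make the leakage figure concrete, here is a minimal worked calculation. The 2 to 5 percent range comes from the paragraph above; the $40 million of unmanaged tail spend is a hypothetical input, not a figure from any engagement.

```python
def leakage_range(tail_spend, low_pct=2, high_pct=5):
    """Return the (low, high) estimated annual leakage for an unmanaged supplier tail.

    tail_spend is in whole currency units. The default percentages are the
    2 to 5 percent range quoted in the text. Integer arithmetic is used so the
    result carries no floating-point rounding.
    """
    return tail_spend * low_pct // 100, tail_spend * high_pct // 100


# Hypothetical input: $40M of annual spend sitting in the unmanaged tail.
low, high = leakage_range(40_000_000)
print(f"Estimated leakage: ${low:,} to ${high:,} per year")
```

On these assumed numbers the recoverable amount is between $800,000 and $2,000,000 a year, which is the value an outcome-priced service would be competing to recover.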

Our engagement with a government contracting firm illustrates this dynamic in a procurement-adjacent context, where the problem of unmanaged opportunity at scale is particularly acute. Using Conducto and Dactic, Valere transformed the client’s approach to contract identification from reactive monitoring to proactive, intelligence-driven pipeline management. Conducto agents operate continuously to monitor government procurement portals and federal spending databases, aggregate contract budgets, and score win probability for each opportunity using a mathematical model built from the client’s own historical bid data. The resulting dashboard presents this scored intelligence to senior sales executives, who make the final strategic bid decisions, the kind of contextual, relationship-aware reasoning that remains human work. Every bid decision feeds back into the Dactic knowledge base, continuously refining the system’s domain-specific intelligence. The same architecture applies directly to commercial procurement: automated intelligence across the long tail of supplier relationships, with human judgment reserved for the decisions that genuinely warrant it.
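The text does not specify the mathematical model used for win probability, so the sketch below substitutes a deliberately simple stand-in: a smoothed historical win rate per opportunity segment. The segment names, the bid history, and the 0.5 default for unseen segments are all assumptions made for illustration.

```python
from collections import defaultdict


def fit_win_rates(history):
    """Estimate a win rate per segment from (segment, won) pairs.

    Laplace (add-one) smoothing keeps segments with very few bids from being
    scored at exactly 0 or 1.
    """
    wins = defaultdict(int)
    bids = defaultdict(int)
    for segment, won in history:
        bids[segment] += 1
        wins[segment] += int(won)
    return {s: (wins[s] + 1) / (bids[s] + 2) for s in bids}


def rank_opportunities(opportunities, rates, default=0.5):
    """Attach an estimated win probability to each opportunity, best first."""
    scored = [(o["id"], rates.get(o["segment"], default)) for o in opportunities]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Hypothetical historical bids: (segment, whether the bid was won).
history = [
    ("it_services", True), ("it_services", True), ("it_services", False),
    ("construction", False), ("construction", False),
]
rates = fit_win_rates(history)

# Hypothetical open opportunities; "logistics" has no history and gets the default.
ranked = rank_opportunities(
    [
        {"id": "A", "segment": "construction"},
        {"id": "B", "segment": "it_services"},
        {"id": "C", "segment": "logistics"},
    ],
    rates,
)
for opportunity_id, probability in ranked:
    print(opportunity_id, probability)
```

The ranking is only an input to the decision. In the arrangement described above, the final bid call stays with the human executives, and each decision becomes a new row of history for the next fit.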

Recruitment and Staffing, $200 Billion and Above

Recruitment presents a layered opportunity that reflects the intelligence-to-judgment spectrum particularly clearly. The top of the hiring funnel, covering candidate sourcing, resume screening, initial outreach, and skills matching, is pure intelligence work that can be automated today with high reliability. The lower funnel is a different matter. Closing a strong candidate in a competitive market, assessing cultural fit, navigating the interpersonal dynamics of a search process: this is judgment work built on years of relationship-based pattern recognition. It remains human-dependent for now.

The AI service entry point is therefore in high-volume, lower-judgment hiring, roles where matching criteria are standardised, candidate pools are large, and speed of identification is the primary differentiator. The data advantage built by operating at scale in this wedge will, over time, extend the intelligence boundary upward into increasingly sophisticated hiring decisions.

Management Consulting, $300 to $400 Billion

Management consulting represents the largest total spend on this map and, simultaneously, the most judgment-intensive work. Strategic recommendations, stakeholder alignment, navigating organisational politics, making consequential calls under genuine uncertainty: these remain deeply human activities that AI is not positioned to fully automate in the near term.

The more practically relevant question is whether consulting engagements can be disaggregated. Most consulting projects contain both intelligence components, covering data gathering, market sizing, benchmarking, financial modelling, and scenario analysis, and judgment components, covering interpretation, recommendation, persuasion, and execution. If AI can systematically automate the intelligence layer while preserving the human judgment layer, the cost and delivery speed of consulting work changes structurally.

The government contracting engagement described above is a direct illustration of this disaggregation principle. Conducto agents handle every intelligence-layer task: continuous monitoring, data aggregation, mathematical scoring, pipeline generation. Human executives handle every judgment-layer task: strategic positioning, relationship navigation, final bid decisions. The system does not attempt to replace human strategic judgment. It eliminates the intelligence work that was previously consuming the hours those humans should have been spending on judgment, freeing senior teams from data-gathering and analysis that AI can now handle at marginal cost. The organisations that build this disaggregated model at scale will carry a structural cost advantage over traditional consulting models that deploy large analyst teams to perform work that no longer requires them.

The Compounding Logic: Why Starting Position Matters

The boundaries between intelligence work and judgment work are shifting as AI systems accumulate domain-specific data. What required experienced professional judgment two years ago increasingly looks like a learnable pattern today.

This has a practical implication for competitive positioning: firms that establish AI service positions early, even in narrow and apparently modest starting wedges, are building the data assets that allow them to expand into progressively more judgment-intensive territory. The starting position determines not just the immediate addressable market but the trajectory of the compounding advantage.

Why Outcome Data Beats Usage Data

This is also why the copilot and autopilot distinction matters beyond the immediate revenue model. Copilots generate usage data. Autopilots generate outcome data, meaning data about what good work looks like in a specific domain, at scale, across thousands of real engagements. Outcome data is what allows AI systems to cross the intelligence and judgment boundary over time. It is the raw material of the durable moat.

Dactic captures outcome data from every engagement: every tax filing optimised, every contract drafted, every IT query resolved, every procurement opportunity scored. That data is not incidental to service delivery. It is the strategic asset that makes each subsequent engagement faster, more accurate, and harder to replicate by a competitor starting from zero. Conducto ensures that accumulated intelligence is deployed progressively, moving the automation boundary outward as the domain model matures.

The Pattern Across Case Work

The pattern is observable across our case work. The AI tax platform accumulating optimisation outcomes across client filings. The IT help desk refining its accuracy across licensing queries. The government contracting system feeding every bid decision back into its domain model. In each case: narrow wedge, deep data, expanding capability.

What This Means for Organisations Making AI Investment Decisions Today

For leadership teams evaluating where to deploy AI investment, the practical priorities that emerge from this framework are relatively clear.

Start with outsourced, intelligence-heavy work. These tasks carry clear ROI, defined quality standards, and existing budget lines, and they require no internal restructuring. They are the fastest path to genuinely measurable outcomes.

Be honest about what tooling purchases actually deliver. The organisations generating the clearest returns from AI are those that bought outcomes rather than capabilities. Evaluating vendors on what they deliver, rather than what they enable, produces a very different shortlist.

Insist on unified accountability. The structural failure mode of most enterprise AI investment is fragmented ownership. The right question when evaluating a partner is not what does your tool do, but what outcome do you own, and what happens when it falls short.

Treat data accumulation as a strategic priority, not a byproduct. Every AI engagement that generates proprietary data about your domain, your contracts, your claims, your supplier relationships, and your candidates is building a compounding asset. Organisations that approach AI adoption as a data strategy build advantages that persist. Those treating it as a cost reduction exercise tend to find the gains are transient.

Evaluate partners on trajectory, not just current capability. The firms worth working with are those whose model accumulates proprietary domain intelligence with every engagement. A partner whose capabilities deepen as you use them is structurally different from one racing the model providers to maintain feature parity.

McKinsey’s 2024 State of AI survey found that organisations with mature AI programmes were meaningfully more likely to report measurable cost reduction and revenue impact. The gap between organisations with and without deployed AI in high-frequency workflow categories is widening, and it is widening faster than most planning cycles account for.

Stop Buying Tools. Start Buying Outcomes

The companies that generate measurable value from AI in the next 24 months will not be the ones with the most tools. They will be the ones with a single accountable partner who owns the outcome from assessment through production. Valere works with mid-market and enterprise companies alike to deliver those outcomes directly, starting with the functions where the ROI timeline is shortest and the organisational friction is lowest. Here is what that looks like in practice:

- Identification of the outsourced, intelligence-heavy functions across your portfolio where AI substitution is a procurement decision rather than a restructuring, with a clear timeline and outcome metric attached to each

- End-to-end ownership of deployment across strategy, development, and workforce readiness, so the business result belongs to one accountable party rather than falling between vendors

- Compounding data assets embedded into the systems themselves, so the intelligence your organisation has built does not walk out the door at the end of an engagement but continues to improve with every production cycle

If you are a company ready to move from pilot to production, Valere would like to show you what that path looks like.

Start the conversation: https://www.valere.io/

FAQ: What Practitioners Typically Ask About AI Service Delivery

How do AI autopilot services differ from AI copilot tools in practice?

Copilot tools augment a human professional’s workflow. They suggest, draft, or summarise, but the professional retains responsibility for the output. Autopilot services deliver an outcome directly, with the AI system accountable for the work product. In procurement terms, this distinction maps onto pricing models: copilots typically price per seat or by usage, while autopilots price on outcomes such as policies placed, invoices reconciled, or claims settled. Research from Gartner notes that outcome-based AI contracts are growing as a share of enterprise AI spending.

What types of professional services work are most suitable for AI automation today?

Work that involves applying complex, documented rules to large volumes of structured information tends to be suitable, regardless of domain. Medical coding, insurance form completion, contract drafting for standard agreement types, accounts payable reconciliation, and IT helpdesk triage for common query categories all fit this profile. Work that requires integrating incomplete information, navigating relationships, or making consequential decisions under genuine uncertainty remains less suitable. A practical test: if a task can be fully defined in a rulebook and consistently evaluated by an experienced reviewer, AI can likely execute it reliably at scale.

Why does the intelligence-judgment distinction matter when evaluating AI vendors?

Vendors who overstate how far into judgment territory their systems can reliably operate create real adoption risk. An organisation purchasing AI services expecting autonomous execution of work that actually requires human judgment will encounter errors that are difficult to catch before they cause downstream problems. In our experience, the most reliable vendors are specific about where the automation boundary sits and what the human review layer looks like. Asking what a failure case looks like and how the system handles it is a more diagnostic question than asking how accurate the system is.

When does it make sense to buy an AI-delivered outcome rather than an AI tool?

Outcome-based purchasing tends to make most sense when three conditions are simultaneously present: the work is already outsourced or easily separable from in-house processes; quality can be independently verified against defined criteria; and the existing cost structure includes a meaningful services component rather than only software licensing. The change management burden is lowest when AI services replace existing outsourcing contracts, because the procurement motion is a familiar vendor swap. Replacing internal headcount with AI services is a fundamentally different organisational decision, with a correspondingly different change management requirement.
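
For teams that want to screen a portfolio of functions against these three conditions, the check can be written down as a simple all-or-nothing rule. The sketch below is illustrative only: the `WorkloadProfile` fields, the function name, and the two example workloads (including the flags assigned to them) are assumptions made for the example, not part of any Valere tooling.

```python
from dataclasses import dataclass


@dataclass
class WorkloadProfile:
    """Hypothetical description of one candidate function."""
    name: str
    already_outsourced_or_separable: bool
    quality_independently_verifiable: bool
    has_meaningful_services_spend: bool


def suits_outcome_purchase(profile: WorkloadProfile) -> bool:
    """Return True only when all three conditions hold at the same time."""
    return (
        profile.already_outsourced_or_separable
        and profile.quality_independently_verifiable
        and profile.has_meaningful_services_spend
    )


# Illustrative inputs; the flags are assumptions, not assessed facts.
ap_reconciliation = WorkloadProfile(
    "accounts payable reconciliation", True, True, True
)
strategic_advisory = WorkloadProfile("strategic advisory", False, False, True)

for workload in (ap_reconciliation, strategic_advisory):
    print(workload.name, suits_outcome_purchase(workload))
```

Using a strict conjunction rather than a weighted score mirrors the wording above: a function that fails any one condition is treated as a tool purchase or an internal change programme, not an outcome purchase.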

How do proprietary data advantages compound over time in AI service businesses?

AI service providers that process real engagements accumulate outcome data describing what correct, high-quality work looks like in a specific domain, at scale. This data enables model fine-tuning, improved handling of edge cases, and progressive extension of the automation boundary into more complex work. Software tools that augment human professionals generate usage data, not outcome data. They record what the professional did, not whether the output was correct. This distinction is increasingly cited by investors and practitioners as the primary source of durable competitive advantage in AI services businesses. Dactic is designed specifically to capture this outcome data across every engagement and convert it into the proprietary domain intelligence that compounds the capability of each subsequent one.
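
The difference between usage data and outcome data is easiest to see in the shape of the records each model can capture. The following is a minimal sketch under stated assumptions: the record types, field names, and sample values are hypothetical and do not describe how Dactic or any other product stores data.

```python
from dataclasses import dataclass


@dataclass
class UsageEvent:
    """What a copilot can log: the action the professional took."""
    user_id: str
    action: str  # e.g. "accepted_suggestion"; says nothing about correctness


@dataclass
class OutcomeRecord:
    """What an autopilot can log: the work product and whether it was right."""
    task_id: str
    output: str
    verified_correct: bool


def training_examples(records):
    """Keep only verified-correct outputs as candidate fine-tuning examples."""
    return [(r.task_id, r.output) for r in records if r.verified_correct]


def accuracy(records):
    """Share of outcomes verified correct; None when there are no records."""
    if not records:
        return None
    return sum(r.verified_correct for r in records) / len(records)


# Hypothetical sample data for illustration.
usage_log = [UsageEvent("u1", "accepted_suggestion")]
outcome_log = [
    OutcomeRecord("t1", "code A", True),
    OutcomeRecord("t2", "code B", False),
    OutcomeRecord("t3", "code C", True),
    OutcomeRecord("t4", "code D", True),
]

print(accuracy(outcome_log))
print(training_examples(outcome_log))
```

Only the outcome log supports a measured accuracy figure or a set of verified examples; the usage log has no field from which either could be derived, which is the compounding gap described above.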

What is the single most important question to ask when evaluating an AI services partner?

Who owns the outcome if it falls short? That question separates genuine outcome providers from capability vendors who have repackaged their offer in outcome language. A true autopilot partner takes accountability for the result, not just the tool, not just the process, not just the advisory, and structures the engagement around delivery rather than access. If the vendor’s answer involves explaining how their tool enables your team to achieve the outcome, you are buying a copilot regardless of how it is positioned.

About Valere

Valere is an award-winning AI-native & custom software development company that transforms mid-market companies into AI-first organizations through building, learning, and scaling.

As an expert-vetted, top 1% agency on Upwork, Clutch, G2, and AWS, Valere serves as the trusted AI value creation partner for PE firms, mid-market companies, and Fortune 500 enterprises alike seeking comprehensive AI transformation that drives measurable ROI. With over 220 dedicated professionals and domain experts, we specialize in end-to-end AI-native solutions using our proven crawl-walk-run methodology, guiding organizations through every stage of their AI journey — from initial assessment and strategy to full-scale implementation and optimization.

Our hybrid framework delivers enterprise quality at mid-market economics, prioritizing excellence through continuous process optimization, a unified culture, and rigorous hiring standards — all in service of building something meaningful for every client we partner with.

Build Something Meaningful at Valere.io