From the Desk of Guy Pistone: Weekly insights for operators at Mid-Market & PE-backed companies.

TL;DR

Most AI products fail at the team layer. Companies are spending $2–5M on AI initiatives while assembling teams that practically guarantee failure. In my opinion, the top three causes are hiring in the wrong sequence, missing critical roles, and confusing AI-enabled talent with AI-native talent. A 2025 MIT study of 300+ enterprise AI deployments found that 95% of generative AI pilots deliver zero measurable P&L impact. The cost of a failed AI product launch goes beyond the build budget. It’s 12–18 months of lost market positioning you can’t recover. This newsletter breaks down the differences that separate AI products that ship from those that die in pilot purgatory. 👇

The Expensive Illusion

“We Have AI Talent” ≠ “We Can Ship AI Products”

Most companies hiring for AI products are assembling the wrong team. They hire ML engineers first (the flashiest role) and wonder why nothing ships six months later.

The pattern is predictable. Brilliant model. No data pipeline. No product thinking. No governance. Dead on arrival.

Have you thought about the full cost? If not, I did the math for you:

The Failed Launch:

- 6 ML engineers × $160K avg = ~$960K/year in talent alone (Glassdoor puts the 2026 average ML engineer salary at $159,740)

- $500K–1M in compute and tooling

- 12–18 months of development

- Result: A demo that works. A product that doesn’t.

- Total cost: $2–3M + opportunity cost
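If you want to rerun that math with your own numbers, here is a minimal sketch. The headcount, salary, timeline, and compute figures come from the list above. The `overhead` multiplier is my own hypothetical addition (benefits, recruiting, management time), included because base salary plus compute alone lands a little under the $2–3M range.

```python
def failed_launch_cost(engineers, avg_salary, years, compute, overhead=1.0):
    """Talent cost over the build period plus compute and tooling, in dollars.

    `overhead` is a hypothetical multiplier on base salary; 1.0 means
    base salary only.
    """
    return engineers * avg_salary * overhead * years + compute


# Figures from the list above: 6 engineers, $160K average,
# 12-18 months of development, $500K-1M in compute and tooling.
base_low = failed_launch_cost(6, 160_000, 1.0, 500_000)
base_high = failed_launch_cost(6, 160_000, 1.5, 1_000_000)
print(f"Base salary + compute: ${base_low:,.0f} to ${base_high:,.0f}")

# Same inputs with an assumed 25% overhead on salary (illustrative only).
loaded_low = failed_launch_cost(6, 160_000, 1.0, 500_000, overhead=1.25)
loaded_high = failed_launch_cost(6, 160_000, 1.5, 1_000_000, overhead=1.25)
print(f"With assumed overhead: ${loaded_low:,.0f} to ${loaded_high:,.0f}")
```

On base salary alone the range is roughly $1.5M to $2.4M. Any realistic loading pushes it toward the $2–3M figure, before counting opportunity cost.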

The Successful Launch:

- Balanced team (breakdown in the six roles below)

- Same or lower budget, but allocated differently

- Ships in 6–9 months with production-ready architecture

- Result: Revenue-generating product

The first hire determines the trajectory, and most companies get it wrong.

If someone handed you millions to build an AI product tomorrow, who would you hire first? Most people answer with an engineer. And that instinct is exactly why so many AI launches fail.

The 6 Roles That Actually Ship AI Products

The sequence matters more than the headcount.

Role 1: AI Product Manager (Hire FIRST)

This is the most counterintuitive move. And the most important one.

An AI Product Manager translates business problems into AI-solvable problems. They define what the product actually needs to do. Most PMs think in features. AI PMs think in workflows and data loops.

The signal of a great one: They ask “what decision does this automate?” before “what model do we use?”

The problem is that there’s a massive talent gap. AI PM roles are growing at double-digit rates year over year, but the supply of people who can actually fill them is nowhere close. LinkedIn recorded 30% year-on-year growth in AI PM job postings. According to a Live Data analysis of 18,000+ professionals, over 12,000 people globally moved into AI PM roles between January 2024 and October 2025, double the prior year. And yet, 60% of them didn’t come from a computer science background, which tells you how wide the net is being cast. Demand is outpacing supply by a wide margin.

Cost: $130K–$200K base, per Product School’s 2026 salary data, with total comp often reaching $260K+ at top firms.

Role 2: Data Engineer (Hire SECOND)

No AI product survives bad data plumbing.

Data engineers build pipelines, ensure data quality, and create the foundation that everything else depends on. Companies skip this role and pay for it six months later when the model is technically impressive, but the data feeding it is garbage.

If you read my previous newsletters on data governance and LLM selection, this will sound familiar. Models are only as good as their pipes. If you missed them, catch up with the class here.

Cost: $120K–$170K (Glassdoor 2026: average $132K, with the 25th–75th percentile ranging from $103K to $170K).

Role 3: ML/AI Engineer (Hire THIRD)

Third. Always third. Without a PM defining the problem and a data engineer building the pipes, ML engineers build impressive models that solve the wrong problem with bad data. This is the role most companies hire for immediately because it feels like “the AI hire.” It shouldn’t be.

They handle model selection, fine-tuning, evaluation, and optimization. Critical work. But only after the problem is defined and the data infrastructure exists. And remember, “The model selection crisis is about matching the right model to each workflow.” Same principle applies to the engineer building it.

Cost: $150K–$200K (Glassdoor 2026: average $160K, with top earners at $246K. Indeed reports an average of $186K).

Role 4: Domain Expert (Embed alongside the team)

AI without domain expertise produces confident, wrong outputs. We covered this in the feedback loop paradox. The scariest AI failures are the silent ones, where the system is confidently wrong for months and is left unchecked.

The domain expert mostly validates outputs and works on edge cases. But I’d also suggest you have them build evaluation criteria. Most companies look to fill this position in-house (Valere, too), but if you can’t find the talent fast enough, start looking into other resources. The most important part is that they are formally embedded into the existing team.

Take Onyx AI, for example. They were struggling with a generic LLM that hit 60% accuracy on domain-specific tasks. After domain-specific fine-tuning, guided by Valere, accuracy exceeded 90%. The domain expert is how you know what “right” looks like. See their full story here: https://www.valere.io/case-study/onyx-ai/

Cost: Typically reallocation, not new headcount, but if you need to bring someone in externally, expect $150K+ depending on industry depth. The real cost is skipping this role entirely. That’s how you end up with a model that’s 60% accurate, and nobody notices for months.
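To make “build evaluation criteria” concrete, here is a rough sketch of what a domain expert’s criteria could look like once written down. Everything in it is hypothetical: the `EvalCase` structure, the exact-match scoring, and the 90% gate (borrowed loosely from the Onyx AI numbers) are illustrative assumptions, not how Valere or Onyx AI actually evaluate models.

```python
from dataclasses import dataclass


@dataclass
class EvalCase:
    prompt: str
    expected: str  # the answer the domain expert considers correct


def accuracy(cases, predict):
    """Fraction of cases where the model's answer matches the expert's."""
    if not cases:
        raise ValueError("need at least one evaluation case")
    hits = sum(
        1
        for case in cases
        if predict(case.prompt).strip().lower() == case.expected.strip().lower()
    )
    return hits / len(cases)


def passes_gate(cases, predict, threshold=0.90):
    """True if accuracy meets the expert-defined threshold (assumed 90%)."""
    return accuracy(cases, predict) >= threshold


# Hypothetical expert-labeled cases and two stand-in "models".
cases = [EvalCase(prompt=f"case-{i}", expected="approve") for i in range(10)]


def generic_model(prompt):
    # Gets cases 0-5 right and the rest wrong: 6 of 10.
    return "approve" if int(prompt.split("-")[1]) < 6 else "reject"


def tuned_model(prompt):
    # Gets every case right except the last one: 9 of 10.
    return "approve" if prompt != "case-9" else "reject"


print(accuracy(cases, generic_model), passes_gate(cases, generic_model))
print(accuracy(cases, tuned_model), passes_gate(cases, tuned_model))
```

The point of the sketch is the ordering: the cases and the threshold exist before any model does, so “60% accurate” shows up on day one instead of months later.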

Role 5: MLOps / Platform Engineer (Hire for production)

This is where AI products go to die. The gap between “it works in a notebook” and “it works at scale” is the single most common graveyard for AI products.

MLOps engineers handle CI/CD for models, monitoring, scaling, cost optimization, and infrastructure. They’re the reason a model runs reliably in production instead of crashing every time real-world data deviates from the training set.

This is the most commonly skipped role. It’s also the #1 reason AI products stall after demo. If you recall from my “AI-First or Dead” newsletter, “model-agnostic architecture” requires someone to build and maintain it. That someone is the MLOps engineer.

Cost: $130K–$200K (Glassdoor 2026: average $161K, with the 25th–75th percentile at $132K–$199K).
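One small piece of the monitoring work described above is noticing when real-world data deviates from the training set. Here is a deliberately simple sketch of that idea using only the Python standard library. The mean-shift score and the threshold of 3 standard deviations are my assumptions for illustration; production teams typically use richer drift tests and dedicated tooling.

```python
from statistics import mean, stdev


def drift_score(training_values, live_values):
    """Shift of the live mean from the training mean, in training std devs."""
    sigma = stdev(training_values)
    if sigma == 0:
        raise ValueError("training feature has no variance")
    return abs(mean(live_values) - mean(training_values)) / sigma


def has_drifted(training_values, live_values, threshold=3.0):
    """Flag a feature whose live mean moved more than `threshold` std devs."""
    return drift_score(training_values, live_values) > threshold


# Hypothetical values for a single numeric input feature.
training = [10, 12, 11, 13, 9, 10, 12, 11]
live_similar = [11, 10, 12, 11]
live_shifted = [20, 22, 19, 21]

print(has_drifted(training, live_similar))
print(has_drifted(training, live_shifted))
```

A check like this is cheap. What is expensive is having nobody on the team whose job it is to run it and act on the alert.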

Role 6: AI Governance / Ethics Lead (Hire before launch)

- Regulatory risk.

- IP exposure.

- Shadow AI.

These are the issues we’ve covered in previous editions. This role owns compliance frameworks, acceptable use policies, human-in-the-loop protocols, and readiness for regulations like the EU AI Act. Forrester predicts that 60% of Fortune 100 companies will appoint a head of AI governance by the end of 2026.

“We’ll add governance later” = “We’ll manage risk after the damage is done.”

Cost: $140K–$180K for a dedicated hire. Alternatively, search for a fractional CAIO.

The Hiring Sequence That Actually Works

The order you build the team is your strategy. Most companies get it backwards.

The Wrong Sequence (What Most Companies Do)

- Hire ML engineers (because “we need AI talent”)

- Realize they need data (scramble to hire data engineers)

- Build a model that’s technically impressive but solves the wrong problem

- Hire a PM to “figure out the product” after the model already exists

- Skip governance entirely

- Wonder why adoption is low, and the board is frustrated

Result: 12–18 months wasted. $2–3M spent. Back to square one.

This is the pattern behind the MIT finding that large enterprises take an average of nine months to scale a pilot. Mid-market firms? About 90 days. The bureaucracy and wrong-order hiring compound the delay.

The Right Sequence

- Month 1–2: AI Product Manager + Domain Expert → Define the problem. Validate the use case. Scope the MVP.

- Month 2–3: Data Engineer → Audit existing data. Build pipelines. Establish quality baselines.

- Month 3–4: ML/AI Engineer → Now they have a clear problem, clean data, and defined success metrics.

- Month 5–6: MLOps / Platform Engineer → Production-readiness from the start, not bolted on after the fact.

- Pre-Launch: Governance Lead (or Fractional CAIO) → Compliance, risk frameworks, human-in-the-loop protocols.

Result: Ship in 6–9 months. Production-ready. Governable. Scalable.

The Skill Gap Nobody’s Talking About

The hardest role to fill is the AI-native product manager. Think about the math. There are hundreds of thousands of ML engineers on the global market. AI talent demand exceeds supply by roughly 3.2:1 across all roles, according to workforce analyses in 2025. But the gap is most acute in AI product management, where the number of people who truly understand AI-native product development is a fraction of the engineering supply.

The difference matters. AI-native PMs don’t just manage features. They design feedback loops, understand model limitations, and think in data workflows rather than feature specs.

What to look for:

- Can they explain when NOT to use AI? (Most AI PMs push AI everywhere. The good ones know when a rules-based system is the right call.)

- Do they think in workflows?

- Can they define evaluation criteria before the model is built?

- Do they understand the cost-performance tradeoffs we covered in the LLM Selection newsletter?

The Budget Reality Check

Most companies over-index on models and under-invest in everything else.

Typical (Wrong) Budget Allocation

- 60% Model development and computation

- 20% Data infrastructure

- 15% Product and design

- 5% Governance and compliance

Optimized Budget Allocation

- 30% Data infrastructure and quality

- 25% Product management and domain expertise

- 25% Model development and computation

- 10% MLOps and production infrastructure

- 10% Governance, compliance, and testing

This rebalancing reflects what the data actually shows. An MIT study found that companies purchasing specialized vendor solutions succeeded roughly 67% of the time, while internal builds succeeded only about 33% of the time. The difference comes down to whether teams have the right structure behind the build.

Menlo Ventures’ 2025 enterprise AI report found that 76% of AI use cases are now purchased rather than built in-house, up from 53% a year earlier. That’s a signal that companies are learning the hard way how expensive misallocated internal builds can be.

For Mid-Market Companies ($100–250M revenue)

- First AI product budget: $500K–1.5M

- Expected timeline to production: 6–9 months with the right team sequence

- Expected ROI horizon: 12–18 months
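To see what the optimized allocation means in dollars, here is a small sketch that applies the percentages above to the low and high ends of the $500K–1.5M first-product budget. The `allocate` helper is hypothetical, and the split is only as precise as the round percentages it starts from.

```python
# Percentages copied from the "Optimized Budget Allocation" list above.
OPTIMIZED = {
    "Data infrastructure and quality": 30,
    "Product management and domain expertise": 25,
    "Model development and computation": 25,
    "MLOps and production infrastructure": 10,
    "Governance, compliance, and testing": 10,
}


def allocate(total_budget, shares):
    """Split a whole-dollar budget by percentage shares that sum to 100."""
    if sum(shares.values()) != 100:
        raise ValueError("shares must sum to 100 percent")
    return {line: total_budget * pct // 100 for line, pct in shares.items()}


low_end = allocate(500_000, OPTIMIZED)
high_end = allocate(1_500_000, OPTIMIZED)

for line in OPTIMIZED:
    print(f"{line}: ${low_end[line]:,} to ${high_end[line]:,}")
```

At the low end, that is $150,000 for data infrastructure and $50,000 for governance. Under the typical 60/20/15/5 split, the same $500K budget would put $300,000 into model development and compute.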

ISG research found that AI accounts for roughly 30% of the overall IT budget increase at most enterprises in 2025. And that number is climbing. The question is whether you’ll spend it on the right things…

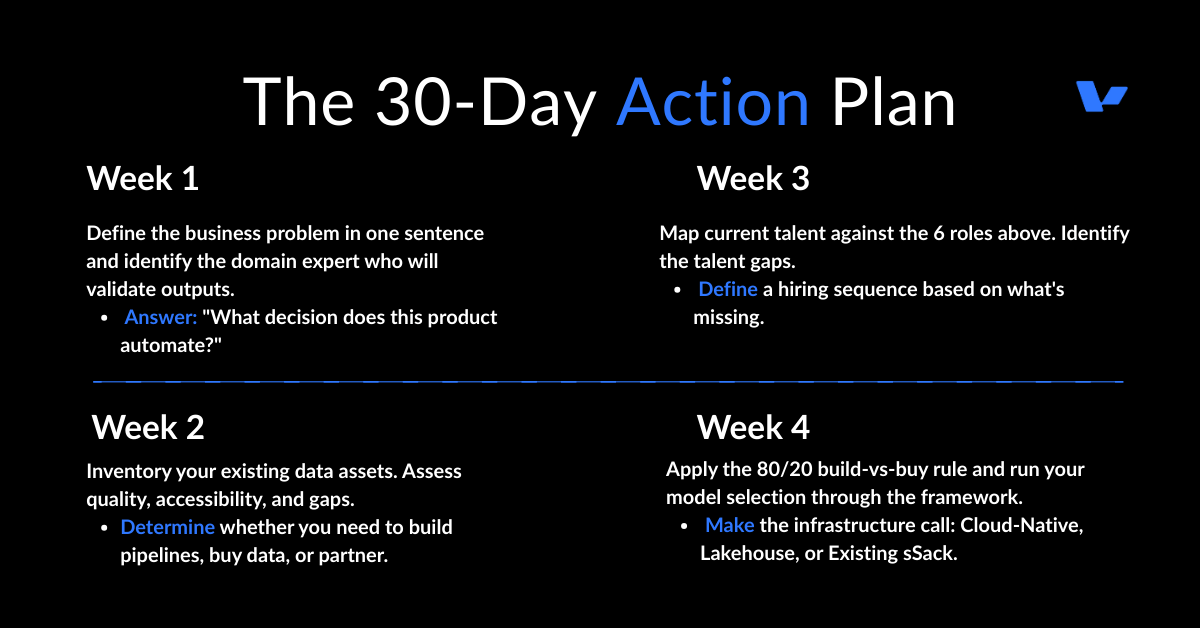

The 30-Day Action Plan

If you’re launching an AI product this year, do this first:

The Signal

The AI product crisis is a people problem wearing a technology mask. The companies that will win are the ones that assembled the right team, in the right order, solving the right problem.

Remember: Vision tells you what to build. The team determines whether it actually ships.

Get both right, and you’re building something that compounds.

Guy Pistone, CEO, Valere | AWS Premier Tier Partner

“Building meaningful things.”

Works Cited

- MIT Media Lab / Project NANDA, “The GenAI Divide: State of AI in Business 2025.” 95% of enterprise AI pilots deliver zero measurable P&L impact. Based on 300+ AI deployments, 150+ executive interviews, 350 employee surveys.

- Glassdoor, “Machine Learning Engineer Salary 2026.” Average salary $159,740; 25th–75th percentile: $128K–$201K.

- Product School, “The Hard Truth About Product Management Salaries in 2026.” AI Product Manager base salary range: $130K–$200K.

- Glassdoor, “Data Engineer Salary 2026.” Average salary $131,968; 25th–75th percentile: $103K–$170K.

- Glassdoor, “MLOps Engineer Salary 2026.” Average salary $161,317; 25th–75th percentile: $132K–$199K.

- Product Leadership, “Why Companies Hire AI Product Managers in 2025.” LinkedIn recorded 30% YoY growth in AI PM roles; supply significantly lags demand.

- Aakash Gupta / Live Data, “The State of AI Product Management 2025.” 12,000+ professionals moved into AI PM roles between Jan 2024 and Oct 2025, 2x the prior year. 60% from non-CS backgrounds.

- Second Talent, “Top 50+ Global AI Talent Shortage Statistics 2025.” AI talent demand exceeds supply 3.2:1 globally; 1.6M+ open positions vs. ~518K qualified candidates.

- Menlo Ventures, “2025: The State of Generative AI in the Enterprise.” 76% of AI use cases purchased vs. built in-house, up from 53% in 2024. Enterprise generative AI spending reached $37B in 2025.

- Virtualization Review / MIT, “MIT Report Finds Most AI Business Investments Fail.” Large enterprises take avg. 9 months to scale a pilot; mid-market firms average ~90 days. Vendor-led solutions succeed ~67% of the time vs. ~33% for internal builds.

- ISG Market Lens, “2025 IT Budgets and Spending Study.” AI accounts for ~30% of overall IT budget increases; enterprise AI spending rising 5.7% YoY.

- TechJack Solutions, “AI Governance Salary Data 2026.” Forrester predicts 60% of Fortune 100 to appoint head of AI governance by end of 2026. AI Governance Lead salary range: $140K–$180K.

- World Economic Forum, “How We Can Balance AI Overcapacity and Talent Shortages.” 94% of leaders face AI-critical skill shortages; Bain projects 2.3M AI-specific jobs by 2027 vs. 1.2M available talent.

- Indeed, “Machine Learning Engineer Salary.” Average salary $186,141 based on 4,500+ salaries as of Feb 2026.
