What It Means for Your Business and How to Get Ahead of It

TL;DR: 3 Key Takeaways

- The 2026 National AI Policy Framework signals the end of fragmented state regulation, replacing it with a single federal standard that opens a meaningful window for organizations ready to build serious AI capability now.

- Courts are actively establishing what constitutes legally defensible AI, making data provenance and auditability foundational requirements rather than compliance afterthoughts.

- Most AI programs that underperform do so not because of technical failure, but because workforce readiness, change management, and adoption get treated as secondary priorities.

Enterprise AI conversations have, for the past several years, centered on a fairly consistent set of promises: automate the repetitive work, surface useful insights, generate content faster. Those are real gains. But they are productivity improvements layered on top of operating models that were never designed with intelligence in mind.

The organizations we work with are not struggling to find AI tools. They are struggling to get those tools to compound in value rather than plateau. That distinction is where this article begins. The AI field has crossed a threshold that changes the nature of the problem entirely. The era of generative synthesis is giving way to something more consequential: World Models. Understanding what that shift means, not just technically but economically and organizationally, is where most of our client engagements actually start.

What the 2026 AI Policy Framework Actually Means for Business Leaders

On March 20, 2026, the Trump administration released its National Policy Framework for Artificial Intelligence. The framework is a sweeping set of legislative recommendations that signals a meaningful shift in how the federal government intends to govern, accelerate, and leverage AI as a tool of economic and national competitiveness. For business leaders, this is not a background policy document. It is a structural inflection point with real implications for compliance strategy, procurement, workforce planning, and how organizations build AI systems going forward.

We have worked alongside organizations at every stage of AI adoption over the past several years, from initial strategy through full-scale implementation. What we have consistently found is that companies that read these signals early tend to capture the most value from them. What follows is our read on what this framework actually means and where the practical implications land.

The Era of Fragmented State AI Regulation Is Ending

The most consequential element of the 2026 Framework is its aggressive push for federal preemption. The administration’s core argument is that fifty different state regulatory regimes create an unacceptable drag on America’s ability to compete with China’s state-directed AI programs. Washington’s answer is a single national rulebook.

The Enforcement Mechanism

The Department of Justice stood up the AI Litigation Task Force in January 2026, chaired by Attorney General Pam Bondi. Its mandate is to challenge state laws the Department of Commerce identifies as onerous. Washington also holds a significant lever over states that resist: the potential withholding of federal funding, including the $42 billion BEAD broadband program.

State laws already in the crosshairs include Colorado’s SB 24-205 (mandatory bias mitigation and impact assessments), California’s SB 53 (safety incident reporting), California’s AB 2013 (training data transparency), Texas’s TRAIGA (prohibited uses and governance protocols), and Illinois’s HB 3773 (employment-based AI notice requirements).

What This Means for Your Compliance Strategy

If your compliance strategy has been built around navigating a patchwork of state regulations, the ground is shifting. That does not mean the work done to date is wasted. Much of the underlying governance infrastructure transfers. The more important question is which parts of your compliance posture are tied to state-specific mandates versus which parts reflect sound general practice.

Organizations that have been holding off for regulatory clarity now have it. Based on how these cycles tend to move, the window to build serious internal AI capability under a relatively permissive federal standard will not stay open indefinitely.

Child Safety: The One Area Where Regulation Is Getting Stricter

While the administration’s overall posture is deregulatory, child protection is a clear and explicit carve-out.

What the Framework Requires

The Take It Down Act, signed in May 2025, requires platforms to remove AI-generated non-consensual intimate imagery within 48 hours of notification and establishes federal criminal penalties for violators. The 2026 Framework builds on this by calling on Congress to mandate age-assurance requirements for AI platforms likely accessed by minors, require features that reduce risks of sexual exploitation and self-harm, and affirm that COPPA protections apply fully to AI systems. This includes restrictions on using minor-generated data for model training or advertising.

If your product could reasonably be accessed by a user under 13, COPPA’s data collection and parental consent requirements can apply even if you did not market the product to children. Products in education, entertainment, sports, and general consumer categories should review their use cases to determine where minor access is likely and what architectural changes are required.

What We Learned Building for Young Users

We ran into this directly on a recent project. The client was building an AI-powered mentorship platform for youth sports with AI coaching features and access to professional athletes. The product concept was strong, but getting the build right meant treating COPPA compliance and teen-focused security as foundational architecture requirements rather than features to layer on later.

Age-appropriate guardrails had to be baked in from the start, both because it was the right approach and because anything less creates the kind of legal exposure that is painful to deal with post-launch. By the time you are retrofitting, you are already behind.

Intellectual Property: The Courts Are Deciding, But You Cannot Wait

The administration believes training AI on copyrighted material constitutes transformative fair use. However, it has declined to push Congress to codify that view, leaving resolution to the courts. Given the volume of pending litigation, that process will take years.

Where the Courts Stand Today

Courts are beginning to treat general-purpose model training as transformative, while drawing hard lines against piracy and shadow library use. Bartz v. Anthropic found that training on lawfully acquired books constitutes fair use. Thomson Reuters v. ROSS rejected fair use where training on legal headnotes caused demonstrable market harm.

The pattern is clear: how you source your training data and whether you can prove it matters enormously. The Framework also calls for a federal digital persona protection standard aligned with the NO FAKES Act, which would establish IP rights in an individual’s voice and likeness with a DMCA-style takedown mechanism.

Building Defensible Data Architecture in Practice

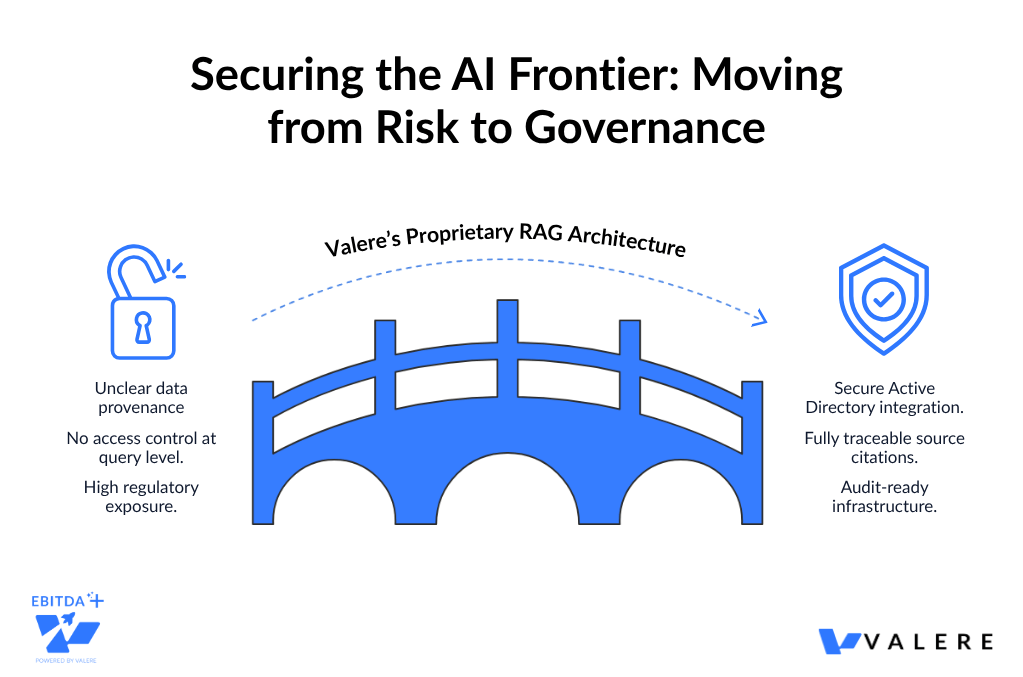

We have solved data provenance problems in real production environments. One project involved building an AI system for a national specialty contractor. Their engineers needed to search and reason across more than ten terabytes of proprietary building codes, blueprints, and technical documentation.

The challenge was not just technical. The system also needed to restrict access to authorized data only, with every output traceable to a specific source document. We used a Retrieval-Augmented Generation architecture protected by Active Directory-based access control, enforcing permissions at the query level rather than as an afterthought. Engineers received traceable citations with every output, and the company got both IP protection and legal defensibility built into the system by design.
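To make "permissions at the query level" concrete, here is a minimal sketch of the idea, not the production system described above. It assumes each document carries a set of directory groups allowed to read it; the `Document` class, the group names, the keyword-overlap scoring, and the sample corpus are all hypothetical stand-ins for a real vector search and directory lookup.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    doc_id: str
    text: str
    allowed_groups: frozenset  # hypothetical: directory groups permitted to read this document


def retrieve(query, user_groups, corpus, top_k=3):
    """Return the best-matching documents the user is allowed to read."""
    terms = set(query.lower().split())
    # Enforce permissions before ranking, so restricted text never reaches the model.
    visible = [d for d in corpus if d.allowed_groups & user_groups]
    # Toy relevance score: number of query words that appear in the document.
    scored = [(len(terms & set(d.text.lower().split())), d) for d in visible]
    ranked = sorted((s for s in scored if s[0] > 0), key=lambda s: s[0], reverse=True)
    return [d for _, d in ranked[:top_k]]


def answer_with_citations(query, user_groups, corpus):
    """Bundle retrieved context with the source IDs that back it."""
    hits = retrieve(query, user_groups, corpus)
    return {"context": [d.text for d in hits], "citations": [d.doc_id for d in hits]}


# Hypothetical sample corpus.
CORPUS = [
    Document("code-101", "fire rated door clearance requirements", frozenset({"engineering"})),
    Document("bid-007", "confidential bid pricing for fire door contract", frozenset({"finance"})),
]

result = answer_with_citations("fire door clearance", {"engineering"}, CORPUS)
```

Because the filter runs before ranking, a user outside the `finance` group can never receive `bid-007` as context, and every returned passage arrives with the document ID it came from.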

Organizations with clean, documented, auditable data pipelines are well-positioned as courts continue to establish what is legally defensible. Cleaning up a pipeline retroactively is significantly harder than building it right from the start.

Federal Procurement and Auditability: The Stakes Just Got Real

The proposed TRUMP AMERICA AI Act would codify unbiased AI principles as requirements for federal procurement. These include truthfulness, historical accuracy, scientific objectivity, and ideological neutrality. The Act would also mandate annual third-party audits of high-risk government AI systems, with financial penalties for noncompliant vendors. For anyone selling into the federal ecosystem, auditability is no longer optional.

The Core Question for Systems Already in Production

For systems already running, the key question is whether decision logic is captured and retrievable. Systems built on probabilistic outputs without logging or traceability create the most exposure. Retrofitting is possible but involves meaningful architectural work.

How We Have Built for Federal Standards

We have built in this space across several engagements. One involved creating a secure, AI-powered government contracting platform built natively on AWS GovCloud, designed to unify the full capture lifecycle with integrated compliance checks and document intelligence. The GovCloud requirement was not a technical preference. It was a procurement prerequisite. Federal buyers have specific infrastructure expectations, and meeting them is a condition of doing business.

In another engagement, a regulatory compliance technology firm needed AI decisions to survive federal scrutiny. Their challenge was reviewing complex applicant data against a dense 50-page rulebook, at scale, consistently, and with a full audit trail. We replaced probabilistic AI outputs with a deterministic logic bridge built on AWS Bedrock. Strict JSON schemas evaluate each case against the rulebook and produce an immutable audit trail in a single API call. Every decision is traceable.
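The general pattern of deterministic evaluation plus a traceable record can be sketched in a few lines. This is an illustration under stated assumptions, not the Bedrock-based implementation described above: the two rules, the applicant fields, and the `evaluate` function are hypothetical, and hash chaining is used here as one common way to make tampering detectable.

```python
import hashlib
import json

# Hypothetical rules; a real rulebook would be far larger.
RULES = [
    ("R1_min_age", lambda a: a["age"] >= 18),
    ("R2_income_documented", lambda a: a["income_verified"] is True),
]


def evaluate(applicant, prev_hash=""):
    """Apply every rule in a fixed order and return a hash-chained audit record."""
    results = {rule_id: check(applicant) for rule_id, check in RULES}
    record = {
        "input": applicant,
        "results": results,
        "decision": "approve" if all(results.values()) else "deny",
        "prev_hash": prev_hash,  # links this record to the one before it
    }
    # Canonical JSON (sorted keys) makes the hash reproducible for identical inputs.
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    record["hash"] = hashlib.sha256(payload).hexdigest()
    return record


first = evaluate({"age": 34, "income_verified": True})
second = evaluate({"age": 17, "income_verified": True}, prev_hash=first["hash"])
```

The same input always yields the same decision and the same hash, and each record shows which rule passed or failed. Chaining each record to the previous hash does not make storage immutable on its own, but it does mean that altering an earlier record breaks every hash after it.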

Auditability in Healthcare

In healthcare, we encountered a similar challenge. An automated AI communications platform lacked a defensible oversight mechanism, which is a serious problem in a heavily regulated environment. We built a Human-in-the-Loop validation portal integrated with Salesforce and AWS Bedrock. It acts as a mandatory gate before any AI-generated communication goes out. Administrators can demonstrate exactly what was sent, when, and on what basis.
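A mandatory gate of this kind comes down to one rule: nothing is sent unless a named reviewer has approved it, and every review is recorded. The sketch below shows that rule in isolation. The `Draft` and `ApprovalGate` classes are hypothetical and do not reflect the Salesforce or Bedrock integration described above.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class Draft:
    draft_id: str
    body: str
    status: str = "pending"
    history: list = field(default_factory=list)  # one entry per review decision


class ApprovalGate:
    """Blocks any AI-generated draft that a human has not approved."""

    def __init__(self):
        self.sent = []

    def review(self, draft, reviewer, approved, reason):
        # Record who decided, what they decided, why, and when.
        draft.status = "approved" if approved else "rejected"
        draft.history.append({
            "reviewer": reviewer,
            "approved": approved,
            "reason": reason,
            "at": datetime.now(timezone.utc).isoformat(),
        })

    def send(self, draft):
        if draft.status != "approved":
            raise PermissionError(f"draft {draft.draft_id} has not been approved")
        self.sent.append(draft.draft_id)


gate = ApprovalGate()
draft = Draft("msg-1", "Your appointment has been rescheduled.")
gate.review(draft, reviewer="admin@example.com", approved=True, reason="matches schedule")
gate.send(draft)
```

Calling `send` on a pending or rejected draft raises an error instead of silently delivering it, and `draft.history` is what lets an administrator show what was sent, when, and on whose approval.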

The consistent thread across these engagements: if you cannot explain what your AI did and why, you are exposed. Building explainability in after the fact is expensive and usually incomplete. Systems designed with auditability at their core perform far better than systems with documentation added afterward.

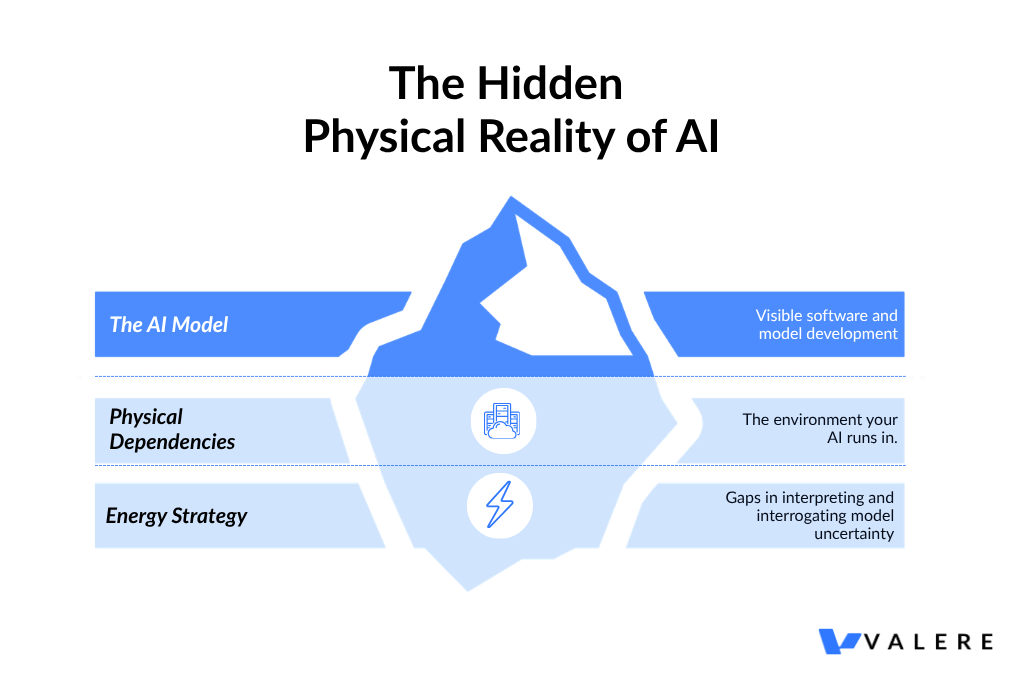

AI Infrastructure Is a Physical Problem, Not Just a Digital One

Many organizations still treat the energy footprint of AI as someone else’s concern. The 2026 Framework makes clear it is not.

The Capital Commitment Behind the Policy

On March 4, 2026, seven major technology companies signed the Ratepayer Protection Pledge. Amazon, Google, Meta, Microsoft, OpenAI, Oracle, and xAI committed to bear the full infrastructure costs of powering their data centers rather than passing those costs to residential consumers. The numbers are significant: approximately $700 billion in aggregate capital expenditure in 2026 alone, projected to reach $820 billion in 2027. That is six times the level of 2022.

The proposed DATA Act of 2026, introduced by Senator Tom Cotton, goes further. It proposes to exempt fully off-grid power systems from Federal Power Act oversight, enabling operators to bypass grid interconnection queues that now average five years nationally and over nine years in California.

When Software Projects Become Infrastructure Problems

This intersection of digital and physical infrastructure comes up more than most technology teams expect. In one engagement, we worked with an education technology company building an AI platform to help educators redesign physical classrooms using computer vision and mixed-reality technology. What started as a software project had significant physical infrastructure dependencies that had to be addressed early. The same dynamic plays out regularly in enterprise AI buildouts: the environment your AI runs in matters as much as the model itself.

If your organization is planning serious AI infrastructure investment, energy strategy needs to be part of the technology conversation, not a facilities afterthought.

The Workforce Reality That Does Not Get Enough Attention

The 2026 Framework calls on Congress to integrate AI training into existing apprenticeship programs and Pell Grant mechanisms, expand federal study of workforce realignment, and bolster land-grant universities as regional AI hubs.

The Disclosure Requirements Coming for Employers

The TRUMP AMERICA AI Act adds disclosure requirements that will affect publicly traded companies and federal agencies. Both would need to report on the workforce impact of AI adoption, including data on layoffs, retraining, and new hiring tied to AI programs specifically. Building that attribution into program management from the start is significantly easier than reconstructing it retroactively for a reporting requirement.
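Building attribution in from the start can be as simple as tagging each workforce event with the AI program that drove it, so a report becomes a query rather than a reconstruction. The sketch below assumes a hypothetical event format; the field names, program names, and headcounts are illustrative and do not come from the proposed Act.

```python
from collections import Counter

# Hypothetical workforce events, each tagged at the time it happens.
EVENTS = [
    {"type": "retraining", "headcount": 12, "ai_program": "claims-automation"},
    {"type": "layoff", "headcount": 3, "ai_program": "claims-automation"},
    {"type": "hire", "headcount": 5, "ai_program": "doc-intelligence"},
    {"type": "hire", "headcount": 4, "ai_program": None},  # not tied to any AI program
]


def ai_workforce_summary(events):
    """Total headcount per event type, counting only AI-attributed events."""
    totals = Counter()
    for event in events:
        if event["ai_program"] is not None:
            totals[event["type"]] += event["headcount"]
    return dict(totals)


summary = ai_workforce_summary(EVENTS)
```

Here the four hires with no program tag are excluded, so the summary reflects only changes attributed to AI programs.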

The More Immediate Problem Most Organizations Are Not Solving

What gets less attention is the operational reality most organizations are already living. Most AI implementations we have been part of have underperformed not because of technical failures, but because the humans using the systems were not ready for them. Leadership had not aligned on what AI-first meant operationally. Frontline employees were not trained on how to work with AI outputs effectively. Middle management was skeptical and quietly resistant. Meanwhile, the technology worked fine. It just was not being used.

When AI Surfaces What You Were Not Looking For

We have also seen AI surface challenges that were not on anyone’s radar going in. Deploying AI to evaluate workforce health, specifically detecting early burnout indicators and identifying fragmentation in distributed teams, revealed dynamics that traditional management tools had completely missed. That is genuinely useful intelligence, but it requires organizations to have the cultural readiness to act on it constructively rather than simply collect the data.

The workforce dimension of AI transformation is where most programs either gain momentum or quietly stall. Getting it right means treating skill-building and change management with the same rigor as the technical implementation. In our experience, most organizations do not prioritize this sufficiently on the first pass.

What We Have Found Consistently Makes the Difference

A few things tend to separate the AI programs that deliver from the ones that stall.

Know where you actually stand. Not just whether you have AI tools deployed, but whether your data is clean enough to train on, your governance is defensible enough to audit, and your workforce is engaged enough to actually use what you build. The gap between having AI and generating outcomes from it is usually wider than leadership expects.

Take data provenance seriously now. Courts are actively establishing what is legally defensible. Organizations with clean, documented, auditable data pipelines are well-positioned. The ones without them carry real exposure, and cleaning up a pipeline retroactively is significantly harder than doing it right from the start.

Build audit trails into your systems, not on top of them. In regulated sectors especially, the ability to explain AI decisions is increasingly a legal and procurement requirement.

Do not underinvest in the people side. Technically excellent implementations can still generate mediocre outcomes when adoption lags. Workforce readiness, change management, and AI literacy are not soft considerations. They determine whether an AI program actually delivers on its business case.

Design for scale before you need it. Pilots that work but cannot scale are one of the more expensive lessons in AI. The architectural decisions made early tend to compound, in both directions.

2026 Is a Pivotal Year

The 2026 National Policy Framework is the clearest federal signal yet that AI leadership is a national imperative. The government intends to remove the structural obstacles standing between American industry and that goal.

The practical implications range from child safety requirements in consumer products, to audit mandates in government contracting, to data provenance standards that courts are actively enforcing. None of these are abstract. They show up in architecture decisions, procurement requirements, workforce strategies, and compliance infrastructure.

Across the organizations navigating these dynamics well, we consistently see the same orientation: they treat AI as a strategic capability to build rather than a product to buy. That distinction, between genuine organizational capability and vendor dependency, tends to be what separates the companies that lead from the ones that follow.

Federal preemption is clearing the friction. The tools are available. The policy environment is as permissive as it is likely to be. What remains is execution, and the organizations that move with intention in 2026 will look back on this as the year they pulled ahead.

Turn Policy Clarity Into a Competitive Advantage

The 2026 Framework has removed a meaningful layer of uncertainty for organizations ready to act. Valere works with mid-market companies, PE-backed portfolios, and enterprise technology firms to design, build, and scale AI systems that are auditable by design, compliant from the start, and built to compound in value over time.

Whether you are assessing your current AI posture against the new federal standard, building toward procurement readiness in a regulated sector, or standing up AI capability for the first time with the right architecture underneath it, Valere brings the expertise, platform, and partnership model to turn the current policy environment into a durable organizational advantage.

- A Policy and Compliance Readiness Assessment that maps your current AI deployments against the 2026 Framework’s auditability, data provenance, child safety, and workforce disclosure requirements, identifying where your exposure is before it becomes a problem

- A clear path from fragmented AI pilots to a governed, auditable AI program that integrates with your existing operations, meets federal procurement standards, and is built to scale without needing to be rebuilt

- A personalized AI value creation roadmap that moves you from compliance-reactive to compliance-ready, with human-in-the-loop governance, traceable decision architecture, and proprietary organizational intelligence that no competitor can replicate by licensing the same tools

Start building with the 2026 Framework in mind: https://www.valere.io/

Frequently Asked Questions

What does federal preemption actually mean for businesses currently building around state AI regulations?

Federal preemption means a single national standard supersedes conflicting state laws. For businesses that built compliance programs around state-specific requirements, those frameworks may no longer apply. Much of the underlying governance infrastructure transfers, but organizations should audit which parts of their compliance strategy are tied to state-specific mandates versus which parts reflect sound general practice worth preserving regardless.

How are courts currently treating AI training data and copyright?

Courts are leaning toward treating training on lawfully acquired material as transformative fair use, while taking a harder line on data sourced from piracy, shadow libraries, or unauthorized scraping. The key factors courts are examining include how training data was acquired, whether source material appears directly in outputs, and whether the training use creates market harm for original creators. How you document and source your training data is increasingly the difference between a defensible position and a liability.

What does the COPPA extension to AI systems mean for companies building consumer products?

If your product could reasonably reach a user under 13, COPPA’s data collection and parental consent requirements can apply even if you did not market the product to children. The 2026 Framework extends this logic to AI systems explicitly, including restrictions on using data generated by minors for model training or targeted advertising. Products in education, entertainment, sports, and general consumer categories should assess where minor access is likely and what architectural changes that requires.

How do audit trail requirements affect AI systems that are already in production?

Audit trail requirements in federal procurement focus on the ability to explain what an AI system did, why it reached a particular output, and who authorized the action. For systems already in production, the key question is whether that information is captured and retrievable. Systems built on probabilistic outputs without logging or traceability create the most exposure. Retrofitting is possible but involves meaningful architectural work. Organizations in regulated sectors should assess their current systems against these requirements now, rather than waiting for a procurement or compliance event to force the issue.

What does the workforce disclosure requirement mean in practice?

For publicly traded companies and federal agencies under the proposed TRUMP AMERICA AI Act, disclosure requirements cover AI-related workforce impacts including layoffs, retraining investments, and new hiring tied to AI adoption. Organizations need tracking systems that attribute workforce changes to AI programs specifically. Building that attribution into program management from the start is significantly easier than reconstructing it retroactively.