TL;DR: 3 Key Takeaways

- Model access is not a competitive advantage because every company gets the same capabilities at the same time, and the real edge lives in the proprietary data and institutional knowledge underneath the AI.

- The most common reason AI initiatives stall is a knowledge capture failure, where teams automate the idealized version of a process rather than how work actually gets done.

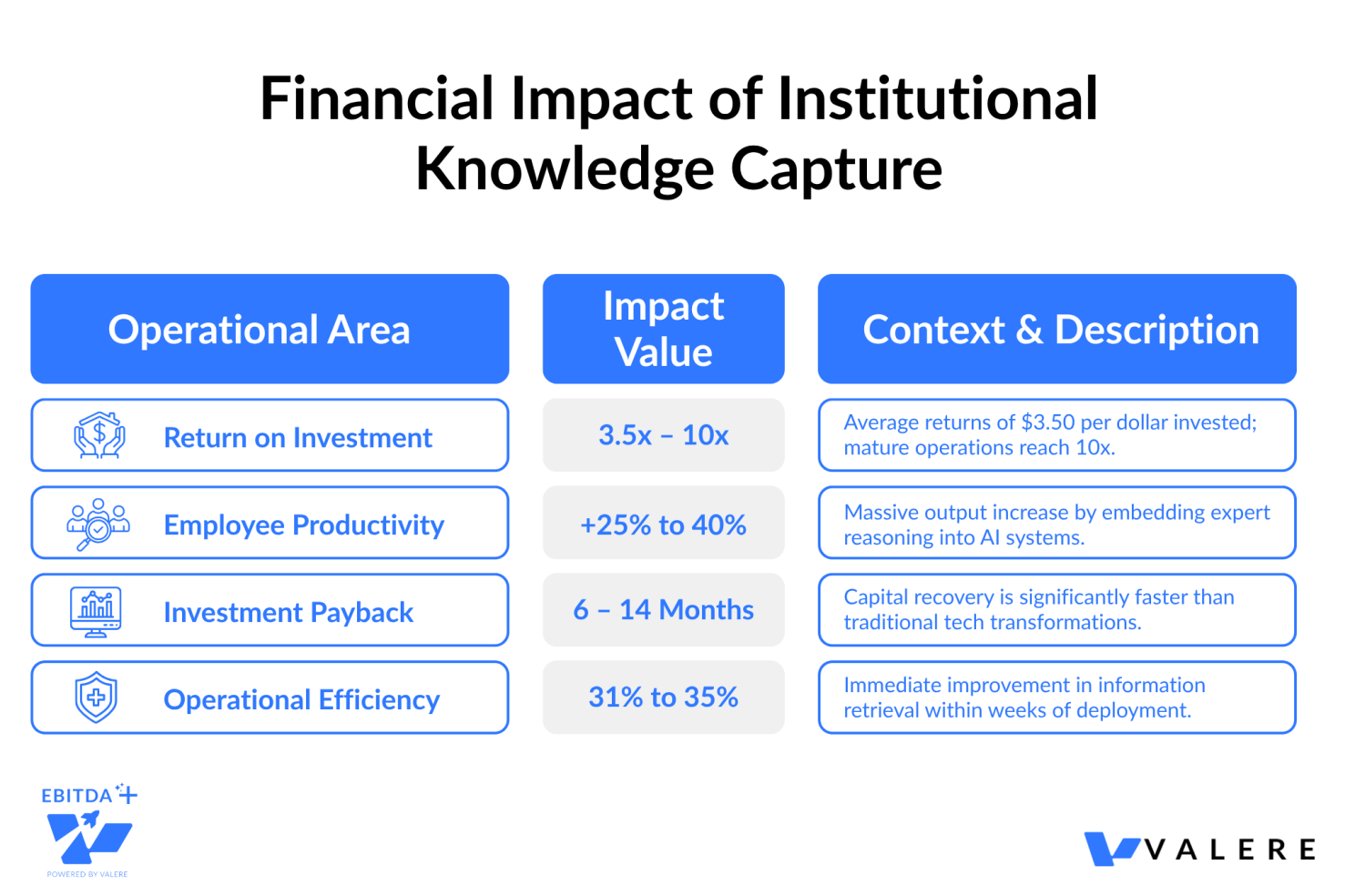

- Portcos that ground their AI in real operational workflows consistently outperform those that don’t, with documented returns averaging $3.50 per dollar invested and some mature deployments reaching 10x.

Most PE-backed companies have the same AI story right now. Leadership got access to a frontier model, stood up a few tools, and declared an initiative underway. The board deck has a slide on it. The operating team checks it quarterly.

And it’s going to produce roughly the same results as every competitor in the space, because they’re all running the same models on the same publicly available data.

Model Access Is Table Stakes

When OpenAI or Anthropic ships a new release, every company on the planet gets access to the same capabilities at once. Any early-mover advantage compresses to near zero within six to eight weeks. Proprietary data is where the durable edge lives. The operational patterns your most experienced people have built over careers. The early warning signals your best reps recognize before they show up in any dashboard. The judgment calls your senior engineers make when the process documentation runs out. That knowledge is specific to your business, and no competitor can buy it off a shelf.

The Gap Nobody Talks About

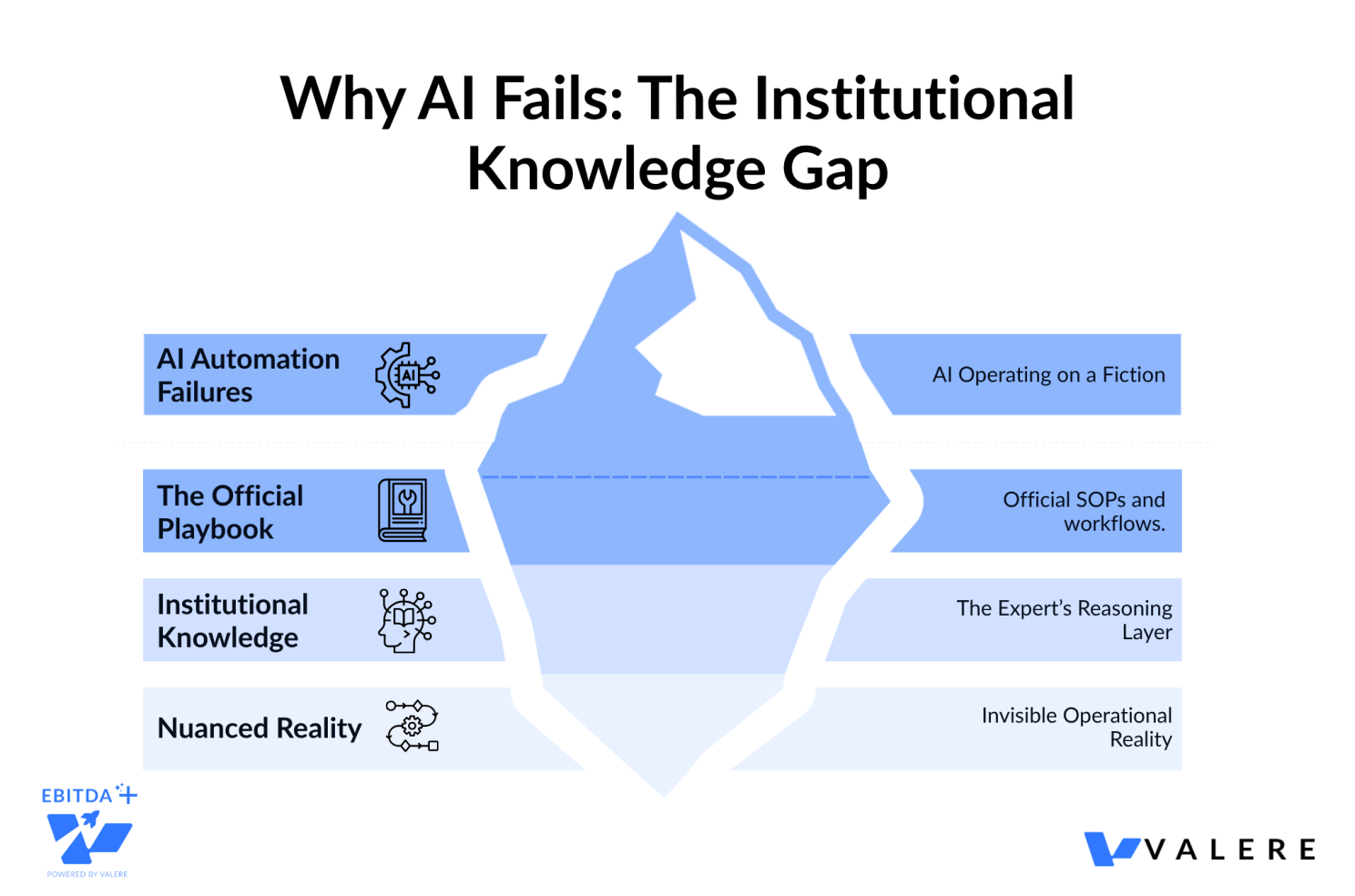

Most businesses have SOPs and workflows that describe how they think they operate. Leadership can point to documentation, process maps, and playbooks. The problem is that the real institutional knowledge lives somewhere else entirely: in ticketing systems, message threads, service logs, and the heads of people who have been doing the work for years.

That gap between the documented process and the actual process is precisely why most AI automation initiatives fail. They automate the idealized version of how work is supposed to happen. The AI runs cleanly against a process that, in practice, nobody actually follows, because the real version is more nuanced, more situational, and far harder to write down.

We see this consistently across portco engagements. A team will configure an automation against their official SOP and wonder why adoption is low and outputs feel off. The automation isn’t wrong. It’s just operating on a fiction. The people doing the work stopped following that SOP two years ago because they found a better way, and that better way exists nowhere in any system.

The answer isn’t better documentation. It’s reverse-engineering reality first, then building automation on top of what’s actually true.

The Institutional Knowledge Problem Is Worse Than Most Operators Think

There’s a slow-moving crisis playing out across every industry, and most organizations are managing it by ignoring it. Tenured employees carry expertise that never made it into any system. Reasoning frameworks built over fifteen-year careers live in people’s heads. Pattern recognition that drives your best performers never makes it into any playbook. That knowledge walks out the door every time someone retires or moves on.

The Scale of the Problem

In U.S. manufacturing alone, the loss of undocumented expertise drives an estimated $92 billion in annual productivity losses. About 31% of service engineers are currently over 55. The sector projects 3.8 million job vacancies by 2033, with the majority tied directly to retirement. This is an immediate problem for any portco in an asset-heavy, services-heavy, or expertise-dependent business.

What It Looks Like Inside a Portfolio Company

We worked with a PE firm whose deal evaluation edge was concentrated in the pattern recognition of a small number of senior partners. Their ability to score opportunities well was built from judgment accumulated over careers, and none of it was documented anywhere. Every time a partner transitioned out, a piece of that edge left with them. They had access to the same deal flow databases and financial models as every other firm in the market. The reasoning layer sitting on top of those tools was what they couldn’t systematize. That’s where the alpha actually lived.

Where AI Initiatives Stall

The failure mode we see most consistently is straightforward. AI gets deployed without the organization’s actual institutional knowledge embedded in it. Teams end up with a highly capable system that has no idea how their business works, who their customers really are, or how their best people approach a hard problem.

Ops leaders at mid-market companies run into this constantly. They’ve tried automation before, with platforms like Zapier, Make, or Agentforce, and it didn’t deliver. The tools weren’t the problem. The foundation was. Those platforms assume you already understand your own processes well enough to configure them correctly. Most companies don’t, and the ones that think they do are usually working from the documented version rather than the real one.

Traditional consulting doesn’t solve it either. A firm comes in, documents the as-is state, hands over a report, and leaves. There’s no living feedback loop. Six months later the process has drifted and the documentation is already outdated.

A Common Portco Example

One portco we worked with had a churn prediction problem. Their models could identify churn after it was already happening. What they couldn’t catch were the early warning patterns: subtle shifts in support ticket language, slight changes in engagement behavior, the signals experienced CS reps had learned to read over years. The model had no way to act on what those reps knew because nobody had captured it.

Closing the gap between what the AI could access and what the business actually knew was the entire solution.

What Doing It Right Looks Like

The portcos that move fastest start with an honest inventory of where institutional knowledge lives. Which processes exist only in people’s heads? What would the organization lose if its ten most experienced people left next quarter? That’s the starting point.

Surface the Real Workflows First

The gap between how work is supposed to happen and how it actually happens is almost always larger than leadership expects. Building the knowledge foundation requires two inputs working together. First, a systematic scan of operational data including tickets, logs, communications, and records to map how work actually gets done in practice. Second, structured interviews with the subject matter experts who carry expertise that never made it into any system. Together, those inputs produce something traditional documentation never achieves: an accurate picture of operational reality.
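As a rough illustration of the first input, the sketch below compares a documented SOP against step sequences reconstructed from ticket histories. Everything in it is hypothetical: the step names, the sample tickets, the `compare_to_sop` helper, and the 50 percent threshold are assumptions made for the example, not a description of any particular scanning tool.

```python
from collections import Counter

# Hypothetical documented SOP for a support escalation (illustrative only).
DOCUMENTED_SOP = ["intake", "triage", "escalate", "resolve", "close"]

# Hypothetical step sequences reconstructed from ticket histories.
OBSERVED_TICKETS = [
    ["intake", "triage", "call_customer", "resolve", "close"],
    ["intake", "call_customer", "resolve", "close"],
    ["intake", "triage", "call_customer", "resolve", "close"],
    ["intake", "triage", "escalate", "resolve", "close"],
]


def step_frequency(tickets):
    """Return the share of tickets in which each step appears at least once."""
    counts = Counter(step for ticket in tickets for step in set(ticket))
    return {step: counts[step] / len(tickets) for step in counts}


def compare_to_sop(sop, tickets, threshold=0.5):
    """Flag SOP steps that are rarely followed and common steps the SOP omits."""
    freq = step_frequency(tickets)
    rarely_followed = [s for s in sop if freq.get(s, 0.0) < threshold]
    undocumented = sorted(
        s for s, f in freq.items() if s not in sop and f >= threshold
    )
    return {"rarely_followed": rarely_followed, "undocumented": undocumented}


report = compare_to_sop(DOCUMENTED_SOP, OBSERVED_TICKETS)
print(report)
```

A report like this is only a starting point for the second input: the flagged steps become questions for the structured interviews, where subject matter experts can explain why the documented step was dropped and what replaced it.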

Every agent and automation built on top of that foundation traces back to the knowledge library. That traceability matters for two reasons. It gives managers visibility into why an agent did what it did, and it creates an audit trail that generic platform-level agents simply can’t provide.
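One way to picture that traceability is a record structure in which every agent action carries references to the knowledge entries it relied on. The sketch below is a minimal illustration under assumed names (`KnowledgeEntry`, `AgentAction`, `audit_trail`, and the sample entries are all hypothetical), not a description of a specific platform.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class KnowledgeEntry:
    entry_id: str
    source: str      # where the knowledge came from, e.g. "SME interview"
    statement: str   # the captured piece of operational knowledge


@dataclass(frozen=True)
class AgentAction:
    description: str
    knowledge_ids: tuple  # ids of the entries the agent relied on


def audit_trail(action, library):
    """Resolve an action's knowledge references; fail if any cannot be traced."""
    missing = [k for k in action.knowledge_ids if k not in library]
    if missing:
        raise KeyError(f"untraceable knowledge ids: {missing}")
    return [
        f"{library[k].entry_id} ({library[k].source}): {library[k].statement}"
        for k in action.knowledge_ids
    ]


# Hypothetical library and action, for illustration only.
library = {
    "kb-1": KnowledgeEntry("kb-1", "SME interview", "Call the customer before escalating."),
    "kb-2": KnowledgeEntry("kb-2", "ticket scan", "Escalations spike after billing changes."),
}
action = AgentAction("Scheduled a customer call", ("kb-1",))

for line in audit_trail(action, library):
    print(line)
```

Raising an error on an unknown id is a deliberate choice in this sketch: an action that cannot be traced back to the library is treated as a defect rather than silently allowed.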

What This Produces in Practice

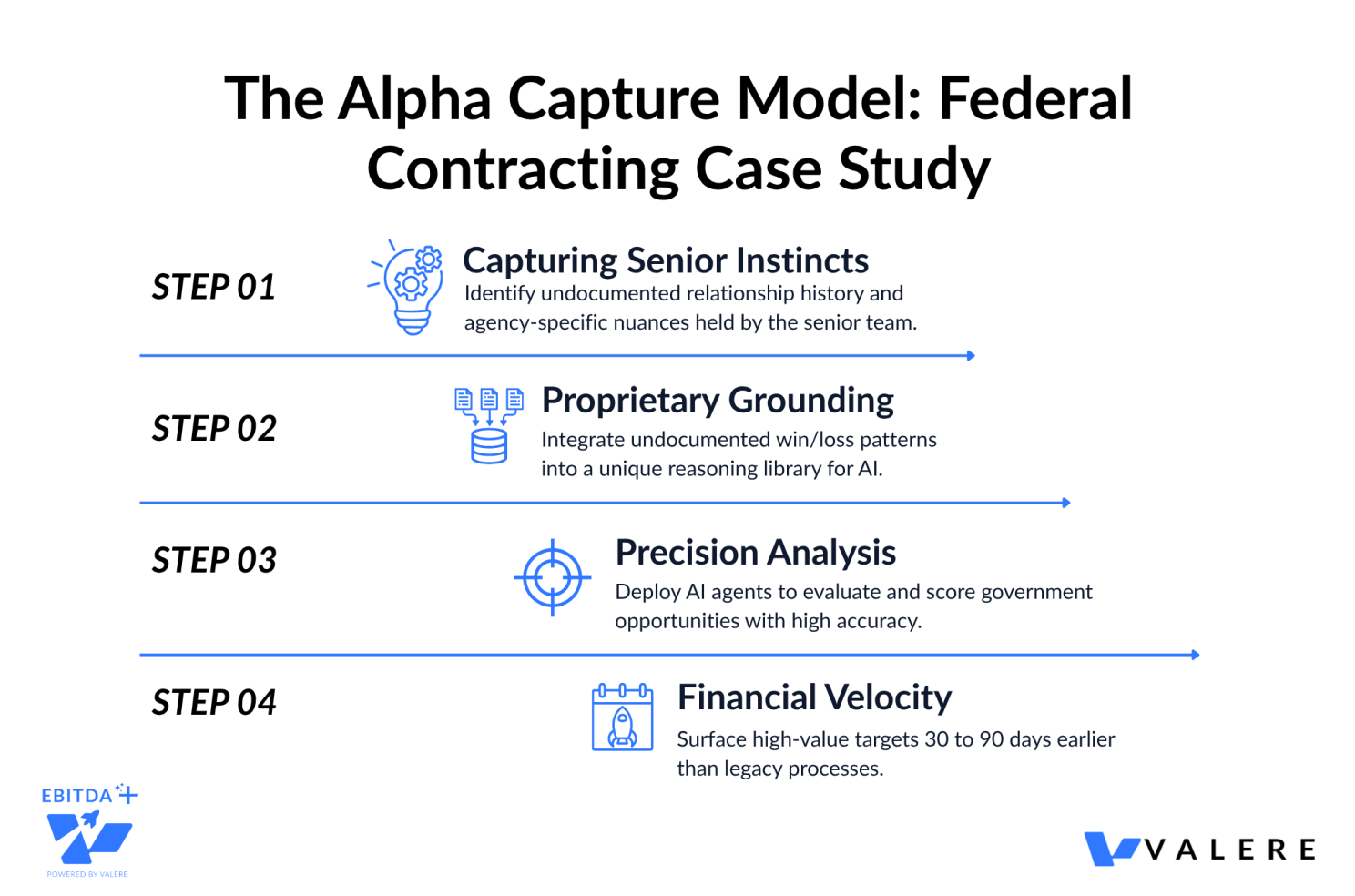

A federal contracting firm we worked with used this approach to capture the deal evaluation instincts of its senior team. Agency-specific relationship history, contracting nuances, and undocumented win and loss patterns: none of it lived in any system. After knowledge capture and deployment, their AI could score government opportunities with real precision and surface high-value targets 30 to 90 days earlier than their previous process allowed. That’s a data advantage, and data advantages hold up when someone releases a new model version.

A national construction delivery network had a similar experience. Subcontractor compliance protocols existed on paper. In practice, field teams had developed their own workflows: workarounds that were often more effective than the official process but completely invisible to leadership. The scan combined with field-level interviews surfaced the actual operating reality. That became the foundation for agents that worked with the business as it genuinely functioned, not as it was supposed to function.

Keeping the Knowledge Current

One of the more underappreciated problems in AI deployment is process drift. A knowledge base that’s accurate at deployment becomes less accurate as the business evolves. The real workflows shift, new edge cases emerge, and the AI gradually falls out of sync with operational reality.

The solution is a structured feedback loop that runs on a regular cycle, surfacing process changes and knowledge gaps for manager review before anything updates automatically. Humans stay in control of how the AI evolves. The knowledge library stays current as the company grows. That ongoing cycle is what transforms a one-time deployment into a compounding asset rather than a static tool.
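The review gate described above can be sketched as a queue of proposed changes that only take effect once a manager approves them. The class and method names here (`ProposedChange`, `KnowledgeLibrary`, `propose`, `apply_approved`) are hypothetical and chosen for illustration; a real system would also need to record who approved a change and when.

```python
from dataclasses import dataclass, field


@dataclass
class ProposedChange:
    entry_id: str
    new_statement: str
    approved: bool = False  # set to True by a human reviewer


@dataclass
class KnowledgeLibrary:
    entries: dict = field(default_factory=dict)
    pending: list = field(default_factory=list)

    def propose(self, entry_id, new_statement):
        """Queue a change detected by the feedback cycle; nothing updates yet."""
        change = ProposedChange(entry_id, new_statement)
        self.pending.append(change)
        return change

    def apply_approved(self):
        """Apply only approved changes and keep the rest pending."""
        applied = [c for c in self.pending if c.approved]
        for change in applied:
            self.entries[change.entry_id] = change.new_statement
        self.pending = [c for c in self.pending if not c.approved]
        return len(applied)


# Hypothetical review cycle, for illustration only.
kb = KnowledgeLibrary(entries={"kb-1": "Escalate after triage."})
first = kb.propose("kb-1", "Call the customer before escalating.")
second = kb.propose("kb-2", "Skip triage for repeat customers.")
first.approved = True  # the manager approves one change and defers the other
applied_count = kb.apply_approved()
print(applied_count, kb.entries, len(kb.pending))
```

Keeping unapproved changes in the queue, rather than discarding them, means the next review cycle starts from everything the scan has surfaced so far.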

The Compounding Effect Most Value Creation Plans Underestimate

Every interaction within a properly grounded AI system generates new data that refines its accuracy. The more embedded the system becomes in core workflows, the more operational intelligence it accumulates. The gap between what that system can do and what a competitor running generic AI can do widens every quarter.

The Analogy That Makes This Concrete

Tesla’s autonomy program runs on billions of miles of real-world sensor data that competitors cannot purchase. Amazon’s recommendation engine draws on 25 years of behavioral data that no budget can reconstruct overnight. The same compounding dynamic applies at the portco level, and it starts the moment knowledge capture begins rather than when model access is granted.

The Financial Outcomes We See Consistently

Organizations that systematically capture institutional knowledge and integrate it into their AI systems report returns of around $3.50 for every dollar invested. Mature operations-heavy deployments reach 10x. Information retrieval improves 31 to 35 percent within weeks of implementation. Employee productivity increases 25 to 40 percent. Most initiatives recoup investment within 6 to 14 months, which is considerably faster than most enterprise technology transformations deliver.

The Gap Is Where the Value Lives

The organizations making meaningful progress on AI-driven EBITDA are the ones that treated knowledge capture as a prerequisite rather than an afterthought. That discipline is what separates AI that compounds in value from AI that generates activity without moving a number.

The PE firm wins because its systems carry reasoning its competitors have never captured. The federal contractor wins because its AI knows things about opportunity evaluation that no public dataset contains. Portcos getting the most out of AI investments have built systems with knowledge that no competitor can replicate by purchasing the same tools. That gap widens every quarter it goes unmatched.

An AI initiative built without that foundation produces generic outputs on a generic timeline. For any portco approaching exit, that’s a value creation gap worth addressing now.

Turn Your Company’s Institutional Knowledge Into a Structural AI Advantage

Ready to build AI that compounds in value over time? Valere works with private equity sponsors and portfolio companies to design, build, and scale AI systems that deliver measurable outcomes. Whether you are evaluating AI maturity across existing assets, preparing a company for transformation, or assessing a new acquisition target, Valere brings the expertise, platform, and partnership model to turn institutional knowledge into portfolio performance.

Start building an AI foundation your competitors can’t buy: https://www.valere.io/

About Valere

Valere is an award-winning AI value creation and delivery partner, providing end-to-end AI transformation and custom software solutions that turn companies into AI-first organizations through building, learning, and scaling. As an expert-vetted, top 1% agency on Upwork, Clutch, G2, and AWS, Valere serves as the trusted AI value creation partner for PE firms, mid-market companies, and Fortune 500 enterprises seeking comprehensive AI transformation that drives measurable ROI. With over 220 dedicated professionals and domain experts, we specialize in end-to-end AI-native solutions using our proven crawl-walk-run methodology, guiding organizations through every stage of their AI journey, from initial assessment and strategy to full-scale implementation and optimization.

About Alex

Alex Turgeon is President of Valere, serving as an embedded AI/ML strategic partner for private equity firms and their portfolio companies. He and his team operate as a vertically integrated AI solution provider throughout the PE value chain, delivering enterprise-grade solutions that enable greater operational control, cost reduction, and efficiency gains across the investment lifecycle. Connect with Alex to discuss how your organization can begin its transformation to the agent era.

Build Something Meaningful with Valere.

Frequently Asked Questions

How do PE-backed companies typically start an AI initiative?

Most begin with a readiness assessment that inventories existing data infrastructure, identifies where institutional knowledge concentrates, and maps which operational areas carry the highest ROI potential. Starting without that baseline typically leads to misallocated investment and pilots that don’t scale. The assessment phase is where the difference between a productive rollout and an expensive stall usually gets decided.

Why do automation platforms like Zapier, Make, or Agentforce often fall short for mid-market companies?

These platforms give teams powerful tools but assume the organization already understands its own processes well enough to configure them correctly. That assumption breaks down more often than most operators expect. The workflows that get configured are usually the documented ones, and those frequently diverge from how work actually happens. The result is automation that runs cleanly against a process that the team has already moved away from in practice.

What does institutional knowledge capture actually involve in practice?

It combines two inputs. First, a systematic scan of existing operational data including tickets, logs, communications, and records to map how work actually gets done. Second, structured interviews with subject matter experts who carry expertise that never made it into any system. The output is a documented knowledge base that AI systems can train on and act from, replacing reliance on generic pre-training data that has no visibility into how the specific business operates.

Why does AI deployment typically fail to deliver measurable EBITDA impact?

The most common cause is deploying capable models without grounding them in the organization’s actual operational context. The model can reason well but knows nothing about the business, its customers, or its people. Outputs end up generic, adoption stays low, and the initiative produces activity without results. The gap between what the AI can access and what experienced employees actually know is almost always the root issue.

How long does it take to see returns from a properly structured AI deployment?

Based on engagements across mid-market and PE-backed businesses, most initiatives recoup investment within 6 to 14 months when knowledge capture is a foundation rather than a later phase. Teams that skip that step see longer timelines and more variable results, typically cycling through model and vendor changes rather than addressing the underlying data gap.

What makes AI-driven value creation defensible at exit?

Proprietary data and embedded institutional knowledge cannot be licensed or replicated by a competitor purchasing the same AI tools. When AI systems run on the specific operational intelligence, customer patterns, and expert reasoning of a business, they create a structural information asymmetry. That asymmetry shows up in efficiency metrics, decision speed, and output quality in ways a buyer can evaluate and value. Generic AI deployments offer little differentiation because every competitor accesses the same baseline.