By: Alex Turgeon, President at Valere

TL;DR: 3 Key Takeaways

- The six architectural pillars of enterprise agent platforms (deterministic contracts, decoupled state management, human-in-the-loop design, self-correcting feedback loops, security isolation, and enterprise-grade observability) are what separate AI deployments that scale profitably from the 40% of agentic AI projects estimated to face cancellation by 2027.

- Private equity firms that standardize AI agent infrastructure across their portfolios can reduce per-portco implementation costs, enforce consistent governance and security, and create compound returns as continuous learning loops improve system accuracy over the hold period.

- Schema enforcement, durable state persistence, MCP gateways, and reasoning traces are not optional infrastructure for enterprise AI agents but rather the minimum architectural requirements that determine whether a deployment is production-ready or permanently stuck in pilot mode.

When private equity firms talk about AI value creation, the conversation usually starts with use cases. An operations partner spots an opportunity to automate claims processing at a healthcare portco. A deal team hears that a target’s customer service function could be transformed by AI. A portfolio CEO returns from a conference excited about deploying agents that can handle procurement end to end.

These are the right instincts. But the conversation almost always skips a layer, the architectural layer, and that skip is where value creation plans go to die.

Gartner predicts that 40% of enterprise applications will include task-specific AI agents by the end of 2026, up from less than 5% today. Agentic AI could generate nearly 30% of enterprise application software revenue by 2035, surpassing $450 billion. That is an enormous value creation surface. But an estimated 40% of agentic AI projects face cancellation by 2027 due to reliability gaps and unclear ROI. The difference between firms that capture value and those that burn capital on stalled pilots is not which use cases got selected. It is how well the foundation underneath got built.

This article translates the engineering decisions that determine whether AI agents work at enterprise scale into language that PE operating partners, portco executives, and board members can use to evaluate investments, pressure-test vendor claims, and govern these systems. Each pillar is grounded in examples from Valere’s enterprise engagements that illustrate how these architectural principles translate into measurable business outcomes.

Architecture as Moat

Among executives whose organizations have deployed AI agents in production, 74% report achieving ROI within the first year, and 39% of those reporting productivity gains have seen productivity at least double. But nearly two-thirds of organizations have not yet begun scaling AI across the enterprise, and 70% discover that data infrastructure is fundamentally inadequate only after launching ambitious AI initiatives.

This gap is where PE firms have both a structural advantage and a structural risk. The advantage: PE firms can impose architectural discipline across a portfolio in ways that organically managed companies often cannot. The risk: without understanding what good architecture looks like, operating teams will either overspend on platforms that do not fit, or underspend on foundations and end up with brittle pilots that never reach justifiable scale.

Pillar One: Deterministic Contracts for Probabilistic AI

Large language models are probabilistic. The same prompt can produce a slightly different response each time. That is what makes them powerful for reasoning and language tasks. But enterprise systems (ERPs, payment processors, compliance engines, CRMs) are deterministic. A wrong currency code, a misformatted date, or a missing customer ID propagates silently through connected systems and surfaces days later as a reconciliation nightmare, a compliance violation, or corrupted reporting.

The architectural solution is contract-first design. Before any agent is built, the engineering team defines the exact format of every input and output using schemas, formal blueprints that describe every data field, its type, whether it is required, and what values are acceptable. A validation layer then sits between the AI model and every downstream system. If the output does not match the contract, the layer rejects it automatically before it reaches any system of record. Libraries like Pydantic in Python and Zod in TypeScript make this enforcement fast and reliable.
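A minimal sketch of such a validation layer, using only the Python standard library so it runs anywhere. The `PaymentInstruction` contract, its field names, and the currency allow-list are hypothetical illustrations, not taken from any engagement described here. A production system would more likely express the same contract with Pydantic or Zod.

```python
from dataclasses import dataclass
from datetime import date

# Hypothetical allow-list; a real contract would define its own.
ALLOWED_CURRENCIES = {"USD", "EUR", "GBP"}


class ContractViolation(ValueError):
    """Raised when model output does not match the agreed schema."""


@dataclass(frozen=True)
class PaymentInstruction:
    customer_id: str
    amount_cents: int
    currency: str
    due_date: date


def validate_payment(raw: dict) -> PaymentInstruction:
    """Reject any model output that violates the contract before it
    can reach a system of record."""
    required = {"customer_id", "amount_cents", "currency", "due_date"}
    missing = required - raw.keys()
    if missing:
        raise ContractViolation(f"missing fields: {sorted(missing)}")

    customer_id = raw["customer_id"]
    if not isinstance(customer_id, str) or not customer_id:
        raise ContractViolation("customer_id must be a non-empty string")

    amount = raw["amount_cents"]
    # bool is a subclass of int in Python, so exclude it explicitly.
    if not isinstance(amount, int) or isinstance(amount, bool) or amount <= 0:
        raise ContractViolation("amount_cents must be a positive integer")

    currency = raw["currency"]
    if not isinstance(currency, str) or currency not in ALLOWED_CURRENCIES:
        raise ContractViolation(f"unsupported currency: {currency!r}")

    try:
        due = date.fromisoformat(raw["due_date"])
    except (TypeError, ValueError) as exc:
        raise ContractViolation("due_date must be an ISO date (YYYY-MM-DD)") from exc

    return PaymentInstruction(customer_id, amount, currency, due)
```

The point of the design is that the rejection happens at the boundary: a wrong currency code or a misformatted date raises an error here instead of surfacing later in reconciliation.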

Valere Enterprise QA Automation. A client’s quality assurance team faced a severe bottleneck as manual reviewers cross-checked image and text annotations against a dense 50-page rulebook. Raw probabilistic AI would have produced hallucinations and missed edge cases. Valere replaced fuzzy text outputs with an automated logic bridge using AWS Bedrock, mapping the 50-page guidelines into precise JSON logic. The system now provides a mathematically consistent quality score and a full audit trail in a single API call, completely removing the unpredictability of standard LLM generation.

The Private Equity Takeaway. When evaluating a portco’s AI capabilities, make one request: show the schema definitions and validation layer for every agent output that touches a system of record. If the answer is vague, the system is not production-grade regardless of how impressive the demo looks.

Pillar Two: Decoupled State Management

Most enterprise tasks worth automating unfold over time. A procurement agent gathers quotes, waits for approval, then issues a purchase order. An insurance claims agent collects documentation, flags exceptions, and processes payment. These workflows take days or weeks.

The architectural problem: if the agent’s reasoning logic and its memory of progress are tangled together, any interruption (a server restart, a network drop, a dependent system going down) means starting over from scratch. At scale, this destroys throughput and trust.

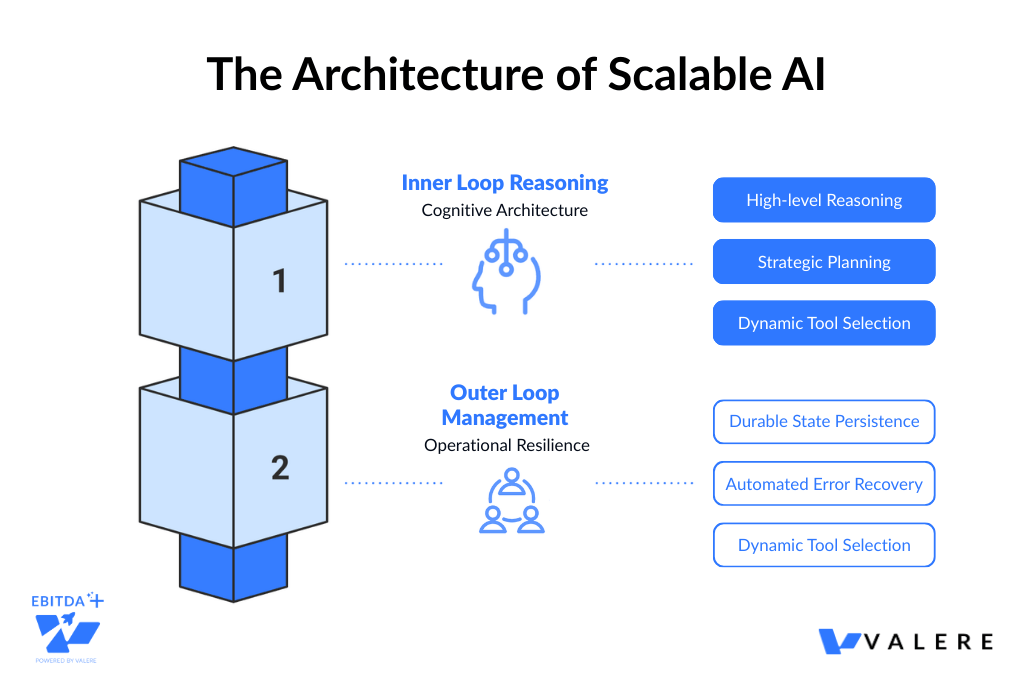

The solution separates the agent into two layers. The Inner Loop handles reasoning: breaking down requests, planning steps, calling tools, evaluating results. The Outer Loop handles persistence: storing progress, managing retries, surviving interruptions, and connecting to user interfaces. Each task is modeled as a state machine where every transition is recorded as a durable event, like a checkpoint in a video game, enabling exact resumption after any interruption.
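The checkpoint idea can be sketched in a few lines. This is an illustrative toy under stated assumptions, not a description of any particular orchestration framework: the `DurableTask` class, the step names, and the append-only log file are all hypothetical. Each completed step is written to durable storage before the next one starts, so a restarted process skips work that is already done.

```python
import json
import os
import tempfile

# Hypothetical procurement workflow, in execution order.
STEPS = ["gather_quotes", "await_approval", "issue_purchase_order"]


class DurableTask:
    """Outer loop: persists each completed step as an append-only event."""

    def __init__(self, log_path):
        self.log_path = log_path

    def completed_steps(self):
        if not os.path.exists(self.log_path):
            return []
        with open(self.log_path) as f:
            return [json.loads(line)["step"] for line in f if line.strip()]

    def record(self, step):
        with open(self.log_path, "a") as f:
            f.write(json.dumps({"step": step}) + "\n")
            f.flush()
            os.fsync(f.fileno())  # force the checkpoint to disk

    def run(self, handlers, fail_at=None):
        """Run remaining steps; `fail_at` simulates an interruption."""
        done = set(self.completed_steps())
        for step in STEPS:
            if step in done:
                continue  # already checkpointed, do not redo
            if step == fail_at:
                raise RuntimeError(f"simulated interruption before {step}")
            handlers[step]()   # inner loop: the reasoning/tool work
            self.record(step)  # checkpoint only after success


def demo():
    calls = []
    handlers = {s: (lambda s=s: calls.append(s)) for s in STEPS}
    with tempfile.TemporaryDirectory() as tmp:
        task = DurableTask(os.path.join(tmp, "task.log"))
        try:
            task.run(handlers, fail_at="await_approval")
        except RuntimeError:
            pass  # the process "crashed" mid-task
        task.run(handlers)  # a fresh run resumes from the checkpoint
        return calls, task.completed_steps()
```

In `demo()`, the first run completes one step and then fails. The second run picks up at the approval step, so no step executes twice.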

Valere High-Volume Email Orchestration. A client’s marketing team needed to orchestrate campaigns to over 250,000 contacts without adding headcount or triggering ISP spam filters. No standard agent could hold the state of hundreds of thousands of concurrent threads. Valere decoupled the state using a proprietary orchestration framework where the Brain layer handles reasoning while separate execution agents manage infrastructure, autonomously executing nonlinear workflows across 210 to 300 inboxes, handling inbox rotation, dynamic personalization, and unsubscribe compliance without breaking state.

The Private Equity Takeaway. During due diligence, ask: how does the agent system handle interruptions mid-task? Can it resume from a checkpoint? If the answer is that the team has not encountered that problem yet, the pilot has not been stress-tested.

Pillar Three: Human-in-the-Loop Architecture

Autonomous should never mean unsupervised. The architecture embeds oversight as a systematic feature that activates precisely when needed through confidence-based triggering. When an agent’s confidence falls below a threshold, or when the action falls into a predefined high-risk category, the system pauses that specific task and routes it to a human reviewer asynchronously. Other agents and tasks continue unblocked. The reviewer sees the agent’s reasoning, the relevant data, and the proposed action. Every decision is recorded.

In sophisticated implementations, this layer functions as middleware intercepting the agent’s tool calls and applying policy rules to determine whether each call can proceed automatically or requires review.
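One way to picture this middleware is the sketch below. The threshold value, the high-risk tool names, and the `ReviewGate` class are assumptions made for illustration. The structure follows the description above: each tool call is checked against policy, and only the calls that fail the check are parked for a human while everything else proceeds.

```python
from dataclasses import dataclass, field
from typing import Any, Callable

# Hypothetical policy values; real ones would come from governance rules.
HIGH_RISK_TOOLS = {"send_payment", "delete_record"}
CONFIDENCE_THRESHOLD = 0.85


@dataclass
class ToolCall:
    tool: str
    args: dict
    confidence: float
    reasoning: str  # shown to the reviewer alongside the proposed action


@dataclass
class ReviewGate:
    tools: dict[str, Callable[..., Any]]
    review_queue: list = field(default_factory=list)
    audit_log: list = field(default_factory=list)

    def submit(self, call: ToolCall):
        """Intercept a tool call; execute it or queue it for review."""
        needs_review = (
            call.tool in HIGH_RISK_TOOLS
            or call.confidence < CONFIDENCE_THRESHOLD
        )
        if needs_review:
            self.review_queue.append(call)  # this task pauses; others continue
            self.audit_log.append(("escalated", call.tool))
            return ("queued", None)
        self.audit_log.append(("auto_approved", call.tool))
        return ("executed", self.tools[call.tool](**call.args))

    def approve(self, call: ToolCall):
        """Called by a human reviewer to release a queued call."""
        self.review_queue.remove(call)
        self.audit_log.append(("human_approved", call.tool))
        return ("executed", self.tools[call.tool](**call.args))
```

Because every branch appends to `audit_log`, the record of who approved what is a by-product of normal operation and does not need to be bolted on separately.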

Valere Healthcare AI Compliance. A healthcare client lacked oversight for automated AI patient communications, presenting massive liability. Valere architected a human-in-the-loop portal acting as a definitive gate between the AI model and the patient. The system routes AI-generated messages to administrators for review, modification, and approval before delivery, integrating with Salesforce and AWS Bedrock for performance metrics and automated audit trails.

The Private Equity Takeaway. Only one in five companies has a mature governance model for agentic AI. Ask to see the policy rules governing agent escalation and the audit log from the last month. If neither exists in structured form, the system is carrying governance risk that will eventually materialize.

Pillar Four: Self-Correcting Feedback Loops

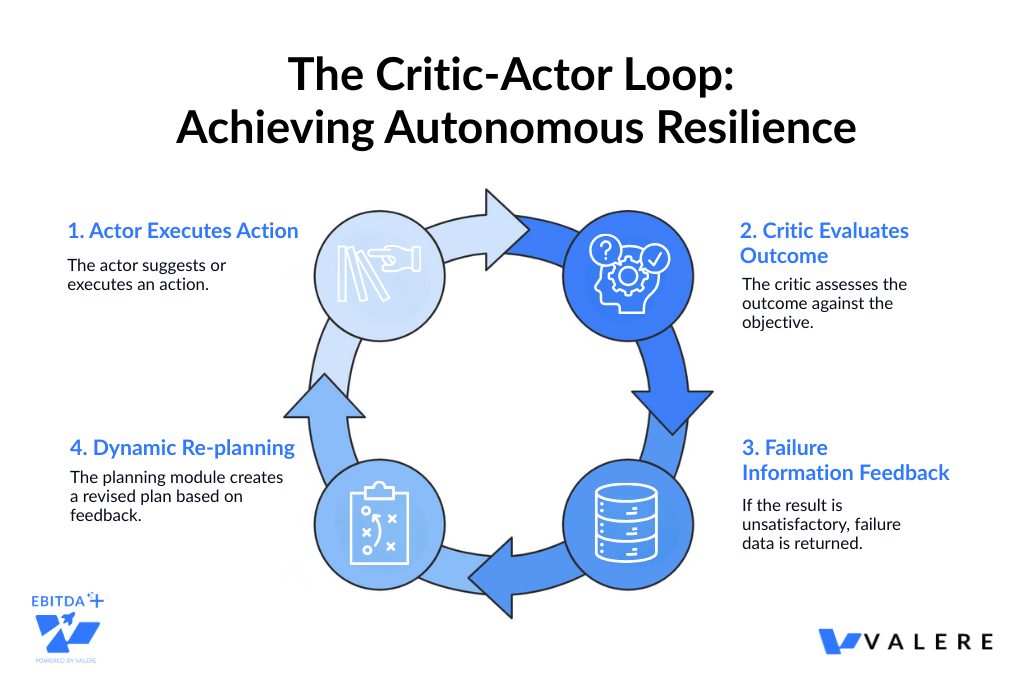

The Critic-Actor model addresses production resilience. One module (the actor) executes an action. A separate module (the critic) evaluates the result against the original objective. If the result falls short, failure information feeds back into the planning module, which generates a genuinely new plan, not a simple retry.

This feedback loop runs on event-driven architecture where every action, result, error, and correction flows as a discrete event routed to the appropriate module. The most powerful implementations extend this into continuous learning loops where systems improve over time by incorporating human judgment and real-world outcomes.
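The loop can be sketched as follows. This is a simplified, synchronous illustration (a production system would route these steps as events), and the planner, actor, and critic shown are hypothetical toy functions. What matters is that the planner receives the failure history and must produce a different plan, not repeat the last one.

```python
def run_with_critic(objective, planner, actor, critic, max_attempts=3):
    """Plan, act, evaluate; feed each failure back into planning."""
    failures = []
    for _ in range(max_attempts):
        plan = planner(objective, failures)  # sees every prior failure
        result = actor(plan)
        problem = critic(objective, result)  # None means objective met
        if problem is None:
            return result, failures
        failures.append({"plan": plan, "problem": problem})
    raise RuntimeError(f"objective not met after {max_attempts} attempts")


# Toy components (all hypothetical) --------------------------------

def planner(objective, failures):
    """Choose a data source that has not already failed."""
    tried = {f["plan"] for f in failures}
    for source in ("cache", "primary_db"):
        if source not in tried:
            return source
    raise RuntimeError("no untried plans remain")


def actor(plan):
    """Pretend the cache is stale and the primary database is fresh."""
    return {"source": plan, "fresh": plan == "primary_db"}


def critic(objective, result):
    """Compare the result against the original objective."""
    return None if result["fresh"] else "stale data"
```

Calling `run_with_critic("fetch current inventory", planner, actor, critic)` fails once on the cache, records why, and succeeds on the second, different plan. The accumulated `failures` list is the kind of error recovery log an investor can ask to see.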

Valere Government Contracting Intelligence. A government contracting client needed to shift from reactive opportunity chasing to intelligence-driven sales. Valere captured tribal knowledge from sales executives to create a proprietary intelligence base for scoring new SAM.gov solicitations. The critical feature: a human-in-the-loop iteration layer where bid/no-bid decisions and scoring weight adjustments feed asynchronously back into the intelligence base, creating a continuous learning loop that dynamically updates future scoring based on real-world outcomes.

The Private Equity Takeaway. The continuous learning loop is especially relevant for PE. System accuracy and value compound over the hold period, improving the exit multiple on the AI investment. Ask to see error recovery logs and accuracy trends since deployment.

Pillar Five: Security as Architecture

AI agents are reasoning systems that interpret instructions, make decisions, and take actions, which exposes manipulation vectors that traditional software does not have. The four primary risks:

- Privilege escalation, where an agent obtains access beyond its intended scope

- Data leakage via prompts, where crafted inputs trick the agent into revealing sensitive information

- Autonomous attack amplification, where a compromised agent uses tool access to propagate an attack

- Model drift, where gradual behavioral changes cause deviation from intended policies

The foundational principle is isolation. The AI model should never have direct access to credentials, databases, or file systems. All actions execute in sandboxed environments using technologies like Firecracker microVMs or gVisor containers. Credentials are injected at runtime, scoped to specific tasks, and revoked on completion.
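The credential lifecycle described above (inject at runtime, scope to a task, revoke on completion) maps naturally onto a context manager. The `CredentialBroker` below is a hypothetical in-memory stand-in for a real secrets manager, included only to show the shape of the pattern.

```python
import secrets
from contextlib import contextmanager


class CredentialBroker:
    """Hypothetical stand-in for a secrets manager that issues
    short-lived, task-scoped tokens."""

    def __init__(self):
        self._active = {}  # token -> scope

    def is_valid(self, token, scope):
        return self._active.get(token) == scope

    @contextmanager
    def scoped(self, scope):
        token = secrets.token_hex(16)  # issued at runtime, never stored in prompts
        self._active[token] = scope
        try:
            yield token
        finally:
            # Revoked on completion, including when the task raises.
            self._active.pop(token, None)
```

A tool executor would wrap each action in `with broker.scoped("read:invoices") as token:`. The model itself never sees the token, and once the block exits the token is useless even if it leaked.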

The Model Context Protocol (MCP), originally created by Anthropic and now governed by the Linux Foundation with over 97 million monthly SDK downloads, provides structured tool discovery through an intermediary server. However, MCP does not natively provide governance. Enterprises need an MCP Gateway centralizing authentication, authorization, rate limiting, policy enforcement, and audit logging. Idempotency tokens prevent duplicate executions during recovery scenarios.
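To make the gateway responsibilities concrete, here is a generic sketch of three of them: authorization, audit logging, and idempotency. It does not use or imitate the MCP SDK. The `ToolGateway` class, its method signature, and the agent and tool names are assumptions for illustration only.

```python
class ToolGateway:
    """Illustrative choke point between agents and tools."""

    def __init__(self, allowed):
        self.allowed = allowed  # agent_id -> set of permitted tool names
        self._results = {}      # idempotency_key -> cached result
        self.audit_log = []

    def call(self, agent_id, tool, fn, idempotency_key, **args):
        # Authorization: deny anything outside the agent's scope.
        if tool not in self.allowed.get(agent_id, set()):
            self.audit_log.append((agent_id, tool, "denied"))
            raise PermissionError(f"{agent_id} may not call {tool}")

        # Idempotency: a retried call returns the stored result
        # and does not execute the side effect a second time.
        if idempotency_key in self._results:
            self.audit_log.append((agent_id, tool, "replayed"))
            return self._results[idempotency_key]

        result = fn(**args)
        self._results[idempotency_key] = result
        self.audit_log.append((agent_id, tool, "executed"))
        return result
```

If an agent crashes after issuing a purchase order and retries on recovery with the same key, the order is placed once. The audit log shows one `executed` entry and one `replayed` entry, which is exactly the evidence a tool call audit should surface.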

Valere Industrial Document Search. Engineers at a large contracting client wasted hours searching through over 10 terabytes of PDFs and legacy SQL databases. Pointing an AI agent at the full dataset would violate least-privilege principles. Valere built a RAG system with strict sandbox boundaries using Active Directory-based access control. Users receive natural-language answers and traceable citations drawn only from data explicitly authorized for their role.

The Private Equity Takeaway. During due diligence, ask: do AI agents have their own IAM identity? What is the maximum privilege any agent can hold? Show the tool call audit log from the last 30 days. These questions reveal more about AI maturity than any slide deck.

Pillar Six: Enterprise-Grade Observability and Cost Optimization

Two operational challenges dominate at scale: understanding what agents are doing, and controlling what agents cost. The AI Gateway or model router addresses both by routing requests to appropriately sized models (reducing inference costs by 30 to 60%) and providing unified visibility into spend, error rates, latency, and usage. Reasoning traces, hierarchical records capturing every step of an agent’s decision-making, are essential for debugging, compliance, and continuous improvement.
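A toy version of a model router with cost tracking is sketched below. The model tiers, the per-token prices (in micro-dollars), and the routing rule are invented for illustration. Real gateways route on richer signals, and actual savings depend on the workload.

```python
from dataclasses import dataclass, field

# Hypothetical prices in micro-dollars per token.
PRICE_PER_TOKEN = {"small": 1, "large": 20}
LONG_PROMPT_TOKENS = 2000  # hypothetical cutoff


def choose_model(prompt_tokens, needs_reasoning):
    """Send only long or reasoning-heavy requests to the large model."""
    if needs_reasoning or prompt_tokens > LONG_PROMPT_TOKENS:
        return "large"
    return "small"


@dataclass
class AIGateway:
    spend: dict = field(
        default_factory=lambda: {name: 0 for name in PRICE_PER_TOKEN}
    )
    trace: list = field(default_factory=list)

    def route(self, task_id, prompt_tokens, needs_reasoning=False):
        model = choose_model(prompt_tokens, needs_reasoning)
        cost = prompt_tokens * PRICE_PER_TOKEN[model]
        self.spend[model] += cost
        # One trace record per request: the raw material for
        # cost-per-action reporting and debugging.
        self.trace.append(
            {"task": task_id, "model": model,
             "tokens": prompt_tokens, "cost": cost}
        )
        return model

    def total_spend(self):
        return sum(self.spend.values())
```

Because every request passes through `route`, cost per agent action becomes a query over `trace` and does not require a forensic exercise after the invoice arrives.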

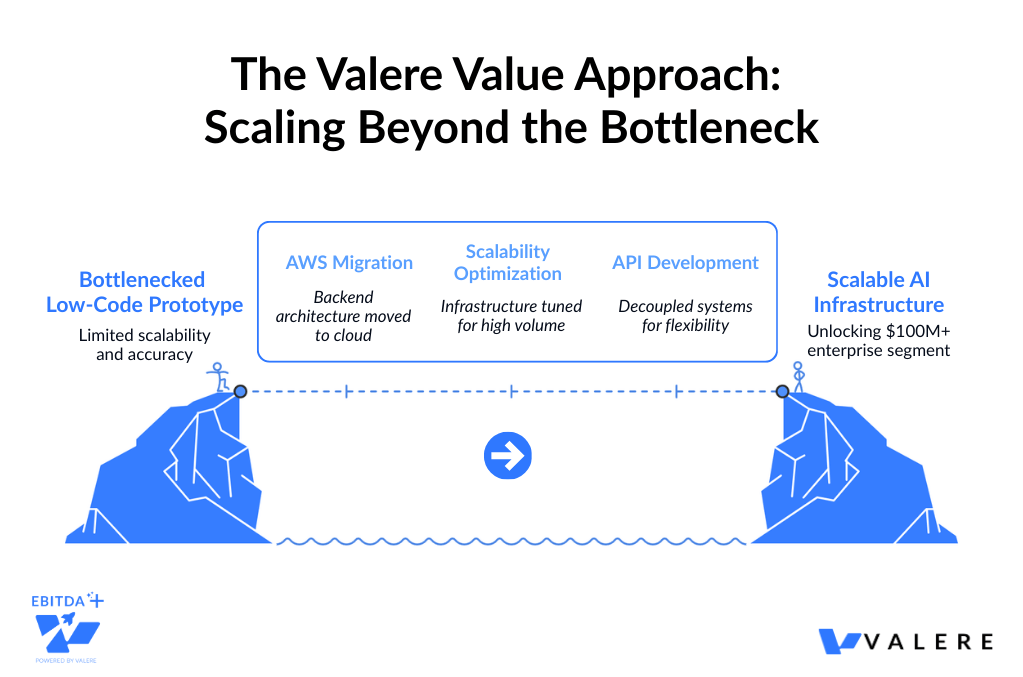

Valere Enterprise AI Platform Migration. A client’s AI-powered licensing chatbot, built on a low-code framework, struggled with scalability and complex reasoning, effectively capping growth. Valere led a full migration to AWS with extensive load testing, scalability optimization, and custom API development. The result: 50% improvement in response accuracy and infrastructure scaled to unlock a $100M-plus enterprise customer segment. The low-code prototype that worked at pilot scale had become the bottleneck blocking enterprise value creation.

The Private Equity Takeaway. Require every portco deploying agents to implement an AI gateway with cost tracking from day one. The 40% project cancellation rate is not primarily a technology failure. It is an infrastructure maturity failure.

Build vs. Buy Through a Portfolio Lens

For PE portfolio companies, the answer is almost always hybrid. Enterprise platforms (Salesforce Agentforce, Microsoft Copilot Studio, Google Agentspace, AWS Bedrock AgentCore) provide the operational backbone. Internal teams or experienced implementation partners build the domain-specific agent logic that differentiates the business. The strategic question is not build or buy but rather: where does competitive advantage lie, and where is infrastructure commodity?

At the portfolio level, standardizing on a common agent infrastructure layer creates platform arbitrage: better vendor terms, shared implementation patterns, and cross-portfolio AI operations capability that reduces per-company overhead.

Due Diligence Questions: What to Ask Before You Invest or Deploy

Whether evaluating an acquisition target or reviewing a portco deployment plan, these questions map to each pillar:

- Deterministic contracts: Are formal schemas defined for every agent I/O? Is there automated validation before data reaches systems of record?

- State management: Can the team demonstrate task resumption from a checkpoint after simulated failure?

- Human oversight: What policy rules govern escalation? Is the audit trail structured and accessible?

- Self-correction: What is the self-recovery rate? Does the system incorporate feedback to improve over time?

- Security: Do agents have IAM identities? Is there centralized tool call governance through an MCP gateway?

- Observability: What is the cost per agent action and how has it trended? Are reasoning traces captured?

The Bottom Line

Enterprise agent platforms represent a genuine architectural shift in how intelligence, automation, and human judgment combine inside an organization. The six pillars form the engineering foundation that separates scalable, trustworthy AI from expensive, stalled experiments. These are not academic concepts. These are the architectural decisions that determine whether an agent deployment generates measurable returns or becomes another pilot that never graduated to production.

The firms that invest in getting this foundation right, at the portco level and across the portfolio, will capture near-term productivity gains and compound that advantage as agent capabilities accelerate, regulatory frameworks crystallize, and the gap between architecturally mature organizations and everyone else becomes the defining competitive divide of the next decade.

The architecture is the alpha. Build accordingly.

Start Building the Architecture Behind Scalable AI

The gap between a promising AI pilot and a production-grade agent platform is not going to close with the next model release. It closes with architecture. Valere works with private equity firms and their portfolio companies to architect, deploy, and scale enterprise AI agent platforms built on the six pillars that separate lasting value creation from stalled experiments. Here is what you will walk away with:

- An Architecture Readiness Assessment identifying where your current AI deployments lack the schema enforcement, durable state management, and security isolation needed to operate at enterprise scale

- A clear path from fragmented AI pilots to governed, observable systems that integrate with your existing infrastructure, enforce compliance, and compound in accuracy over time

- A personalized value creation roadmap from isolated agent experiments to production-grade platforms with human-in-the-loop governance, self-correcting feedback loops, and centralized cost optimization

Start building your agent architecture: valere.io