From the Desk of Guy Pistone — Weekly insights for operators at mid-market & PE-backed companies

TL;DR

Most executives assume AI is like standard software: build it once, run it forever. The ugly truth is that models begin decaying the moment they hit production. The world drifts away from the data they were trained on and, at worst, they suffer Model Collapse, where they start training on their own bad habits. The fix isn’t better code; it is re-engineering your operational workflow so that a human in the loop keeps injecting ground truth back into the system to stop the rot.

The Day 2 Problem

There is a dirty secret in AI development that I rarely hear vendors admit during the pitch meeting: the hardest part isn’t building the model. It’s keeping it smart once it meets the real world.

I see this pattern constantly. A company launches a pilot. The demo is declared flawless at 94% accuracy. Everyone high-fives. Three months later, the customer support bot is hallucinating policies that don’t exist and the forecasting model is missing revenue by 15%. How do you explain it?

Most executives just panic and think: “Did the code break?” No. The code is probably fine. What happened is that the data reality changed, and your model is still living in the past.

Standard software (like your ERP or CRM) is deterministic. If it worked yesterday, it will work today. AI is probabilistic and organic. Which means it decays.

If you treat AI like standard software, you aren’t building a tool; you’re building a ticking time bomb of technical debt.

Why Models Get Dumber (The Mechanics of Failure)

When I diagnose a failing AI project, it usually comes down to one of two reasons. One is annoying; the other is catastrophic… Let’s start with the best of a bad situation…

1. Data Drift (The Annoying One)

This happens because the world changes. That’s not news to anyone, right? Consumer behavior, interest rates, slang: I could go on and on, but what they have in common is that they all evolve. If you trained your sales bot on data from 2021, it’s trying to sell to a 2021 customer in a 2026 world.

The relationship between input (data) and output (prediction) has drifted. This is manageable if you monitor it.
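One common way to monitor drift is to compare the distribution a model was trained on against what it sees in production. The sketch below uses the Population Stability Index (PSI), which is one option among several and not necessarily what any given team runs. The function name `population_stability_index`, the ten equal-width bins, and the small floor for empty bins are all illustrative assumptions.

```python
import math


def population_stability_index(baseline, current, bins=10):
    """PSI between two numeric samples, using equal-width bins from the baseline."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def shares(values):
        counts = [0] * bins
        for v in values:
            # Clamp out-of-range values into the first or last bin.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # A small floor avoids log(0) when a bin is empty (assumed value).
        return [max(c / len(values), 1e-6) for c in counts]

    expected, actual = shares(baseline), shares(current)
    return sum((a - e) * math.log(a / e) for e, a in zip(expected, actual))


# Hypothetical data: the same feature at training time and in production.
training_values = [i / 100 for i in range(1000)]
production_values = [v + 3 for v in training_values]  # the world has shifted

psi = population_stability_index(training_values, production_values)
print(f"PSI = {psi:.2f}")
```

A frequently quoted rule of thumb treats a PSI above roughly 0.25 as a significant shift worth investigating, though the right threshold depends on the feature and the business, so treat it as a starting point rather than a standard.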

2. Model Collapse (The Catastrophic One)

This is what you call a broken feedback loop. How do you end up here? Simple: in the rush to automate everything, companies often feed the AI’s output back into the system as training data for the next version.

- The Cycle: The AI makes a guess. The system assumes the guess is right. The AI then retrains on its own guess. See the issue here?

It’s like making a photocopy of a photocopy. With each copy, the image degrades a little more until it is black sludge. Bringing it back to AI, the model becomes an echo chamber of its own biases, growing increasingly confident about increasingly wrong answers.

This is why automation without human oversight isn’t just risky… It’s mathematically destined to fail.
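The photocopy effect can be reproduced with a toy simulation. In this sketch the "model" is nothing more than a bell curve fitted to its training data, and each round it is refit on a mix of its own outputs and fresh samples from reality. It is a deliberately simplified illustration, not a production technique; `simulate_retraining`, the sample size of 20, the 500 rounds, and the 30% ground-truth share are all assumptions chosen to make the effect visible.

```python
import random
import statistics


def simulate_retraining(generations, sample_size, ground_truth_share, seed=0):
    """Return the fitted spread (standard deviation) after each retraining round."""
    rng = random.Random(seed)
    true_mean, true_std = 0.0, 1.0  # stand-in for "reality"

    # Round 0: the model is trained entirely on real data.
    data = [rng.gauss(true_mean, true_std) for _ in range(sample_size)]
    mean, std = statistics.fmean(data), statistics.pstdev(data)
    history = [std]

    n_real = round(sample_size * ground_truth_share)
    for _ in range(generations):
        # Human-verified examples drawn from reality...
        real = [rng.gauss(true_mean, true_std) for _ in range(n_real)]
        # ...plus the model's own outputs, assumed correct and fed back in.
        synthetic = [rng.gauss(mean, std) for _ in range(sample_size - n_real)]
        data = real + synthetic
        mean, std = statistics.fmean(data), statistics.pstdev(data)
        history.append(std)
    return history


closed_loop = simulate_retraining(500, 20, ground_truth_share=0.0)
grounded = simulate_retraining(500, 20, ground_truth_share=0.3)
print(f"closed loop spread:    {closed_loop[0]:.2f} -> {closed_loop[-1]:.2e}")
print(f"with 30% ground truth: {grounded[0]:.2f} -> {grounded[-1]:.2f}")
```

In the closed loop the fitted spread should shrink toward zero: the model ends up certain about an ever narrower slice of the world. With a steady share of real data mixed in, the spread should stay in the neighborhood of the true value of 1. Real models are far more complex than a bell curve, so read this as intuition for the feedback loop rather than a prediction for any particular system.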

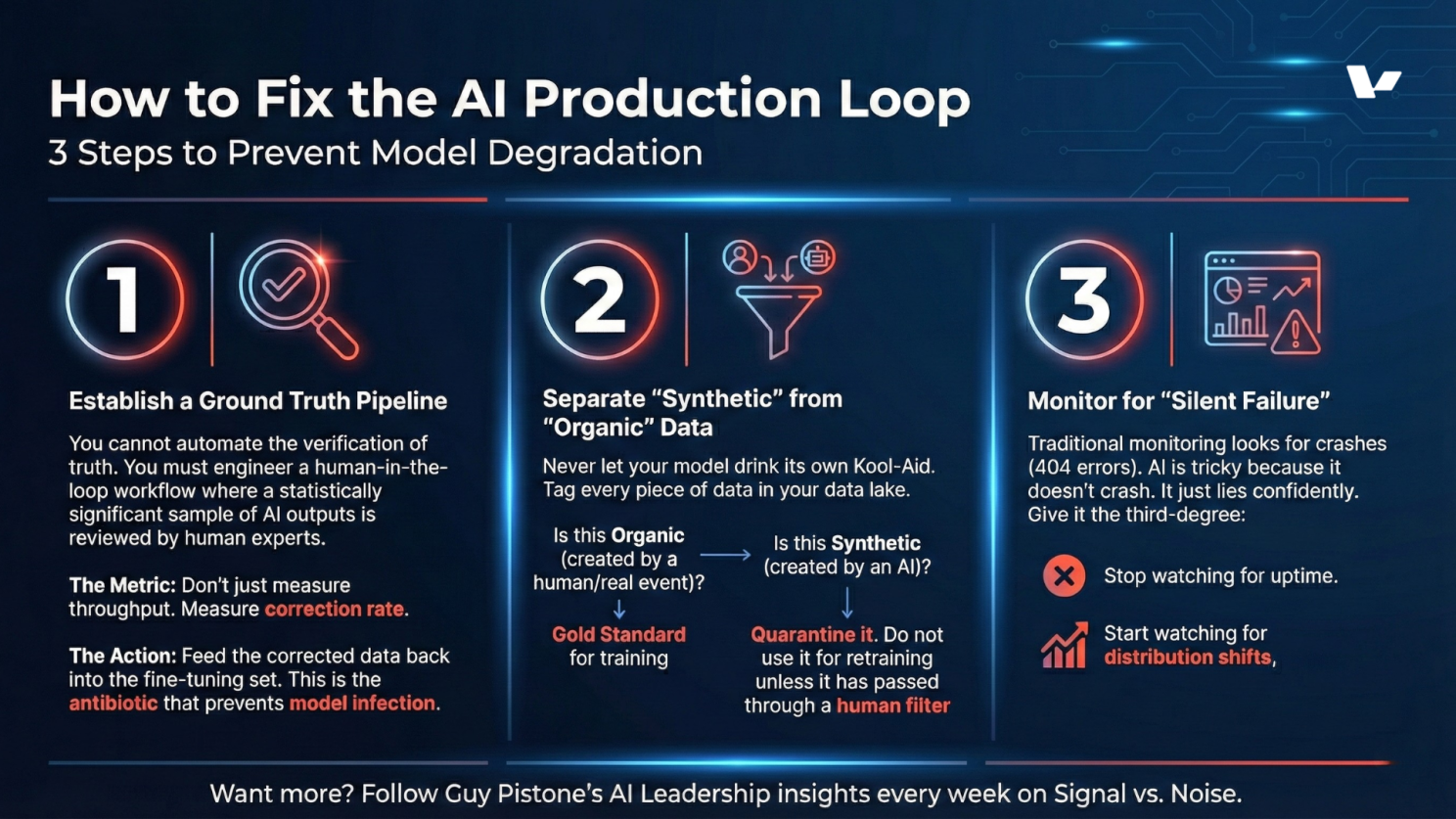

Architectural Fixes for Production Stability

You don’t need a better model. You need a better operating system around the model. Here is how I advise clients to fix the loop.

If your chatbot suddenly answers 50% of queries with the same phrase, or if your fraud model’s approval rate jumps from 2% to 10% overnight, you have a drift problem.
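Both warning signs above are cheap to check automatically. The following is a minimal sketch, assuming you already log chatbot answers and track a daily approval rate. The function names and the default thresholds (`max_top_share=0.5`, `max_jump=3.0`) are hypothetical and would need tuning for a real system.

```python
from collections import Counter


def top_answer_share(answers):
    """Fraction of responses that are the single most common answer."""
    if not answers:
        return 0.0
    _, count = Counter(answers).most_common(1)[0]
    return count / len(answers)


def rate_jump(baseline_rate, current_rate):
    """How many times larger the current rate is than the baseline."""
    if baseline_rate == 0:
        return float("inf") if current_rate > 0 else 1.0
    return current_rate / baseline_rate


def drift_alerts(answers, baseline_rate, current_rate,
                 max_top_share=0.5, max_jump=3.0):
    """Return human-readable warnings; thresholds are illustrative defaults."""
    alerts = []
    share = top_answer_share(answers)
    if share >= max_top_share:
        alerts.append(f"{share:.0%} of answers are the same phrase")
    jump = rate_jump(baseline_rate, current_rate)
    if jump >= max_jump:
        alerts.append(
            f"approval rate moved {baseline_rate:.0%} -> {current_rate:.0%}"
        )
    return alerts


# Hypothetical log: half the answers are one canned phrase, and the
# approval rate went from 2% to 10%.
todays_answers = ["Please contact support."] * 5 + ["a", "b", "c", "d", "e"]
for alert in drift_alerts(todays_answers, baseline_rate=0.02, current_rate=0.10):
    print("ALERT:", alert)
```

The point of a check like this is not precision. It is to make sure a human looks at the system the day something changes, instead of three months later.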

The Operator Takeaway

The error most leaders make is thinking of AI as a Project (Start -> Build -> Launch -> Done). AI is not a project. It is a process.

My quote of the week:

Stop treating AI like a building you finish. Treat it like a garden. If you stop weeding (monitoring) and watering (retraining), it dies.

If you are budgeting for AI, here is my new rule of thumb: For every $1 you spend on building the model, set aside $1 for the pipeline to monitor and retrain it.

If you can’t afford the maintenance, you can’t afford the model.

Ready to stop the “pilot failure” and build systems that survive the real world? Book a 30-Minute Audit.

Guy Pistone | CEO, Valere | AWS Premier Tier Partner

Building meaningful things.

Works Cited

- The Curse of Recursion: Training on Generated Data Makes Models Forget (arXiv) – The definitive paper on why models collapse when fed synthetic data. Read the paper https://arxiv.org/abs/2305.17493

- Productionizing Machine Learning: From Deployment to Drift Detection (Databricks) – A technical guide on spotting drift before it breaks your product. Read the guide https://www.databricks.com/blog/2019/09/18/productionizing-machine-learning-from-deployment-to-drift-detection.html

- Most AI Initiatives Fail. This Framework Can Help. (Harvard Business Review) – Why the organizational “backbone” matters more than the model. Read the article https://www.hbs.edu/faculty/Pages/item.aspx?num=68181