The Bottom Line Up Front

Some of the smartest AI teams in the world are failing.

Not because they lack intelligence.

Because they lack alignment.

We’re past the point where data, talent, or model architecture explain the gap. AI initiatives aren’t falling short because the tech doesn’t work.

They’re falling short because the system around the tech is broken.

Disconnected teams. Disconnected systems. Everyone trying to do too much in one place. Sound familiar?

The Hidden Pattern Behind AI Failure

Enterprise AI spend is at an all-time high. So… why are results flat?

- 74% of companies have yet to deliver tangible value from AI (Boston Consulting Group, 2024).

- Just 5% of generative AI pilots show measurable business impact (MIT research, as reported by Fortune, 2025).

The issue isn’t a lack of innovation. It’s orchestration.

Too many organizations are trying to brute-force their way through complexity with oversized models, bloated teams, or worse, single points of failure.

AI systems, like human organizations, break when everything depends on one brain.

Real-World Lessons in Misalignment

🩺 Babylon Health

Valuation: $4.2B → Outcome: bankrupt.

They promised AI-driven healthcare for everyone. But they scaled the story faster than the infrastructure. Product, medical, and regulatory teams operated in silos.

The tech was overhyped. The system was underbuilt.

👉 Lesson: Intelligence without alignment collapses.

Source: WIRED

💼 Amazon Recruiting AI

An internal model learned to downgrade resumes from women. Not because it was malicious, but because it mirrored systemic bias in historical hiring data.

Smart model. Flawed system. Predictable result.

👉 Lesson: Algorithms don’t fix bad structures. They amplify them.

Source: Reuters

In both cases, the failure wasn’t capability. It was architecture.

The Mirror Between Teams and Models

Why do these failures sound familiar? Because they happen for the same reasons human teams underperform.

Too much centralization. Too many disconnected parts. Too much intelligence crammed into one place, hoping it all holds together.

This is exactly where AI is headed, and where it breaks.

Large Language Models are impressive, but bloated. They try to reason, remember, and act, all in one place.

The result? High cost. High latency. Inconsistent output.

Sound like any leadership teams you’ve worked with?

The Shift: From Monoliths to Modular Systems

The next generation of AI (and the next generation of organizations) will win for the same reason:

They separate roles. They coordinate through shared structure. They design for alignment.

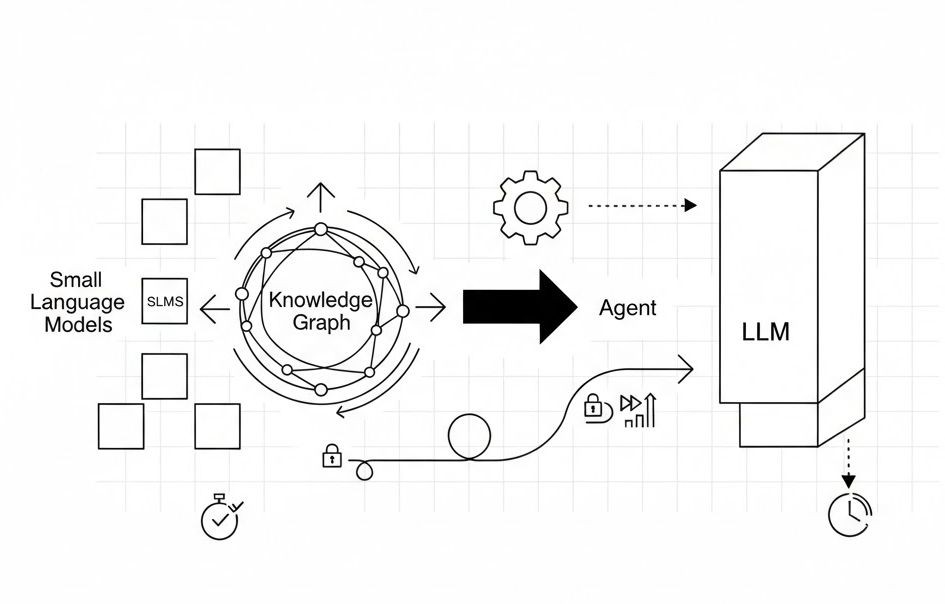

Agentic systems can do this by pairing Small Language Models (SLMs) with Knowledge Graphs. SLMs handle the reasoning. Graphs hold the knowledge externally, where it can be updated without retraining the model.

Together, they deliver faster, cheaper, more accurate results, not because the models got bigger, but because the system got smarter. As Anthony Alcaraz put it: “Agentic systems don’t just benefit from SLMs. They architecturally require them.”
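To make that separation of roles concrete, here is a minimal Python sketch. It is an illustration, not a real agent framework: `KnowledgeGraph`, `small_model_answer`, and `agent` are hypothetical names, and the "model" is a plain function standing in for an SLM call. The point is only that knowledge changes in one place while the reasoning step stays untouched.

```python
from dataclasses import dataclass, field


@dataclass
class KnowledgeGraph:
    """Hypothetical external store of (subject, relation, object) facts."""
    triples: set = field(default_factory=set)

    def add(self, subject, relation, obj):
        self.triples.add((subject, relation, obj))

    def remove(self, subject, relation, obj):
        self.triples.discard((subject, relation, obj))

    def query(self, subject, relation):
        # Return every object linked to the subject by this relation.
        return sorted(o for s, r, o in self.triples
                      if s == subject and r == relation)


def small_model_answer(question, facts):
    """Stand-in for a small language model call (an assumption, not a real API).

    It only composes an answer from the facts it is handed; it stores nothing.
    """
    if not facts:
        return f"{question} -> unknown"
    return f"{question} -> {', '.join(facts)}"


def agent(graph, subject, relation):
    facts = graph.query(subject, relation)   # remembering: the graph's job
    question = f"{subject} {relation}?"
    return small_model_answer(question, facts)  # reasoning: the model's job


graph = KnowledgeGraph()
graph.add("invoice-42", "owned_by", "finance")
first = agent(graph, "invoice-42", "owned_by")

# Ownership changes: only the graph is updated, the model stand-in is untouched.
graph.remove("invoice-42", "owned_by", "finance")
graph.add("invoice-42", "owned_by", "operations")
second = agent(graph, "invoice-42", "owned_by")

print(first)
print(second)
```

In a real system the stand-in function would be replaced by an actual small model, and the set of triples by a graph database. The division of labor is what carries over.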

The exact same principle applies to leadership.

The best teams don’t centralize all the thinking. They distribute it clearly, intentionally, with structure.

The Real Advantage in 2025

The edge isn’t intelligence anymore. It’s alignment.

Bigger models won’t save broken workflows. Brilliant hires can’t fix chaotic systems. Talent can’t outrun dysfunction.

Whether you’re training agents or leading teams, your architecture of alignment decides whether you win or flatline.