By: Alex Turgeon, President at Valere

TL;DR: 3 Key Takeaways

- World Models move AI from productivity tool to decision infrastructure, enabling portfolio companies to simulate thousands of operational futures before committing real capital and turning prediction into a direct driver of EBITDA and exit value.

- The competitive moat World Models create is built on proprietary organizational intelligence: unlike licensed software, a model trained on a company’s own decision history, customer patterns, and institutional knowledge cannot be replicated by any competitor, and grows more defensible with every quarter it runs.

- Private Equity Operating Partners who prioritize AI infrastructure investment during the hold period (capturing institutional knowledge, orchestrating intelligent systems at scale, and closing the feedback loop between outcomes and the model) are building a compounding advantage in operating performance and exit positioning that will be very difficult for late movers to match.

Private equity value creation has always been a race against the clock. You acquire a business, apply operational improvements, and generate returns within a defined hold period. The playbook is familiar: cost rationalization, revenue growth, margin expansion, and multiple arbitrage.

Artificial intelligence entered that conversation promising productivity improvements: automating repetitive tasks, surfacing operational insights, and accelerating content generation. Those gains are real. But they are incremental improvements layered on top of operating models that were never designed with intelligence at their core. For most sponsors and their portfolio companies, AI has delivered efficiency. It has not yet delivered transformation.

That is about to change. The AI field has crossed a meaningful threshold. The era of generative synthesis, meaning AI that produces convincing outputs, is giving way to something far more consequential: World Models. Understanding what that shift means for portfolio performance, competitive positioning, and exit value is where Valere’s most important client conversations begin.

What Is a World Model?

A World Model is an AI system that learns an internal representation of how an environment works, then uses that representation to predict future states and support planning. It does not merely recognize patterns or generate plausible outputs. It captures dynamics, meaning how situations evolve, how actions produce consequences, and how the future branches depending on what you do right now.

The practical distinction is significant. A standard generative AI tool answers the question of what something looks like. A World Model answers what happens next and what should be done about it.

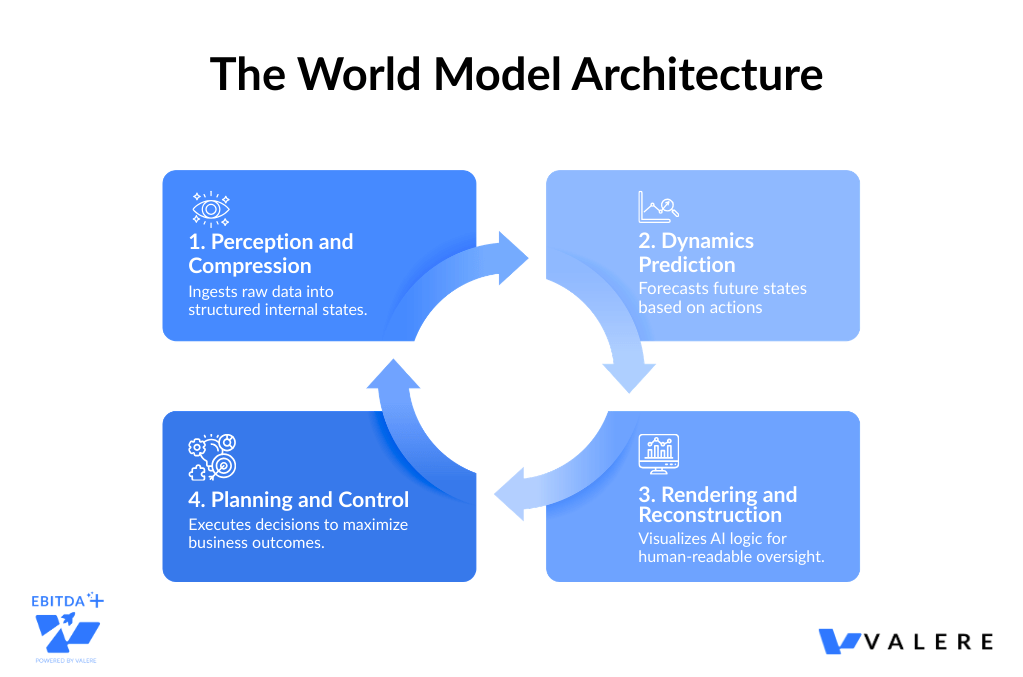

This architecture rests on four integrated layers. Perception and compression ingests raw inputs from sources such as cameras, sensors, logs, and environments and reduces them to a structured internal state. Dynamics prediction takes that state plus a candidate action and forecasts what the world looks like next. Rendering and reconstruction translates the model’s internal representations back into human-readable outputs. Planning and control uses those predicted futures to select actions that optimize for defined outcomes, including safety, efficiency, task completion, and business value.
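As a rough illustration of how the four layers fit together, here is a minimal Python sketch. Everything in it is an assumption made for illustration: the single-number inventory state, the fixed demand of 40 units, and the function names `perceive`, `predict`, `render`, and `plan` are hypothetical and do not describe any production system named in this article.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class State:
    """Compressed internal state (hypothetical: a single inventory level)."""
    inventory: float


def perceive(raw_reading: dict) -> State:
    """Perception and compression: reduce a raw input record to a structured state."""
    return State(inventory=float(raw_reading["units_on_hand"]))


def predict(state: State, order_qty: float, demand: float = 40.0) -> State:
    """Dynamics prediction: current state plus a candidate action gives the next state."""
    return State(inventory=max(state.inventory + order_qty - demand, 0.0))


def render(state: State) -> str:
    """Rendering and reconstruction: turn the internal state into readable output."""
    return f"Projected inventory: {state.inventory:.0f} units"


def plan(state: State, actions: Sequence[float], target: float = 100.0) -> float:
    """Planning and control: choose the action whose predicted outcome is closest to the target."""
    return min(actions, key=lambda a: abs(predict(state, a).inventory - target))


state = perceive({"units_on_hand": "80"})
best = plan(state, [0, 25, 50, 75])
print(best, "->", render(predict(state, best)))
```

A real World Model would learn the `predict` step from data rather than hard-code it; the sketch only shows how the planner evaluates predicted futures before any action is taken.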

This architecture is already reshaping industries at scale. Waymo’s World Model stress-tests autonomous driving edge cases through simulation rather than costly real-world exposure. World Labs generates persistent, editable 3D environments from simple inputs. Roblox’s Cube Foundation Model produces not just geometry but functional objects that behave as users expect, with outputs grounded in causal understanding rather than surface pattern matching.

The pattern is consistent: the most valuable AI systems going forward will not generate the most convincing outputs. They will understand the world well enough to simulate it, and simulate it well enough to guide real decisions.

Why World Models Change the Private Equity Investment Thesis

From Productivity Tool to Decision Infrastructure

For operating and value creation partners, World Models represent a fundamental shift in what AI actually is. This is the difference between AI that produces a convincing dashboard and AI that reliably drives EBITDA. World Models function as simulation engines capable of running thousands of hypothetical futures before a single real operational decision is made. The strategic implications compound quickly. Scenario planning that used to take weeks of analyst time runs continuously and on demand. Customer risk, market risk, and operational risk become something to anticipate rather than react to. Institutional knowledge locked in the heads of senior operators and subject matter experts becomes a scalable, proprietary asset rather than a retention risk.

The Gap Between Capability and Captured Value

The history of enterprise AI is full of proof-of-concepts that never scaled and pilots that never compounded. The failure pattern is almost always the same: AI gets layered onto an operating model built for a pre-AI world. The technology improves. The operating model does not. The gap between capability and captured value quietly widens.

For portfolio companies, that gap has a specific financial cost. It shows up in bloated cost structures that should have been automated, in churn that analytics should have predicted, in procurement decisions made without predictive intelligence, and in management bandwidth consumed by operational firefighting that a well-orchestrated AI layer could have prevented.

World Models make that gap more expensive to ignore. The organizations deploying this architecture are not slightly better at executing than their peers. They are operating on a different logic entirely, testing strategies in simulation before committing real capital, encoding institutional knowledge as a proprietary training asset, and building intelligence infrastructure that appreciates with every cycle it runs. The companies that build this infrastructure during the hold period will command premium multiples at exit. The ones that do not will face increasing pressure from competitors that have.

Outcomes, Not Features

Valere’s engagement model is built around a specific premise: clients are not purchasing platforms with features. They are purchasing outcomes delivered as software.

A model trained on a portfolio company’s proprietary decision history, including its wins, its losses, its customer patterns, and its operational edge cases, is an asset no competitor can replicate by licensing the same tools. The accumulated intelligence of consequential business decisions, made actionable, creates durable competitive advantage. That is what drives multiple expansion at exit. It is also what makes a company increasingly defensible in its market the longer the model runs.

For PE sponsors evaluating AI initiatives across their portfolio, the right question is not which tools are being licensed. It is what proprietary intelligence is being built.

The Infrastructure Behind Compounding Portfolio Intelligence

Across Valere’s years of work alongside private equity sponsors and their portfolio companies, a consistent pattern has emerged: the organizations that capture the most value from AI are not the ones that deploy the most tools. They are the ones that build the right infrastructure. That distinction shapes everything about how Valere approaches AI transformation.

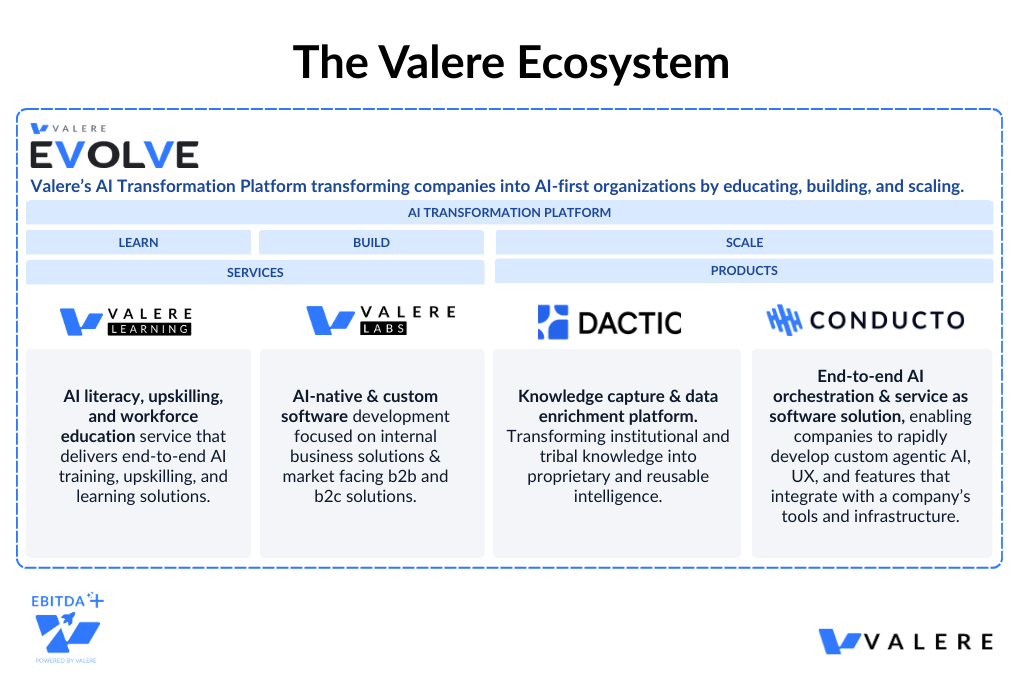

Valere Evolve is the platform that came out of that practical experience. It is not a suite of features assembled to address every possible use case. It is an integrated ecosystem built in response to the specific challenges that repeatedly surface when deploying AI at the operating company level: organizations that hold enormous reservoirs of institutional knowledge they cannot yet use, AI pilots that generate promising results but never scale, and intelligence systems that plateau rather than compound because no feedback loop connects outcomes back to the model.

Each component of the Evolve platform was developed to address one of those challenges directly. What follows is a closer look at each.

Valere Learning: Closing the Organizational Readiness Gap

One of the most consistent findings across Valere’s engagements is that technology readiness and organizational readiness are rarely at the same level. A company can have strong data infrastructure and a well-architected model and still fail to generate returns because the people working alongside that system do not know how to interrogate it, challenge it, or recognize when it is producing outputs that drift from operational reality.

This gap shows up in predictable ways. A finance leader who does not understand how a forecasting model weights historical inputs will default to overriding it rather than refining it. A customer success manager who cannot interpret an account health score will treat it as background noise rather than an actionable signal. In both cases, the value of the underlying AI system erodes not because the model is wrong but because the organization is not equipped to work with it effectively.

Valere Learning was built in response to that pattern. The approach is calibrated to meet employees where they actually are, from frontline operators to executive leadership, and focused on building the practical competency needed to work productively alongside AI systems: interpreting predictions, interrogating uncertainty, and recognizing when a simulation is drifting from the reality it was trained on. In a World Model environment, those are not advanced skills. They are the baseline of an AI-forward operating organization.

Valere Labs: Closing the Implementation and Customization Gap

Across engagements, one of the clearest predictors of whether an AI deployment compounds or plateaus is how deeply it is integrated with the specific operational reality of the business running it. Generic implementations trained on industry-wide data tend to produce generic intelligence. The model learns what is broadly true across thousands of companies rather than what is specifically true about this company, its customers, its competitive dynamics, and its decision history.

World Models are not off-the-shelf products, and the most consequential implementations have reflected that. Valere Labs handles the custom software development and managed services work that makes World Model concepts operationally real: simulation environments built around specific business contexts, data pipelines designed around the actual structure of a company’s information architecture, integration layers that connect model outputs to the workflows where decisions actually get made, and model infrastructure that evolves as the business evolves.

The most important aspect of this work, learned across multiple deployment cycles, is continuity. The organizations that see the greatest compounding effect are the ones that treat AI infrastructure as a living system requiring ongoing refinement rather than a project with a completion date. Valere Labs stays engaged as a long-term partner in that process, which is where the real appreciation in model value tends to occur.

Dactic: Closing the Knowledge Capture Gap

The single greatest bottleneck encountered in AI value creation across portfolio companies is not model capability. It is the gap between the intelligence that exists inside an organization and the intelligence that is actually available to train on.

Most operating companies are sitting on years of consequential decision history: bid outcomes, customer retention patterns, operational failures, pricing decisions, and the qualitative judgment calls made by experienced operators who have developed a calibrated intuition for the business. Almost none of it is machine-readable. It exists in the heads of senior operators, buried in unstructured documents, embedded in spreadsheet comments, or encoded in institutional habits that have never been formally documented.

A recurring observation is that when a key operator leaves a portfolio company, a meaningful portion of that company’s competitive intelligence leaves with them. Dactic was developed to address that problem systematically. It transforms unstructured organizational knowledge into the structured, high-quality training signal that World Models require. The result is that a company’s most valuable institutional intelligence becomes a durable portfolio asset rather than a retention risk, and models trained on that intelligence reflect the specific operational reality of the business rather than a generic approximation of its industry.

Conducto: Closing the Orchestration and Compounding Gap

Building a capable AI system is one challenge. Ensuring that intelligence actually flows to the right decision points, at the right time, with the right governance in place, and that outcomes feed back into the model to improve the next prediction, is an entirely different engineering problem. It is also the one most commonly underestimated at the outset of a deployment.

In practice, as AI deployments scale across business functions and portfolio companies, orchestration becomes the central constraint. Without it, predictions get generated but not acted on. Agent outputs operate without auditability. Feedback from real-world outcomes never routes back to the learning layer. The system produces intelligence that does not compound because nothing closes the loop.

Conducto addresses that constraint directly. It governs how predictions flow across decision points, how agent actions are tracked and auditable, and critically, how outcomes route back to Dactic so that every cycle of the model makes the next one more accurate. The relationship between Dactic and Conducto reflects something learned across multiple enterprise deployments: knowledge capture and orchestration are not independent capabilities. They are two halves of the same compounding mechanism. Dactic encodes what the organization knows. Conducto puts that knowledge to work at scale and ensures that what the organization learns continues to feed back into what it knows.

Three Dimensions of World Model Value in Practice

Looking across Valere’s deployments, three consistent dimensions of value creation emerge that map directly to operating priorities.

Dimension One: Simulation Replaces Costly Experimentation

In every domain where World Models are deployed, the economic mechanism is essentially the same: costly, slow, risky real-world experimentation gives way to cheaper, faster, lower-risk synthetic simulation. For portfolio companies, this logic expands the decision space and compresses the cost of being wrong. Organizations can test strategies, stress-test operating plans, and model the downstream consequences of capital allocation decisions at a scale that simply was not feasible when every test required real resources and real risk exposure.
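The mechanism of testing strategies in simulation can be illustrated with a small Monte Carlo sketch in Python. The dynamics here are invented for illustration only: the assumption that churn rises with a price increase, the noise levels, and the names `simulate_year` and `evaluate` are hypothetical and are not drawn from any engagement described in this article.

```python
import random
import statistics


def simulate_year(price_change: float, rng: random.Random) -> float:
    """One hypothetical future: an annual revenue index where the baseline is 100."""
    # Assumed dynamics: churn rises with the size of a price increase, plus noise.
    churn = rng.gauss(0.05 + 0.8 * max(price_change, 0.0), 0.02)
    churn = min(max(churn, 0.0), 1.0)
    market = rng.gauss(1.0, 0.05)  # assumed market-wide demand shock
    return 100.0 * (1 + price_change) * (1 - churn) * market


def evaluate(price_change: float, runs: int = 5000, seed: int = 7) -> dict:
    """Run many simulated futures and summarize the average and the downside case."""
    rng = random.Random(seed)  # fixed seed so the comparison is repeatable
    outcomes = sorted(simulate_year(price_change, rng) for _ in range(runs))
    return {
        "mean": statistics.fmean(outcomes),
        "p05": outcomes[int(0.05 * runs)],  # 5th percentile outcome
    }


for change in (0.0, 0.05, 0.10):
    print(f"price change {change:+.0%}: {evaluate(change)}")
```

The point of the sketch is the shape of the workflow: each candidate decision is scored across thousands of synthetic futures, including its downside tail, before any real resources are committed.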

Education Technology: Valere Labs applied this capability for an EdTech client rethinking how physical learning environments are designed. Physical classroom redesigns are expensive, disruptive, and difficult to fund through traditional approval processes. Using computer vision and mixed-reality technology, Valere built a platform that enables stakeholders to simulate layout changes before a single piece of furniture moves, inhabiting the proposed environment, testing assumptions, and refining decisions entirely in simulation. The result collapsed the cost and risk of physical experimentation while dramatically accelerating the approval cycle.

Professional Services Financial Planning: The same simulation logic was applied to financial planning for a professional services firm whose reliance on manual spreadsheet models made meaningful scenario planning nearly impossible to execute with confidence or speed. Custom machine learning models trained on the firm’s historical financial data created a predictive baseline, enabling on-demand scenario planning and continuous financial simulation. Budget cycles shortened by over 60%, and what was once a time-consuming annual exercise became a real-time capability that updates as conditions change. For a business, that is the difference between reacting to the year and steering through it.

Dimension Two: Behavior Replaces Appearance

The defining characteristic of a World Model is that it produces outputs that behave predictably under real-world interaction and not just outputs that look plausible at a glance. For businesses, this is the distinction between AI that generates convincing management reports and AI that reliably improves operating performance.

Annotation Quality and Compliance: In an engagement with a data annotation firm, teams were required to cross-check complex image and text annotations against a 50-page rulebook. Generative AI applied naively would pattern-match surface-level text and produce outputs that appeared compliant while violating underlying logic, an unacceptable risk profile in any regulated or quality-critical environment. Using AWS Bedrock, Valere Labs built an automated logic bridge mapping the full rulebook into precise JSON logic structures. The AI encodes the causal rules of the business explicitly and applies them consistently at scale, producing mathematically consistent quality scores and fully auditable decision trails.
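To make the idea of encoding rules as JSON logic structures concrete, here is a minimal Python sketch. It is not the implementation described above and makes no AWS Bedrock calls: the three rules, the rule schema (`id`, `field`, `op`, `value`), and the `check` function are hypothetical stand-ins for a much larger rulebook.

```python
import json

# Hypothetical rulebook fragment expressed as explicit, machine-checkable rules.
RULES_JSON = """
[
  {"id": "R1", "field": "label", "op": "in", "value": ["car", "truck", "bus"]},
  {"id": "R2", "field": "box_area", "op": "gte", "value": 100},
  {"id": "R3", "field": "occluded", "op": "eq", "value": false}
]
"""

# Each operator name maps to a plain comparison function.
OPS = {
    "in": lambda actual, expected: actual in expected,
    "gte": lambda actual, expected: actual >= expected,
    "eq": lambda actual, expected: actual == expected,
}


def check(annotation: dict, rules: list) -> dict:
    """Apply every rule to one annotation; return a score and an audit trail."""
    trail = []
    for rule in rules:
        passed = OPS[rule["op"]](annotation[rule["field"]], rule["value"])
        trail.append({"rule": rule["id"], "passed": passed})
    score = sum(entry["passed"] for entry in trail) / len(trail)
    return {"score": score, "trail": trail}


rules = json.loads(RULES_JSON)
result = check({"label": "car", "box_area": 64, "occluded": False}, rules)
print(round(result["score"], 2), [e for e in result["trail"] if not e["passed"]])
```

Because every rule is evaluated explicitly and recorded in the trail, the same annotation always receives the same score, and a reviewer can see exactly which rule failed.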

SaaS Customer Retention: In an engagement with a SaaS platform serving automotive dealers, the challenge was identifying customer risk before it became expensive churn, the kind of operating performance problem that quietly erodes portfolio EBITDA without triggering traditional early-warning indicators. Valere used Dactic to codify the qualitative early-warning signals that experienced Customer Success teams recognize as churn precursors but had never formalized. Conducto then combines that encoded knowledge with real-time account data to generate continuous account health scores, identifying at-risk accounts 30 to 60 days before traditional indicators surface and automatically prescribing intervention playbooks derived from previously successful retention outcomes. For a SaaS business, this translates directly into net revenue retention improvement, a metric that compounds meaningfully toward exit valuation.

Dimension Three: Intelligence Compounds Instead of Depreciating

This is the dimension most consequential to Private Equity value creation strategy. A static software system begins depreciating the day it ships. A well-architected AI system begins appreciating. Every cycle of prediction, action, outcome, and feedback makes the model more accurate, more specific to the organization running it, and harder for a competitor starting from a generic baseline to replicate.

Government Contracting and Bid Intelligence: In an engagement with a firm operating in government procurement, the client held a significant but largely inaccessible competitive asset: the deep institutional knowledge of senior sales executives and subject matter experts. That knowledge existed only in people’s heads, undocumented, unstructured, and impossible to scale. Valere deployed Dactic to extract and encode that knowledge into a structured intelligence baseline. Conducto agents now continuously monitor government procurement portals and surface win probability scores for new contract opportunities. When a sales leader makes a bid decision, that judgment feeds back into Dactic. Each human decision enriches the model. Each contract outcome sharpens the next prediction. Today’s human judgment literally becomes tomorrow’s AI intelligence, and no competitor starting from a generic model can replicate that compounding effect. For a PE sponsor, this is the architecture of a genuine competitive moat, one that grows more defensible with every quarter the hold period runs.

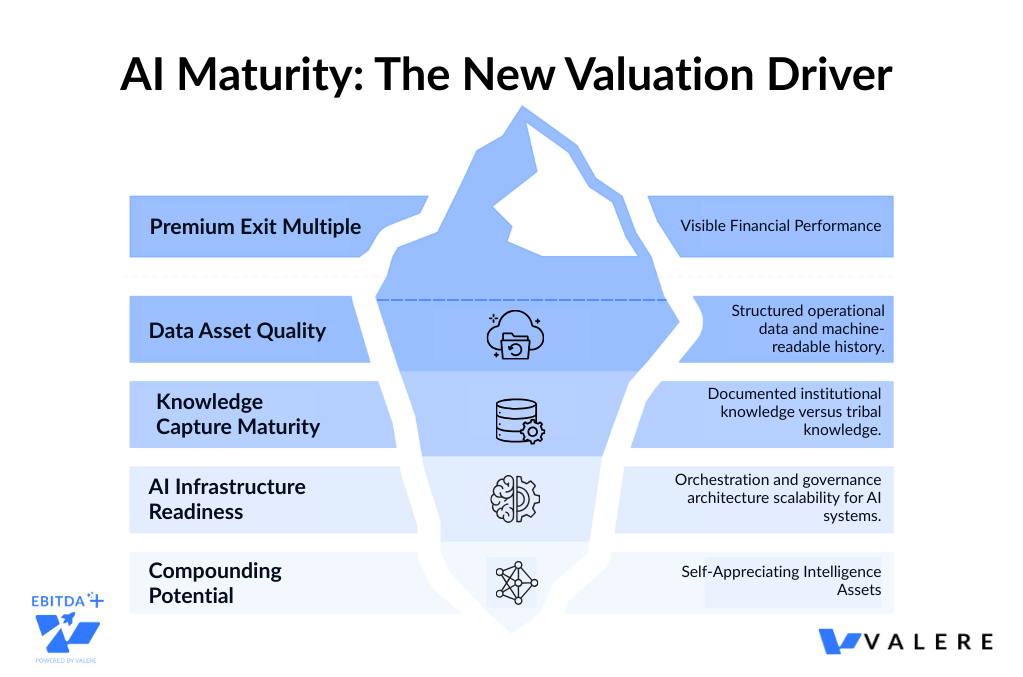

The Due Diligence Question: Evaluating AI Maturity in Acquisition Targets

World Models introduce a new and important dimension to how operating partners should assess acquisition targets and evaluate portfolio readiness for AI transformation.

Organizations that will command premium multiples going forward are those accumulating proprietary intelligence assets including structured data, decision histories, and operational knowledge bases that can serve as the training signal for World Models. Organizations with well-structured historical data and documented institutional knowledge are dramatically closer to meaningful AI value creation than organizations that have been operating with generic tools and unstructured processes.

This suggests several additions worth building into investment evaluation frameworks.

- Data asset quality: How structured is the target’s operational data? Is the decision history accessible and machine-readable?

- Knowledge capture maturity: How much institutional knowledge exists only in people’s heads versus documented, structured processes?

- AI infrastructure readiness: What orchestration and governance architecture exists, or would need to be built, to operate AI systems safely in this business?

- Compounding potential: What feedback loops exist between operational outcomes and the systems that inform future decisions?

A business that scores well on these dimensions is not just an AI-ready acquisition. It is a platform for compounding intelligence value throughout the hold period and a more defensible asset at exit.

Open Challenges: A Straight Assessment

World Models are powerful. They are also imperfect, and for institutional investors and operators deploying capital, those imperfections deserve honest acknowledgment.

Causal correctness versus pattern matching. A model can learn correlations that look right and still fundamentally misunderstand causes. In a business context, this means an AI system can appear to predict outcomes accurately under normal conditions, then fail precisely when something novel occurs such as an economic disruption, a new competitive entrant, or a regulatory change. Building systems that encode genuine causal understanding rather than sophisticated statistical mimicry requires structured knowledge capture and governed orchestration. This is a design challenge and not a theoretical limitation, and it is solvable with the right architecture.

Data bias and missing edge cases. World Models learn from what they see. If certain scenarios are rare in training data, the model will underperform exactly where the stakes are highest. A significant part of Dactic’s knowledge capture work involves ensuring that an organization’s rarest and most valuable institutional knowledge is represented in the training signal and not just the high-frequency routine that populates most enterprise data systems.

Governance and production risk. Regulated portfolio companies feel this most acutely. In healthcare, AI-generated patient communications introduce real compliance and patient safety risk. A hallucination is not an inconvenience. It is a liability event. In regulated industry engagements, Valere has engineered Human-in-the-Loop observability frameworks: portals where administrators review, modify, and test AI-generated outputs in a controlled sandbox before they reach an end user. Conducto’s orchestration architecture is built with this auditability at its core. Every decision path stays traceable. Every agent action remains governable. For operating partners managing compliance risk across a portfolio, this governance layer is not optional. It is foundational.

Conclusion: Build, Learn, Scale

The World Model era is not on the horizon. It is here. The sponsors and operating partners who build the infrastructure of compounding intelligence inside their portfolio companies now are constructing a durable advantage in performance and exit positioning that will be very difficult for late movers to match.

The client examples in this article are not isolated wins. They are points on a compounding curve, and the curve accelerates.

Sales leaders in government contracting make better bids because last quarter’s bid decisions are now encoded inside the model. Customer Success teams in SaaS retain accounts they would have lost because yesterday’s churn signals are now inside the prediction engine. Annotation quality holds at enterprise scale because the logic of a 50-page compliance rulebook is now inside the causal architecture. Professional services firms plan in hours what used to take weeks because their operational history is now inside the forecast model. Patient communications in healthcare are safer and faster because human clinical judgment is now inside the governance loop.

Not a single dashboard. Not a single deployment. A continuously improving intelligence layer that gets more specific, more accurate, and more valuable with every cycle it runs, compounding inside portfolio companies across every year of the hold period.

The organizations that will lead over the next decade of value creation are the ones building this infrastructure now. That is the real promise of World Models. And for PE sponsors and the operating leaders inside their portfolios, it is the most important infrastructure investment of this decade.

Ready to build AI that compounds in value over time?

Valere works with Private Equity sponsors and portfolio companies to design, build, and scale AI systems that deliver measurable outcomes. Whether you are evaluating AI maturity across existing assets, preparing a company for transformation, or assessing a new acquisition target, Valere brings the expertise, platform, and partnership model to turn World Model potential into portfolio performance.

- A World Model Readiness Assessment identifying where your current AI deployments lack the knowledge capture, causal architecture, and orchestration governance needed to move from pattern matching to genuine decision intelligence

- A clear path from disconnected AI pilots to a governed simulation and prediction layer that integrates with your existing operations, encodes your institutional knowledge, and compounds in accuracy with every cycle it runs

- A personalized value creation roadmap from isolated AI experiments to production-grade World Model infrastructure with human-in-the-loop governance, self-correcting feedback loops, and proprietary intelligence that no competitor can replicate by licensing the same tools

Start building your World Model architecture: valere.io

Frequently Asked Questions

How do World Models differ from the generative AI tools our companies are already using?

Standard generative AI tools predict what text or content should look like next, based on patterns in training data. World Models go further: they learn an internal representation of how an environment actually works, including cause-and-effect relationships, and use that to predict future states and evaluate decisions before they are made. In practice, this means the difference between AI that produces plausible outputs and AI that reliably drives operating outcomes. The distinction matters most in the domains PE operators care most about, including financial planning, customer retention, compliance, procurement, and competitive positioning.

What does a typical company implementation involve, and how long until it impacts performance?

Implementations vary by company size, data maturity, and use case. The most common starting point is knowledge capture, ensuring the institutional intelligence embedded in processes, decisions, and operational history is structured well enough to serve as a training signal. From there, the work involves custom model development, workflow integration, and governance design. Organizations with well-structured historical data and documented processes tend to reach meaningful performance results faster. The engagements described in this article, including 60%+ budget cycle compression and churn prediction 30 to 60 days ahead of traditional indicators, are representative of what mid-market companies achieve within a 12 to 18 month horizon.

How does AI transformation affect exit multiple conversations?

The most direct connection is competitive moat. A company that has built proprietary AI infrastructure, with models trained on its specific operational history, orchestrated at scale across business functions, and compounding with every cycle, is a categorically different asset from one that has licensed generic tools. Buyers at exit, whether strategic or financial, increasingly recognize the difference and price it accordingly. The secondary connection is operating performance: companies running World Model-driven retention, forecasting, and procurement systems for two or three years will typically show the kind of sustained EBITDA improvement and net revenue retention that expands multiples independently of the AI narrative.

What separates AI implementations that compound from ones that plateau?

The primary factor is whether feedback from real-world outcomes flows back into the model. A deployment that generates predictions, takes actions, and then discards results is a static tool. A deployment that captures the gap between what the model predicted and what actually happened, and routes that gap back as a training signal, improves continuously. Architecturally, this requires both a knowledge capture layer in Dactic and an orchestration layer in Conducto that routes outcomes back to the model. Without both, intelligence depreciates. With both, it appreciates every quarter it runs.
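The loop described in this answer (predict, observe the outcome, route the gap back) can be sketched in a few lines of Python. This is a toy under stated assumptions, not how Dactic or Conducto work internally: the `WinRateModel` class, its smoothed win-rate estimate, and the `federal-it` segment name are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class WinRateModel:
    """Toy model: per-segment win probability, smoothed so an unseen segment starts at 0.5."""
    wins: dict = field(default_factory=dict)
    total: dict = field(default_factory=dict)

    def predict(self, segment: str) -> float:
        """Estimate win probability from the outcomes recorded so far."""
        return (self.wins.get(segment, 0) + 1) / (self.total.get(segment, 0) + 2)

    def learn(self, segment: str, won: bool) -> float:
        """Record a real outcome and return the gap between it and the prior prediction."""
        gap = float(won) - self.predict(segment)
        self.wins[segment] = self.wins.get(segment, 0) + int(won)
        self.total[segment] = self.total.get(segment, 0) + 1
        return gap


model = WinRateModel()
before = model.predict("federal-it")
# Each real-world outcome is fed back, so the next prediction reflects it.
gaps = [model.learn("federal-it", won) for won in (True, True, False, True)]
after = model.predict("federal-it")
print(before, [round(g, 2) for g in gaps], round(after, 2))
```

A deployment that never calls something like `learn` keeps returning its starting estimate, which is the static-tool case described above; one that does call it shifts its predictions with every recorded outcome.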

How should we think about build versus buy for AI capabilities?

The answer depends on how much of the capability’s value derives from the company’s specific data and operational context. For capabilities requiring deep integration with proprietary data and institutional knowledge such as customer retention intelligence, bid prediction, and financial forecasting, custom development produces a more durable and defensible asset. The resulting model encodes a specific operational reality that no competitor can replicate by licensing the same tools. For capabilities that are largely domain-agnostic, off-the-shelf solutions are often faster and more cost-effective. The higher the dependence on proprietary data and context, the stronger the case for custom development and the greater the moat it creates.