By: Alex Turgeon, President at Valere

TL;DR: 3 Key Takeaways

- Professional services spend runs at roughly six times total software spend across a typical company, and AI-native firms are increasingly delivering those outcomes directly, which makes the opportunity an order of magnitude larger than what most current AI investment theses are priced against.

- The ratio of intelligence work to judgement work in any given function is the most reliable diagnostic for AI readiness, and the boundary between the two shifts as AI systems accumulate domain-specific outcome data over time.

- The difference between a copilot and an autopilot is not a product detail but a thesis about value capture, as every improvement in the underlying AI models makes an autopilot service faster and cheaper while exposing a copilot vendor to existential commoditisation risk in a single release cycle.

Most investors and operating partners are asking the wrong question about AI. The debate over whether AI will displace professional service workflows is largely settled. The questions worth working through now are sequence, timeline, and which companies in your portfolio are exposed versus positioned. Those are not the same conversation.

What makes this moment different from prior technology cycles is the size of the market being disrupted. For three decades, enterprise technology investment followed a familiar pattern: back the software layer, collect the multiple. SaaS refined that model into something clean and predictable. But professional services spend runs at roughly six times total software spend across a typical company. That is the market AI is beginning to move into, and it is an order of magnitude larger than what most current AI investment theses are priced against. We have seen this play out directly across engagements with PE-backed companies at various stages of AI readiness, and the gap between what investors expect to be addressable and what is actually on the table is consistently larger than anticipated. Getting the sequencing wrong, or conflating the exposed companies with the positioned ones, is where a significant amount of capital is going to get misallocated over the next few years.

Why Most AI Spending Isn’t Working

Before mapping the opportunity, it is worth being honest about why most AI spending is not working, because the failure tends to be architectural rather than technical.

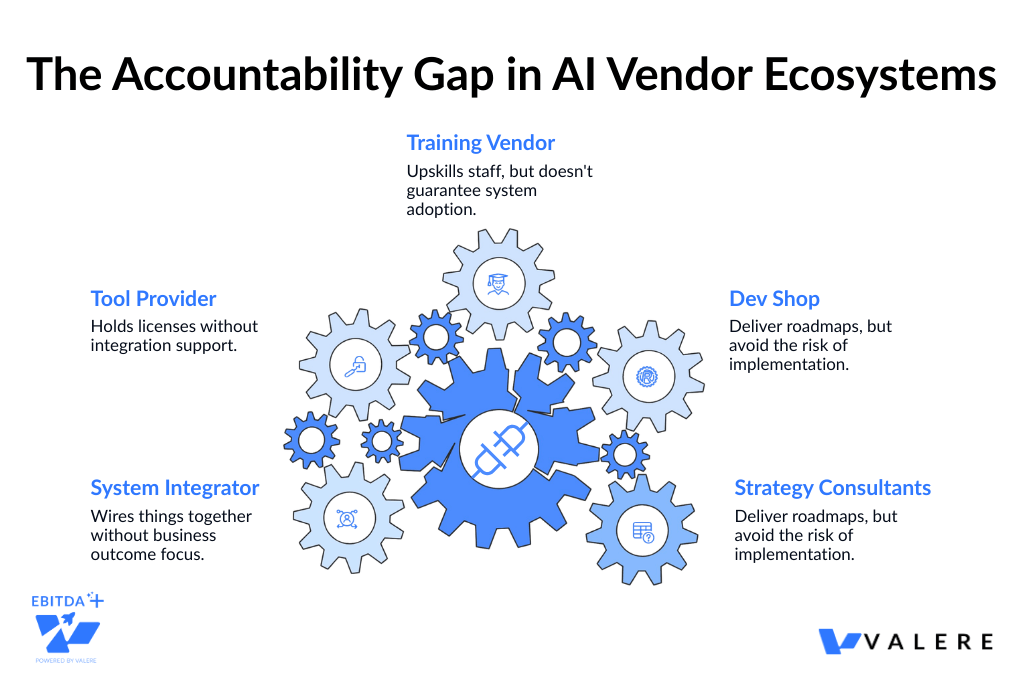

Research from McKinsey and various industry analyses consistently finds that the majority of AI initiatives stall between pilot and production, with a very small share ever generating measurable ROI. In nearly every case, the root cause is not a technology problem. It is an accountability problem baked into how the market is structured. Companies do not fail at AI because they lack resources or ambition. They fail because the vendor ecosystem distributes responsibility across so many parties that no single one is truly accountable for the result. Strategy consultants produce the roadmap. A dev shop builds the system. A training vendor runs the courses. A tool provider holds the licence. A system integrator wires it together. The client ends up coordinating all of them, absorbing exactly the burden they were paying others to carry.

This pattern has played out in engagements we have worked on directly, including one where a client had spent north of $400,000 across four vendors over eighteen months, with a pilot that still had not reached production. The work was technically sound at each layer. Nobody had failed their individual scope. But the actual business result belonged to no one, which is functionally the same as failing.

The tool-first model creates a specific additional problem. Any AI product built on a foundation model is one update cycle away from having its differentiation erased. The tool that improved team productivity by 20% gets replaced by a native feature in the next platform release. Tool vendors are in a race where their moat narrows with every product update, and their customers are running that same race alongside them without always realising it.

There is a second failure mode that sits underneath all of this and gets discussed less often. Models trained on public data can process complexity at scale. What they cannot do is replicate the accumulated judgement inside a specific organisation, including the instincts, the pattern recognition, and the unwritten rules that actually drive decisions in a particular business. That gap between general AI capability and organisation-specific intelligence is where most deployments underdeliver, including ones that technically reach production.

This shapes how we think about every engagement. Deploying AI into a company is not primarily a technology problem. It is a knowledge capture problem. The real question is how to extract the tacit knowledge your best people carry and encode it in a form that AI systems can actually use. When we worked with a government contracting firm, this distinction became very concrete. The agents we built could monitor procurement portals, aggregate contract data, and score opportunities mathematically. But the scoring logic that made those outputs genuinely useful came from structured extraction of how their most effective business development people actually evaluated opportunities. Without that layer, the system would have been accurate in a general sense and wrong in the ways that mattered commercially. It would have looked like it was working while quietly underperforming.

That is the problem most vendor ecosystems are simply not designed to solve.

The Market Nobody Is Talking About

Look at any company and you will find the same basic pattern. A business might spend $10,000 a year on accounting software and $120,000 a year on the team doing the accounting, a twelve-to-one gap in that single function. Across professional services categories, total services spend runs at roughly six times total software spend. That services budget, not the software budget, is the market AI is beginning to move into.

The distinction that makes this concrete is the difference between a copilot model and an autopilot model. A copilot sells the tool. The professional still does the work, still holds responsibility, and the vendor competes for the software budget. An autopilot delivers the outcome. The end buyer gets the result delivered, the professional intermediary is removed from the equation, and the vendor competes for the services budget. One of those markets is six times bigger than the other.

AI-native services firms are increasingly delivering outcomes directly, with no software licence and no headcount to manage. Companies paying for professional service outcomes across accounting, legal, insurance, compliance, IT, procurement, and recruiting are sitting on cost structures that AI-native providers are beginning to target directly. That is simultaneously an EBITDA opportunity and a meaningful exposure depending on which side of the equation your portfolio sits on.

Every improvement in the underlying AI models makes an autopilot service faster, cheaper, and more capable. For a copilot vendor, the same improvement is an existential threat. That asymmetry matters enormously for how you evaluate AI vendors and how you think about your companies’ longer-term positioning.

The Intelligence vs. Judgement Distinction

The most practically useful diagnostic for evaluating AI readiness in any function is understanding where intelligence ends and judgement begins.

Intelligence work follows rules, however complex those rules might be. Contract drafting, medical coding, compliance review, financial modelling, accounts payable, IT helpdesk triage. The domain knowledge is dense, but it is ultimately learnable and executable by AI today, consistently and at a cost curve that only goes down. Judgement work is different in kind, not just degree. Knowing which company needs attention before the numbers show it. Sensing when a management team is masking problems. Making a directional call under genuine uncertainty with incomplete information. That work is built on years of pattern recognition that AI does not yet handle reliably.

The ratio of intelligence to judgement in any function is what determines how quickly an AI system can take full ownership of that domain. A function that is 80% intelligence work is immediately addressable. A function that is 80% judgement work needs a longer runway. Knowing the difference before deploying capital saves a significant amount of wasted effort and, more importantly, prevents the kind of over-engineered pilots that stall out and erode internal confidence in AI broadly.
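The ratio described above can be made concrete with a rough scoring pass over a function inventory. The following is a minimal sketch, not a method taken from any engagement described here: the `FunctionProfile` record, the hour estimates, and the "mixed" middle category are all illustrative assumptions, and the 0.8 threshold simply mirrors the 80% figures in the text.

```python
from dataclasses import dataclass


@dataclass
class FunctionProfile:
    """Hypothetical record: estimated annual hours by work type for one function."""
    name: str
    intelligence_hours: float  # rules-based work, however complex the rules
    judgement_hours: float     # calls made under genuine uncertainty

    def share(self, hours: float) -> float:
        """Return `hours` as a fraction of the function's total hours."""
        total = self.intelligence_hours + self.judgement_hours
        return hours / total if total else 0.0


def readiness(profile: FunctionProfile, threshold: float = 0.8) -> str:
    """Classify a function by its dominant work type.

    The threshold mirrors the 80% examples in the text; the "mixed"
    label is an assumption added for functions that meet neither bar.
    """
    if profile.share(profile.intelligence_hours) >= threshold:
        return "immediately addressable"
    if profile.share(profile.judgement_hours) >= threshold:
        return "longer runway"
    return "mixed"


# Illustrative inputs only; the hour estimates are invented for the example.
for fn in (
    FunctionProfile("accounts payable", 800, 200),
    FunctionProfile("portfolio triage", 200, 800),
    FunctionProfile("vendor negotiation", 500, 500),
):
    print(f"{fn.name}: {readiness(fn)}")
```

The hard part in practice is the input rather than the arithmetic: someone has to estimate how a function's hours actually split, and that estimate is itself a judgement call.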

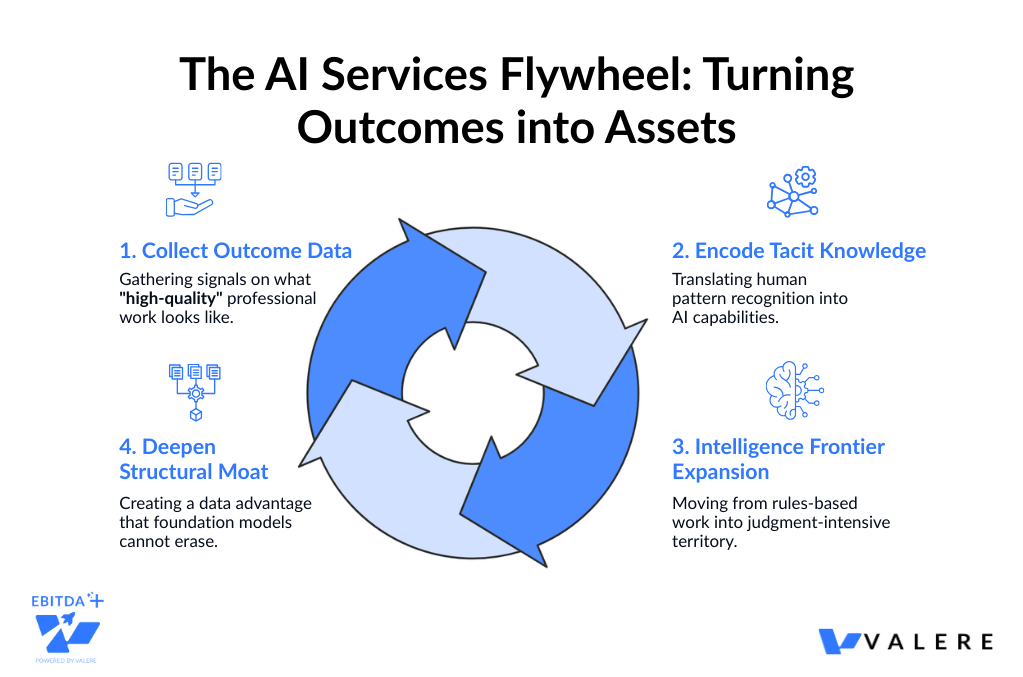

What we have observed repeatedly is that the intelligence-judgement boundary is not fixed. As AI systems accumulate domain-specific outcome data, work that genuinely required experienced professional judgement two years ago increasingly presents as a learnable pattern today. The firms and companies that establish AI positions early, even in narrow starting use cases, are building the data assets that allow them to expand into progressively more complex territory over time. Starting position determines not just the immediately addressable opportunity but the trajectory of compounding advantage.

The Model Distinction That Drives the Multiple

A copilot augments a professional’s workflow, the professional retains responsibility, and the revenue model competes for software budgets. The strategic risk is straightforward: a new foundation model release can commoditise a copilot’s differentiation in a single cycle, and there is no structural protection against it.

An autopilot delivers the outcome directly to the end buyer, removes the professional intermediary, and competes for the services budget. Every improvement in the underlying model makes the service faster and cheaper rather than more exposed. The risk profile is fundamentally different.

For companies, buying copilot tools requires skilled internal operators to extract value. Buying autopilot services converts a fixed cost into a variable, outcome-priced line item that scales without additional headcount. Beyond the operational difference, there is a data accumulation difference that compounds over time. Copilots generate usage data and largely leave it with the customer. Autopilots retain outcome data, meaning real signal about what correct, high-quality professional work looks like at scale across thousands of actual engagements. That outcome data trains better models, allows the system to expand into increasingly judgement-intensive territory over time, and constitutes a moat that deepens with every engagement. The firm that owns the outcome data in a given domain is continuously narrowing the gap between what AI can handle autonomously and what still requires human oversight.

This is the compounding dynamic that matters most from an investment standpoint. Today’s judgement becomes tomorrow’s intelligence, and the firms with the most relevant outcome data get there faster.

The practical first screen for operating partners: the fastest EBITDA-positive AI deployments will almost always start with functions where work is already flowing externally. Replacing an outsourcing contract with an AI-native provider is a vendor swap that happens in a procurement conversation. Replacing internal headcount is a restructuring that happens over quarters and requires a very different kind of organisational will.

The Opportunity Map: Near-Term Addressable Spend

Insurance Brokerage: $140 to $200 Billion

The largest near-term opportunity. Standard commercial lines brokerage covers policy comparison, coverage terms analysis, and carrier form completion. It is structurally intelligence-heavy work with no proprietary strategic insight being applied, just structured comparison and process execution. For companies with meaningful insurance spend, autopilot placement services represent direct procurement substitution with minimal change management. The incumbent broker relationship is the primary friction, and the vendor swap logic applies cleanly.

Accounting and Audit: $50 to $80 Billion Outsourced in the US

The accounting profession is facing a structural talent problem that is accelerating AI adoption faster than almost any other sector. AICPA pipeline research documents the loss of roughly 340,000 accountants from the US workforce over approximately five years, with a disproportionate share of remaining licensed CPAs approaching retirement. This is not a cyclical staffing shortage. It is a structural supply-demand imbalance that is simultaneously driving up costs and degrading service quality from traditional providers.

The entire close cycle, covering journal entries, reconciliations, variance analysis, and reporting, is intelligence work. Complex and jurisdiction-specific, but fundamentally rule-based. Across finance teams we have worked with, the pattern is consistent: the rules governing a close cycle are well-documented. The bottleneck is not complexity. It is the human bandwidth required to apply those rules consistently across high transaction volumes every period. The talent shortage makes the internal case for substitution considerably easier than it would be in a stable-talent environment.

Healthcare Revenue Cycle Management: $50 to $80 Billion Outsourced in the US

Healthcare carries an unearned reputation for being impenetrable to AI because clinical work is so judgement-intensive. That reputation obscures a significant adjacent opportunity in the administrative layer. Medical coding is rules-based work of real complexity, but the codes are standardised, the mapping rules are documented, and the exceptions are enumerable. Complexity and judgement are not the same thing. RCM outsourcing is already the dominant procurement model for PE healthcare holdings, meaning AI substitution is a vendor swap against an existing outcome-based contract rather than a new category of spend. Healthcare companies carrying RCM outsourcing contracts have a realistic 12 to 24 month window before this becomes a more crowded and competitively urgent conversation.

Claims Adjusting: $50 to $80 Billion Including TPAs

On the other side of the insurance policy sits an equally large opportunity. Standard-line claims across property, auto, and straightforward liability are settled by applying policy language to damage schedules and actuarial tables, which is dense rule-based work rather than strategic judgement. Workforce dynamics here mirror accounting: the profession is ageing out and replacement pipelines are thin. In building AI systems to evaluate large volumes of outsourced work against detailed structured rulebooks, we have observed a consistent pattern. Rule-based work executes more consistently at scale through AI than through human review, not because humans make more individual errors, but because consistency degrades at volume in ways that AI does not experience. Insurance companies are in a relatively unusual position of being able to evaluate AI deployment on both the placement and claims sides simultaneously, each with a clean outsourcing substitution wedge.

Tax Advisory: $30 to $35 Billion

CPA licensing creates a regulatory moat around tax advisory, but the requirement applies to the sign-off, not the underlying analytical work. Research, compliance preparation, cross-jurisdictional reconciliation, filing, and optimisation modelling do not require a licensed professional to execute. In building a tax intelligence platform for a client, we integrated directly with their financial tools to analyse data, identify optimisation opportunities, and track regulatory changes across jurisdictions. What became apparent was where CPA time was actually going. The bulk of it was intelligence work: pulling data, cross-referencing rules, modelling scenarios. The genuine advisory judgement was a relatively small proportion of total hours. Automating the rules-based analysis layer while preserving CPA involvement for advisory decisions and client relationship management lets firms scale capacity meaningfully, even against structural talent shortages. The licensed sign-off layer stays human throughout.

Transactional Legal Work: $20 to $25 Billion

Contract drafting, NDAs, regulatory filings, and standard commercial agreements exist in virtually every company’s cost structure and are routinely sent to outside counsel at rates that are hard to justify for work that is largely formulaic. One characteristic accelerating adoption here is that quality is independently verifiable, meaning well-drafted transactional documents can be reviewed with reasonable confidence by in-house legal without a full external counsel engagement. The opportunity to standardise these workflows across an entire portfolio is particularly compelling at the GP level, where the same document types recur constantly across holdings. When work is defined enough to be outsourced, it is generally defined enough to be automated.

IT Managed Services: $100 Billion and Above

Every company outsources some portion of IT operations, covering server patching, system monitoring, user provisioning, help desk triage, and security alert response. This is intelligence work executed on near-identical processes across thousands of similar business environments. When we worked with a client to rebuild an AI-driven IT licensing help desk, investing in model fine-tuning and prompt optimisation improved response accuracy by 50 percent per the client’s internal benchmarking, which was enough to displace fragmented help desk arrangements at large enterprise customers. What that engagement reinforced is how often the real barrier to AI adoption in managed services is not technical capability. It is whether the AI can handle the long tail of edge cases reliably enough that clients trust it without a human reviewing every interaction. Getting that threshold right is where the work actually lives. IT managed services spend is among the most universal cost lines across a PE portfolio, and fragmentation in existing MSP markets creates favourable conditions for substitution.

Supply Chain and Procurement: $200 Billion and Above

Procurement presents a strategically distinctive entry point. The wedge does not require displacing an incumbent or winning an existing budget. It requires recovering money that is currently being lost. Most enterprises actively manage only the top tier of their supplier base. The long tail receives minimal negotiation or contract management because deploying human resources at that scale is uneconomical, resulting in systematic contract leakage running at 2 to 5 percent of total procurement spend. An AI service that recovers this leakage represents found EBITDA from the company’s perspective.
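To put the leakage figure in concrete terms, the arithmetic is simple enough to sketch. The function name and the $50 million spend figure below are hypothetical, chosen only for illustration; the 2 and 5 percent bounds are the ones quoted above.

```python
def leakage_range(
    procurement_spend: float, low_pct: float = 2, high_pct: float = 5
) -> tuple[float, float]:
    """Return the (low, high) estimate of annual contract leakage.

    Percentages default to the 2 to 5 percent range cited in the text.
    """
    return procurement_spend * low_pct / 100, procurement_spend * high_pct / 100


# Hypothetical company with $50M in annual procurement spend.
low, high = leakage_range(50_000_000)
print(f"Estimated annual leakage: ${low:,.0f} to ${high:,.0f}")
# prints "Estimated annual leakage: $1,000,000 to $2,500,000"
```

How much of that range a service actually recovers is a separate question that this sketch does not model.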

We built this architecture in a government contracting context, where AI agents continuously monitored procurement portals, aggregated contract budgets, and scored win probability for each opportunity. Human executives handled final bid and no-bid decisions, covering the layer requiring relationship awareness and strategic context. What became apparent was how much senior human judgement was being consumed by research and pattern matching that could be handled algorithmically. Freeing that capacity had a measurable impact on decision quality, not just on throughput. Tail-spend recovery requires no internal restructuring, which makes it the lowest-friction AI value creation play for most companies.

Recruitment and Staffing: $200 Billion and Above

Recruiting maps cleanly onto the intelligence-judgement spectrum. The top of the funnel, covering sourcing, resume screening, initial outreach, and skills matching, is intelligence work that can be automated today with high reliability. The lower funnel, including closing strong candidates, assessing cultural fit, and navigating interpersonal dynamics, remains judgement-dependent. The practical entry point is high-volume, lower-judgement hiring where speed is the primary differentiator. For companies growing through acquisition or organic expansion, recruiting velocity is often a direct constraint on value creation plan execution, which makes this a lever with implications well beyond the HR budget line.

Management Consulting: $300 to $400 Billion

Consulting is the largest total spend on this map and simultaneously the most judgement-intensive category. Strategic recommendations, stakeholder alignment, and navigating organisational complexity remain deeply human activities, and nothing on the near-term horizon changes that in any meaningful way. The more interesting question for PE is whether consulting engagements can be disaggregated. Most projects contain both intelligence components, such as data gathering, market sizing, benchmarking, and financial modelling, and judgement components covering interpretation, recommendation, and execution support. AI that handles the intelligence layer systematically changes the effective cost and delivery speed of consulting work, even when the judgement layer stays entirely human.

The government contracting engagement referenced above demonstrates this in practice. AI agents handled continuous monitoring, data aggregation, mathematical scoring, and pipeline generation. Human executives handled strategic positioning, relationship navigation, and final decisions. The system did not try to replace strategic judgement. It eliminated the intelligence work that was consuming the hours those humans should have spent on judgement. For operating partners deploying consulting resources across companies, that disaggregation is a real and near-term cost reduction lever. Consulting budgets are worth evaluating through that lens: the portion attributable to intelligence-layer work is increasingly addressable, even if the advisory layer is not.

Start With What Is Already Outsourced

One of the most consistent observations from our engagements is that the fastest path to EBITDA-positive AI deployment is almost always through functions where work is already flowing externally.

When a function is outsourced, three things are already true: the organisation has accepted that the work happens outside, a substitutable budget line exists, and success is defined by an outcome rather than effort. Replacing an outsourcing contract with an AI-native provider is a vendor substitution that happens in a procurement conversation. Replacing internal headcount with AI is a reorganisation that happens over quarters and requires a very different kind of organisational will. For operating partners looking for meaningful near-term wins across a portfolio, that is the first screen. Find the outsourced, intelligence-heavy functions. That is where the ROI timeline is shortest and the friction is lowest.

What Accountability Actually Looks Like in Practice

The companies that achieve real AI ROI share one structural characteristic: someone owns the outcome end to end, without handoffs.

Watching the fragmented model fail across engagements led to a fairly fundamental shift in how we structure our work. Treating workforce readiness, custom development, and AI orchestration as separate service lines that clients coordinate between is precisely the model that produces the $400,000, eighteen-month pilots that never reach production. When those workstreams are integrated and accountability for the result sits in one place from the first assessment through autonomous execution, the failure mode largely disappears because there is nowhere for responsibility to fall between the cracks.

In practice this means the teams we train are trained on the systems we build. The systems we build are designed around business outcomes rather than technical specifications. And the organisational knowledge that typically walks out the door when a vendor engagement ends gets captured and embedded into the systems themselves, so the intelligence compounds over time rather than resetting with each engagement.

Framing AI adoption as a data strategy rather than purely a cost reduction play is the difference between building enterprise value that survives the next model release and getting a one-time efficiency improvement that fades within eighteen months. The professional services budget, the six-times-larger market, is what AI autopilot providers are competing for. The copilot versus autopilot distinction is not a product detail. It is a thesis about which layer of the market captures durable value as AI capability continues to improve. The companies that come out ahead will not necessarily be the ones with the best technology. They will be the ones that took clear ownership of the work and built the data assets to keep getting better at it.

Stop Buying Tools. Start Buying Outcomes

The companies that generate measurable value from AI in the next 24 months will not be the ones with the most tools. They will be the ones with a single accountable partner who owns the outcome from assessment through production. Valere works with private equity firms and their portfolio companies to deliver those outcomes directly, starting with the functions where the ROI timeline is shortest and the organisational friction is lowest. Here is what that looks like in practice:

- Identification of the outsourced, intelligence-heavy functions across your portfolio where AI substitution is a procurement decision rather than a restructuring, with a clear timeline and outcome metric attached to each

- End-to-end ownership of deployment across strategy, development, and workforce readiness, so the business result belongs to one accountable party rather than falling between vendors

- Compounding data assets embedded into the systems themselves, so the intelligence your organisation has built does not walk out the door at the end of an engagement but continues to improve with every production cycle

If you are a PE firm or portfolio company ready to move from pilot to production, we would like to show you what that path looks like.

Start the conversation: valere.io

Frequently Asked Questions

How do PE-backed mid-market companies typically approach AI implementation?

Most mid-market companies start with a function that is already outsourced, because that is where the ROI math is simplest and the organisational friction is lowest. A vendor substitution requires a procurement decision. A headcount reduction requires a restructuring. For companies without large internal AI teams, starting with an outsourced function, whether that is accounting, RCM, or IT managed services, tends to produce the fastest measurable returns. AICPA and Gartner data both point to the same pattern: successful deployments tend to be narrow in scope initially and expand from a position of demonstrated ROI rather than projected potential.

What does an AI readiness assessment typically include?

A readiness assessment generally covers three things: an audit of where intelligence work versus judgement work sits across key functions, a review of how well the organisation’s tacit knowledge is documented and accessible, and a gap analysis of the data infrastructure needed to support AI at scale. The distinction between intelligence and judgement work is the most practically useful output. Functions that are predominantly rules-based can often be addressed within 6 to 12 months. Functions that depend heavily on accumulated human judgement require longer timelines and a different sequencing approach.

What are the main reasons AI pilots fail to reach production?

Based on patterns across many engagements, the most common failure mode is not technical. It is accountability fragmentation. When strategy, development, training, and tooling are spread across separate vendors, no single party owns the outcome. Each vendor performs against their individual scope, but the business result falls through the cracks between them. The second most common failure is deploying general-purpose AI capability without first capturing the organisation-specific knowledge that makes the outputs actually useful. A system that is accurate in a general sense but wrong in the ways that matter commercially will look like it is working while quietly underperforming.

How do you evaluate whether an AI consulting partner is the right fit?

The most useful filter is accountability structure. Does the partner own the outcome end to end, or do they hand off to other vendors at key stages? Partners who integrate strategy, development, and training within a single engagement structure tend to produce more durable results, because there is no ambiguity about who is responsible when something does not perform. It is also worth asking specifically how they handle the knowledge capture problem, meaning how they extract the tacit knowledge inside your organisation and encode it into the systems they build. That layer is what separates a technically sound deployment from one that actually performs in your specific operating context.

When does it make sense to build internal AI capability versus buying an outcome-based service?

The honest answer depends on the function. For intelligence-heavy work that is already outsourced, buying an outcome-based service is almost always faster and cheaper than building internal capability from scratch. The outsourcing substitution logic applies cleanly. For functions where the work is deeply embedded in proprietary processes or where competitive differentiation depends on how that work is done, building internal capability tends to make more strategic sense over time, even if the initial cost is higher. The practical question is whether the function is one where your organisation’s specific approach to the work is a source of competitive advantage. If it is not, buying the outcome is generally the right call.