By: Alex Turgeon, President at Valere

TL;DR: 3 Key Takeaways

- Standalone AI agents like Claude Code and OpenClaw excel at narrow tasks but lack the governance, audit trails, and security architecture that regulated enterprises require to deploy AI responsibly.

- Orchestration platforms don’t compete with AI models; they make them enterprise-ready by providing PII masking, human-in-the-loop escalation, legacy system integration, and centralized compliance in a single governed framework.

- Organizations that invest in an orchestration layer now are building compounding AI Capital, while those relying on ungoverned tools are accumulating compounding risk.

Every week, a new AI tool promises to hand autonomous power to individual users. Claude Code lets developers execute complex tasks from a terminal. OpenClaw controls email, calendars, and home appliances. They’re fast, impressive, and genuinely fun to demo.

But for anyone serious about creating durable AI value inside an enterprise, they are architecturally insufficient on their own.

The real question facing AI leaders in 2026 isn’t whether to adopt AI. It’s who is conducting the orchestra. The difference between organizations that unlock compounding AI value and those that stumble into regulatory or operational chaos comes down to one architectural decision: do you build on an orchestration layer, or do you let a thousand ungoverned agents proliferate across your organization?

This article breaks down that decision and explains why the orchestration layer isn’t just an IT concern. It is the primary strategic factor determining whether your AI investments create lasting value or lasting liability.

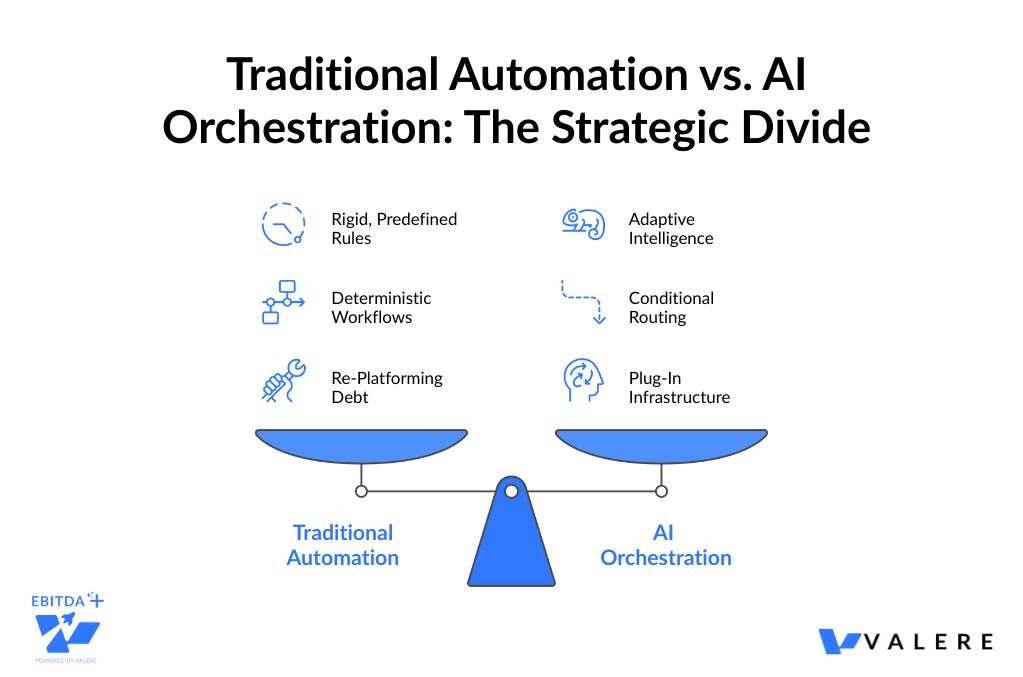

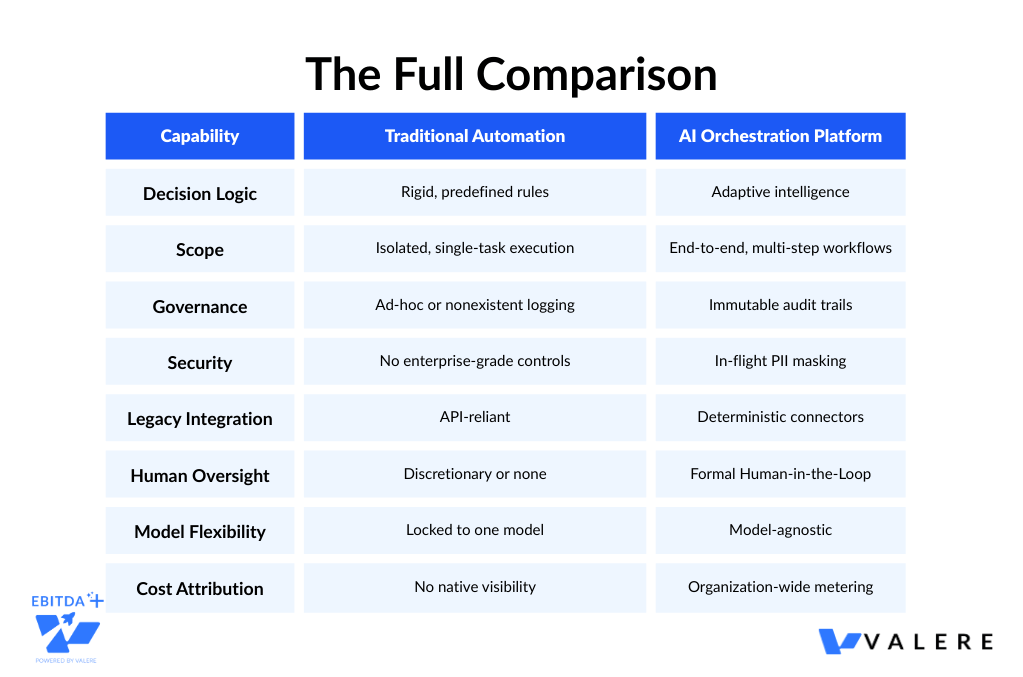

Traditional Automation vs. AI Orchestration: Why the Distinction Matters

Before examining where ungoverned AI agents fall short, it’s worth clarifying something that gets collapsed in vendor marketing constantly: the difference between traditional automation and AI orchestration.

Traditional automation follows rigid, predefined rules to move data between systems. It executes deterministic workflows and cannot adapt without manual reprogramming. A traditional RPA bot runs the same steps in the same order every time, regardless of context.

AI orchestration is fundamentally different. It uses adaptive intelligence to coordinate multiple AI agents, models, and real-time decisions across many workflows and systems at once. Rather than simply routing data, the orchestration layer reasons about it: routing conditionally, escalating when confidence is low, and continuously improving its own decision-making over time.

This distinction has real implications for AI value creation. Organizations that mistake traditional automation for AI orchestration are building on a foundation that will require complete rearchitecting as AI capabilities advance. Organizations that build a true AI orchestration layer are building infrastructure that turns every future model upgrade into a plug-in operation rather than a replatforming project.

The Conductor Analogy

Think of individual AI models as musical instruments. Each one is impressive in isolation, but without coordination they produce noise, not music. A conductor’s value isn’t in playing an instrument better than any musician. It’s in ensuring every instrument produces the right sound at the right moment, and that the ensemble can absorb a new, superior instrument without rewriting the entire score.

That’s what AI orchestration does. The orchestration layer is the conductor. The AI models (Claude, GPT-4, Gemini, or whatever frontier model arrives next) are the instruments. The primary differentiator between enterprises that thrive and those that get disrupted is not which model they’re using. It’s whether they’ve built a conductor.

Orchestration is not a feature. It is the foundational strategic architecture that manages multiple models and data sources as components, rather than trying to be a single smarter model. In a high-performing enterprise, you need to be able to plug in a new, superior AI model without rebuilding the entire system. That capability only exists in the orchestration layer.

Organizations that build this layer gain four compounding advantages:

- Model-agnosticism: Swap AI providers without rearchitecting workflows

- Governance at scale: Enforce consistent policies and audit standards across every AI interaction

- Compounding value: Every human decision and feedback loop makes the entire system more capable over time

- Risk containment: Centralized oversight means a model hallucination can’t propagate undetected across the organization
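To make the model-agnosticism point concrete, here is a minimal sketch of a workflow that depends only on an orchestrator interface, never on a specific provider. Everything in it is an assumption for illustration: `provider_a` and `provider_b` are hypothetical stand-ins for real vendor SDK calls, and `Orchestrator` is not any particular product's API.

```python
from dataclasses import dataclass
from typing import Callable, Dict


# Hypothetical stand-ins for provider calls; a real adapter would wrap a vendor client.
def provider_a(prompt: str) -> str:
    return f"[provider-a] {prompt}"


def provider_b(prompt: str) -> str:
    return f"[provider-b] {prompt}"


@dataclass
class Orchestrator:
    """Holds a registry of models and routes every request to the active one."""
    models: Dict[str, Callable[[str], str]]
    active: str

    def swap(self, name: str) -> None:
        # Changing providers is a registry lookup, not a workflow rewrite.
        if name not in self.models:
            raise KeyError(f"unknown model: {name}")
        self.active = name

    def run(self, prompt: str) -> str:
        return self.models[self.active](prompt)


def summarize_ticket(orchestrator: Orchestrator, ticket_text: str) -> str:
    # The workflow never names a provider, so it survives a model swap unchanged.
    return orchestrator.run(f"Summarize: {ticket_text}")
```

The design choice worth noticing is that `summarize_ticket` has no knowledge of which model answers it. That is the property that turns a model upgrade into a configuration change.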

Where Standalone AI Agents Fall Short

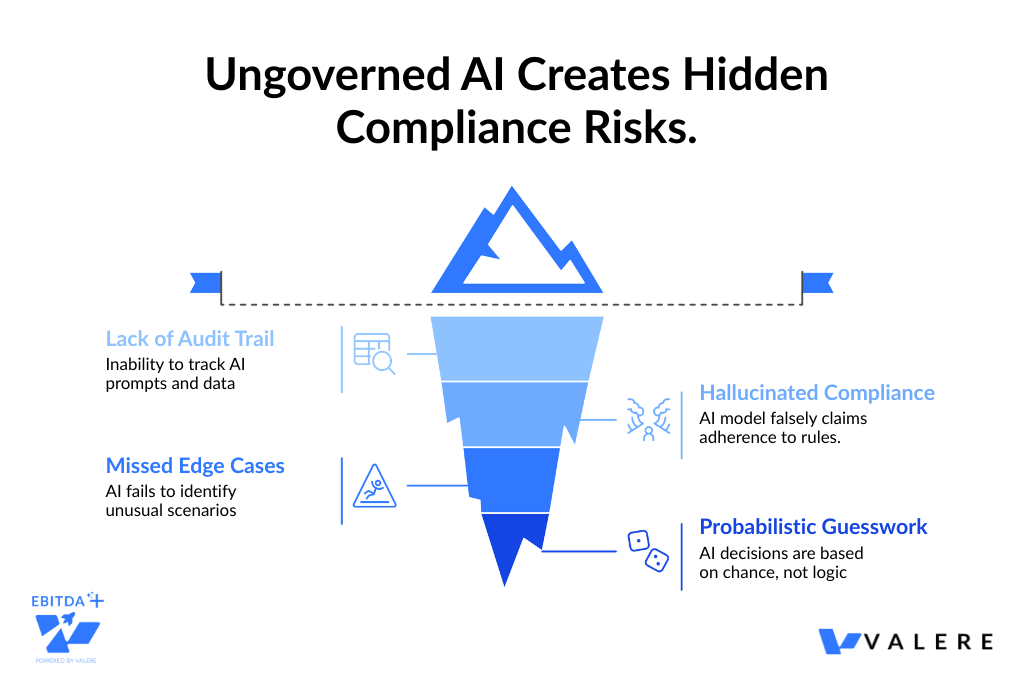

The Governance Gap

When a developer runs Claude Code locally, or an employee uses an ungoverned AI assistant for sensitive tasks, the enterprise has no way to audit what prompts were submitted, what data was exposed, or what decisions were made. In regulated industries, every AI-driven decision has to withstand scrutiny. A tool without centralized logging isn’t just inconvenient. It’s a compliance liability that accumulates silently until it surfaces in an audit or an incident.

We’ve seen this directly. One data annotation company was using unmanaged AI to review high-stakes annotations against a 50-page rulebook. The model hallucinated compliance and missed edge cases regularly, with no audit trail to identify when or why. That kind of probabilistic guesswork masquerading as a governed process is exactly what creates downstream risk. The fix was replacing that setup with a logic bridge that mapped human guidelines into precise JSON logic, backed by a full audit trail and a golden dataset. The result was mathematically consistent quality scores instead of a coin flip. But that outcome is only possible inside an orchestration framework.
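A rough sketch of what "guidelines as JSON logic" can look like follows. The rule schema, field names, and operators are hypothetical and chosen for illustration; this is neither the JsonLogic specification nor the system described above. The point is only that a rule expressed as data gives the same answer every time and names exactly which rule failed.

```python
import json

# Hypothetical rulebook excerpt: each guideline becomes one machine-checkable rule.
RULES_JSON = """
[
  {"id": "R1", "field": "label", "op": "in", "value": ["cat", "dog"]},
  {"id": "R2", "field": "box_area", "op": "gte", "value": 100}
]
"""

OPS = {
    "in": lambda actual, expected: actual in expected,
    "gte": lambda actual, expected: actual >= expected,
    "eq": lambda actual, expected: actual == expected,
}


def check(rule: dict, annotation: dict) -> bool:
    # A missing field counts as a failure rather than raising an error.
    if rule["field"] not in annotation:
        return False
    return OPS[rule["op"]](annotation[rule["field"]], rule["value"])


def evaluate(annotation: dict, rules: list) -> dict:
    """Return a deterministic score plus the ids of every failed rule."""
    failed = [r["id"] for r in rules if not check(r, annotation)]
    return {"score": 1 - len(failed) / len(rules), "failed_rules": failed}


def matches_golden(golden: list, rules: list) -> bool:
    # Golden dataset: annotations with known-correct results, re-run on every rule change.
    return all(evaluate(case["annotation"], rules) == case["expected"] for case in golden)


rules = json.loads(RULES_JSON)
```

Because the evaluation is plain data plus pure functions, the list of failed rule ids can be written straight into an audit record.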

The Last-Mile Integration Gap

Most enterprises still run critical processes on SAP, Citrix, and mainframe environments that predate the web. AI agents that interact with GUIs by interpreting screenshots have shown reliability rates as low as 14.9% in independent testing. They misclick interface elements, miss data in dropdowns, and misinterpret visual layouts in ways that create cascading errors downstream.

AI orchestration platforms use deterministic UI automation with certified connectors that understand the underlying metadata of legacy applications, not just what they look like on screen. The result is success rates above 96.5%. For any enterprise where a missed field in an SAP workflow has financial or compliance consequences, that gap is not a performance metric. It’s an operational risk.

A practical example: a national building materials distributor needed engineers to query project information buried across more than 10TB of PDFs and legacy SQL databases. A standalone LLM couldn’t securely access that data or guarantee it was referencing the correct version of building codes. An orchestrated RAG system solved this with:

- Natural-language answers grounded in traceable, verifiable citations

- Active Directory-based access controls that respect enterprise permissions

- Scoped retrieval so users only see answers derived from data they’re authorized to view

None of that is achievable with a standalone agent.
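The three properties above (citations, directory-based permissions, scoped retrieval) can be sketched in a few lines. This is an illustration under stated assumptions: the `Chunk` fields and group names are hypothetical, keyword overlap stands in for a real vector search, and group membership would come from a directory service such as Active Directory rather than a hard-coded set.

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Set


@dataclass(frozen=True)
class Chunk:
    doc_id: str
    version: str                   # which edition of the source this text came from
    text: str
    allowed_groups: FrozenSet[str]  # groups permitted to read the source document


def retrieve(query: str, user_groups: Set[str], index: List[Chunk], k: int = 3) -> List[Chunk]:
    # Filter by permission BEFORE ranking, so unauthorized text never reaches the model.
    visible = [c for c in index if c.allowed_groups & user_groups]
    terms = set(query.lower().split())
    scored = [(len(terms & set(c.text.lower().split())), c) for c in visible]
    ranked = sorted((s for s in scored if s[0] > 0), key=lambda s: s[0], reverse=True)
    return [c for _, c in ranked[:k]]


def cite(chunks: List[Chunk]) -> List[str]:
    # Every answer carries document id and version so it can be traced and verified.
    return [f"{c.doc_id}@{c.version}" for c in chunks]
```

Filtering before ranking is the important ordering. A system that retrieves first and filters afterward has already exposed restricted text to the ranking step.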

The Shadow AI Problem

Without an orchestration layer, AI tool deployments fragment across teams. Each group configures tools differently, connects them to different data sources, and operates under different or no security policies. MIT researchers have called this the shadow AI economy: a growing ecosystem of unsanctioned tools that IT departments can’t see, govern, or shut down. Every ungoverned agent is a potential data exposure, a compliance gap, and a cost the organization can’t measure.

None of this is a critique of the underlying models. It’s what happens when you deploy intelligence without infrastructure.

The OpenClaw Warning

If Claude Code represents the risks of ungoverned intelligence in a professional context, OpenClaw takes it to the logical extreme.

OpenClaw, the open-source personal AI agent built by Peter Steinberger, reached 196,000 GitHub stars and 2 million weekly users within weeks. It promised control over email, calendars, messaging platforms, Spotify, and home appliances. Users loved it. The security community did not.

Cisco’s AI security research team analyzed over 31,000 OpenClaw agent skills and found that 26% contained at least one vulnerability. One tested skill performed data exfiltration and prompt injection without the user’s knowledge. Shodan scans revealed hundreds of OpenClaw instances connected to the internet with zero authentication, their configuration files storing API tokens and keys for every connected service, accessible to anyone who found them. Infostealer malware has already begun specifically targeting these files. Gartner classified it as an unacceptable cybersecurity risk.

The pattern is consistent across tools like this: built for speed and individual empowerment, shipped without the governance architecture enterprises require. OpenClaw is a consumer experiment that employees are actively bringing to work, and that’s exactly the kind of gap that creates the most organizational risk.

The AI Trust Layer

In a regulated business environment, raw AI is a liability. Enterprises can’t afford hallucinations or data leaks. This is where the AI Trust Layer of an orchestration platform becomes non-negotiable. It rests on three pillars.

1. PII Masking and Data Privacy

A standalone LLM request typically involves sending sensitive data directly to a cloud-hosted model. AI orchestration platforms implement in-flight masking, pseudonymizing personally identifiable information before it reaches the model. The Trust Layer replaces names, account IDs, and other sensitive identifiers with reversible tokens, rehydrating the data only after the model’s output returns to the secure enterprise environment. The model never sees the real data. There is no equivalent protection in a standalone agent by default.
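A minimal sketch of in-flight masking with reversible tokens is below. The regular expressions and the `ACCT-` account format are assumptions for illustration, and a production system would use far more robust detection than two patterns. The shape of the flow is what matters: mask, call the model, rehydrate inside the trusted boundary.

```python
import re
from typing import Dict, Tuple

# Hypothetical detectors; real deployments combine many patterns and entity recognition.
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "ACCOUNT": re.compile(r"\bACCT-\d{6}\b"),
}


def mask(text: str) -> Tuple[str, Dict[str, str]]:
    """Replace sensitive values with tokens; return masked text and the token vault."""
    vault: Dict[str, str] = {}
    for kind, pattern in PATTERNS.items():
        def replace(match, kind=kind):
            token = f"<{kind}_{len(vault) + 1}>"
            vault[token] = match.group(0)  # the vault stays inside the enterprise boundary
            return token
        text = pattern.sub(replace, text)
    return text, vault


def rehydrate(text: str, vault: Dict[str, str]) -> str:
    """Restore the original values after the model output returns."""
    for token, original in vault.items():
        text = text.replace(token, original)
    return text
```

In use, only the masked string is sent to the model, and `rehydrate` runs on the response once it is back in the secure environment.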

2. Immutable Audit Trails

Orchestration platforms provide centralized audit logging and observability, capturing every interaction for compliance and debugging. When regulators ask why an AI system denied a claim, approved a transaction, or flagged a patient record, the enterprise can produce a complete chain of evidence:

- What prompt was sent

- Which business rules were applied

- Which model version responded

- Who approved the final output

This is critical for sectors like finance and healthcare, where every AI-driven decision has to hold up under regulatory scrutiny.
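One common way to approximate an immutable trail is hash chaining, where each entry commits to the one before it so that any later edit is detectable. The sketch below assumes that technique and uses hypothetical field names that mirror the four evidence items above. It is tamper-evident rather than truly immutable, and real platforms typically add write-once storage on top.

```python
import hashlib
import json
from typing import List

GENESIS = "0" * 64  # placeholder hash for the first entry in the chain


def _digest(body: dict) -> str:
    # Canonical JSON (sorted keys) so the same content always hashes the same way.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_entry(log: List[dict], prompt: str, rules: List[str],
                 model_version: str, approver: str) -> dict:
    body = {
        "prompt": prompt,
        "rules": rules,
        "model_version": model_version,
        "approver": approver,
        "prev_hash": log[-1]["hash"] if log else GENESIS,
    }
    entry = {**body, "hash": _digest(body)}
    log.append(entry)
    return entry


def verify(log: List[dict]) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev = GENESIS
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev_hash"] != prev or _digest(body) != entry["hash"]:
            return False
        prev = entry["hash"]
    return True
```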

3. Human-in-the-Loop as a Design Principle

Trust isn’t just about security. It’s about accountability. AI orchestration platforms build mandatory human approval points directly into workflow design. When an agent encounters a low-confidence result, a rule breach, or a decision above its authorization threshold, it escalates through a predefined path rather than proceeding autonomously. Standalone agents often cycle indefinitely on complex problems. An orchestrated workflow has structured escalation built in from the start.
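The three escalation triggers named above (low confidence, rule breach, authorization threshold) can be sketched as a single routing function. The thresholds, field names, and the use of a monetary amount as the authorization measure are all assumptions for illustration, not values from any deployed system.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Tuple


class Route(Enum):
    AUTO_APPROVE = "auto_approve"
    HUMAN_REVIEW = "human_review"


@dataclass
class AgentResult:
    confidence: float  # model's self-reported or calibrated confidence, 0 to 1
    amount: float      # value of the decision, compared against the agent's authority
    rule_violations: List[str] = field(default_factory=list)


def route(result: AgentResult, min_confidence: float = 0.85,
          max_amount: float = 10_000.0) -> Tuple[Route, List[str]]:
    """Decide whether an agent may proceed, and record why if it may not."""
    reasons = []
    if result.confidence < min_confidence:
        reasons.append("low_confidence")
    if result.rule_violations:
        reasons.append("rule_breach")
    if result.amount > max_amount:
        reasons.append("above_authorization_threshold")
    # Any single trigger is enough to stop autonomous execution.
    return (Route.HUMAN_REVIEW if reasons else Route.AUTO_APPROVE), reasons
```

Returning the reasons alongside the route means the reviewer sees why the case reached them, and the same list can be logged for audit.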

We saw the stakes of this firsthand working with a healthcare technology company whose automated AI was communicating directly with patients about benefits, with no oversight, audit capability, or mechanism to intercept a response before it reached the recipient. In healthcare, that’s not a quality issue. It’s a liability. The corrective architecture was a Human-in-the-Loop portal acting as a firewall between the model and the patient, with real-time review and modification of every AI-generated message before delivery, full audit trails, and integration with Salesforce and AWS Bedrock. That kind of architecture is only possible inside an orchestration framework.

Orchestration Capital: The Compounding Return

Here’s the insight that transforms AI orchestration from an IT cost center into a strategic asset: every component of a well-built orchestration layer compounds in value over time.

Immutable audit trails make each compliance review faster and cheaper than the last. Human-in-the-loop decisions that get logged become training signals that sharpen the next AI recommendation. Successful outcomes (a churn intervention that worked, a contract won, a claim processed correctly) continuously refine the scoring model that generates the next recommendation. Additionally, any new AI model that arrives can be plugged in as a component without rearchitecting anything.

This is what Orchestration Capital means in practice: a foundation of trust, visibility, and control that compounds with every deployment. The organizations investing in an orchestration layer today aren’t just deploying AI. They’re building a self-improving system where every layer makes the others smarter.

Organizations that skip this foundation in favor of faster, cheaper ungoverned tools aren’t just accumulating technical debt. They’re accumulating compounding risk: compliance exposure that grows with each ungoverned interaction, security gaps that widen with each new shadow AI tool, and an architecture that becomes progressively harder to govern the more embedded it becomes.

What This Looks Like in Practice

Government Contracting: From Reactive to Predictive

A government contracting firm we worked with had a business development process that was entirely reactive. They were chasing opportunities after solicitations dropped, relying on manual searches and institutional memory spread across dozens of people. A standalone agent couldn’t solve this because the problem required a multi-layered system, not a single model.

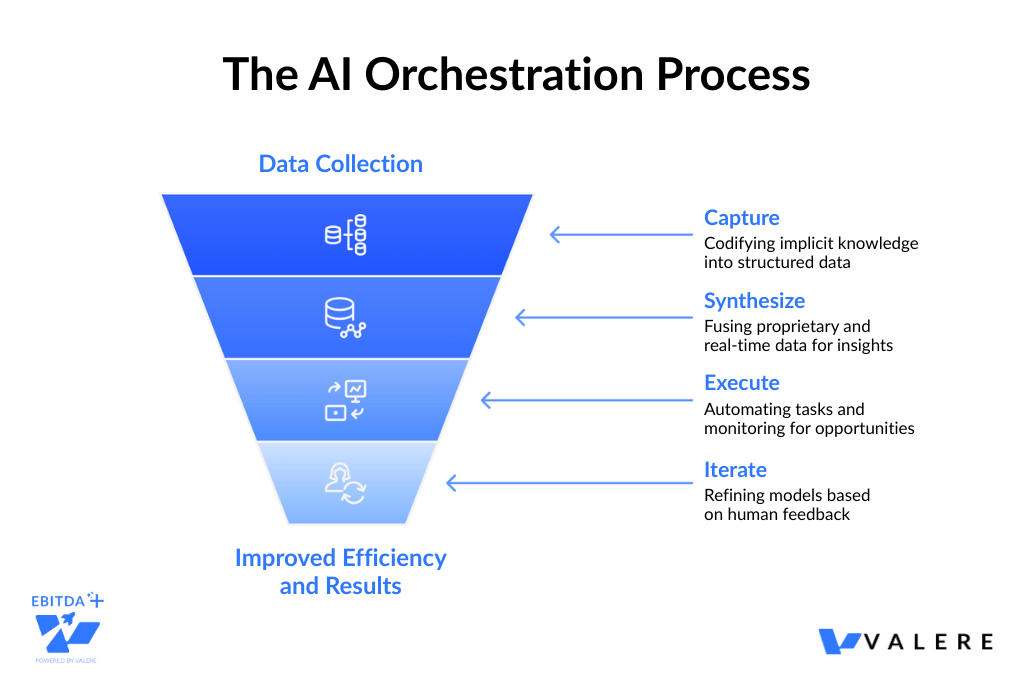

The orchestrated system we built works in four coordinated layers:

- Capture: Automated interviews with sales executives and engineers codify decades of relationship history, win/loss patterns, technical capabilities, and program manager priorities into a structured, searchable knowledge base

- Synthesize: A reasoning layer fuses that proprietary intelligence with real-time data from SAM.gov, USAspending, and agency budgets, applying predictive scoring to calculate win probability

- Execute: Autonomous agents run 24/7 monitoring of government portals, aggregating and normalizing data when matching solicitations drop

- Iterate: A Human-in-the-Loop decision engine lets sales leaders review every AI recommendation, and every Bid/No-Bid decision feeds back into the system, continuously sharpening the scoring model

The result: opportunities identified 30 to 90 days earlier than manual searching, with 80% less research time.
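The Iterate layer is the part that makes the system compound, so it is worth a small illustration. The sketch below is a deliberately simple stand-in and not the scoring model described above: the feature names, the linear score, and the learning rate are all hypothetical. It shows only the mechanism, in which each human Bid/No-Bid decision nudges the weights behind the next recommendation.

```python
from typing import Dict


def score(features: Dict[str, float], weights: Dict[str, float]) -> float:
    """Weighted sum of opportunity features, clamped to a 0-1 win-probability range."""
    raw = sum(weights.get(name, 0.0) * value for name, value in features.items())
    return max(0.0, min(1.0, raw))


def record_decision(features: Dict[str, float], weights: Dict[str, float],
                    human_bid: bool, learning_rate: float = 0.1) -> Dict[str, float]:
    """Return new weights shifted toward the human's Bid/No-Bid decision."""
    error = (1.0 if human_bid else 0.0) - score(features, weights)
    updated = dict(weights)  # leave the caller's weights untouched
    for name, value in features.items():
        updated[name] = updated.get(name, 0.0) + learning_rate * error * value
    return updated
```

If a sales leader declines an opportunity the system rated highly, the features that drove that rating lose weight, so similar solicitations score lower next time.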

Automotive SaaS: Turning Tribal Knowledge into a Churn Defense

An automotive SaaS platform needed to shift customer success from reactive account auditing to proactive churn intervention. Their most experienced reps could sense when an account was at risk, but that intuition had never been documented or operationalized. A standalone agent would only see one slice of the problem.

The orchestrated system follows the same four layers:

- Capture: The implicit wisdom of experienced CS reps, the subtle behavioral cues and early warning patterns they recognize instinctively, gets mapped into structured Intervention Playbooks

- Synthesize: That qualitative intelligence combines with quantitative data from Salesforce and platform usage logs to generate continuous Account Health Scores tuned to the specific risk factors the frontline team identified

- Execute: Agents monitor the entire portfolio autonomously, detecting risk patterns and routing the correct playbook to the assigned rep

- Iterate: Every successful save feeds back into the knowledge base, creating a self-improving retention strategy

The outcome was a 15% reduction in CS bandwidth spent on manual monitoring and a 20% reduction in early-stage churn. These are real, deployed systems producing measurable results, not concept decks. And they’re the kind of thing you simply cannot build with a standalone agent running in a terminal.

The Split in Enterprise AI

The enterprise AI landscape is splitting into two distinct camps.

On one side are organizations treating AI as a collection of individual tools, deployed ad hoc and governed by good intentions. These organizations will face growing compliance exposure, security incidents, and the compounding cost of fragmented AI efforts they can’t see or control.

On the other side are organizations investing in an orchestration layer: a centralized platform that treats AI models as swappable components within a governed, auditable framework, using adaptive intelligence to coordinate those components across multiple workflows and systems in real time. These organizations are building Orchestration Capital. Every audit trail makes each compliance review easier than the last. Feedback loops, in turn, sharpen the next prediction. And crucially, each human-in-the-loop decision makes the system more capable tomorrow without making it less accountable today.

The models will keep getting smarter. The tools will keep improving. But intelligence without governance isn’t an asset. It’s a risk. The organizations that understand the difference between deploying intelligence and architecting for intelligence are the ones that will define what AI value creation actually looks like over the next decade.

Start Building AI Capital with Orchestration

The gap between a promising AI pilot and a governed, production-grade system isn’t going to close with the next model release. It closes with architecture. Conducto and Valere design and build the orchestration infrastructure that turns standalone AI into auditable, secure, enterprise-ready systems. Here’s what you’ll walk away with:

- A Trust Layer assessment identifying where your current AI deployments lack the governance, audit trails, and human oversight needed to operate at scale

- A clear path from fragmented tools to orchestrated systems that bridge your legacy infrastructure, enforce compliance, and improve with every interaction

- A personalized roadmap from isolated AI experiments to structured, self-improving execution powered by the Dactic-to-Conducto framework

Start building your orchestration layer: valere.io