Why many AI initiatives stall before reaching production and what it takes to build systems that actually run real operations.

There is a pattern we see repeatedly when organizations begin experimenting with AI. Many initiatives start with promising pilots. The demos look impressive. The system summarizes documents, answers questions, or automates a few simple steps. But the moment these systems connect to real operational workflows, new challenges emerge.

Outputs become inconsistent. Processes lose state. External systems fail. Teams start questioning whether the system can be trusted.

The difference between AI that works in a demo and AI that can operate inside real business processes rarely comes down to the model itself. In most cases, it comes down to architecture.

The organizations generating real value from AI are not necessarily using different models. They are building systems around those models that make the behavior predictable, auditable, and resilient enough to operate in production environments.

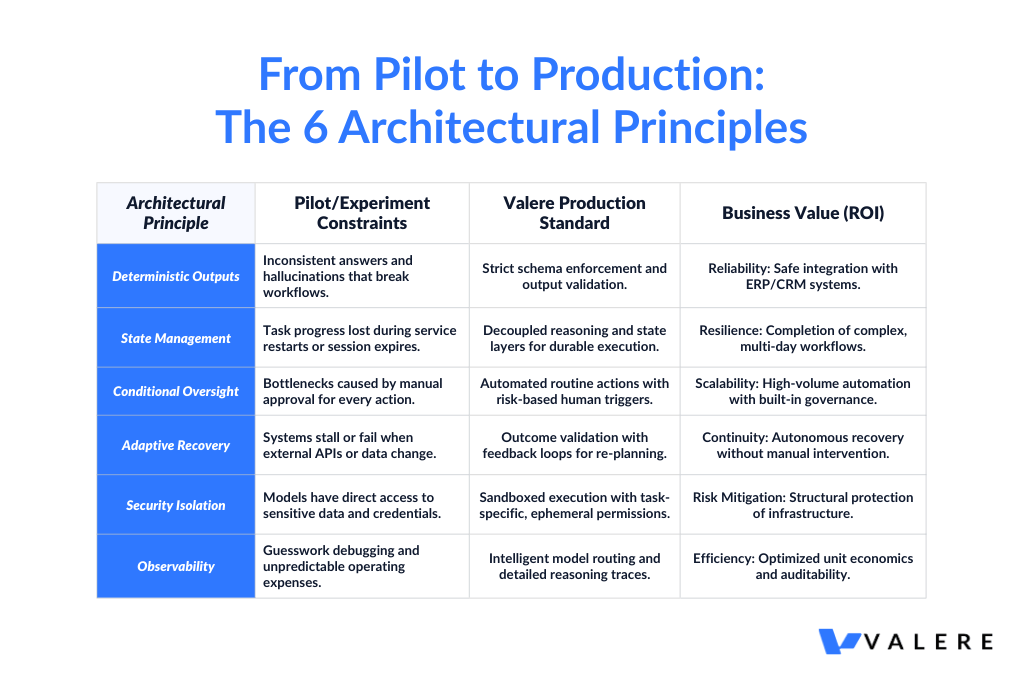

Across hundreds of deployments, a small number of architectural decisions appear consistently in systems that successfully move from pilot to production. Here are six that matter most.

1. AI That Gives You the Same Answer Twice

AI models are not deterministic. Ask the same question twice and you may receive slightly different answers. For conversational assistants this is acceptable. For systems that pass outputs into operational workflows, it quickly becomes a problem.

When an output feeds another system or supports a real decision, small variations can break processes in ways that are difficult to diagnose. Teams often struggle to determine whether a failure came from the model, the data, or the workflow itself.

The solution is to define exactly what a valid output looks like before the system is built and enforce it strictly. If a result does not match the expected structure, the system rejects it immediately rather than allowing it to move further into the workflow. This approach transforms probabilistic responses into predictable outputs that other systems can safely depend on.
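
As a rough illustration of that idea, the sketch below defines the expected structure up front and rejects any response that does not match it. The field names (`item_id`, `verdict`, `score`), the allowed verdicts, and the `parse_model_output` helper are all hypothetical and chosen only for this example; a real system would derive them from its own rulebook.

```python
import json
from dataclasses import dataclass

# Hypothetical label set, assumed for illustration only.
ALLOWED_VERDICTS = {"pass", "fail"}
EXPECTED_FIELDS = {"item_id", "verdict", "score"}


class InvalidOutput(ValueError):
    """Raised when a model response does not match the expected structure."""


@dataclass(frozen=True)
class AnnotationScore:
    item_id: str
    verdict: str
    score: float


def parse_model_output(raw: str) -> AnnotationScore:
    """Return a typed result, or raise InvalidOutput before anything moves downstream."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise InvalidOutput(f"not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise InvalidOutput("expected a JSON object")
    if set(data) != EXPECTED_FIELDS:
        raise InvalidOutput(f"unexpected fields: {sorted(data)}")

    item_id, verdict, score = data["item_id"], data["verdict"], data["score"]
    if not isinstance(item_id, str) or not item_id:
        raise InvalidOutput("item_id must be a non-empty string")
    if not isinstance(verdict, str) or verdict not in ALLOWED_VERDICTS:
        raise InvalidOutput(f"verdict must be one of {sorted(ALLOWED_VERDICTS)}")
    # bool is a subclass of int in Python, so exclude it explicitly.
    if isinstance(score, bool) or not isinstance(score, (int, float)):
        raise InvalidOutput("score must be a number")
    if not 0.0 <= score <= 1.0:
        raise InvalidOutput("score must be between 0 and 1")
    return AnnotationScore(item_id, verdict, float(score))
```

A caller would wrap `parse_model_output` in a `try`/`except InvalidOutput` block and decide whether to retry the model call or flag the item, so malformed responses never reach the next system.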

In one quality assurance project, a team had been manually checking image and text annotations against a fifty-page rulebook. When the process was handed to a standard AI system without guardrails, the outputs were inconsistent and edge cases slipped through.

By converting the rulebook into structured logic and validating every output before acceptance, the system produced consistent and auditable scores in a single step. The manual review process disappeared, not because the AI was smarter, but because the outputs became reliable enough to trust.

2. AI That Remembers Where It Left Off

One of the most common failure points in early AI deployments is the loss of task state. An agent begins a multi-step process, a service restarts or a session expires, and the system loses track of what has already been completed.

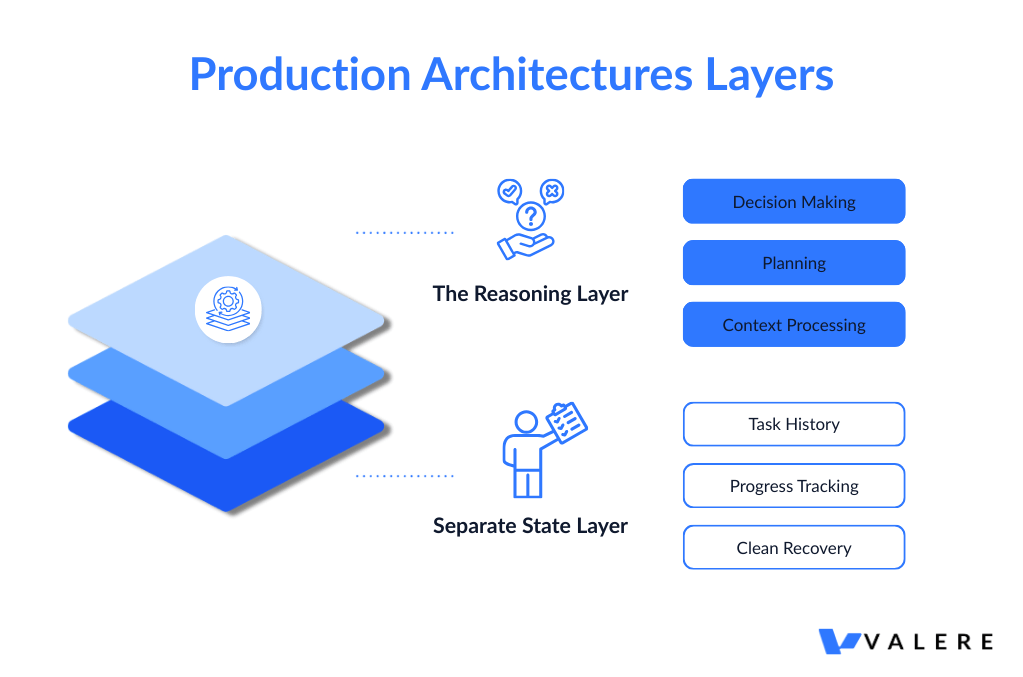

For simple tasks this is frustrating. For complex workflows that run across multiple systems, it quickly becomes unacceptable. Production architectures separate two layers:

- The reasoning layer handles planning and decision making.

- A separate state layer records progress, decisions, and task history.

If part of the system restarts, the process can resume exactly where it stopped. This architecture allows agents to run workflows that last hours or even days while remaining fully auditable.
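
The separation described above can be sketched in a few lines. This is a minimal example that assumes a local JSON file as the state layer; the `StateStore` and `run_workflow` names are hypothetical, and a production system would more likely use a database or a workflow engine.

```python
import json
import os
from pathlib import Path
from typing import Callable


class StateStore:
    """Durable record of finished steps, kept apart from any reasoning code."""

    def __init__(self, path: Path) -> None:
        self.path = path

    def load(self) -> dict:
        if self.path.exists():
            return json.loads(self.path.read_text())
        return {"completed": [], "results": {}}

    def save(self, state: dict) -> None:
        # Write to a temp file and rename it, so a crash mid-write
        # cannot leave a half-written state file behind.
        tmp = self.path.with_suffix(".tmp")
        tmp.write_text(json.dumps(state))
        os.replace(tmp, self.path)


def run_workflow(steps: list, store: StateStore) -> dict:
    """Run (name, action) pairs in order, skipping steps already recorded as done."""
    state = store.load()
    for name, action in steps:
        if name in state["completed"]:
            continue  # finished before the interruption; do not repeat it
        state["results"][name] = action()
        state["completed"].append(name)
        store.save(state)  # checkpoint after every step
    return state
```

Calling `run_workflow` again with the same store after an interruption resumes at the first step that was not checkpointed. A step that crashes after acting but before the checkpoint would run again, so in this sketch the steps themselves need to be safe to repeat.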

A marketing organization running outreach across hundreds of thousands of contacts needed exactly this capability. By separating the reasoning layer from the execution layer, the system managed hundreds of sending threads simultaneously while maintaining compliance with sending limits and recovering cleanly from individual process interruptions.

3. Human Oversight Without Slowing Everything Down

Organizations often face a tension when deploying AI automation. They want the efficiency automation offers, but they cannot remove human oversight entirely when decisions carry real consequences. Requiring approval for every action defeats the purpose of automation. Removing oversight entirely introduces unacceptable risk.

The practical solution is conditional oversight. Routine actions proceed automatically. Situations involving higher risk trigger a human review step based on predefined rules such as confidence thresholds, transaction size, or the type of action being taken.

While one task waits for review, others continue running. Every escalation is logged and timestamped, creating a clear audit trail. In regulated environments, this record can be as important as the decision itself.
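
One way to picture conditional oversight is as a small rule gate in front of every action. The sketch below is illustrative only: the thresholds, the action kinds, and the `OversightGate` class are assumptions made for this example, not values from any real deployment.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical policy values; real thresholds would come from the business.
MIN_CONFIDENCE = 0.85
MAX_AUTO_AMOUNT = 10_000.0
ALWAYS_REVIEW = {"issue_refund", "delete_record"}


@dataclass(frozen=True)
class Action:
    kind: str
    amount: float
    confidence: float


@dataclass
class OversightGate:
    review_queue: list = field(default_factory=list)
    audit_log: list = field(default_factory=list)

    def reasons_for_review(self, action: Action) -> list:
        """Return every predefined rule this action trips (empty means routine)."""
        reasons = []
        if action.confidence < MIN_CONFIDENCE:
            reasons.append("low_confidence")
        if action.amount > MAX_AUTO_AMOUNT:
            reasons.append("large_transaction")
        if action.kind in ALWAYS_REVIEW:
            reasons.append("sensitive_action_type")
        return reasons

    def submit(self, action: Action) -> str:
        reasons = self.reasons_for_review(action)
        decision = "escalated" if reasons else "auto_approved"
        if reasons:
            # Park the action for a human; the caller is free to continue
            # with other tasks instead of blocking here.
            self.review_queue.append(action)
        # Every decision is logged with a UTC timestamp for the audit trail.
        self.audit_log.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "kind": action.kind,
            "decision": decision,
            "reasons": reasons,
        })
        return decision
```

Because `submit` returns immediately in both cases, routine actions keep flowing while escalated ones wait in `review_queue`, and `audit_log` records why each escalation happened.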

4. AI That Learns From What Happens

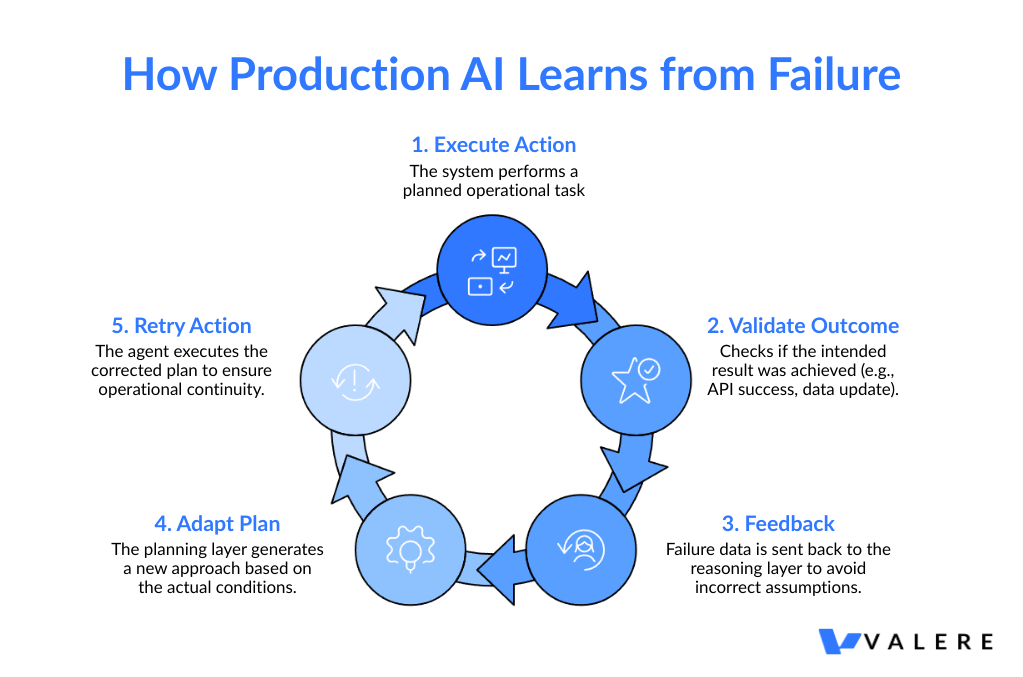

Production environments rarely behave exactly as expected. APIs fail, data becomes outdated, and conditions change between the moment a plan is made and the moment it is executed. Fragile systems either stop functioning or continue operating based on incorrect assumptions.

Resilient systems validate whether an action actually achieved the intended result before committing it. If the outcome fails validation, the result feeds back into the planning layer so the system can attempt a different approach.

Instead of retrying the same action repeatedly, the system adapts based on what actually happened. This ability to recover and adjust is one of the defining characteristics of production AI systems.
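
The loop below is a simplified sketch of that feedback cycle. The `run_with_replanning` function and its `plan_next`, `actions`, and `validate` parameters are hypothetical names for this example; in practice the planner would be the reasoning layer rather than a plain function.

```python
from typing import Callable, Optional


def run_with_replanning(
    plan_next: Callable[[list], Optional[str]],
    actions: dict,
    validate: Callable[[object], bool],
    max_attempts: int = 3,
):
    """Try an action, check the outcome, and feed failures back to the planner."""
    history = []
    for _ in range(max_attempts):
        choice = plan_next(history)  # the planner sees what already happened
        if choice is None:
            break  # the planner has no further ideas
        try:
            result = actions[choice]()
        except Exception as exc:
            history.append({"action": choice, "ok": False, "detail": repr(exc)})
            continue
        if validate(result):
            # Only a validated outcome is handed back to be committed.
            history.append({"action": choice, "ok": True, "detail": result})
            return result, history
        history.append({
            "action": choice,
            "ok": False,
            "detail": f"failed validation: {result!r}",
        })
    raise RuntimeError(f"no approach succeeded: {history}")
```

The key design choice is that `plan_next` receives the full history, so it can pick a different approach instead of repeating the one that just failed. The returned history also doubles as a record of what was tried and why.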

5. Security Built Into the Architecture

When AI systems interact with internal tools and data, security becomes a structural concern. Production architectures prevent the reasoning model from having direct access to credentials or sensitive data.

When the system needs to perform an action that requires access, it calls a tool operating in a sandbox environment. That environment receives only the permissions required for the specific task and only for the duration of that task.

Once the action is complete, those permissions disappear. This approach ensures that even if the model behaves unexpectedly, the underlying infrastructure remains protected.
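
A minimal sketch of task-scoped permissions might look like the following. The `CredentialBroker` class, the scope strings, and the `read_invoice` tool are all hypothetical and exist only to illustrate the pattern; a real deployment would rely on its platform's own secrets and identity services.

```python
import secrets
from contextlib import contextmanager


class CredentialBroker:
    """Hypothetical in-memory broker that issues and revokes short-lived tokens."""

    def __init__(self) -> None:
        self._active = {}

    def issue(self, scopes: frozenset) -> str:
        token = secrets.token_hex(16)
        self._active[token] = scopes
        return token

    def revoke(self, token: str) -> None:
        self._active.pop(token, None)

    def allows(self, token: str, scope: str) -> bool:
        return scope in self._active.get(token, frozenset())


@contextmanager
def scoped_grant(broker: CredentialBroker, scopes):
    """Grant only the listed scopes, and only for the duration of the block."""
    token = broker.issue(frozenset(scopes))
    try:
        yield token
    finally:
        broker.revoke(token)  # runs even if the tool raises


def run_tool(broker: CredentialBroker, tool, scopes, **kwargs):
    """The model names a tool and its arguments; it never sees the token."""
    with scoped_grant(broker, scopes) as token:
        return tool(broker, token, **kwargs)


def read_invoice(broker: CredentialBroker, token: str, invoice_id: str) -> dict:
    if not broker.allows(token, "invoices:read"):
        raise PermissionError("token lacks invoices:read")
    return {"invoice_id": invoice_id, "status": "paid"}  # placeholder data
```

The token exists only inside `run_tool`, and the `finally` clause revokes it whether the tool succeeds or fails, so nothing the model produces can reuse it afterward.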

6. Knowing What Your AI Is Actually Doing

Enterprise AI systems must remain observable and manageable. Two elements are particularly important.

The first is intelligent model routing. Not every task requires the most capable model. Assigning each task to the most appropriate model based on complexity and cost can significantly reduce operating expenses.

The second is visibility. Detailed traces that capture how the system made decisions make it possible to diagnose failures, improve performance over time, and demonstrate accountability to internal stakeholders and regulators.

Without that visibility, debugging becomes guesswork.
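
Both elements can be combined in a short sketch. The model names, complexity ceilings, and relative costs below are invented for illustration, and `call_model` stands in for whatever client a real system would use.

```python
import time
import uuid
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical tiers: (complexity ceiling, model name, relative cost per call).
MODEL_TIERS = [
    (0.3, "small-model", 1),
    (0.7, "medium-model", 5),
    (1.0, "large-model", 25),
]


def route(complexity: float) -> tuple:
    """Pick the cheapest tier whose ceiling covers the task's complexity."""
    if not 0.0 <= complexity <= 1.0:
        raise ValueError("complexity must be between 0 and 1")
    return next(
        (model, cost)
        for ceiling, model, cost in MODEL_TIERS
        if complexity <= ceiling
    )


@dataclass
class Tracer:
    spans: list = field(default_factory=list)

    def total_cost(self) -> int:
        return sum(span["cost"] for span in self.spans)


def run_task(task: str, complexity: float,
             call_model: Callable[[str, str], str], tracer: Tracer) -> str:
    """Route the task, call the chosen model, and record a trace span."""
    model, cost = route(complexity)
    started = time.perf_counter()
    output = call_model(model, task)
    tracer.spans.append({
        "trace_id": uuid.uuid4().hex,
        "task": task,
        "complexity": complexity,
        "model": model,
        "cost": cost,
        "seconds": time.perf_counter() - started,
        "output": output,
    })
    return output
```

Each span records which model handled which task, how long it took, and what it cost, which is the kind of detail that turns a failed run into something that can be traced instead of guessed at.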

Questions That Surface Real Answers

Organizations evaluating AI platforms often benefit from asking a small set of practical questions.

- How are outputs validated before they reach downstream systems?

- Where is task state stored, and how does the system recover if a process stops unexpectedly?

- What conditions trigger human oversight?

- What happens when an external service fails?

- How are permissions handled when the AI interacts with internal systems?

- What level of visibility exists into the system’s reasoning and actions?

These questions quickly reveal whether a system is designed for experimentation or for real operations.

The Pattern Worth Paying Attention To

Organizations generating durable value from AI are not necessarily the ones experimenting the fastest. They are the ones building systems reliable enough to operate inside real workflows: systems that can be audited, explained, improved, and trusted by the teams who depend on them.

The six principles described here reflect patterns emerging from real deployments across healthcare, government contracting, industrial operations, and large-scale sales environments. The patterns themselves are becoming clearer. The real question organizations face today is whether these architectural decisions are made before deployment or after the system begins to fail in production.

If your team is exploring how to move beyond pilots and build AI systems capable of operating inside real workflows, architecture is the critical starting point. At Valere, we work with organizations designing and deploying enterprise AI systems built for reliability, security, and operational impact.

We are always happy to exchange perspectives with teams navigating this transition.

About Valere

Valere is an award-winning AI-native & custom software development company that transforms mid-market companies into AI-first organizations through building, learning, and scaling.

As an expert-vetted, top 1% agency on Upwork, Clutch, G2, and AWS, Valere serves as the trusted AI value creation partner for PE firms, mid-market companies, and Fortune 500 enterprises alike seeking comprehensive AI transformation that drives measurable ROI. With over 220 dedicated professionals and domain experts, we specialize in end-to-end AI-native solutions using our proven crawl-walk-run methodology, guiding organizations through every stage of their AI journey — from initial assessment and strategy to full-scale implementation and optimization.

Our hybrid framework delivers enterprise quality at mid-market economics, prioritizing excellence through continuous process optimization, a unified culture, and rigorous hiring standards — all in service of building something meaningful for every client we partner with.

Build Something Meaningful at Valere.io