From the desk of Guy Pistone, CEO, Valere

TL;DR

Companies are running expensive searches for AI-fluent talent, and the talent is already inside the building. Performance reviews can’t see them. Permission culture punishes them. Compensation doesn’t reward them. Calendars give them no room to operate. Mid-market companies, with fewer approval layers and less entrenched governance, are positioned to fix this faster than enterprises can.

The pattern I’m watching

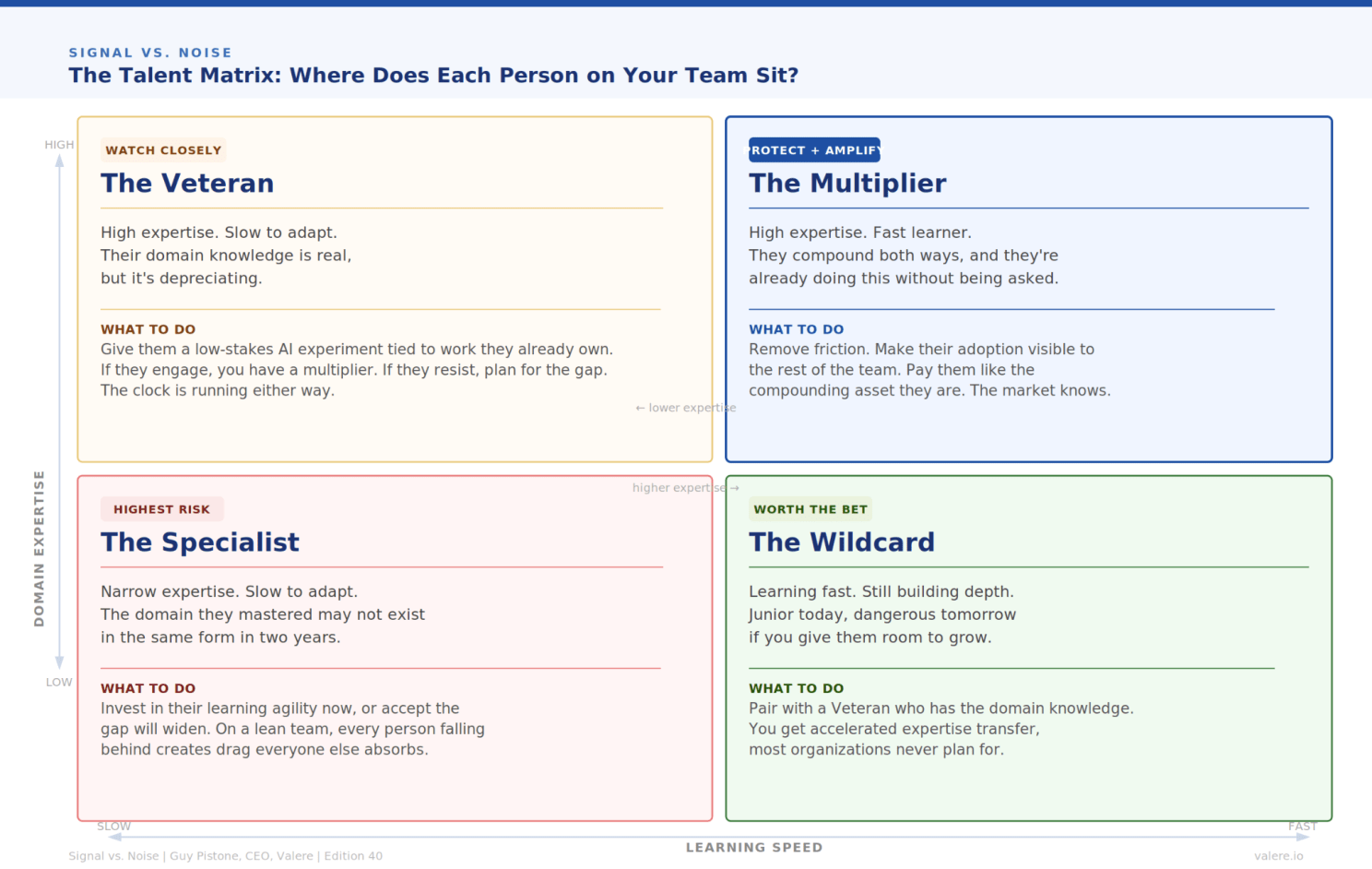

Inside Valere, and across the operators I talk to, the people moving fastest with AI don’t show up where you’d expect. They’re often quiet, and a few weeks ahead of everyone else without anyone noticing. They aren’t on the high-potential list.

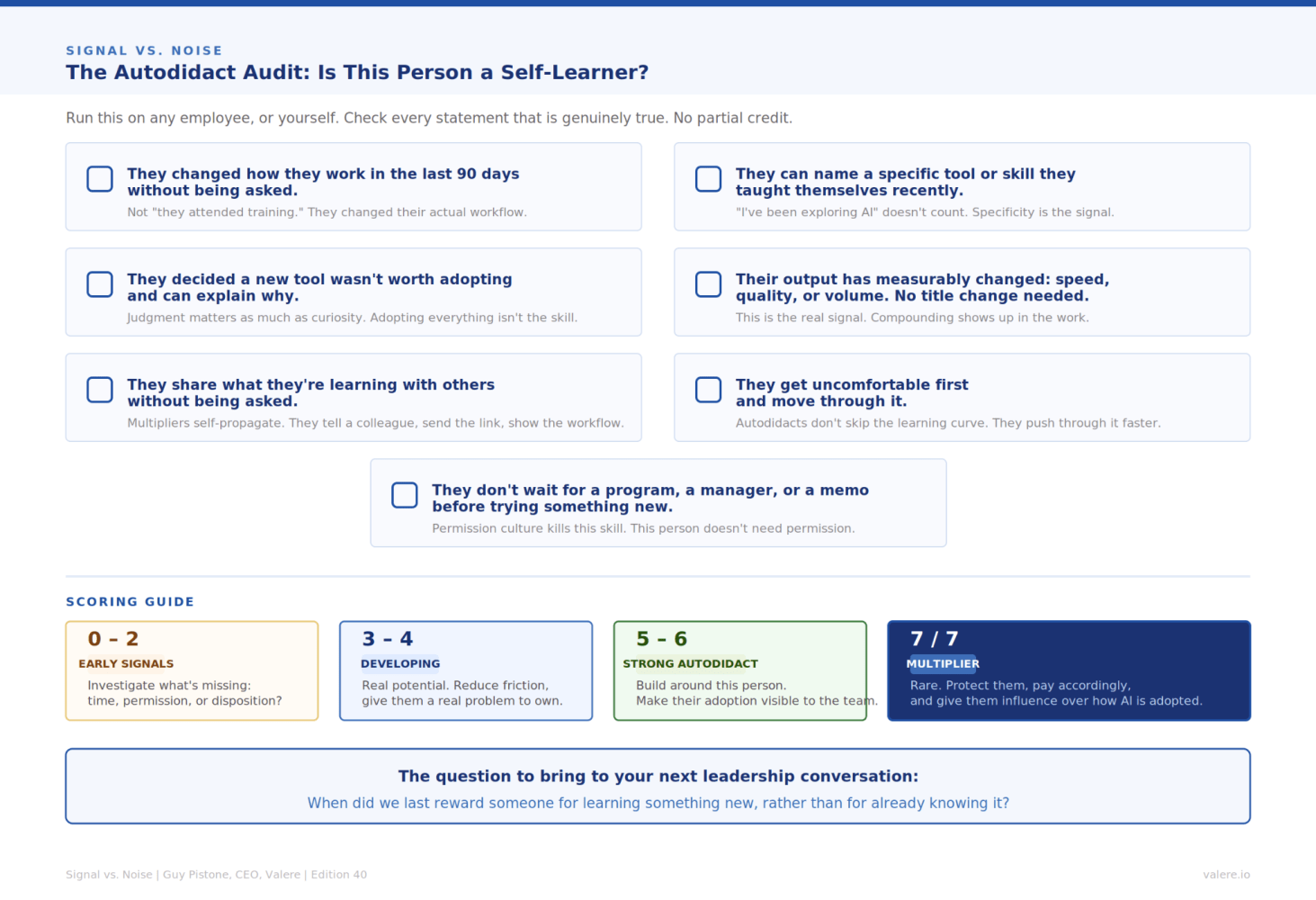

What they have in common is structural. They’ve quietly added two or three AI tools to their workflow. They can name them. They can explain what they stopped doing as a result. Their output is faster and, in many cases, sharper. They didn’t ask for permission or post about it on LinkedIn. They folded the new tools into their work and kept producing.

William Gibson wrote that the future is already here, just not evenly distributed. For mid-market companies in 2026, that line is literally true at the team level. The future of how your company will operate is already happening inside it, in pockets. The right question is why your management systems can’t see the people in those pockets.

The mistake the industry keeps making

The published research on AI talent describes an external phenomenon. McKinsey reports that jobs explicitly calling for AI fluency rose from about 1 million in 2023 to about 7 million by 2025. Deloitte’s 2026 State of AI in the Enterprise puts the AI skills gap at the top of the barrier list, ahead of data quality. LinkedIn’s 2025 Workplace Learning Report says 49% of L&D teams believe execs are worried their employees can’t carry out their AI plans.

These reports describe the labor market, training programs, and hiring practices. They never ask the question that’s relevant for a 200-person company: are the people we employ already doing this, and would we know if they were?

Most of the time, the answer is yes and no. They are. We wouldn’t.

Why your systems are hiding your autodidacts

Four specific failures, each fixable, each cheap.

Performance reviews measure the wrong work. Your OKRs cover what people are supposed to be doing. They don’t cover what people have stopped doing because AI does it for them. The autodidact who saved four hours a week by replacing a recurring task isn’t visible on a performance review, because the work they replaced wasn’t being measured. On paper, their results can look identical to average results, even though they were produced with significantly less effort. The system can’t tell the difference.

Permission culture is a liability problem. Managers who hesitate before letting their team test a new tool are absorbing the risk the org has implicitly assigned to them. Their hesitation is rational once you see what they’re carrying. The fix is a written policy stating who owns the downside if something goes wrong. Once managers know they aren’t carrying it personally, the bottleneck dissolves on its own. Memos about psychological safety won’t move this lever.

Compensation doesn’t reward compounding. A 90th-percentile AI-augmented analyst can now produce several times the output of a 70th-percentile peer in the same role. Comp bands assume a linear performance distribution. That assumption is breaking for the highest-output roles, and the people generating outsized output are aware of it. They will leave for companies that price the gap correctly. Most companies are pricing it the way they did three years ago.

Slack got squeezed out. The autodidacts moving fastest share an invisible condition: they have unscheduled time. Many companies have systematically removed slack from every role in the name of efficiency. That decision is now suppressing the behavior leadership says it wants. You cannot evaluate, integrate, and stress-test a new tool inside the 30-minute calendar gap between two meetings.

The mid-market advantage that gets missed

The standard line is that the mid-market is at a disadvantage in talent. The other side doesn’t get said enough, though. A 200-person company has fewer approval layers, fewer competing internal stakeholders, and fewer entrenched legacy systems than a 20,000-person enterprise. Move correctly, and the autodidact’s compounding has a clearer runway than it would inside a Fortune 500, where governance, procurement, and IT review absorb most of the speed advantage.

Mid-market is disadvantaged on the talent side and advantaged on the execution side. You can fix systems faster than an enterprise can. That’s your ticket.

What to redesign

Audit reviews for what’s no longer being done. Add one question to your next performance review template: What work do you no longer do because a tool now does it for you, and what did you do with the time you got back? You will discover autodidacts you didn’t know you had. You will also discover departments where nobody can answer the question. Both findings are useful.

Make liability explicit, in writing. Decide where the downside lives if an experiment fails. Write it down. Tell managers and individual contributors. Build it into the next vendor-procurement and tool-evaluation template, so the question gets asked once and answered for the whole org.

Pay for compounding. The retention math on a multiplier is different from the retention math on a typical role. When that person leaves, the loss is bigger than a headcount. They walk out with the workflows they built, the prompts they refined, and the tooling they wired into their daily work. Almost no comp plan reflects that. The companies whose plans do will collect the talent the others can’t keep.

Protect 90 minutes a week. Set aside recurring, unscheduled, unaccountable time for everyone in a knowledge-work role. Tie it to a problem you actually want solved. “Figure out whether this can cut proposal review time in half” is a better instruction than “go explore.” You will not need to go looking for autodidacts. They will surface in the slack you just gave them.

The question to bring to leadership

Almost every published article on this topic, including the one I wrote in the last edition, frames the AI-talent problem as something to solve in the labor market. Find the people. Hire them. Train your existing team. The framing externalizes a problem that is mostly internal.

We should be asking: show me the three people in this company who added an AI tool to their workflow in the last 90 days without instruction. Show me what they’ve stopped doing as a result, and how it’s appeared in our metrics.

If you can’t answer that quickly, look at your instrumentation before you look at hiring. In a 200-person company, instrumentation is a fixable problem.

Drucker had a line for moments like this. The greatest danger in times of turbulence is not the turbulence itself. It is to act with yesterday’s logic. Your performance reviews, your permission structures, your comp bands, and your calendar architecture are yesterday’s logic. The autodidacts they’re hiding are tomorrow’s.

Frequently Asked Questions

- How do I find autodidacts already inside my company? Don’t run a survey. Pull a sample of recent work product across departments and ask the producers what tools they used to make it. People who can name three or four AI tools without prompting are your autodidacts. People who can name none either don’t use any or don’t think they should mention it. Both groups are useful to identify.

- What’s the first system to audit? Performance reviews. The fix takes one quarter and costs almost nothing, and the data it surfaces will redirect the next twelve months of decisions about training, comp, and hiring.

- How do I tell a hidden autodidact from someone who just hates new tools? Look at output and cycle time. Hidden autodidacts produce more, faster, with the same quality. Tool resisters produce the same with more effort. The work shows the difference. Surveys usually don’t.

- How do I compensate for compounding without redesigning my whole comp structure? Start with retention bonuses for people whose departure would cost you more than a headcount. That’s a smaller change than band redesign. It also forces a comp committee discussion that surfaces who the multipliers actually are, because the case has to be made specifically.

- How much slack is enough? 90 minutes a week, recurring, protected against meetings. Less than that and people don’t switch out of execution mode. More than that gets hard to defend in efficiency-focused cultures.

- What if our performance review system can’t be redesigned this year? Add the single question and skip the rest of the redesign. “What work did you no longer have to do this period because of a tool you adopted, and what did you do with that time?” That one question will surface most of what a full redesign would.
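
For operators who want to try the output-and-cycle-time comparison from the FAQ above on their own data, here is a minimal Python sketch. It is an illustration under stated assumptions, not a measurement system: the record fields (`units_shipped`, `avg_cycle_days`, `quality_score`), the sample values, and the choice of the team median as the cutoff are all hypothetical.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class WorkRecord:
    # All fields are hypothetical; substitute whatever your team already tracks.
    name: str
    units_shipped: int      # output over the review period
    avg_cycle_days: float   # average time from start to delivery
    quality_score: float    # e.g. a reviewer rating on a 1-5 scale


def likely_autodidacts(records):
    """Return names with above-median output, below-median cycle time,
    and quality at or above the median (more, faster, same quality)."""
    med_output = median(r.units_shipped for r in records)
    med_cycle = median(r.avg_cycle_days for r in records)
    med_quality = median(r.quality_score for r in records)
    return [
        r.name
        for r in records
        if r.units_shipped > med_output
        and r.avg_cycle_days < med_cycle
        and r.quality_score >= med_quality
    ]


# Made-up sample data, for illustration only.
team = [
    WorkRecord("A", 12, 3.0, 4.2),
    WorkRecord("B", 25, 1.5, 4.3),
    WorkRecord("C", 11, 3.5, 4.1),
    WorkRecord("D", 12, 3.2, 4.4),
    WorkRecord("E", 10, 4.0, 4.2),
]
flagged = likely_autodidacts(team)
print(flagged)
```

Treat a list like this as a prompt for a conversation about which tools people used, not as a verdict. The work itself still has to be looked at.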

Key Takeaways

- The AI-fluent talent you want to find is already on your team.

- Performance reviews can’t see them because the work they’ve replaced wasn’t being measured.

- Permission culture is a liability problem. Fix it by writing down where the downside lives.

- Compensation that assumes linear performance will lose multipliers to companies that price the curve correctly.

- Slack of 90 minutes a week, protected and unscheduled, is the precondition for autodidact behavior. Many companies have removed it.

- Mid-market has a structural advantage on execution that the talent conversation usually ignores.

- The question to bring to your next leadership meeting: why can’t our systems see the autodidacts we already have?

Resources & Sources

Cited in this edition:

- McKinsey, The State of AI in 2025.

- Deloitte, State of AI in the Enterprise 2026.

- LinkedIn, 2025 Workplace Learning Report.

- William Gibson, “The future is already here - it’s just not very evenly distributed,” as cited in Chatterton and Newmarch (2016).

- Peter Drucker, Managing in Turbulent Times. Routledge, 2012.

Further reading on AI adoption in mid-market and enterprise:

- OECD, AI Adoption by SMEs (2025). https://www.oecd.org/content/dam/oecd/en/publications/reports/2025/12/ai-adoption-by-small-and-medium-sized-enterprises_9c48eae6/426399c1-en.pdf

- OpenAI, State of Enterprise AI (2025). https://cdn.openai.com/pdf/7ef17d82-96bf-4dd1-9df2-228f7f377a29/the-state-of-enterprise-ai_2025-report.pdf

- Federal Reserve, Monitoring AI Adoption in the U.S. Economy. https://www.federalreserve.gov/econres/notes/feds-notes/monitoring-ai-adoption-in-the-u-s-economy-20260403.html