TL;DR

Most companies treat AI training as an HR problem. It is a business architecture problem. How you distribute AI capability across your organization determines how fast it adapts as the tools change. A company where one or two people understand AI is not an AI company. It is a company with an AI dependency. This article lays out the exact framework we use at Valere to fix that, including the six-step loop, the selection rubric, and what leaders need to provide for it to actually work.

A few days ago, I sent a mandate to our entire company, and it is one you should consider for yours. It was not a training calendar or a course enrollment.

My mandate asked every single employee to choose their own AI project.

The message was simple. Find a real friction point in your current workflow, pick a tool that might address it, run the experiment for a defined period, and come back with what you learned. No generic AI basics course. No certification track. Just real work, real tools, and a deadline to report back.

I sent it because we help companies become AI native. That claim means very little if we are not willing to do the uncomfortable work ourselves. But I also sent it because I have watched enough AI training programs fail to know that the problem is almost always the container.

Workshops teach people to talk about AI. Organizations need people who can use it, on actual work, under actual conditions, with actual stakes. Those are different skills, and one does not lead to the other the way most people assume.

Why Self-Directed Learning Works Where Workshops Don’t

In a survey of workers in personalized learning programs, 79% reported higher engagement because the benefit felt immediate and connected to their actual job. The mechanism behind that number is worth understanding: people disengage from learning when they cannot see how what they are being taught connects to the problem sitting on their desk right now.

Which is why generic AI training fails. A sales rep and a graphic designer sitting through the same foundational AI course will both walk out with vocabulary, and neither will walk out with capability. Capability requires reps on real problems. And the person closest to a real problem is always the person doing the actual job.

Think about how shooting coaches work in basketball. You do not become a reliable free-throw shooter by watching film or sitting through technique lectures. It can play a part, sure. But you become reliable by shooting thousands of free throws in practice, under increasing pressure, with a coach watching what breaks down. The practice has to mirror the game, or the skill does not transfer. AI capability works the same way.

Self-directed AI projects work for three specific reasons.

- Choice creates intrinsic motivation. When people select the tool themselves, they select something they are curious about, which means they invest time in it willingly.

- Employees know their pain points better than any central training department does. They are closer to the bottlenecks and inefficiencies in their own workflow. Giving them freedom to experiment on those problems creates ownership that a top-down mandate never produces.

- AI expertise has to be distributed. The tools are evolving fast enough that no single person can remain the definitive expert across every domain. Organizations build stronger capability through peer-driven learning, with different people developing depth in different areas over time.

Traditional training still has a place. Foundational AI literacy, tool onboarding, ethics, and safe use policies belong in the curriculum. But they are a floor, not a strategy. The floor tells people what AI is. The experiment teaches them what it can do under the conditions of their specific job.

What an Employee Self-Selected AI Project Actually Is

An employee self-selected AI project is a hands-on work experiment where each employee explores an AI tool chosen for their interests, their curiosity, and, most importantly, the work on their desk. It is not an extra task created for the experiment. It is the work they already own, with an AI tool applied to it during a defined practice window.

A concrete example of how this plays out: a marketing associate on our team ran an AI copywriting tool against a live email campaign. The first output was awkward. Their instinct was that the tool did not work. But the real lesson was that their prompt was too vague, that the output was a starting point, not a deliverable, and that iteration was the entire point. They refined the prompt, ran it again, and the second version was substantially better. That lesson only became available because they had room to fail inside a real project and time to try again. A webinar would not have gotten them there.

That cycle (use the tool, study the result, document what happened, adjust the approach, try again) is what builds skill. Everything else I categorize as AI theater. The cycle is also what most organizations structurally prevent by treating AI learning as something that happens in addition to work rather than inside it.

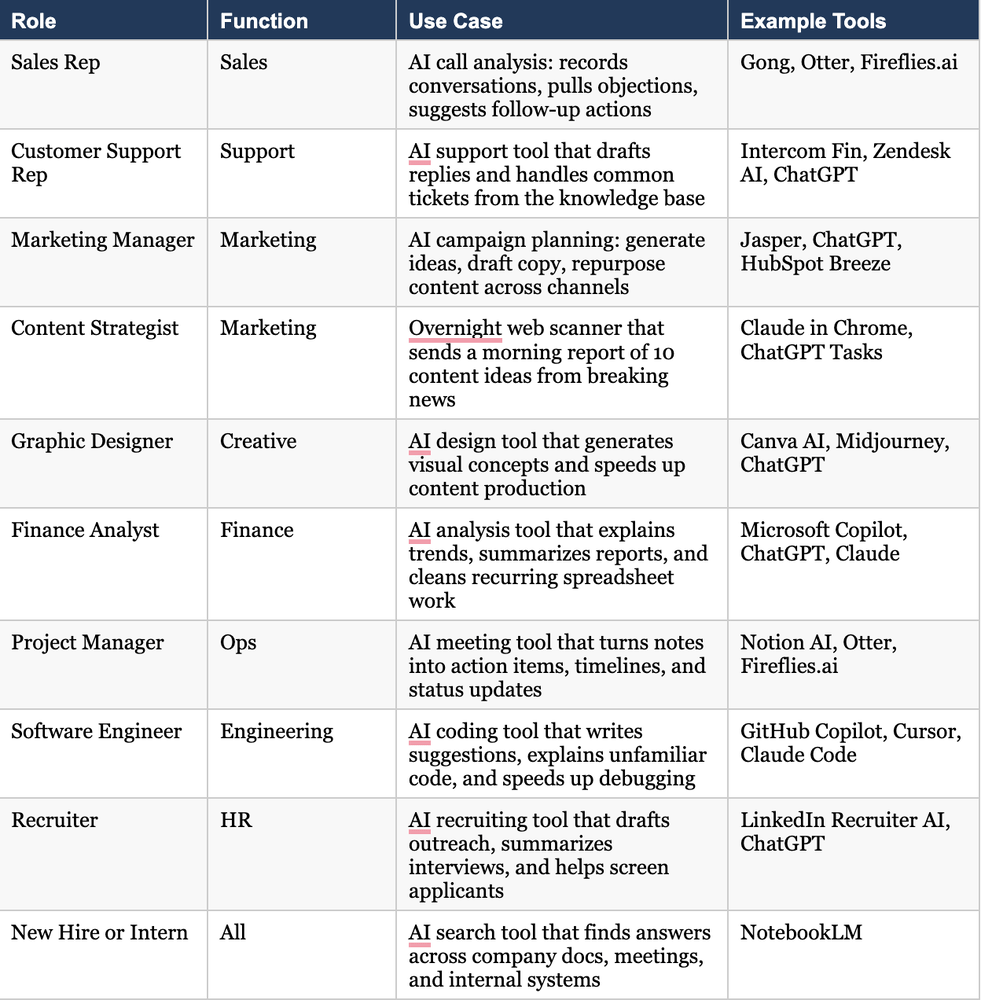

What This Looks Like Across Roles

The right AI project varies significantly by role. Here is how this plays out in practice across a range of functions:

The Six-Step AI Learning Loop

This only works if the organization follows the entire loop. Each step is there for a reason. Skipping any one of them degrades the outcome significantly.

Step 1. Let employees select a tool worth testing.

Ask each employee to identify a real friction point in their work, then choose an AI tool that helps address it, ties to a KPI they are already tracking, and matches something they are genuinely interested in exploring. The project selection rubric below helps assess whether the tool fits the task, the expected payoff, and the time required to learn it.

Step 2. Define a specific outcome before testing begins.

Before the experiment starts, managers should ask each employee to define what they want to improve, stated as a hypothesis. For example: “I believe this AI chatbot will reduce my response time on customer inquiries.” Or: “I believe this code assistant will help me refactor one legacy function faster.” The goal should be reviewed by a manager before work begins so the effort stays grounded in a real business outcome rather than general exploration.

Step 3. Run the experiment for a time-bound practice period.

Have employees use the selected tool on a task they already own during a defined practice window, typically two to three hours per week for a set number of weeks. The point is to apply the tool to live work, not to a task created just for the experiment. Set the shareback date on the calendar before the testing period begins.

Step 4. Require documentation throughout.

Have employees keep a simple journal or tracker recording what got better, what broke, what surprised them, and what the experiment taught them. They should also save useful assets: prompts, context files, reference materials, and workflow steps that can be repeated, improved, or reused by others. If the learning stays in someone’s head, it does not compound.
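For teams that prefer a structured tracker over a free-form journal, here is a minimal sketch of what one could look like. It is an illustration, not the format we prescribe: the `JournalEntry` fields simply mirror the four questions above, and the field names, file paths, and dates are all hypothetical.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import date
from typing import List


@dataclass
class JournalEntry:
    """One journal entry; the fields mirror the four documentation questions."""
    day: date
    improved: str    # what got better
    broke: str       # what broke or underdelivered
    surprised: str   # what was unexpected
    learned: str     # what the experiment taught
    assets: List[str] = field(default_factory=list)  # prompts, context files, etc.


def to_json_lines(entries: List[JournalEntry]) -> str:
    """Serialize entries as one JSON object per line so they are easy to share."""
    lines = []
    for entry in entries:
        record = asdict(entry)
        record["day"] = entry.day.isoformat()  # dates are not JSON-serializable
        lines.append(json.dumps(record))
    return "\n".join(lines)


def reusable_assets(entries: List[JournalEntry]) -> List[str]:
    """Collect saved assets across entries, without duplicates, in first-seen order."""
    seen: List[str] = []
    for entry in entries:
        for asset in entry.assets:
            if asset not in seen:
                seen.append(asset)
    return seen


# Hypothetical entries for an email copywriting experiment.
entries = [
    JournalEntry(
        day=date(2025, 1, 6),
        improved="First draft arrived faster",
        broke="Output was awkward; prompt was too vague",
        surprised="Tone drifted without examples",
        learned="Treat output as a starting point",
        assets=["prompts/email_v1.txt"],
    ),
    JournalEntry(
        day=date(2025, 1, 13),
        improved="Second version needed fewer edits",
        broke="Subject lines still generic",
        surprised="Adding audience context helped most",
        learned="Iteration is the point",
        assets=["prompts/email_v1.txt", "prompts/email_v2.txt"],
    ),
]

print(to_json_lines(entries))
print(reusable_assets(entries))
```

A plain spreadsheet does the same job. What matters is that the entries and the saved assets live somewhere other people can find them.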

Step 5. Run the shareback session. Do not skip this.

This is the step most organizations skip, and it is the most important one. When the practice period ends, bring everyone back together in a shared space and have each person briefly cover: what tool or use case did I test, what task did I apply it to, what worked, what failed or underdelivered, what part of this do I want to keep testing, and what assets or prompts are worth saving for reuse. Management should be present, listening for what can be scaled and whether any tool deserves a paid license or an internal owner. The learning only becomes organizational capability when it becomes visible to the team.

Step 6. Decide what happens next before the meeting ends.

After the shareback, leaders should put follow-up steps on the calendar before anyone leaves the room. That might mean scheduling another test round for a promising workflow, approving a paid license for a tool that proved useful, or scheduling a manager follow-up with employees who need additional support. If no decision gets made in the room, the momentum dies.

The Project Selection Rubric

Use this rubric to help employees choose the right experiment. Start with friction in the work itself, then assess the tool or project against five questions:

- Relevance: Does it solve a real work problem or a challenging task I face frequently?

- Impact on KPIs: If successful, how much could this improve efficiency, quality, or outcomes on my performance metrics?

- Learning curve: Is it realistic to learn with the time and skills I currently have available?

- Interest: What part of this tool or project am I genuinely curious or excited about?

- Risk and support: What is the risk if things go wrong, and do I have the support I need?
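If an employee is weighing several candidate experiments, the five questions can be turned into a rough comparison. The sketch below is one possible way to do that, under assumptions that are ours for illustration only: each question is scored from 1 to 5, all five carry equal weight, and learning curve and risk are scored so that a higher number is better (5 means easy to learn, or low risk with support in place). The candidate names and scores are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple

CRITERIA = ("relevance", "kpi_impact", "learning_curve", "interest", "risk_and_support")


@dataclass(frozen=True)
class RubricScore:
    """Scores from 1 (weak) to 5 (strong) for each rubric question."""
    relevance: int
    kpi_impact: int
    learning_curve: int    # 5 = realistic to learn in the time available
    interest: int
    risk_and_support: int  # 5 = low risk and support is in place

    def __post_init__(self) -> None:
        # Reject scores outside the assumed 1-5 scale.
        for name in CRITERIA:
            value = getattr(self, name)
            if not 1 <= value <= 5:
                raise ValueError(f"{name} must be between 1 and 5, got {value}")

    def total(self) -> int:
        """Equal-weight sum of the five scores (an assumption, not a rule)."""
        return sum(getattr(self, name) for name in CRITERIA)


def rank(candidates: Dict[str, RubricScore]) -> List[Tuple[str, int]]:
    """Return (name, total) pairs sorted from highest to lowest total."""
    scored = [(name, score.total()) for name, score in candidates.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Hypothetical candidates for a sales rep.
candidates = {
    "call summarizer": RubricScore(5, 4, 4, 3, 4),
    "image generator": RubricScore(2, 2, 3, 5, 3),
}

for name, total in rank(candidates):
    print(f"{name}: {total}/25")
```

The totals are a conversation starter for the employee and manager, not a verdict. A high-interest, low-relevance project scoring poorly is the rubric doing its job.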

What Leaders Have to Provide for This to Work

A good rubric helps people choose the right experiment. Good choices alone do not create a learning culture. The organization has to provide the conditions that make repeated experimentation possible.

Protected time.

This will not happen if AI learning is piled on top of a full workload or treated as a separate course disconnected from real work. In some cases, that means a manager extends a deadline by a few days so the employee has room to learn without falling behind. That is a reasonable cost.

Psychological safety.

During the experimentation phase, employees should not feel graded on the quality or immediate business value of their first attempts. That is not the point. At least, not yet. The point is to learn the process of working with AI. If leaders create pressure around outcomes too early, employees hide mistakes and avoid the experimentation that builds capability. Say this clearly: during this phase, we are not keeping score on what you produce. We are looking for how you used the tool, learned from the result, captured your process, and improved your approach.

Manager involvement.

Managers should not treat this as an initiative they announce once and forget. They need to check in regularly, help employees connect experiments to real business goals, and model the same behavior themselves. When managers share their own wins and struggles with AI tools, the initiative feels supported rather than abandoned.

Safe use guidelines.

Employees need clear rules for what they can and cannot share with an AI tool. The company should require people to protect proprietary information, redact sensitive material when needed, and formally agree to both the company’s policy and the tool provider’s terms before any experiment begins.
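To make “redact sensitive material” concrete, here is a small sketch of a pre-paste redaction helper. It is a rough illustration, not a compliance tool: the email and phone patterns are deliberately simple and will miss cases, and the `confidential_terms` list is a hypothetical stand-in for whatever your own policy defines as sensitive. It does not replace the policy or a human check.

```python
import re
from typing import Iterable

# Deliberately simple patterns; real data will contain cases these miss.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text: str, confidential_terms: Iterable[str] = ()) -> str:
    """Mask emails, phone-like numbers, and listed terms before sharing text."""
    text = EMAIL.sub("[EMAIL]", text)
    text = PHONE.sub("[PHONE]", text)
    for term in confidential_terms:
        # re.escape treats the term as literal text, not as a pattern.
        text = re.sub(re.escape(term), "[REDACTED]", text, flags=re.IGNORECASE)
    return text


# Hypothetical example: a note an employee wants to paste into an AI tool.
note = "Email jane.doe@example.com or call +1 (555) 010-2345 about Project Falcon."
print(redact(note, confidential_terms=["Project Falcon"]))
```

Even with a helper like this, the employee should still read the text before pasting it anywhere. Pattern matching only catches what it was told to look for.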

The Bigger Architecture Problem

Success comes from someone with a real problem, a specific tool, and permission to figure it out.

That is the point most leaders miss when they think about AI training as an HR initiative. The goal is not a more informed workforce. The goal is a more adaptive one. A company where AI capability is concentrated in one or two people is a company whose adaptability is constrained by those people’s bandwidth and domain knowledge. A company where capability is distributed, with different people developing depth in different tools across different functions, compounds over time in a way the centralized model cannot.

Formal training still has a role. Foundational literacy, ethics, tool onboarding, and vendor-led instruction all belong in the curriculum when the situation calls for it. But none of that replaces the harder work of becoming the kind of company that uses AI inside real workflows, measures what changes, and gets more adaptive week by week.

If your AI training program disappeared tomorrow and you replaced it with protected time for every employee to run one live experiment on a real problem, would your organization build more capability than it has right now, or less?

Frequently Asked Questions

What is an employee self-selected AI project?

An employee self-selected AI project is a time-bound work experiment where an individual employee chooses an AI tool based on their own role, interests, and a real friction point in their workflow. Unlike top-down training mandates, the employee selects both the tool and the use case, applies it to work they already own, documents what they learn, and shares findings with their team. The goal is to build practical AI capability through direct experience rather than classroom instruction.

How is this different from traditional AI training programs?

Traditional AI training programs deliver standardized content to a broad audience, typically through workshops, courses, or vendor-led sessions. They are effective for building foundational literacy and introducing tools at a surface level. Self-directed AI projects go further by requiring employees to apply tools to real work under real conditions. Research consistently shows that training perceived as directly relevant to someone’s job drives significantly stronger adoption and retention. In one survey, 79% of workers in personalized learning programs reported higher engagement because the benefit felt immediate and connected to their role. The two approaches work best in combination: formal training sets the foundation, self-directed projects build the capability.

How long should an employee AI experiment run?

Most teams find that two to four weeks is the right window for an initial experiment, with two to three hours per week of dedicated practice time applied to live work. Shorter than two weeks does not give employees enough time to move past the initial awkward phase of learning a new tool and into genuine iteration. Longer than four weeks without a shareback session allows momentum to stall. The length should be set by leadership before the experiment begins, with the shareback date on the calendar from day one.

What should employees document during their AI experiment?

Employees should track four things throughout the experiment: what improved as a result of using the tool, what broke or produced unexpected results, what surprised them, and what the experience taught them about how to work with AI more effectively. They should also save reusable assets created during the experiment, including effective prompts, context files, reference materials, and documented workflow steps. These assets are what allow individual learning to scale across the team after the shareback session.

How do leaders prevent employees from sharing sensitive data with AI tools?

Before any experiment begins, companies should establish and communicate clear safe use guidelines that specify what categories of information employees may not input into AI tools, how to handle proprietary or client data, and when redaction is required. Employees should formally agree to both the company’s internal policy and the tool provider’s terms of service. Leaders should address this in the kickoff conversation rather than after an incident occurs. Building safe use into the experiment framework from the start makes compliance straightforward rather than punitive.

What AI tools are most useful for business teams getting started?

The right tool depends entirely on the role and the specific friction point being addressed. Sales teams have found consistent value in AI call analysis platforms like Gong and Fireflies.ai for reviewing conversations and surfacing follow-up actions. Marketing and content teams frequently start with ChatGPT, Claude, or Jasper for copy generation and content planning. Engineering teams commonly begin with GitHub Copilot or Cursor for code suggestions and debugging. Project managers and executive assistants typically start with Notion AI or Microsoft Copilot for meeting summarization and task management. For new hires and interns navigating internal knowledge, NotebookLM has shown strong early results for finding answers across company documents and past meetings. The role-by-role table earlier in this article provides a fuller breakdown.

Key Takeaways

- AI training programs that teach people to talk about AI are not the same as programs that build people who can use it. The container matters as much as the content.

- Self-directed AI projects work because choice creates motivation, employees know their own pain points best, and AI expertise has to be distributed across the organization to be durable.

- The six-step loop works only when all six steps are followed. The shareback session is the most commonly skipped and the most important.

- The project selection rubric (relevance, KPI impact, learning curve, interest, and risk and support) helps employees choose experiments that produce real business outcomes rather than interesting activity.

- Leaders have to provide protected time, psychological safety, consistent manager involvement, and clear safe use guidelines for this to work.

- The goal is not a more informed workforce. It is a more adaptive one. Distributed AI capability compounds over time in a way that centralized expertise cannot.

Formal training still has a place. But none of it replaces the harder work of becoming the kind of company that uses AI inside real workflows, measures what changes, and gets more adaptive week by week.

Resources & Sources

- Valere Learning — AI Readiness and Workforce Education

- Valere

- Gong — Revenue Intelligence Platform

- Notion AI

- GitHub Copilot

- Cursor

- NotebookLM by Google

- Microsoft Copilot