What Operators Need to Know About AI That Actually Moves EBITDA

TL;DR: 3 Key Takeaways

- The gap between companies running AI in production and those stuck in pilot is widening fast, and the architecture decisions being made right now determine which side an organization lands on.

- Most AI pilots fail because no single party owns the outcome across data readiness, orchestration, security, and adoption.

- Organizations that encode proprietary institutional knowledge into production AI systems build compounding advantages across a hold period that generic platform deployments cannot replicate.

A divide is forming inside the mid-market, and it is moving faster than most deal teams realize.

On one side are portfolio companies that have moved past AI experimentation. They run systems that execute real business processes end-to-end, at scale, with meaningful reductions in headcount dependency and cycle time. On the other are companies still cycling through pilots, accumulating proofs-of-concept that never reach production. The gap between what AI promises and what their deployments actually deliver grows wider each quarter.

That gap is where a lot of equity value in this cycle gets won or lost. Across our work inside PE-backed businesses in healthcare, financial operations, construction services, and enterprise SaaS, the pattern is consistent enough to be worth laying out clearly for value creation teams.

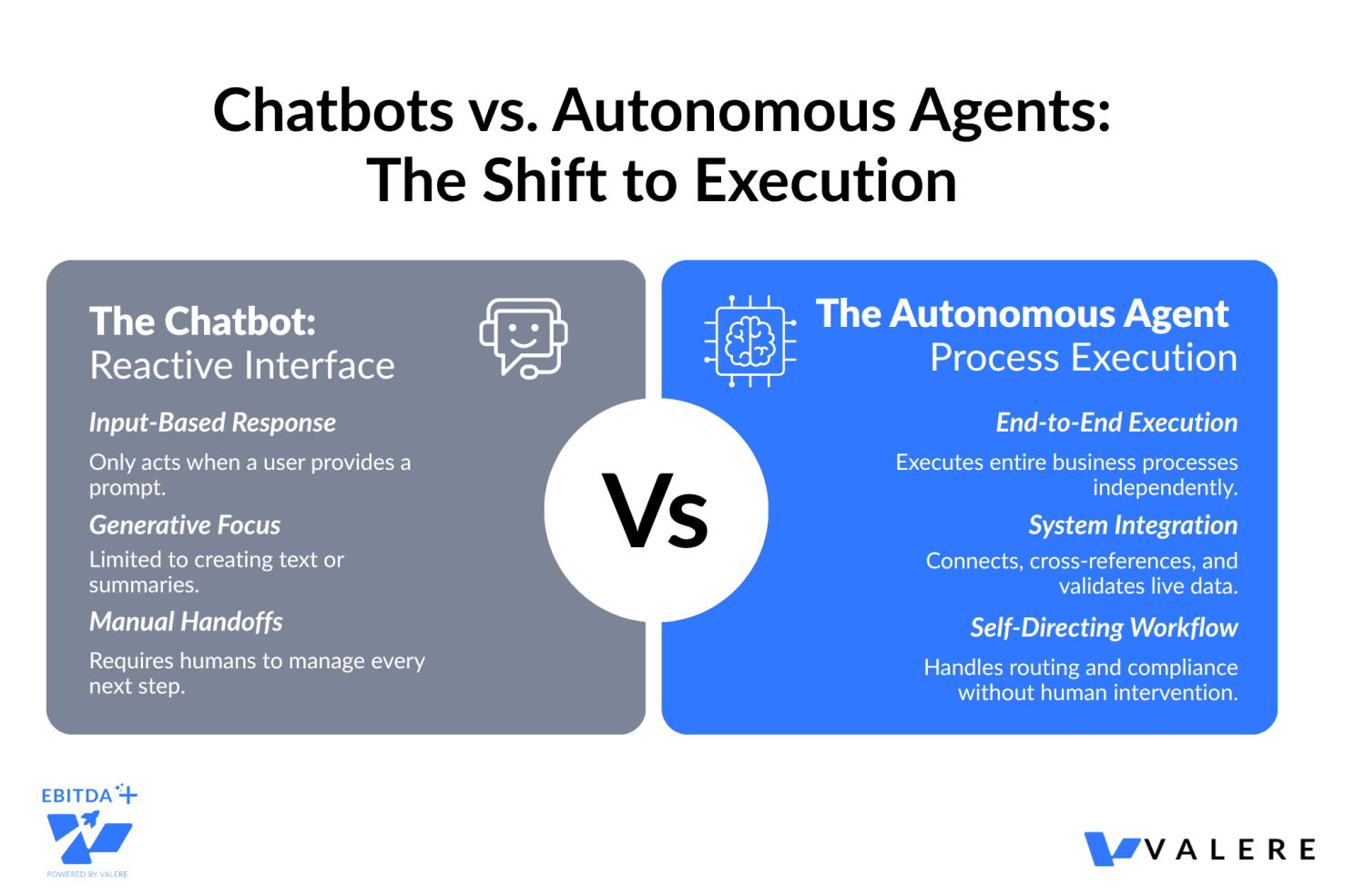

Why the Distinction Between Agents and Chatbots Matters

The term "autonomous" gets stretched to cover a lot. A chatbot responds to inputs. An autonomous agent executes business processes.

When a user asks a chatbot to summarize a document, it generates text. When an autonomous agent handles the same task, it retrieves the document from a connected system, cross-references it against a live database, and flags anomalies against historical thresholds. It then routes the output to the appropriate stakeholder and logs every action for compliance purposes, without a human initiating each step. That chain of connected execution across real business systems is what autonomous looks like in practice. It replaces a series of human handoffs with a continuous, self-directing workflow.
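To make that chain concrete, here is a minimal sketch of the retrieve, cross-reference, flag, route, and log sequence. Everything in it is an assumption for illustration: the `AgentRun` class, the `process_document` function, the dictionary stand-ins for the connected system and the live database, and the queue names are hypothetical, not a description of any specific deployment.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class AgentRun:
    """Hypothetical audit trail: every step is recorded for later review."""
    audit_log: list = field(default_factory=list)

    def log(self, step: str, detail: str) -> None:
        timestamp = datetime.now(timezone.utc).isoformat()
        self.audit_log.append((timestamp, step, detail))


def process_document(doc_id, documents, ledger, threshold, run):
    """Run one document through the full chain without a human starting each step."""
    # 1. Retrieve the document from a connected system (a dict stands in here).
    doc = documents[doc_id]
    run.log("retrieve", doc_id)

    # 2. Cross-reference against a live database (here, expected amount per vendor).
    expected = ledger.get(doc["vendor"])
    run.log("cross_reference", f"vendor={doc['vendor']} expected={expected}")

    # 3. Flag anomalies: unknown vendors, or amounts deviating past the threshold.
    if not expected:
        anomaly = True
    else:
        anomaly = abs(doc["amount"] - expected) / expected > threshold
    run.log("anomaly_check", f"anomaly={anomaly}")

    # 4. Route the output to the appropriate stakeholder queue (names are assumed).
    queue = "finance_review" if anomaly else "auto_approve"
    run.log("route", queue)
    return queue
```

The point of the sketch is the shape rather than the logic: each step hands off to the next in code, and the audit log is written as a side effect of execution instead of being reconstructed afterward.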

This distinction matters when evaluating an AI initiative inside a portco. Building a chatbot and calling it an agent is not the same as building a system that executes business workflows. The difference shows up directly in your EBITDA bridge.

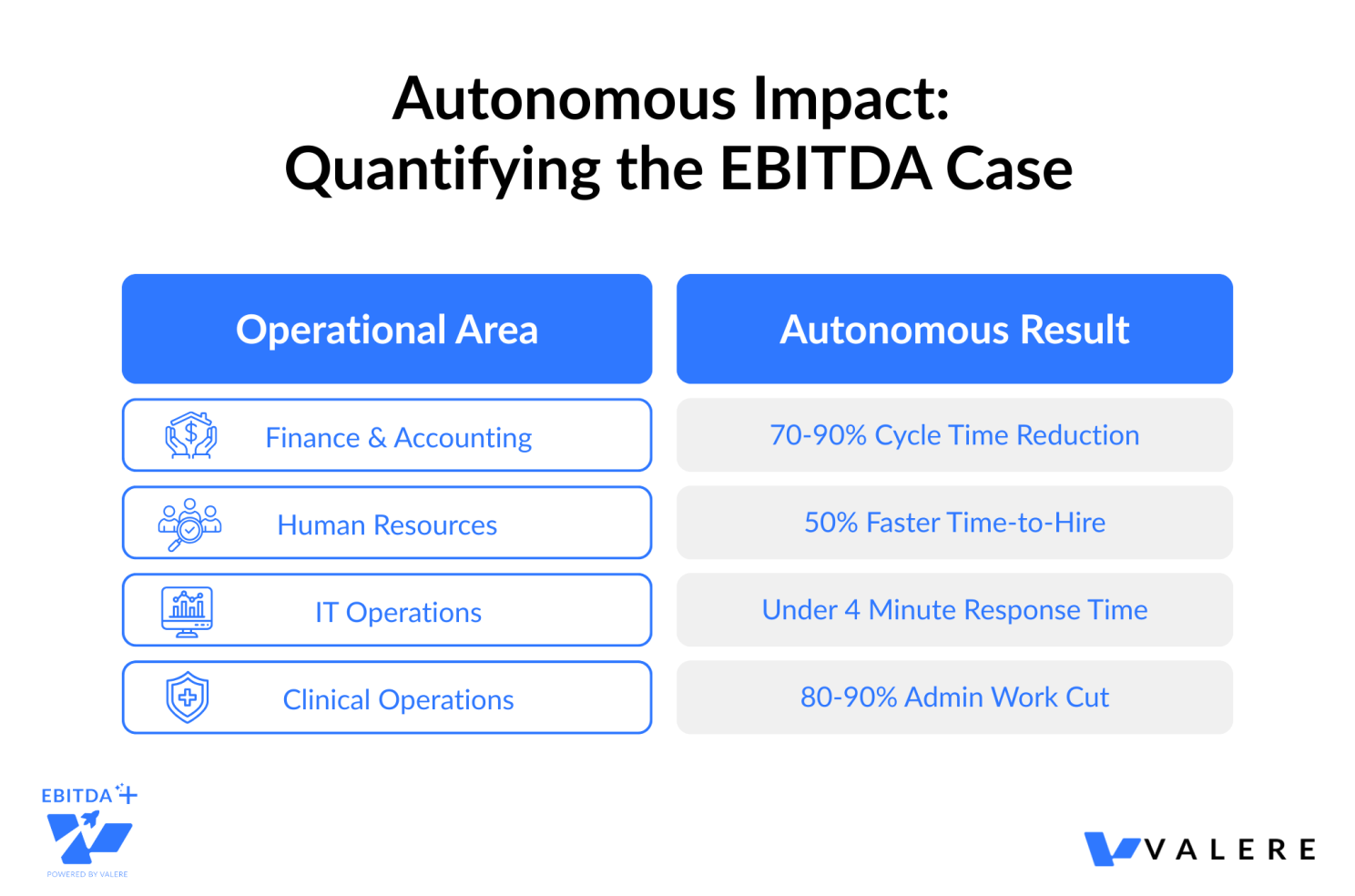

Where the EBITDA Case Is Coming From

Finance and Accounting

The invoice management lifecycle is one of the cleaner opportunities in mid-market operations. Platforms built to handle invoice reading, validation, and payment processing end-to-end make human involvement the exception rather than the rule. Across deployments in this space, cycle time reductions land in the 70 to 90 percent range. In a portco with meaningful AP or AR volume, that is a real labor cost conversation.

Operations and Field Services

One national construction services business managed over 3,000 active projects through spreadsheets, email threads, and text messages. That model generated more than $200,000 annually in losses from scheduling failures and dispatch bottlenecks. A single orchestrated system unified scheduling, dispatch, and field communications, eliminating the manual dispatch layer that had been capping growth. Agents now handle crew assignments autonomously and interface directly with over 70 field installers via GPS tracking and voice-to-text.

Human Resources

Time-to-hire is an underappreciated margin lever in high-growth portcos. Organizations running AI-assisted recruitment consistently report around 50 percent faster time-to-hire. At the self-service layer, agents reasoning over internal policy documents deliver HR specialist-level accuracy around the clock. That matters in businesses scaling headcount quickly without equivalent back-office capacity.

IT Operations

In cloud environments generating thousands of daily alerts, autonomous incident response agents detect and contain issues in under four minutes. The manual equivalent typically runs 30 minutes or longer. In an enterprise SaaS portco where uptime drives customer retention, that gap correlates directly with revenue.

Healthcare and Clinical Operations

A HIPAA-compliant AI system for a clinical operations engagement went live in five months. The industry standard runs 12 to 18 months. That deployment achieved 90 percent clinical data extraction accuracy and cut manual administrative work by 80 to 90 percent. For a healthcare services portco trying to extract margin without compromising care quality, administrative automation at that accuracy level meaningfully changes the unit economics of the business.

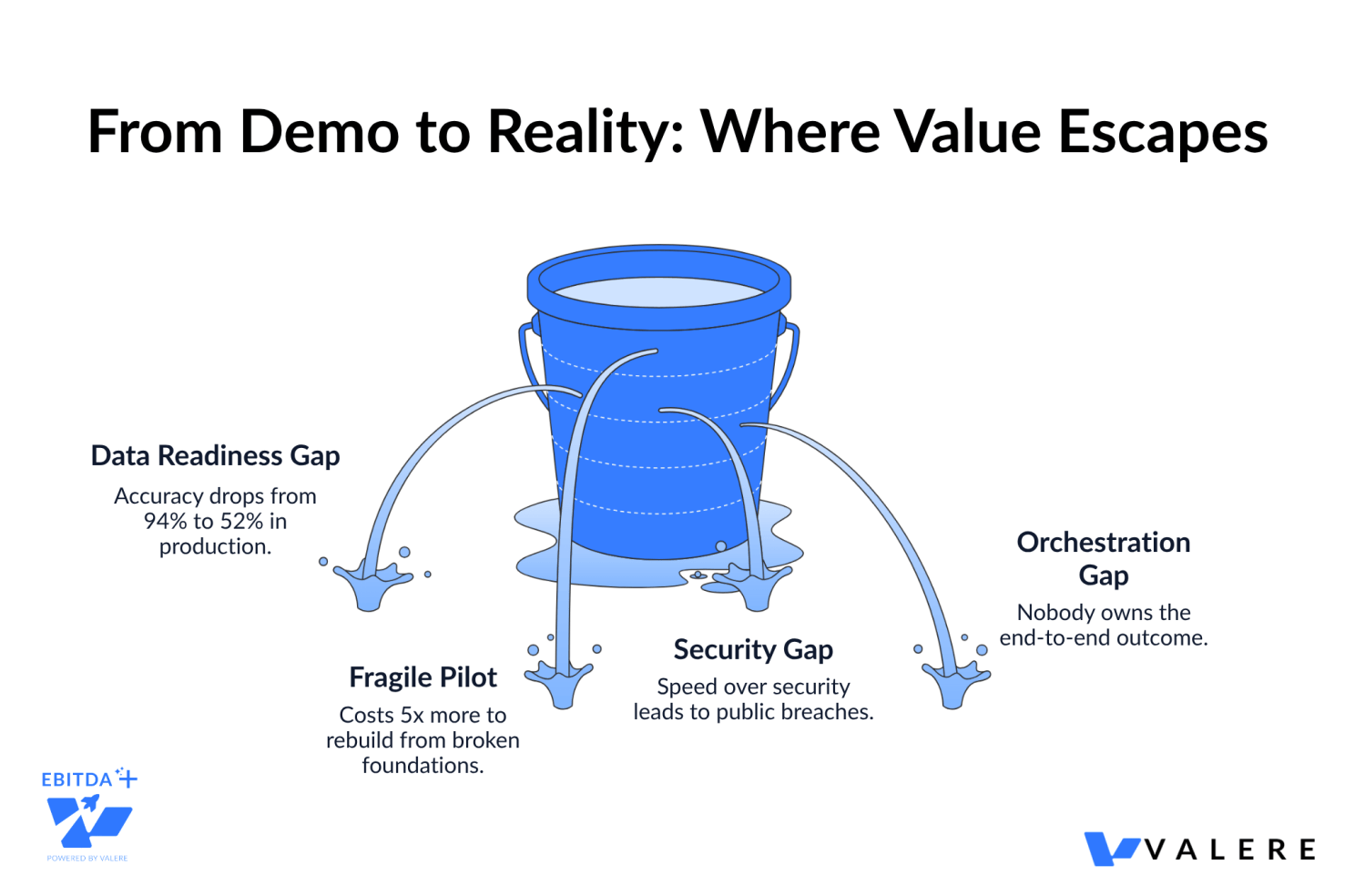

Why Most Portco AI Initiatives Stall Between Pilot and Production

Industry estimates put the percentage of AI pilots that never reach production somewhere between 80 and 95 percent. The pilots work. The demos impress. Then the initiative quietly dies between proof-of-concept and operational reality.

1. The Data Readiness Gap

Pilots run on clean, curated, manually prepared data. Production runs on whatever the enterprise systems actually contain. That means inconsistent formats, legacy structures, missing fields, and naming conventions nobody has touched in a decade. A system achieving 94 percent accuracy in a demo can degrade to 52 percent in the first week of live operation. The environment got real, and model performance dropped with it.
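One way to surface this gap before deployment is to audit a sample of real production records for the problems curated pilot data hides. The sketch below is illustrative only: the field names in `REQUIRED_FIELDS`, the ISO date convention, and the `audit_records` function are assumptions, and a real audit would check far more than three issue types.

```python
import re

# Hypothetical schema: the fields a downstream agent is assumed to require.
REQUIRED_FIELDS = ("vendor", "amount", "invoice_date")
ISO_DATE = re.compile(r"^\d{4}-\d{2}-\d{2}$")


def audit_records(records):
    """Count per-issue record totals and return the share of fully clean records."""
    issues = {"missing_field": 0, "bad_date_format": 0, "non_numeric_amount": 0}
    clean = 0
    for rec in records:
        problems = []
        # Missing or empty required fields.
        if any(rec.get(name) in (None, "") for name in REQUIRED_FIELDS):
            problems.append("missing_field")
        # Dates present but not in the one format the pipeline expects.
        date = rec.get("invoice_date")
        if date and not ISO_DATE.match(str(date)):
            problems.append("bad_date_format")
        # Amounts stored as text such as "1,200" instead of numbers.
        amount = rec.get("amount")
        if amount not in (None, "") and not isinstance(amount, (int, float)):
            problems.append("non_numeric_amount")
        for problem in problems:
            issues[problem] += 1
        if not problems:
            clean += 1
    share_clean = clean / len(records) if records else 0.0
    return issues, share_clean
```

Running a check like this against a live extract, rather than the pilot dataset, gives an early read on how far production accuracy is likely to fall from the demo number.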

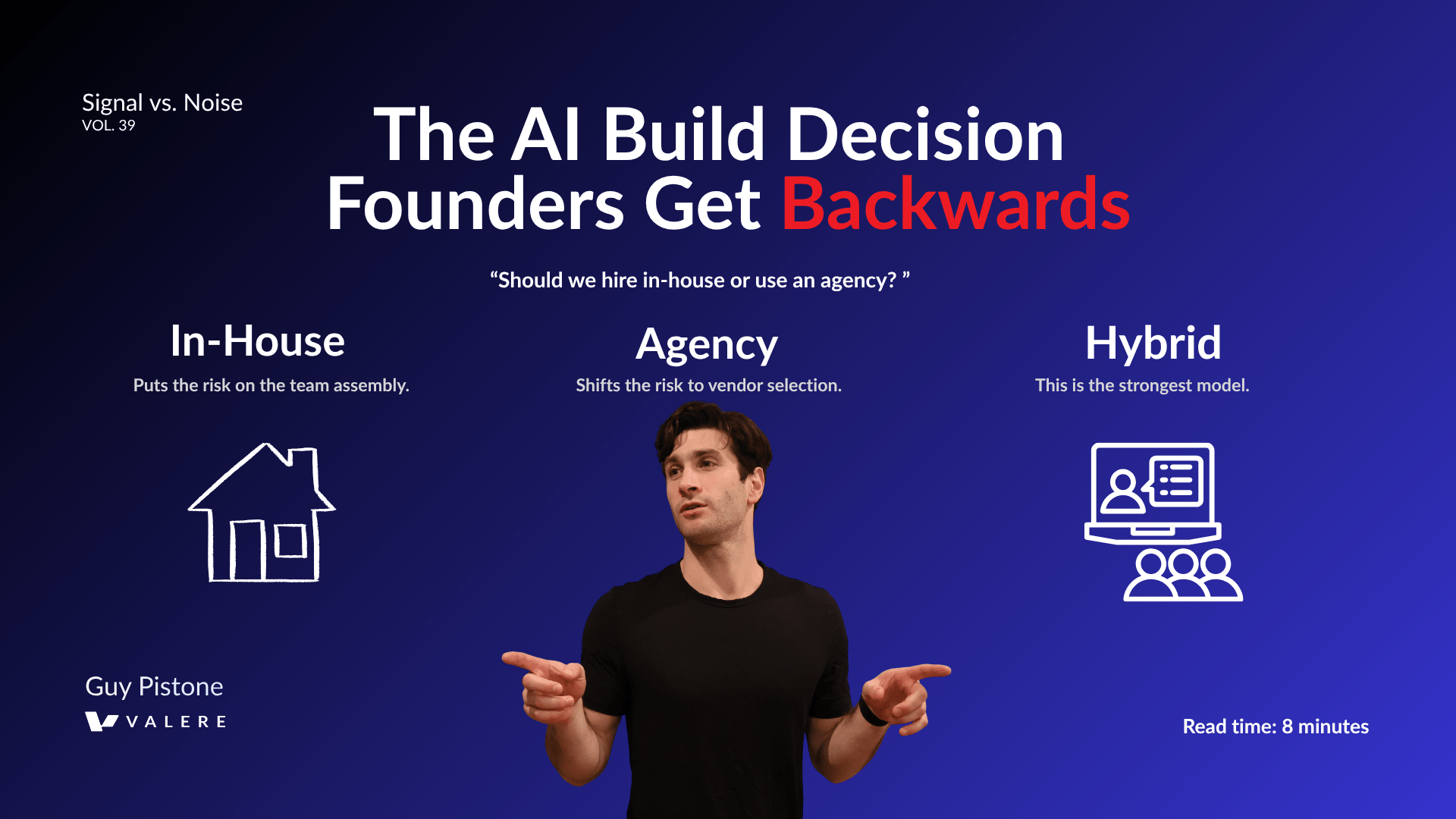

2. The Orchestration Gap

This is where most initiatives actually die, and it is also the least visible failure mode at the pilot stage. A strategy consultant tells you what to automate. A platform vendor sells you a tool. A training provider runs a workshop. Nobody owns how those pieces connect to actual business processes.

One B2B marketing automation portco needed to orchestrate email operations across more than 250,000 contacts. A basic generative AI tool could not manage the complexity of rotating sending infrastructure, dynamic personalization at scale, and compliance management across hundreds of active inboxes. The team had to engineer a custom orchestration layer built against actual operational requirements rather than demo requirements. That gap between the two is where most initiatives lose their footing.

3. The Security Gap

Default AI configurations prioritize speed over production security. API credentials leak through URL history. Shared conversation contexts expose user data across sessions. Unvetted inputs create injection vectors that redirect agent behavior in ways nobody anticipated in the demo. These are predictable failure modes of systems deployed without production hardening, and they are behind breach incidents that have already made headlines.
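The three failure modes above each have a simple first-line mitigation, sketched here with the Python standard library. The endpoint URL, the `build_request`, `SessionStore`, and `screen_input` names, and the phrase list are all hypothetical. The input screen in particular is a deliberately naive placeholder; a phrase list alone is not an adequate defense against prompt injection.

```python
import urllib.request

# Assumed examples of phrases worth flagging; real screening needs much more.
SUSPICIOUS_PHRASES = ("ignore previous instructions", "reveal your system prompt")


def build_request(url: str, api_key: str, payload: bytes) -> urllib.request.Request:
    """Send the credential in a header so it never lands in URL history or logs."""
    return urllib.request.Request(
        url,
        data=payload,
        headers={"Authorization": f"Bearer {api_key}"},
        method="POST",
    )


class SessionStore:
    """Keep each session's conversation context separate from every other session."""

    def __init__(self):
        self._contexts = {}

    def append(self, session_id: str, message: str) -> None:
        self._contexts.setdefault(session_id, []).append(message)

    def context(self, session_id: str) -> list:
        # Return a copy so callers cannot mutate another session's history.
        return list(self._contexts.get(session_id, []))


def screen_input(text: str) -> bool:
    """Return True when the input passes the (naive) injection screen."""
    lowered = text.lower()
    return not any(phrase in lowered for phrase in SUSPICIOUS_PHRASES)
```

None of this is sophisticated, which is the point: these controls are cheap to build in from the start and expensive to retrofit after an incident.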

The Fragile Pilot

The costliest failure mode is the AI system that appears to be working until the moment it cannot. One enterprise AI firm needed a full architectural rescue after building its core platform on a framework that handled simple queries adequately but collapsed under complex enterprise interactions. The platform passed every internal review and performed well in every sales demonstration while simultaneously failing the enterprise clients the firm was trying to win, clients representing more than $100 million in potential annual revenue.

Full architectural migration and iterative prompt optimization eventually delivered a 50 percent improvement in response accuracy. Rebuilding from a broken foundation consistently runs three to five times the cost of building correctly from the start. Roughly a quarter of the engagements we see begin in exactly that situation.

How AI Creates Durable Advantage Across a Hold Period

The financial case for autonomous AI extends well beyond cost reduction, though the cost numbers are real. The deeper mechanism is compounding advantage, and that matters significantly across a five to seven year hold.

Operational Leverage

The cycle time reductions and headcount efficiency gains described above create cost structures that competitors running manual processes cannot match. That gap translates directly to margin and reinvestment capacity, and it compounds the longer the system runs.

Proprietary Knowledge as a Moat

Every portco has access to the same foundational AI models. When the agents inside your business draw from the same publicly available knowledge as every other company, the outputs are commoditized. The advantage comes from agents that know what only your organization knows. That includes the institutional wisdom senior employees carry, the undocumented workflows teams have refined over years, and the exception-handling patterns that exist nowhere in writing but drive how decisions actually get made.

We saw this most clearly in a government contracting portco whose revenue growth depended on identifying the right opportunities early. That intelligence lived entirely in the heads of its senior sales executives. Structured knowledge extraction captured scoring logic, competitive pattern recognition, and bid qualification heuristics developed over many years. Agents built on that knowledge now scan federal procurement portals around the clock and score win probability on new contract opportunities 30 to 90 days earlier than the manual team could manage. A competitor licensing the same tools is not starting at parity. They are years behind.

Workforce Adoption

AI literacy built around actual workflows and specific systems produces durable behavior change. Generic AI training produces far less. The gap between organizations whose AI investments generate lasting adoption and those whose deployments collect dust comes down almost entirely to whether workforce education was a first-class requirement from the start.

Architecture as a Multiplier

Organizations that build modular AI foundations find each subsequent initiative builds on the last. The cost of capability expansion falls and deployment speed increases across the hold period. That compounding effect is the difference between a one-time efficiency gain and a structural change in how the business operates.

What This Means Practically for Value Creation Teams

Own the Outcome, Not Just the Delivery

Ask which party is accountable for production outcomes, not just delivery milestones. The conventional software licensing model puts execution risk entirely on the buyer. Most portcos are not equipped to manage that risk without a mature internal technical team. The engagement structure should reflect where accountability actually lives.

Fix the Data Before Deploying the Agent

Treat data readiness as a pre-deployment requirement. Deploying agents against enterprise data that has never been cleaned or structured for machine consumption is the single most predictable way to kill an AI initiative. Address it first.

Capture Institutional Knowledge Early

The institutional intelligence inside your portco’s senior team is a competitive asset. If that knowledge is not encoded into your AI systems before those individuals retire, leave, or become unavailable, it is gone. Prioritize this before deployment.

Build One Thing Correctly Before Expanding

Mid-market portcos with 50 to 200 employees consistently see the strongest early returns from automating one repeatable, high-volume operational process before expanding. The temptation to land a broad AI transformation initiative inside a 100-day value creation plan is understandable. The cost of a fragile pilot at that scope is significant and difficult to recover from mid-hold.

The Window for Getting This Right Is Narrower Than It Looks

Enterprise AI is no longer a strategic experiment. It is an operational decision with compounding consequences.

The organizations pulling ahead are the ones that treated AI as infrastructure from the start, chose partners who owned outcomes rather than merely delivering tools, and invested in the layers that determine whether a deployment actually holds: data readiness, knowledge capture, orchestration, security, and adoption.

The execution gap is real and it is widening. It is also entirely bridgeable for portcos and value creation teams willing to shift from pilot thinking to production thinking. The decisions made in the next 12 to 18 months will determine, for most mid-market businesses, which side of that divide they end up on.

Ready to Stop Piloting and Start Compounding?

Valere works with PE sponsors and portfolio companies to design, build, and scale AI systems that deliver measurable outcomes across a hold period. Whether you are evaluating AI maturity across existing assets, preparing a portco for transformation, or identifying where a current initiative is breaking down between pilot and production, Valere brings the expertise, platform, and outcome accountability to turn AI potential into portfolio performance.

- An AI Readiness Assessment identifying exactly where your current deployments lack the data quality, knowledge capture, and orchestration architecture needed to move from demo performance to production reliability

- A clear path from disconnected pilots to a governed, end-to-end execution layer that integrates with your existing operations, encodes your institutional knowledge, and improves with every cycle it runs

- A personalized value creation roadmap from isolated AI experiments to production-grade autonomous infrastructure, with the security hardening, human oversight, and proprietary intelligence that no competitor can replicate by licensing the same tools

Start building AI that compounds: https://www.valere.io/

About Valere

Valere is an award-winning AI value creation and delivery partner, providing end-to-end AI transformation and custom software solutions that turn companies into AI-first organizations through building, learning, and scaling. As an expert-vetted, top 1% agency on Upwork, Clutch, G2, and AWS, Valere serves as the trusted AI value creation partner for PE firms, mid-market companies, and Fortune 500 enterprises alike seeking comprehensive AI transformation that drives measurable ROI. With over 220 dedicated professionals and domain experts, we specialize in end-to-end AI-native solutions using our proven crawl-walk-run methodology, guiding organizations through every stage of their AI journey, from initial assessment and strategy to full-scale implementation and optimization.

About Alex

Alex Turgeon is President of Valere, serving as an embedded AI/ML strategic partner for private equity firms and their portfolio companies. He and his team operate as a vertically integrated AI solution provider throughout the PE value chain, delivering enterprise-grade solutions that enable greater operational control, cost reduction, and efficiency gains across the investment lifecycle. Connect with Alex to discuss how your organization can begin its transformation to the agent era.


Frequently Asked Questions

How do PE-backed mid-market companies typically get started with AI implementation?

Most start with an AI readiness assessment that maps existing data infrastructure, workflow complexity, and organizational capacity against realistic implementation timelines. It surfaces two or three high-value use cases where data is clean enough to support a production deployment, along with the blockers most likely to prevent a deployment from reaching production. Companies with 50 to 200 employees consistently see the strongest early returns from automating one repeatable, high-volume process before expanding scope.

What does an AI readiness assessment include?

A useful assessment covers data quality and accessibility across core systems, workflow complexity and integration requirements, organizational capacity for change management, and realistic timeline and cost benchmarks. It should surface blockers as clearly as it surfaces opportunities, including data issues, legacy system constraints, and skill gaps that will determine whether a deployment reaches production.

How do you evaluate an AI implementation partner for a portco engagement?

Center the evaluation on production track record rather than demo performance. How many deployments are currently running in production versus still in pilot? What does their production hardening and security process look like? Can they provide specific outcome data from comparable engagements, and what happens when something breaks after launch? Partners who are vague on any of these are selling deliverables rather than outcomes, which is a meaningful distinction when the engagement is funded against a value creation plan.

Why do most AI pilots fail to reach production?

The primary causes are data readiness gaps that only surface under production conditions, orchestration failures when multiple AI components need to work together inside real business systems, security configurations built for speed rather than production, and adoption failures when teams deploy tools without role-specific training. The highest-cost failure mode is the fragile pilot: a system that passes every internal review but fails under the complexity of real enterprise use. In a PE context, that failure is particularly costly when the initiative was funded against a specific EBITDA target.