By: Alex Turgeon, President at Valere

TL;DR: 3 Key Takeaways

- The 2026 National AI Policy Framework establishes federal preemption over state AI regulations, materially reducing the compliance uncertainty that has slowed AI investment and deployment across multi-state operations.

- The organizations generating measurable AI-driven value are those treating implementation as operational transformation rather than a software procurement decision, embedding AI into core processes that directly affect margin, capacity, and competitive position.

- Across child safety architecture, data provenance, federal procurement, and workforce readiness, the framework makes clear that AI governance decisions made at the design stage determine legal defensibility, exit value, and investment thesis outcomes.

On March 20, 2026, the Trump administration released its National Policy Framework for Artificial Intelligence. It is a sweeping set of legislative recommendations that changes how the federal government intends to govern, accelerate, and leverage AI as a tool of economic and geopolitical power.

For private equity firms and mid-market companies, the timing is significant. AI is moving from a speculative line item in an investment thesis to a measurable driver of enterprise value. This framework does not just shape the regulatory environment. It reshapes the competitive calculus for every portfolio company and mid-market operator working through where AI fits in their business model and what it is actually worth. What follows is our practical read on where the real implications land.

The Regulatory Fog Is Lifting

One of the most persistent blockers we hear from firms is regulatory uncertainty. Sponsors have been cautious about making deep operational AI commitments when the compliance landscape could shift dramatically depending on which states a company operated in.

Federal Preemption Replaces the State Patchwork

The 2026 Framework largely resolves that concern. The administration’s push for federal preemption establishes a single national standard. This replaces the web of conflicting state regulations that has complicated AI deployment across multi-state operations. The Department of Justice AI Litigation Task Force, stood up in January 2026 and chaired by Attorney General Pam Bondi, has an explicit mandate to challenge state laws the Department of Commerce identifies as overly burdensome. States that resist face potential withholding of federal funding, including the $42 billion BEAD broadband program.

State laws in the crosshairs include Colorado’s SB 24-205 (mandatory bias mitigation and impact assessments), California’s SB 53 (safety incident reporting), California’s AB 2013 (training data transparency), Texas’s TRAIGA (prohibited uses and governance protocols), and Illinois’s HB 3773 (employment-based AI notice requirements).

What This Means for Sponsors and Operators

Multi-state portfolio companies that deferred AI implementation waiting for regulatory clarity now have their signal. The compliance risk that made some AI investments harder to underwrite is materially reduced under a permissive federal standard. For mid-market operators, the message is similar but more immediate. The companies moving now will have a structural advantage by the time the next planning cycle comes around.

The Shift From Software to Service

There is a broader transition happening in enterprise technology that this framework will accelerate. PE and mid-market leaders need to understand it clearly.

From Tool Access to Outcome Delivery

The old model was software as a service. You bought a license, deployed a tool, and hoped adoption followed. ROI was indirect and often hard to attribute. The model replacing it is service delivered through software, where the value is an outcome rather than access to a platform. AI makes this practical in ways it simply was not before. Intelligent systems can now perform work, not just support it.

The 2026 Framework reflects this shift by prioritizing deployment over restriction. Regulatory sandboxes, accessible federal datasets, and sector-specific oversight through existing agencies like the FTC, FDA, and SEC all point toward an environment designed to let outcome-oriented AI solutions operate at scale.

Why Embedded Capability Beats Tool Procurement

The AI investments that generate real enterprise value are not the ones where a company adds a tool to its tech stack. They are the ones where AI gets embedded into core business processes in ways that directly affect margin, capacity, customer experience, or competitive position. That requires a different implementation philosophy than most software deployments, and it is a distinction we come back to on almost every engagement. Software can be replicated. Operational AI capability, embedded in processes, supported by clean data, and adopted by a workforce that knows how to use it, is considerably harder for rivals to match once a competitor has built it.

Child Safety: Compliance Is a Product Architecture Decision

For consumer-facing companies running platforms with any possibility of minor access, the child protection provisions in the 2026 Framework deserve specific attention.

What the Framework Requires

The Take It Down Act, signed in May 2025, requires platforms to remove AI-generated non-consensual intimate imagery within 48 hours of notification. It also establishes federal criminal penalties for violations. The 2026 Framework extends this further. It calls on Congress to mandate age-assurance requirements for AI platforms likely accessed by minors, require features that reduce risks of sexual exploitation and self-harm, and confirm that COPPA protections apply in full to AI systems. That includes restrictions on using minor-generated data for model training or advertising.
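To make the design-stage point concrete, here is a minimal sketch of two of the controls described above: tracking the 48-hour removal window and keeping minor-generated data out of training or advertising pipelines. The `Record` type, the `is_minor` flag, and both function names are hypothetical illustrations, not anything defined by the Framework or the Take It Down Act, and the sketch assumes a separate age-assurance step has already set the flag.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

# Removal window described in the text (48 hours from notification).
TAKEDOWN_WINDOW = timedelta(hours=48)


@dataclass(frozen=True)
class Record:
    user_id: str
    text: str
    is_minor: bool  # hypothetical flag, assumed to come from an age-assurance step


def removal_deadline(notified_at: datetime) -> datetime:
    """Latest time by which reported content should be removed."""
    return notified_at + TAKEDOWN_WINDOW


def training_eligible(records: list[Record]) -> list[Record]:
    """Drop minor-generated records before they reach training or ad pipelines."""
    return [r for r in records if not r.is_minor]


notified = datetime(2026, 3, 20, 9, 0, tzinfo=timezone.utc)
print(removal_deadline(notified).isoformat())
```

The filter sits in front of every downstream pipeline, so adding a new use of the data later does not require revisiting the rule.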

Building It Right From the Start

This is not a compliance checkbox. It is an architecture decision that has to be made at the foundation of any consumer AI product, not retrofitted after launch. We built an AI-powered mentorship platform for youth sports, featuring AI coaching and access to professional athletes for young users. COPPA compliance and teen-focused security shaped every technical decision from the start. They were not features added at the end. Getting it right early also meant the company avoided post-launch remediation costs that can meaningfully erode a growth investment’s return profile.

For PE sponsors conducting diligence on consumer technology assets, child safety architecture is increasingly a material risk factor. For operators building or acquiring consumer-facing AI products, it belongs in the requirements document before a line of code is written.

Infrastructure Is a Value Creation Lever

The energy and infrastructure provisions of the 2026 Framework have direct implications for AI-intensive businesses and the capital decisions surrounding them.

The Scale of Capital Commitment

Seven major technology companies (Amazon, Google, Meta, Microsoft, OpenAI, Oracle, and xAI) signed the Ratepayer Protection Pledge on March 4, 2026. They committed to bearing the full infrastructure costs of powering their data centers. The numbers reflect the scale of that commitment: approximately $700 billion in 2026 alone, projected to reach $820 billion in 2027. That is six times the level of 2022.

The proposed DATA Act of 2026 would allow fully off-grid AI infrastructure operators to bypass Federal Power Act oversight. It would also let them skip the grid interconnection queues that currently average five years nationally.

Infrastructure as a Strategic Variable

The intersection of physical and digital infrastructure is something mid-market companies frequently underestimate. We worked with an education technology company developing an AI platform to help educators redesign physical classrooms using computer vision and mixed-reality technology. What appeared to be a software challenge had significant physical infrastructure dependencies that required design decisions from day one. The environment your AI operates in is a strategic variable, not an IT procurement decision.

For firms evaluating AI-intensive businesses, energy costs, permitting timelines, and compute access are no longer background considerations. They affect margin, scalability, and ultimately exit value.

Intellectual Property and Data Are Balance Sheet Issues Now

The administration believes training AI on copyrighted material constitutes transformative fair use. However, it has declined to codify that view legislatively, leaving resolution to the courts. Given the volume of pending litigation, that process will take years.

What Early Court Rulings Are Telling Us

The pattern emerging from early rulings is instructive. Bartz v. Anthropic found training on lawfully acquired books to be fair use. Thomson Reuters v. ROSS rejected fair use where training on legal headnotes caused demonstrable market harm. The common thread is data sourcing. How training data was acquired and documented is increasingly determinative.

The Framework also calls for a federal digital persona protection standard aligned with the NO FAKES Act. This would establish IP rights in an individual’s voice and likeness with a DMCA-style takedown mechanism.

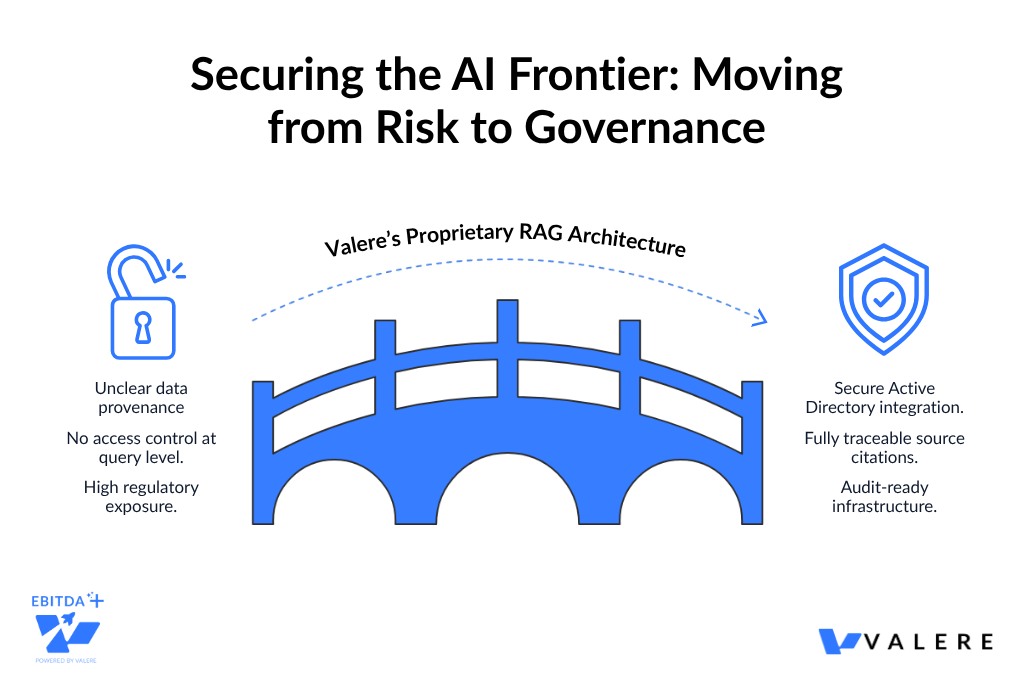

Data Provenance as a Diligence Question

For companies, proprietary data assets are increasingly a primary value driver in AI-oriented initiatives. But data provenance is now a diligence question, not just a technical one. Acquirers need to understand not only what data a target company holds, but how it was sourced, structured, and whether its training practices would survive legal scrutiny.

We built an AI system for a national specialty contractor. Their engineers needed to search and reason across more than ten terabytes of proprietary building codes, blueprints, and technical documentation. We used a Retrieval-Augmented Generation architecture protected by Active Directory-based access control. This enforced permissions at the query level, so the system only surfaced data the requesting engineer had authorization to access. Every output included a traceable citation back to a specific source document. The result was a system that was technically sound and legally defensible. In the current environment, that is the only combination that holds up.
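The pattern described above, permissions enforced at query time plus a citation on every result, can be sketched in a few lines. This is an illustrative toy, not the system we built: the `Chunk` type, the group names, the document IDs, and the keyword-overlap scoring are all assumptions, and a production system would resolve groups from a directory service and use a real retriever.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    doc_id: str                      # hypothetical source document identifier
    page: int
    text: str
    allowed_groups: frozenset[str]   # groups permitted to read this chunk


def retrieve(query: str, chunks: list[Chunk], user_groups: set[str], k: int = 3) -> list[Chunk]:
    """Return the top-k matching chunks the user is authorized to see."""
    # Permission filter runs first, so unauthorized text is never scored or returned.
    visible = [c for c in chunks if c.allowed_groups & user_groups]
    terms = set(query.lower().split())
    # Toy relevance score: number of query terms that appear in the chunk.
    scored = [(len(terms & set(c.text.lower().split())), c) for c in visible]
    scored = [(s, c) for s, c in scored if s > 0]
    scored.sort(key=lambda sc: sc[0], reverse=True)
    return [c for _, c in scored[:k]]


def cite(chunk: Chunk) -> str:
    """Traceable citation back to the source document and page."""
    return f"{chunk.doc_id}, p. {chunk.page}"


chunks = [
    Chunk("fire-code-2021", 12, "sprinkler spacing requirements for warehouses",
          frozenset({"engineering"})),
    Chunk("bid-pricing", 3, "sprinkler subcontractor pricing",
          frozenset({"finance"})),
]
for hit in retrieve("sprinkler spacing", chunks, {"engineering"}):
    print(cite(hit))
```

Filtering before retrieval, rather than redacting afterward, means the model never sees text the requester could not open directly, which is the property that matters under legal scrutiny.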

Proprietary data, properly structured and protected, is a durable competitive moat. Data that cannot be defended legally is a liability masquerading as an asset, and that distinction is beginning to show up in deal valuations.

Federal Procurement: A Growth Channel With Real Entry Requirements

For mid-market companies and PE-backed businesses with government contracting exposure or aspirations, the TRUMP AMERICA AI Act carries significant implications.

What the Legislation Would Require

The proposed legislation would codify unbiased AI principles as requirements for all AI systems used in federal procurement. Those principles include truthfulness, historical accuracy, scientific objectivity, and ideological neutrality. It would also mandate annual third-party audits of high-risk government AI systems, with financial penalties for noncompliant vendors.

Compliance requirements now extend directly to the AI systems embedded in the products and services companies sell to the government. Vendors who cannot demonstrate audit-ready, explainable AI will find the channel closed to them. And audit readiness cannot be retrofitted. It has to be designed in from the start.

How This Plays Out in Practice

We have seen this across several engagements. One produced a secure, AI-powered government contracting platform built natively on AWS GovCloud. It unified the full capture lifecycle with integrated compliance checks and document intelligence. GovCloud was not a preference in that project. It was a condition of procurement eligibility.

We also worked with a regulatory compliance technology firm that needed AI decisions to survive federal audit. The solution replaced probabilistic outputs with a deterministic logic bridge built on AWS Bedrock. It evaluated each case against a 50-page rulebook and produced an immutable audit trail in a single API call. In healthcare, we built a human-in-the-loop validation portal integrated with Salesforce and AWS Bedrock. This created a mandatory oversight gate for AI-generated communications, with full documentation of what was sent, when, and why.
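One way to picture the combination of deterministic evaluation and an audit trail is a fixed rule list whose decisions are appended to a hash-chained log. The sketch below is a generic illustration under stated assumptions, not the engagement's implementation and not an AWS Bedrock API: the two rules, the case fields, and the function names are hypothetical, and a hash chain makes tampering detectable rather than making storage literally immutable.

```python
import hashlib
import json

# Hypothetical rules standing in for a much longer rulebook.
RULES = [
    ("R1", "amount within limit", lambda case: case["amount"] <= 10_000),
    ("R2", "licence on file", lambda case: case["licensed"]),
]


def evaluate(case: dict) -> dict:
    """Deterministic decision: the same case always yields the same result."""
    failed = [rule_id for rule_id, _, check in RULES if not check(case)]
    return {"case_id": case["id"], "approved": not failed, "failed_rules": failed}


def _digest(prev_hash: str, decision: dict) -> str:
    payload = json.dumps(decision, sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode()).hexdigest()


def append_entry(log: list[dict], decision: dict) -> None:
    """Append a decision, chaining it to the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else "0" * 64
    log.append({"decision": decision, "prev_hash": prev_hash,
                "hash": _digest(prev_hash, decision)})


def verify(log: list[dict]) -> bool:
    """Recompute the chain; any edited entry breaks every later hash."""
    prev_hash = "0" * 64
    for entry in log:
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(prev_hash, entry["decision"]):
            return False
        prev_hash = entry["hash"]
    return True


log: list[dict] = []
append_entry(log, evaluate({"id": "c1", "amount": 5_000, "licensed": True}))
append_entry(log, evaluate({"id": "c2", "amount": 50_000, "licensed": True}))
print(verify(log))
```

Because each decision records which rule IDs failed, an auditor can trace any outcome to a specific clause instead of to a model's confidence score.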

For companies evaluating AI-enabled businesses with federal exposure, this is a material diligence consideration. For operators pursuing federal growth, it is a go-to-market prerequisite.

Workforce Readiness Determines Whether the Investment Thesis Plays Out

The TRUMP AMERICA AI Act would require publicly traded companies and federal agencies to disclose the workforce impact of AI adoption. That includes data on layoffs, retraining programs, and new hiring.

The Coming Shift in How AI Programs Get Evaluated

For PE-backed businesses approaching an exit, and for mid-market companies with institutional investors, this signals a meaningful shift in how outside parties evaluate AI programs. The question will no longer just be whether a company has AI. It will be what that AI is doing to the workforce, and whether the organization managed that transition responsibly.

Where Most AI Programs Actually Fail

The operational reality is worth addressing directly. Most AI implementations we have been involved with that underperformed did not fail technically. They failed because the organization was not ready to absorb the change. Leadership had not aligned on what AI-first operations actually look like in practice. Frontline teams were not trained on how to work with AI outputs. Middle management skepticism quietly suppressed adoption. The technology worked correctly. It simply was not being used.

Surfacing Intelligence the Organization Is Not Ready For

We have also seen AI surface workforce intelligence that created entirely new management challenges. Deploying AI to detect early burnout indicators and identify fragmentation in distributed teams revealed dynamics that traditional management tools had never surfaced. The data was valuable. But acting on it constructively required a level of organizational readiness that not every company had developed at the time.

Workforce readiness is not a soft consideration. It is a determining factor in whether the investment thesis plays out, and skipping it to accelerate deployment is one of the more common and more expensive mistakes we see.

The Window Is Open, But It Will Not Stay That Way

The 2026 Framework signals clearly that the federal government views AI leadership as a national economic imperative. The regulatory clarity it provides, combined with permissive deployment conditions and sector-specific oversight, creates a genuinely different investment and operating environment than existed twelve months ago.

For private equity, AI is no longer a feature to add to portfolio companies. It is a source of structural competitive advantage when built correctly, and a narrative liability when treated as a checkbox. The friction that made certain AI investments harder to underwrite is being cleared. Portfolio companies that build genuine AI capability now will carry meaningfully stronger positions to exit.

For mid-market operators, the question is simpler and more urgent: are you building genuine AI capability, or buying access to tools? The former compounds. The latter gets replaced. The companies that treat the 2026 Framework as a green light and execute against it will look back on this period as the moment they built a structural advantage. The ones that wait will find the window has narrowed considerably.

Turn Policy Clarity Into a Competitive Advantage

The 2026 Framework clears the path. What you build on it determines whether that advantage compounds or stalls. Valere works with private equity sponsors and portfolio companies to design, build, and scale AI systems that deliver measurable outcomes. Whether you are evaluating AI maturity across existing assets, preparing a company for operational transformation, or assessing a new acquisition target, Valere brings the expertise, implementation depth, and partnership model to turn the policy window the 2026 Framework opens into durable portfolio performance.

- A Framework Readiness Assessment identifying where your current AI deployments lack the data provenance, governance architecture, and audit infrastructure needed to perform under the compliance standards the 2026 Framework establishes

- A clear path from disconnected AI pilots to embedded operational capability that integrates with your existing processes, encodes institutional knowledge, and compounds in value with every deployment cycle

- A personalized value creation roadmap from isolated AI experiments to production-grade infrastructure with human-in-the-loop governance, defensible data pipelines, and proprietary capability that no competitor can replicate by licensing the same tools

Start building AI that holds up legally, operationally, and at exit: https://www.valere.io/

Frequently Asked Questions

How do mid-market software companies typically start with AI implementation?

Most mid-market software teams start by identifying where they are losing the most productive capacity: manual review processes, repetitive outbound, reactive customer success workflows. The companies that see the most durable results begin with knowledge capture work before building any automation. The underlying judgment in those workflows is usually more complex than it appears. Starting with agentic tooling before codifying the decision logic tends to produce agents that perform well in demos and poorly in production.

What does an AI readiness assessment typically include for a software company?

A thorough readiness assessment covers four things: the current cost and output structure of the workflows most likely to be automated; the state of existing data and knowledge documentation; the organizational capacity to absorb change without disrupting the core business; and an honest look at which path is actually executable given the company’s current position. Most assessments that skip the last of these produce technically valid recommendations that never get implemented.

How do you evaluate whether to pursue revenue acceleration or margin improvement first?

The answer usually comes down to growth endurance. If net revenue retention (NRR) is above 115% and the market genuinely supports 10+ percentage points of growth acceleration through AI-native products within 18 months, revenue acceleration (Path One) tends to be the higher-value choice. If growth has already decelerated and NRR is under 110%, margin improvement (Path Two) is typically the more honest choice. Most companies assume they can pursue both simultaneously. The evidence suggests that committing to one path produces significantly better outcomes than a divided effort.
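The thresholds in this answer can be written down as a simple decision rule. The sketch below reads Path One as revenue acceleration and Path Two as margin improvement, which is an assumption based on the wording of the question. The function name and parameters are hypothetical, and the middle band (NRR between 110% and 115%) is deliberately left as a judgment call because the answer does not assign it to either path.

```python
def suggest_path(nrr_pct: float, growth_uplift_pts: float, growth_decelerated: bool) -> str:
    """Map the rule of thumb above to a suggested starting path.

    nrr_pct: net revenue retention, in percent (e.g. 118.0)
    growth_uplift_pts: growth acceleration the market plausibly supports
        within 18 months, in percentage points
    growth_decelerated: whether growth has already slowed
    """
    if nrr_pct > 115 and growth_uplift_pts >= 10:
        return "revenue acceleration"
    if growth_decelerated and nrr_pct < 110:
        return "margin improvement"
    # The source gives no rule for this band, so flag it rather than guess.
    return "judgment call"


print(suggest_path(118.0, 12.0, False))
print(suggest_path(104.0, 3.0, True))
print(suggest_path(112.0, 6.0, False))
```

The function returns a single path by design, reflecting the point that committing to one tends to outperform a divided effort.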

What is the difference between AI strategy and AI execution?

AI strategy identifies which workflows and business model changes represent the highest-value targets for transformation. AI execution gets those changes into production without disrupting the business while they are being built. Most of the gap between companies that generate meaningful AI ROI and those that do not comes down to execution: the knowledge capture work, the architecture decisions, and the organizational redesign. Strategy without execution infrastructure tends to produce well-reasoned slide decks and very little else.

How do PE-backed software companies typically approach AI transformation differently than VC-backed ones?

PE-backed software companies tend to move faster on the margin side because the path to exit is more clearly defined and the tolerance for extended investment cycles is lower. The firms with the strongest outcomes apply PE-style discipline to cost structure while simultaneously investing in the agent infrastructure that allows remaining headcount to operate at significantly higher output. The combination of zero-based budgeting and aggressive token spend per engineer tends to produce better results than either approach on its own.